Agentic AI Cloud: How Autonomous Agents Will Reshape Cloud Architecture

Agentic AI cloud describes cloud environments designed to host, orchestrate, and govern autonomous software agents that plan, act, and adapt without continuous human direction. This article explains what agentic AI cloud means, why it matters, and how architects can plan and operate systems that incorporate autonomous agents while meeting reliability, security, and compliance goals.

- Focus: practical patterns for building an agentic AI cloud and key trade-offs.

- Includes a deployment checklist, a short real-world example, and four practical tips.

Agentic AI Cloud: core concepts and why it matters

Agentic AI cloud brings together multi-agent systems, model serving, event-driven orchestration, and governance controls so autonomous AI agents can run reliably at scale. Core components include agent runtimes, orchestration layers, state management, secure model access, telemetry and observability, and governance hooks such as policy evaluators and human-in-the-loop checkpoints.

Definitions and related terms

- Agent — an autonomous software instance that senses environment inputs, plans actions, and executes tasks.

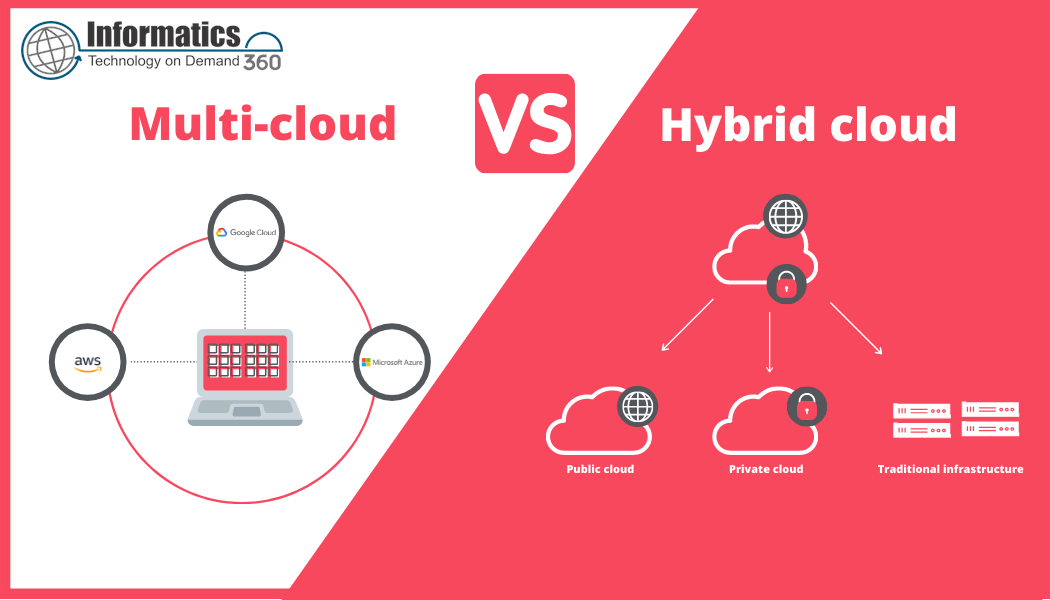

- Multi-agent system — coordinated agents interacting through messaging, shared state, or marketplace mechanisms.

- MLOps and model governance — practices for model lifecycle, monitoring, and compliance in production.

- Orchestration — scheduling, scaling, and policy enforcement for many agents (also called cloud-native AI orchestration).

How an agentic AI cloud is built

Designing an agentic AI cloud means combining cloud architecture patterns with agent-specific capabilities: event-driven compute (serverless or containers), stateful storage for agent memory, reliable messaging, secure model access, and governance APIs for policies and audits. Integration with identity and access management (IAM), secrets management, and compliance controls is essential.

Architecture pattern: agent-orchestrator-observer

One practical pattern uses three layers:

- Agent runtime — containerized or sandboxed processes that run a single agent instance, with constrained resource quotas and sidecar libraries for telemetry and policy checks.

- Orchestrator — schedules agents, handles retries, and enforces global policies (rate limits, approved model lists, data retention rules).

- Observer / governance layer — immutable logging, audit trails, and human review queues for high-risk actions.
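
The three layers above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class names, the `risk` labels, and the retry count are assumptions made for the example, and the in-memory lists stand in for a real scheduler, audit store, and review queue.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Action:
    agent_id: str
    name: str
    risk: str  # "low" or "high" (hypothetical risk labels)


@dataclass
class Observer:
    """Governance layer: audit trail plus a human review queue."""
    audit_log: List[str] = field(default_factory=list)
    review_queue: List[Action] = field(default_factory=list)

    def record(self, action: Action, outcome: str) -> None:
        self.audit_log.append(f"{action.agent_id}:{action.name}:{outcome}")


@dataclass
class Orchestrator:
    """Runs agents, retries failures, and applies one global policy."""
    observer: Observer
    max_retries: int = 2  # assumed value for the sketch

    def run(self, agent: Callable[[], Action],
            execute: Callable[[Action], None]) -> str:
        action = agent()  # the agent runtime proposes an action
        if action.risk == "high":
            # High-risk actions are never executed directly.
            self.observer.review_queue.append(action)
            self.observer.record(action, "queued_for_review")
            return "queued_for_review"
        for _ in range(self.max_retries + 1):
            try:
                execute(action)
            except RuntimeError:
                self.observer.record(action, "attempt_failed")
                continue
            self.observer.record(action, "executed")
            return "executed"
        self.observer.record(action, "failed")
        return "failed"
```

In this sketch the orchestrator is the only component allowed to call `execute`, so every action passes through the same policy gate and leaves a record with the observer.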

Risk controls, standards, and references

Adopt established guidance for AI risk and privacy. For example, align practices with the NIST AI Risk Management Framework (AI RMF) for identifying, measuring, and mitigating risks across the model lifecycle; it is a practical starting point for a risk taxonomy and lifecycle controls.

Governance hooks to implement

- Pre-action policy evaluation (deny or require human approval for sensitive actions).

- Immutable action logging for audit and forensic analysis.

- Runtime model versioning and reproducible inputs for accountability.
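
A rough sketch of how these hooks might fit together, assuming a static policy table and a hash-chained log. The `POLICY` entries, action names, and `AuditLog` class are hypothetical. A hash chain only makes tampering detectable; real immutability would still depend on write-once or otherwise protected storage.

```python
import hashlib
import json
from typing import Dict, List

# Hypothetical policy table: action name -> decision.
POLICY: Dict[str, str] = {
    "read_record": "allow",
    "send_payment": "require_approval",
    "delete_audit_log": "deny",
}


def evaluate(action: str) -> str:
    """Pre-action check; actions missing from the table default to deny."""
    return POLICY.get(action, "deny")


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry embeds the hash of the previous one."""

    GENESIS = "0" * 64
    FIELDS = ("action", "decision", "model_version", "prev")

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, action: str, decision: str, model_version: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"action": action, "decision": decision,
                "model_version": model_version, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in self.FIELDS}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True
```

Recording the model version in every entry is what ties a logged decision back to a reproducible model state during an audit.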

Deployment checklist: CLOUD-AGENT Checklist

The CLOUD-AGENT Checklist gives a concise framework for readiness before running agents in production.

- Consent & Data Controls — verify data provenance and legal permissions for training and inference data.

- Logging & Auditability — enable immutable logs and tamper-evident storage.

- Isolation & Sandboxing — run agents in constrained sandboxes with minimal privileges.

- Uptime & Scalability — autoscaling for peak loads and graceful degradation patterns.

- Detection & Recovery — monitoring, anomaly detection, and automated rollback strategies.

- Agent Policy Enforcement — pre-defined policy sets for each agent role.

- Telemetry & Observability — distributed tracing, metrics, and per-agent health signals.

- Governance Integration — human review paths and regulatory reporting capabilities.

Practical tips for architects and engineers

- Design agents as ephemeral, idempotent workers: reduce side effects and make retries safe.

- Use layered policy enforcement: local agent checks plus global orchestrator rules to avoid single points of failure in governance.

- Partition sensitive workloads into dedicated environments with stricter auditing and human oversight.

- Instrument agents with rich context (what triggered the action, model version, input hash) to enable reproducibility and debugging.
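
The first and last tips can be combined in one small sketch: a worker that derives an idempotency key from a hash of its input and attaches trigger, model version, and input hash to every result. The names `IdempotentWorker` and `input_hash` are illustrative, and the in-memory dictionary stands in for whatever durable store a real deployment would use.

```python
import hashlib
import json
from typing import Any, Callable, Dict


def input_hash(payload: Dict[str, Any]) -> str:
    """Stable SHA-256 of the payload; key order does not affect the result."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class IdempotentWorker:
    def __init__(self, handler: Callable[[Dict[str, Any]], Any],
                 model_version: str) -> None:
        self.handler = handler
        self.model_version = model_version
        # Stand-in for a durable result store keyed by input hash.
        self._results: Dict[str, Dict[str, Any]] = {}

    def handle(self, trigger: str, payload: Dict[str, Any]) -> Dict[str, Any]:
        key = input_hash(payload)
        if key in self._results:
            # Retry or duplicate delivery: return the stored record
            # instead of repeating the side effect.
            return self._results[key]
        record = {
            "trigger": trigger,
            "model_version": self.model_version,
            "input_hash": key,
            "result": self.handler(payload),
        }
        self._results[key] = record
        return record
```

Keying on the input alone is a simplification. A deployment that re-scores the same input after a model upgrade would likely need the model version in the key as well.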

Common mistakes and trade-offs

Trade-offs often center on autonomy versus control. Granting broad agent authority simplifies workflows but increases risk and complicates audits. Over-constraining agents reduces usefulness and may cause brittle behavior. Common mistakes include:

- Skipping immutable logging — losing forensics and compliance evidence.

- Mixing sensitive and non-sensitive data in the same agent instance — increases blast radius on compromise.

- Underestimating emergent interactions in multi-agent deployments — unexpected coordination can create loops or resource contention.
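
One possible guard against runaway loops between agents is a hop budget carried on every message, similar in spirit to a network TTL. This is only one option and is not prescribed by the pattern above; the `Message` fields and the default budget of 5 are assumptions for the sketch.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Message:
    sender: str
    recipient: str
    body: str
    hops_left: int = 5  # hypothetical default budget


def forward(msg: Message, new_recipient: str) -> Optional[Message]:
    """Return the forwarded message, or None once the hop budget is spent."""
    if msg.hops_left <= 0:
        return None
    return replace(
        msg,
        sender=msg.recipient,
        recipient=new_recipient,
        hops_left=msg.hops_left - 1,
    )
```

Two agents that keep handing the same task back and forth stop after a bounded number of forwards, and the dropped message is a useful signal to surface in monitoring.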

Open questions for further research

- How to design agent lifecycles and state management for scalable multi-agent systems?

- What monitoring signals best indicate agent drift or unsafe behavior?

- Which access control models work for dynamic agent identities in cloud environments?

- How to integrate human-in-the-loop review without creating bottlenecks?

- What cost and resource allocation strategies minimize waste for unpredictable agent workloads?

Short real-world example

A financial services firm deployed an agentic AI cloud to automate routine fraud investigations. Agents fetched transaction data, scored anomalies with a model, and prepared a recommended action. High-risk recommendations were routed to a human analyst via the governance layer. Key outcomes: time-to-resolution dropped by automating low-risk cases, traceability improved through immutable logs, and human reviewers retained control over escalation decisions.

Operational considerations

Plan for observability at the agent level (per-agent traces and metrics) and for cost management, since many active agents can increase compute and storage spend. Use autoscaling and burstable instances, capped by quotas, to keep that spend bounded. Implement retention policies for agent memory and logs to meet privacy requirements.
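
A retention policy can be as simple as a table of time windows applied by a periodic job. The sketch below assumes each record carries a `kind` and a timezone-aware `created_at`; the record kinds and the 30-day and 365-day windows are placeholders, not recommendations.

```python
from datetime import datetime, timedelta
from typing import Dict, List

# Hypothetical retention windows per record kind.
RETENTION: Dict[str, timedelta] = {
    "agent_memory": timedelta(days=30),
    "action_log": timedelta(days=365),
}


def prune(records: List[dict], now: datetime) -> List[dict]:
    """Keep records still inside their retention window.

    Records of a kind with no configured window are kept, so nothing
    is deleted without an explicit rule.
    """
    kept = []
    for record in records:
        window = RETENTION.get(record["kind"])
        if window is None or now - record["created_at"] <= window:
            kept.append(record)
    return kept
```

Passing `now` in as an argument rather than reading the clock inside the function keeps the job deterministic and easy to test.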

Related technologies and stakeholders

Related entities include multi-agent systems, MLOps pipelines, orchestration technologies (Kubernetes, serverless platforms), reinforcement learning agents, policy evaluators, and IAM providers. Stakeholders typically include cloud architects, security teams, compliance officers, ML engineers, and product owners.

Conclusion: practical next steps

Start with a narrow, well-scoped pilot: choose a low-risk domain, implement the CLOUD-AGENT Checklist, instrument rich observability, and align with a recognized risk framework such as NIST AI RMF. Iterate on governance and controls as agents demonstrate real behaviors in production.

What is agentic AI cloud and why does it matter?

Agentic AI cloud refers to cloud platforms and operational practices built specifically to host autonomous agents safely and at scale. It matters because agents enable new automation capabilities but introduce emergent risks that require explicit architecture and governance.

How should security be handled in an agentic AI cloud?

Security centers on isolation, least privilege, secrets management, and immutable logging. Combine runtime sandboxing with orchestration-layer policy enforcement and continuous monitoring for anomalous behavior.

Can existing cloud platforms support autonomous agents?

Yes. Most cloud platforms provide primitives—container orchestration, serverless compute, message queues, and managed databases—that can be combined to host agents. The difference for agentic AI cloud is emphasis on governance APIs, auditability, and agent-specific orchestration patterns.

How to measure the safety and reliability of agentic AI cloud deployments?

Measure safety through action error rates, false-positive and false-negative rates, policy violation counts, and mean time to detection and recovery. Reliability metrics include agent uptime, queue processing latency, and successful rollback frequency.
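
As a rough illustration, a few of these metrics can be computed from plain event records. The field names are assumptions, timestamps are taken to be seconds, and mean time to recovery is measured here from detection to recovery; some teams measure it from the moment the incident began instead.

```python
from statistics import mean
from typing import Dict, List


def safety_metrics(actions: List[dict], incidents: List[dict]) -> Dict[str, float]:
    """Summarize action outcomes and incident timings.

    actions:   dicts with boolean "error" and "policy_violation" fields
    incidents: dicts with "occurred_at", "detected_at", "recovered_at" (seconds)
    """
    total = len(actions)
    errors = sum(1 for a in actions if a["error"])
    violations = sum(1 for a in actions if a["policy_violation"])
    detect = [i["detected_at"] - i["occurred_at"] for i in incidents]
    recover = [i["recovered_at"] - i["detected_at"] for i in incidents]
    return {
        "action_error_rate": errors / total if total else 0.0,
        "policy_violations": float(violations),
        "mttd_seconds": float(mean(detect)) if detect else 0.0,
        "mttr_seconds": float(mean(recover)) if recover else 0.0,
    }
```

Tracking these per agent role, rather than only in aggregate, makes it easier to see which agents are driving violations or slow recoveries.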

What are the first steps to pilot an agentic AI cloud?

Define a narrow use case, apply the CLOUD-AGENT Checklist, instrument telemetry, and run a short pilot with human-in-the-loop review for all high-risk actions. Use risk frameworks and standards to document controls and expected outcomes.