AI Agents for Health Insurance Claims and Support: A Practical Implementation Guide


AI agents in health insurance: what they do and why it matters

AI agents in health insurance are software-driven virtual assistants and automation tools that handle tasks such as claims adjudication, policyholder inquiries, prior authorizations, and routine case triage. Organizations that implement these agents aim to reduce manual workload, speed up claim turnaround, and improve member experience while maintaining compliance with medical privacy and regulatory standards.

This guide explains practical use cases for AI agents in health insurance, a named implementation framework (the CLAIM Framework), compliance considerations (including HIPAA), a real-world scenario, a short checklist, five core cluster questions for content planning, and actionable tips to start or improve deployment.

Benefits and use cases of AI agents in health insurance

Claims automation and adjudication

Automating claims processing replaces repetitive rule-checking and data entry with workflows that use robotic process automation (RPA), natural language processing (NLP), and machine learning (ML) models to extract data from attachments, validate benefits, and route exceptions to human reviewers. Expected outcomes include faster claim turnaround, fewer manual errors, and improved throughput for high-volume claim types.

AI customer support for health insurance

AI customer support for health insurance combines conversational agents, FAQ retrieval, and task orchestration to answer coverage questions, guide members through appeals, and initiate common transactions like ID card requests. Integration with member portals and telephony can create seamless handoffs between bots and human agents.

Fraud detection, prior authorization, and underwriting

AI agents can flag potentially fraudulent claims, pre-screen prior authorization requests using clinical-similarity models, and support underwriters by summarizing risk indicators. These agents are typically part of a hybrid workflow where models score or prioritize items and humans make final decisions.

How to implement AI agents: the CLAIM Framework

The CLAIM Framework is a practical checklist for deploying AI agents in health insurance: Compliance, Logging & governance, Automation design, Integration, Monitoring. Each pillar helps teams move from experimentation to safe, repeatable operations.

Compliance

Design data access and retention policies to meet legal requirements and industry standards. For health data in the United States, align designs with the HIPAA Privacy and Security Rules; official guidance is published by the U.S. Department of Health & Human Services (HHS). Also map regional regulations, such as GDPR where applicable.

Logging & governance

Implement immutable audit logs for decisions, inputs, and model versions. Use a model registry and change-control process that includes human review checkpoints for high-risk automation.
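One way to approximate the audit-log requirement in application code is a hash chain, where each entry commits to the one before it. The sketch below is illustrative only: the `AuditLog` class, its field names, and the choice of SHA-256 are assumptions, not part of any specific product. It makes tampering detectable rather than impossible, so a production system would still need write-once storage and access controls.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(record: dict) -> str:
    """Return a SHA-256 hex digest of a record serialized with sorted keys."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class AuditLog:
    """Append-only log in which each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, claim_id: str, decision: str,
               model_version: str, inputs: dict) -> dict:
        # The first entry chains to a fixed all-zero hash (an assumption).
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {
            "claim_id": claim_id,
            "decision": decision,
            "model_version": model_version,
            "inputs": inputs,  # in practice, store references rather than raw PHI
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # hash is computed before it is attached
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Editing any stored entry after the fact changes its recomputed hash, so `verify()` returns `False` and the alteration surfaces during an audit.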

Automation design

Define what the AI agent will do autonomously versus what requires human-in-the-loop review. Use clear business rules, confidence thresholds, and explainability tools for model outputs.
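The split between autonomous and human-reviewed work can be written down as a small, testable function. The following is a minimal sketch: the threshold values, the `Prediction` fields, and the rule that denials and large claims always go to a person are hypothetical choices made for illustration.

```python
from dataclasses import dataclass

# Hypothetical values; real thresholds would come from validation data and policy.
AUTO_CONFIDENCE = 0.95
HIGH_RISK_AMOUNT = 10_000.00


@dataclass(frozen=True)
class Prediction:
    claim_id: str
    proposed_action: str   # e.g. "approve" or "deny"
    confidence: float      # model confidence in the proposed action, 0.0 to 1.0
    billed_amount: float


def decide_handling(p: Prediction) -> str:
    """Return "autonomous" or "human_review" for a model prediction."""
    # Business rules run first: denials and large claims always get a human,
    # no matter how confident the model is.
    if p.proposed_action == "deny" or p.billed_amount >= HIGH_RISK_AMOUNT:
        return "human_review"
    # Otherwise the agent acts alone only above the confidence threshold.
    if p.confidence >= AUTO_CONFIDENCE:
        return "autonomous"
    return "human_review"
```

Putting the business rules ahead of the confidence check means a very confident model still cannot bypass a policy that requires human sign-off.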

Integration

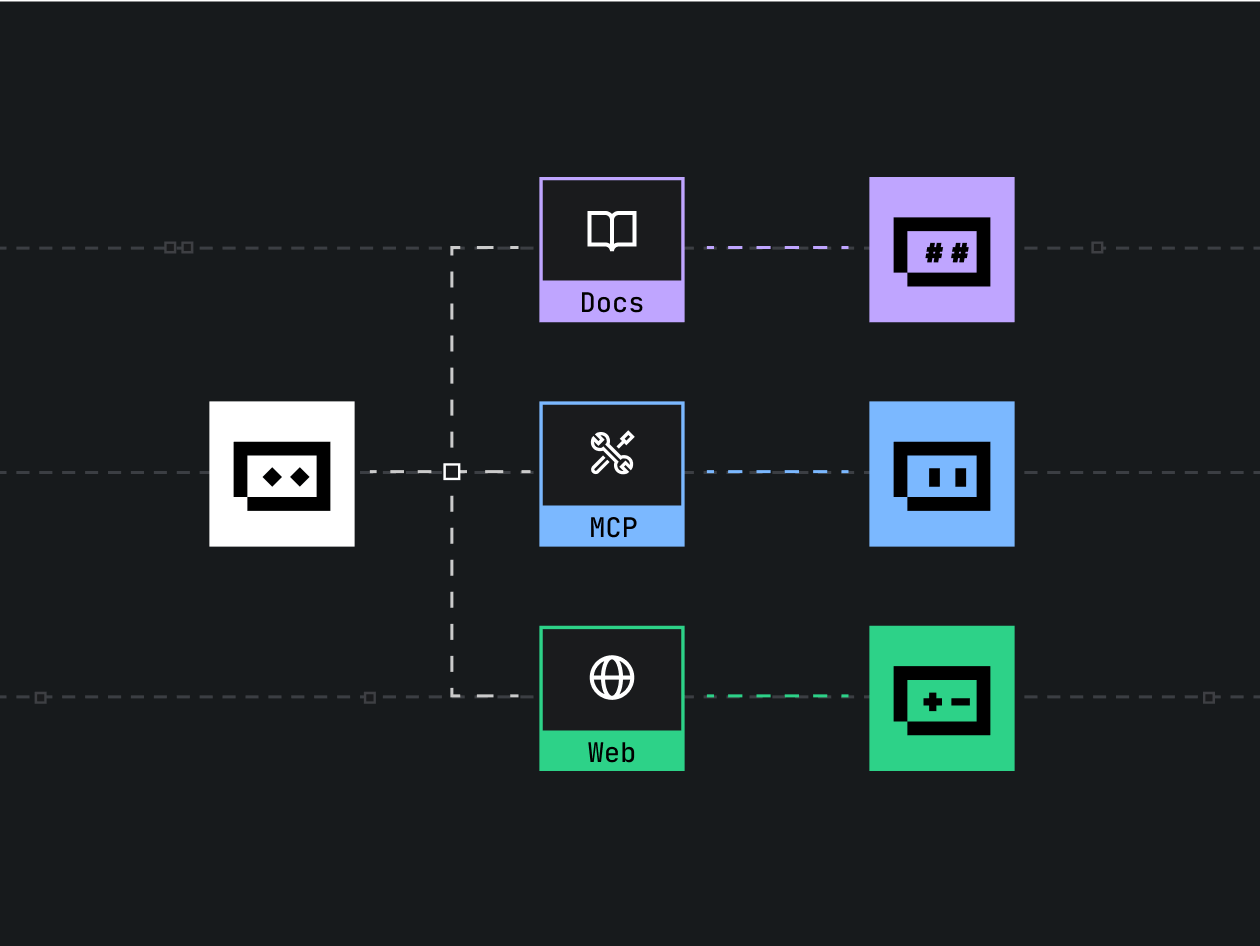

Integrate with claims management systems, EHRs, member identity services, and contact center platforms. Standardize APIs, and plan for message queuing and retry logic in case of downstream outages.
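Retry logic for downstream outages is often implemented as exponential backoff with jitter. The sketch below assumes a hypothetical `DownstreamUnavailable` exception raised by the integration layer; the attempt count and delays are placeholders, and the injectable `sleep` parameter exists only to keep the function easy to test.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class DownstreamUnavailable(Exception):
    """Hypothetical error raised when a downstream system cannot be reached."""


def call_with_retry(operation: Callable[[], T],
                    max_attempts: int = 4,
                    base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Call operation, retrying on DownstreamUnavailable with backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except DownstreamUnavailable:
            if attempt == max_attempts:
                raise  # out of attempts; the caller can park the message on a queue
            delay = base_delay * 2 ** (attempt - 1)
            # Jitter spreads retries out so many workers do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

Only the outage exception is retried; validation errors and other failures propagate immediately, since repeating them would not help.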

Monitoring

Track operational KPIs (e.g., throughput, escalation rate, accuracy) and clinical/business metrics (e.g., claim reversal rate). Establish a continuous feedback loop to retrain models and update rules.
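As a rough illustration, the KPIs named above can be computed from per-claim outcome records. The `ClaimOutcome` fields and the metric definitions here are assumptions: accuracy is taken to mean agreement between the agent's decision and the final decision, and throughput is claims per hour over a reporting window.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class ClaimOutcome:
    escalated: bool                # agent handed the claim to a human
    agent_decision: str            # what the agent proposed
    final_decision: str            # what was ultimately decided
    reversed_after_payment: bool   # claim was later reversed


def compute_kpis(outcomes: Sequence[ClaimOutcome],
                 window_hours: float) -> Dict[str, float]:
    """Compute throughput, escalation rate, accuracy, and reversal rate."""
    total = len(outcomes)
    if total == 0 or window_hours <= 0:
        return {"throughput_per_hour": 0.0, "escalation_rate": 0.0,
                "accuracy": 0.0, "reversal_rate": 0.0}
    agreed = sum(o.agent_decision == o.final_decision for o in outcomes)
    return {
        "throughput_per_hour": total / window_hours,
        "escalation_rate": sum(o.escalated for o in outcomes) / total,
        "accuracy": agreed / total,
        "reversal_rate": sum(o.reversed_after_payment for o in outcomes) / total,
    }
```

Tracking these values per model version makes it easier to tell whether a retrain or rule change improved or degraded performance.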

Technical and compliance considerations

Data handling and privacy

Maintain least-privilege access to PHI, encrypt data in transit and at rest, and anonymize or tokenize data used for model development when possible. Coordinate with privacy officers and legal counsel to document lawful bases for processing.
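Tokenizing identifiers before data reaches a model-development environment might look like the sketch below, which uses a keyed HMAC from the Python standard library. The field names in `DIRECT_IDENTIFIERS` and the record layout are hypothetical. This is pseudonymization only and does not by itself make a dataset de-identified under HIPAA; that determination belongs to privacy and legal teams.

```python
import hashlib
import hmac

# Hypothetical field names; the real list depends on the data model.
DIRECT_IDENTIFIERS = {"name", "ssn", "address", "phone"}


def tokenize_member_id(member_id: str, secret_key: bytes) -> str:
    """Map a member ID to a stable token using HMAC-SHA256."""
    return hmac.new(secret_key, member_id.encode("utf-8"),
                    hashlib.sha256).hexdigest()


def prepare_for_training(record: dict, secret_key: bytes) -> dict:
    """Drop direct identifiers and replace the member ID with a token."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["member_id"] = tokenize_member_id(record["member_id"], secret_key)
    return cleaned
```

Because the token is keyed, the same member maps to the same token across records, which preserves joins, while anyone without the key cannot recompute tokens from known member IDs. The key itself would need to be stored outside the development environment.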

Model explainability and fairness

Use interpretable models or post-hoc explanation tools to surface why a claim was flagged or routed. Evaluate models for biased outcomes by cohort and set corrective controls, such as fairness constraints and monitoring dashboards.
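A simple starting point for evaluating outcomes by cohort is to compare how often each cohort's claims are flagged. The sketch below assumes input as `(cohort, flagged)` pairs and uses the gap between the highest and lowest flag rates as one illustrative disparity measure; it is not a complete fairness assessment, and the choice of metric is an assumption.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def flag_rates_by_cohort(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the share of flagged claims for each cohort."""
    counts = defaultdict(lambda: [0, 0])  # cohort -> [flagged, total]
    for cohort, flagged in records:
        counts[cohort][0] += int(flagged)
        counts[cohort][1] += 1
    return {cohort: f / n for cohort, (f, n) in counts.items()}


def max_rate_gap(rates: Dict[str, float]) -> float:
    """Return the difference between the highest and lowest cohort flag rates."""
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())
```

A monitoring dashboard could plot `max_rate_gap` over time and alert when it exceeds a limit agreed with compliance, prompting a closer look at features and thresholds.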

Systems resilience and scalability

Design agents for predictable latency, failover behavior, and graceful degradation. For high-volume claims, batch and streaming architectures may be combined to balance latency and cost.

Real-world example: automated medical claims triage

Scenario: A regional insurer receives thousands of outpatient claims daily. An AI agent pre-processes incoming claims to extract diagnosis codes from attachments, validates member eligibility, and assigns a risk score for potential clinical review. Claims below a low-risk threshold auto-adjudicate; mid-risk claims route to a nurse-review queue with a prefilled summary; high-risk claims trigger full human review and audit logging. After six months, average time-to-payment falls by two days and exception volume drops, while audit trails support appeal workflows.
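The three-tier routing in this scenario could be sketched as follows. The threshold values, queue names, `Claim` fields, and the handling of ineligible members are all hypothetical; the scenario does not specify them, and the diagnosis codes in any usage would be sample values only.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical cut-offs; a real deployment would tune these on historical claims.
LOW_RISK_MAX = 0.2
HIGH_RISK_MIN = 0.7


@dataclass(frozen=True)
class Claim:
    claim_id: str
    member_eligible: bool
    diagnosis_codes: List[str]   # extracted from attachments upstream
    risk_score: float            # 0.0 (low) to 1.0 (high)


def triage(claim: Claim) -> dict:
    """Route a pre-processed claim to a queue based on eligibility and risk."""
    if not claim.member_eligible:
        # Assumption: eligibility failures go to a person, never auto-deny.
        return {"queue": "eligibility_exception", "audit": True, "summary": ""}
    if claim.risk_score < LOW_RISK_MAX:
        return {"queue": "auto_adjudicate", "audit": False, "summary": ""}
    summary = (f"Claim {claim.claim_id}: risk {claim.risk_score:.2f}, "
               f"diagnoses {', '.join(claim.diagnosis_codes)}")
    if claim.risk_score < HIGH_RISK_MIN:
        # Mid-risk: nurse review with a prefilled summary.
        return {"queue": "nurse_review", "audit": False, "summary": summary}
    # High-risk: full human review with audit logging switched on.
    return {"queue": "full_human_review", "audit": True, "summary": summary}
```

Keeping the cut-offs as named constants makes them easy to review under change control and to adjust as reviewer feedback accumulates.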

Common mistakes and trade-offs

Common mistakes

- Expecting perfect accuracy: early models need human oversight and phased rollout.

- Skipping governance: inadequate logging and version control make audits difficult.

- Over-automation: giving agents full authority on high-risk decisions without safeguards.

Trade-offs to consider

Automating more steps reduces manual touch labor but increases the need for robust monitoring and exception handling. Highly interpretable models may sacrifice some predictive power. Investing more in integration upfront reduces long-term maintenance cost but increases initial project scope.

Practical tips to get started

- Prioritize high-volume, low-complexity claim types for initial automation pilots to prove ROI quickly.

- Use human-in-the-loop workflows and confidence thresholds so the system learns from real reviewer decisions.

- Establish SLAs and escalation paths between bots and contact center agents to preserve member experience.

- Instrument monitoring for drift, data quality issues, and fairness metrics; schedule regular model re-evaluation.

- Document decision criteria and keep an audit trail to support regulatory reviews and appeals.
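For the drift-monitoring tip above, one widely used statistic is the population stability index (PSI), which compares the binned distribution of a feature or score at training time with the distribution seen in production. The sketch below is a minimal version under stated assumptions: bins are precomputed, inputs are raw counts per bin, and the small `eps` floor for empty bins is an illustrative choice. The alert threshold is left to each team to calibrate.

```python
import math
from typing import Sequence


def population_stability_index(expected_counts: Sequence[float],
                               actual_counts: Sequence[float],
                               eps: float = 1e-6) -> float:
    """Return PSI = sum((a - e) * ln(a / e)) over bin proportions a and e."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both inputs must use the same bins")
    e_total = sum(expected_counts)
    a_total = sum(actual_counts)
    if e_total <= 0 or a_total <= 0:
        raise ValueError("each distribution needs at least one observation")
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor proportions at eps so empty bins do not cause log(0).
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi
```

Identical distributions give a PSI of zero, and the value grows as production data moves away from the training baseline, which makes it a convenient trigger for scheduled model re-evaluation.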

Core cluster questions

- How do AI agents improve claims processing efficiency in insurance?

- What are the compliance requirements for AI-driven health insurance automation?

- Which tasks should remain human-handled when deploying AI agents for claims?

- How should ROI be measured after implementing AI agents in a claims workflow?

- What integration patterns work best for AI agents and legacy claims systems?

FAQ

What are AI agents in health insurance and how are they used?

AI agents are software components that perform specific tasks, such as extracting data from claim attachments, responding to customer inquiries, or scoring claims for fraud risk, using combinations of RPA, NLP, and ML. They are used to automate repetitive work, speed decision-making, and surface exceptions for human review.

How secure are AI agents that process protected health information (PHI)?

Security depends on design: enforce encryption, role-based access controls, strong logging, and regular security assessments. Adhering to recognized standards and working with security and privacy teams reduces risk.

Can AI agents fully replace human claims adjusters?

No. AI agents are best deployed for high-volume, rules-based tasks and for surfacing insights. Human oversight is necessary for complex medical judgment, appeals, and where legal accountability rests with staff.

How should success be measured after deploying AI customer support for health insurance?

Track metrics such as first-contact resolution, average handle time, escalation rate, member satisfaction (CSAT/NPS), and cost-per-contact. Monitor model-specific KPIs like confidence distribution and error rates.

Are there best practices for integrating AI agents with existing claims systems?

Yes. Use APIs and message queues, design clear data contracts, implement idempotent operations, and create middleware layers to decouple agent logic from legacy systems. Start with narrow pilot integrations before scaling across systems.
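Idempotent operations matter because message queues and retries can deliver the same request more than once. A minimal in-memory sketch of the idea follows; the `ClaimUpdateHandler` class and the idempotency-key scheme are hypothetical, and a real middleware layer would persist keys in a durable store rather than a dictionary.

```python
from typing import Dict, List, Tuple


class ClaimUpdateHandler:
    """Applies each claim status update at most once per idempotency key."""

    def __init__(self) -> None:
        self._results: Dict[str, dict] = {}       # key -> stored result
        self.applied: List[Tuple[str, str]] = []  # side effects actually performed

    def handle(self, idempotency_key: str, claim_id: str, new_status: str) -> dict:
        # A repeated key returns the stored result without redoing the update.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.applied.append((claim_id, new_status))  # stand-in for the legacy call
        result = {"claim_id": claim_id, "status": new_status}
        self._results[idempotency_key] = result
        return result
```

With this pattern, a redelivered message or a client retry produces the same response as the first call and does not update the legacy claims system twice.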