Enterprise AI vs Traditional Software Firms: Practical Differences and What to Expect


Introduction: why this comparison matters

Choosing between an enterprise AI development company and a traditional software firm affects timelines, cost structure, risk controls, and long-term maintenance. An enterprise AI development company is a firm that specializes in data science, MLOps, model governance, and the practices needed to run AI models in production. This article breaks down the differences and trade-offs between the two and gives practical steps for evaluating partners for production AI.

- Primary difference: model lifecycle + data engineering vs traditional application engineering.

- Key capabilities to check: data pipelines, MLOps, model monitoring, and governance.

- Use the AI Integration Readiness Checklist before contracting.


How an enterprise AI development company differs from traditional software firms

The primary distinction is orientation. An enterprise AI development company centers its work on data, statistical models, model deployment, and continuous learning. A traditional software firm centers its work on deterministic application code, feature requirements, and UI/UX delivery. This difference shapes project phases, staffing, tooling, and legal and compliance needs.

Core capability comparison

1. Project lifecycle and milestones

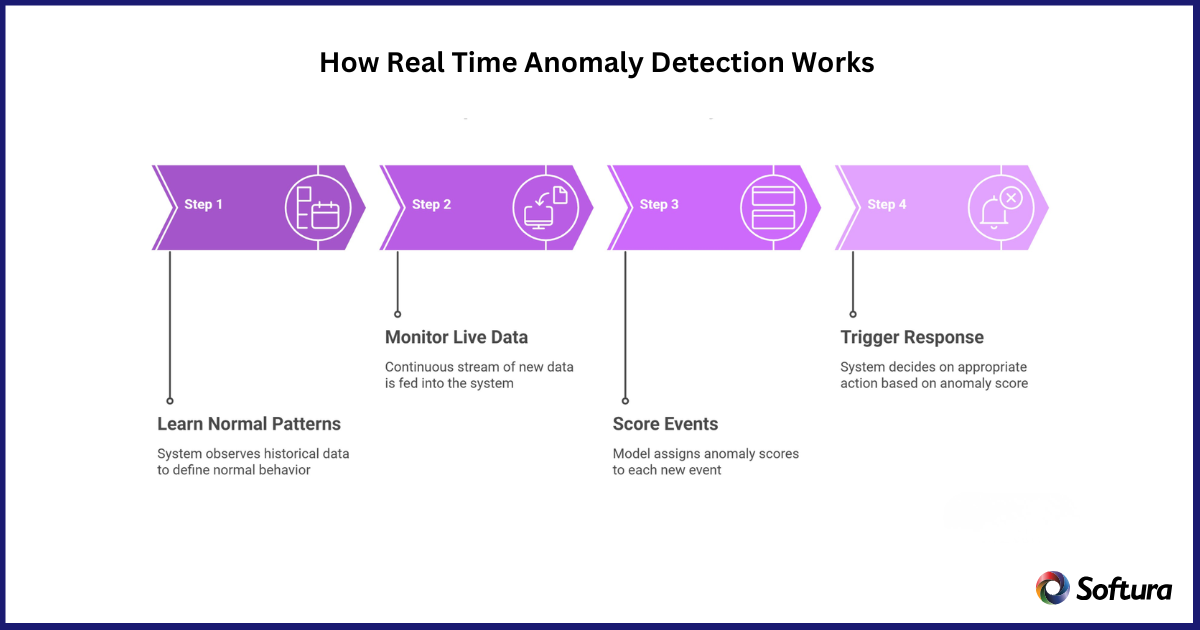

AI projects include data discovery, labeling, model selection, validation, A/B testing, and drift monitoring. Traditional projects focus on requirement specs, feature sprints, QA, and release cycles. Expect an AI partner to budget for longer discovery and iterative validation stages.

2. Team composition and skills

Enterprise AI teams typically combine data engineers, machine learning engineers, ML researchers, and MLOps specialists. Traditional firms emphasize backend, frontend, QA, and product managers. Look for explicit MLOps or SRE roles when evaluating AI capability.

3. Infrastructure, tooling, and cost profile

AI work requires GPU/TPU or managed inference capacity, feature stores, experiment tracking, and model registries. This creates ongoing cloud cost and operational complexity not present in many traditional software projects. Expect higher variable infrastructure costs tied to model training and inference.
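A back-of-the-envelope model shows why this spend is variable. The Python sketch below estimates monthly infrastructure cost as training spend plus inference spend. The `MonthlyAIWorkload` fields and every price in the example are hypothetical placeholders, not vendor quotes.

```python
from dataclasses import dataclass

@dataclass
class MonthlyAIWorkload:
    """Hypothetical monthly usage for one production model (all figures illustrative)."""
    training_runs: int           # scheduled or triggered retraining jobs per month
    gpu_hours_per_run: float     # accelerator hours consumed by one training job
    gpu_hourly_rate: float       # price per accelerator hour
    inference_requests: int      # predictions served per month
    cost_per_1k_requests: float  # inference price per 1,000 requests

def monthly_variable_cost(w: MonthlyAIWorkload) -> float:
    """Variable infrastructure cost = training spend + inference spend."""
    training = w.training_runs * w.gpu_hours_per_run * w.gpu_hourly_rate
    inference = (w.inference_requests / 1_000) * w.cost_per_1k_requests
    return training + inference

baseline = MonthlyAIWorkload(4, 10.0, 3.0, 2_000_000, 0.05)
peak = MonthlyAIWorkload(4, 10.0, 3.0, 4_000_000, 0.05)  # same model, double the traffic
print(round(monthly_variable_cost(baseline), 2))  # 220.0
print(round(monthly_variable_cost(peak), 2))      # 320.0
```

In this made-up scenario, doubling traffic raises the bill from 220 to 320 currency units without any code change. A fixed-price software estimate usually does not capture that kind of sensitivity.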

4. Risk, compliance, and governance

AI introduces model risk: biased outputs, limited explainability, untracked model versions, and unclear data provenance. An enterprise AI development company should demonstrate model governance practices and show how they map to your compliance frameworks. When asking about controls, reference standards such as the NIST AI Risk Management Framework.
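The following toy in-memory model registry in Python makes versioning, lineage, and rollback concrete. It is a minimal sketch and does not reproduce the API of any real registry product. The class names, URIs, and data hashes are hypothetical. The registry records which training-data snapshot produced each version and who approved it, and it can restore the previously promoted version.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass(frozen=True)
class ModelVersion:
    version: int
    artifact_uri: str        # where the trained artifact lives (hypothetical URI)
    training_data_hash: str  # lineage: fingerprint of the exact training snapshot
    approved_by: str         # governance: who signed off on this version

@dataclass
class ModelRegistry:
    """Toy in-memory registry; a real one would persist state and enforce access control."""
    versions: List[ModelVersion] = field(default_factory=list)
    production: Optional[int] = None
    history: List[int] = field(default_factory=list)  # previously promoted versions, newest last

    def register(self, artifact_uri: str, data_hash: str, approved_by: str) -> ModelVersion:
        mv = ModelVersion(len(self.versions) + 1, artifact_uri, data_hash, approved_by)
        self.versions.append(mv)
        return mv

    def promote(self, version: int) -> None:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        if self.production is not None:
            self.history.append(self.production)
        self.production = version

    def rollback(self) -> int:
        """Restore the previously promoted version."""
        if not self.history:
            raise RuntimeError("no earlier production version to roll back to")
        self.production = self.history.pop()
        return self.production

    def lineage(self) -> str:
        """Training-data fingerprint behind the model currently in production."""
        if self.production is None:
            raise RuntimeError("nothing in production")
        return self.versions[self.production - 1].training_data_hash

registry = ModelRegistry()
registry.register("s3://example-bucket/demand/v1", "sha256:aaa111", "model-risk-team")
registry.register("s3://example-bucket/demand/v2", "sha256:bbb222", "model-risk-team")
registry.promote(1)
registry.promote(2)
print(registry.rollback())  # 1
print(registry.lineage())   # sha256:aaa111
```

A vendor's production registry should provide the same guarantees with persistent storage, audit logs, and access controls.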

AI Integration Readiness Checklist

Use this checklist when evaluating an AI partner. Each item should be evidenced in documentation or demos.

- Data availability: clear inventory and quality metrics for required datasets.

- Labeling strategy: annotation guidelines, a plan for sourcing labeled data, and inter-annotator agreement measurements.

- MLOps pipeline: CI/CD for models, automated testing, and retraining triggers.

- Monitoring & observability: latency, accuracy, drift, and fairness metrics in production.

- Governance & compliance: model registry, lineage, rollback procedures, and access controls.
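The labeling item above asks for inter-annotator agreement. For two annotators, a common way to report it is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The plain-Python sketch below uses made-up labels. With three or more annotators, you would need a statistic such as Fleiss' kappa or Krippendorff's alpha instead.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each annotator labeled independently at their own base rates.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both annotators use one identical label")
    return (observed - expected) / (1 - expected)

annotator_1 = ["promo", "promo", "regular", "regular", "promo", "regular"]
annotator_2 = ["promo", "regular", "regular", "regular", "promo", "regular"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.667
```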

Real-world example: retail demand forecasting

A national retailer needs demand forecasting for inventory optimization. A traditional software firm might build an inventory management UI, reports, and scheduling rules based on simple heuristics. An enterprise AI development company would first audit historical sales and promotions data. It would then build a feature store that captures seasonality and holidays, train probabilistic forecasting models, deploy them with an MLOps pipeline that retrains weekly, and add drift monitoring. The AI approach can improve forecast accuracy. It also requires new operational roles, continuous data feeds, and model governance so that a degraded model does not cause stockouts or overstock.
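Drift monitoring can start with a simple comparison between the data a model was trained on and the data it sees in production. The sketch below uses the Population Stability Index (PSI), one of several common drift statistics. The bin edges, sales figures, and 0.25 threshold are illustrative assumptions. The 0.25 figure is a frequently quoted rule of thumb, not a standard, and `needs_retraining` is a hypothetical helper name.

```python
import math
from bisect import bisect_right

def bin_shares(values, edges):
    """Fraction of values in each bin; `edges` are sorted interior cut points."""
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1  # a value equal to an edge goes to the bin above it
    return [c / len(values) for c in counts]

def population_stability_index(reference, current, edges, eps=1e-6):
    """PSI = sum((cur - ref) * ln(cur / ref)) over bins."""
    ref = bin_shares(reference, edges)
    cur = bin_shares(current, edges)
    # eps keeps empty bins from producing log(0) or division by zero.
    return sum((c - r) * math.log((c + eps) / (r + eps)) for r, c in zip(ref, cur))

def needs_retraining(reference, current, edges, threshold=0.25):
    """Hypothetical trigger: flag drift when PSI exceeds a rule-of-thumb threshold."""
    return population_stability_index(reference, current, edges) > threshold

# Illustrative weekly unit sales for one product: training window vs. recent weeks.
training_sales = [10, 12, 11, 13, 9, 10, 12, 11]
recent_sales = [20, 22, 19, 21, 23, 20, 18, 22]
edges = [10, 15, 20]
print(needs_retraining(training_sales, training_sales, edges))  # False
print(needs_retraining(training_sales, recent_sales, edges))    # True
```

In a weekly retraining setup like the one described, a check of this kind could raise an alert or trigger an out-of-cycle retrain when demand shifts sharply.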

Practical tips for choosing between the two

- Match objectives: if the outcome depends on predictive accuracy, prefer partners with MLOps and model monitoring experience.

- Ask for reproducible artifacts: experiment logs, model cards, and model performance baselines.

- Budget for operations: include cloud training/inference costs and data engineering in TCO calculations.

- Require a rollback and mitigation plan: models can fail in unexpected ways, so contract terms should cover remediation.
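A performance baseline turns "the model is accurate" into a testable claim. The sketch below compares a candidate forecast against a naive baseline that repeats the last observed value, using mean absolute error (MAE). The 10% improvement margin and the sample numbers are hypothetical acceptance terms chosen for illustration, not a recommended contract value.

```python
def mean_absolute_error(actual, predicted):
    if len(actual) != len(predicted) or not actual:
        raise ValueError("need equal-length, non-empty series")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

def naive_forecast(history, horizon):
    """Baseline: repeat the last observed value for every future period."""
    return [history[-1]] * horizon

def passes_acceptance(actual, candidate, history, min_improvement=0.10):
    """Hypothetical acceptance rule: candidate MAE must be at least
    `min_improvement` lower (relative) than the naive baseline's MAE."""
    baseline_mae = mean_absolute_error(actual, naive_forecast(history, len(actual)))
    candidate_mae = mean_absolute_error(actual, candidate)
    return candidate_mae <= (1 - min_improvement) * baseline_mae

history = [100, 110, 120]  # illustrative weekly demand before the test period
actual = [130, 140]        # what actually sold in the test period
print(passes_acceptance(actual, [128, 143], history))  # True: MAE 2.5 vs baseline 15.0
print(passes_acceptance(actual, [115, 125], history))  # False: MAE 15.0, no improvement
```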

Common trade-offs and mistakes

Trade-offs:

- Speed vs accuracy: traditional firms often deliver minimum viable products (MVPs) faster; AI partners invest more time upfront to gain accuracy.

- Ownership vs dependency: pre-built AI platforms accelerate time-to-value but increase vendor lock-in compared to custom traditional code.

- Cost predictability vs variable cloud spend: traditional projects tend to have fixed-cost sprints. AI project costs are less predictable because training and inference spend scale with retraining frequency and traffic.

Common mistakes

- Treating AI like a feature rather than a system: insufficient planning for data pipelines and monitoring.

- Skipping model validation: accepting high offline metrics without robust online testing and canary deployments.

- Ignoring governance: no versioning, lineage, or access controls for models and training data.
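Online testing usually starts with a canary release. A small, fixed share of live traffic goes to the new model while the rest stays on the current one. The sketch below shows deterministic hash-based routing and a simple promote-or-rollback rule in Python. The 5% split, the one-percentage-point tolerance, and the function names are assumptions. A production rollout would also require a minimum sample size and a statistical test before acting on the comparison.

```python
import hashlib

def routes_to_canary(request_id: str, canary_percent: int) -> bool:
    """Deterministically send roughly `canary_percent`% of ids to the canary model."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in 0..99
    return bucket < canary_percent

def canary_verdict(stable_errors, stable_total, canary_errors, canary_total, tolerance=0.01):
    """Hypothetical rule: roll back if the canary's error rate exceeds the
    stable model's error rate by more than `tolerance` (absolute)."""
    stable_rate = stable_errors / stable_total
    canary_rate = canary_errors / canary_total
    return "promote" if canary_rate <= stable_rate + tolerance else "rollback"

ids = [f"user-{n}" for n in range(10_000)]
share = sum(routes_to_canary(rid, 5) for rid in ids) / len(ids)
print(f"canary share: {share:.3f}")         # close to 0.05
print(canary_verdict(120, 9_500, 9, 500))   # promote: 1.8% vs 1.26% + 1 pt
print(canary_verdict(120, 9_500, 20, 500))  # rollback: 4.0% vs 1.26% + 1 pt
```

Hashing the request or user ID, instead of sampling randomly, keeps each user on the same model for the whole test. This makes online metrics easier to attribute.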

Decision checklist: when to hire an enterprise AI development company

- Problems require prediction, classification, or automated decisioning at scale.

- Significant historical data exists and can be engineered into features.

- The organization is ready to operationalize models with ongoing monitoring and retraining.

Related questions

- How do deployment and monitoring differ between AI and traditional software projects?

- What roles and skills are essential on an AI project team compared to a standard engineering team?

- How should you estimate total cost of ownership for production AI compared with a conventional application?

- What legal and compliance considerations are unique to enterprise AI projects?

- When is a hybrid approach (traditional firm plus AI specialists) the best option?

Practical vendor-evaluation checklist

Ask prospective vendors to provide:

- Case studies with measurable outcomes and post-launch monitoring details.

- Architecture diagrams showing data flow, model registry, and rollback mechanisms.

- SLAs for model performance degradation and incident response processes.
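An SLA for performance degradation needs a measurable definition. One option is a rolling-window check like the Python sketch below. It flags a breach when accuracy over the last N predictions falls below a floor or when p95 latency exceeds a ceiling. The window size, the thresholds, and the `SLAMonitor` name are hypothetical. A real contract would define these terms explicitly.

```python
import math
from collections import deque

class SLAMonitor:
    """Rolling-window check of two hypothetical SLA terms: minimum accuracy
    and maximum p95 latency over the last `window` predictions."""

    def __init__(self, window=500, min_accuracy=0.90, max_p95_latency_ms=200.0):
        self.outcomes = deque(maxlen=window)   # True if the prediction was correct
        self.latencies = deque(maxlen=window)  # response time in milliseconds
        self.min_accuracy = min_accuracy
        self.max_p95_latency_ms = max_p95_latency_ms

    def record(self, correct: bool, latency_ms: float) -> None:
        self.outcomes.append(correct)
        self.latencies.append(latency_ms)

    def p95_latency(self) -> float:
        ordered = sorted(self.latencies)
        rank = math.ceil(0.95 * len(ordered))  # nearest-rank percentile
        return ordered[rank - 1]

    def breaches(self) -> list:
        if not self.outcomes:
            return []
        found = []
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if accuracy < self.min_accuracy:
            found.append(f"accuracy {accuracy:.2%} below {self.min_accuracy:.0%}")
        p95 = self.p95_latency()
        if p95 > self.max_p95_latency_ms:
            found.append(f"p95 latency {p95:.0f} ms above {self.max_p95_latency_ms:.0f} ms")
        return found

monitor = SLAMonitor(window=100)
for _ in range(100):
    monitor.record(correct=True, latency_ms=50.0)
print(monitor.breaches())  # []
for _ in range(20):
    monitor.record(correct=False, latency_ms=300.0)
print(monitor.breaches())  # two breaches: accuracy 80.00%, p95 latency 300 ms
```

Accuracy can only be computed once ground truth arrives. For forecasting, that means after actual sales are recorded, so in practice the accuracy window often lags the latency window.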

Final assessment: align risk, value, and capabilities

Choosing an enterprise AI development company makes sense when predictive accuracy, continuous learning, and data-driven automation are central to the product. Traditional software firms remain appropriate for deterministic, feature-driven applications with limited machine learning needs. The right choice depends on the problem type, data maturity, and willingness to invest in operations and governance.

Frequently asked questions

What does an enterprise AI development company do that traditional software firms don't?

An enterprise AI development company builds and operates the model lifecycle: data pipelines, model training, deployment, experiment tracking, and continuous monitoring. Traditional software firms focus on application logic and user interfaces and typically do not handle these tasks.

How should procurement evaluate AI-specific proposals versus traditional software bids?

Procurement should request artifact evidence (model cards, experiment logs), ask for clear TCO including cloud costs, and require governance measures such as versioning and rollback procedures. Include performance baselines and acceptance criteria tied to business KPIs.

What are the biggest operational costs in enterprise AI projects?

Major operational costs include data engineering, cloud compute for training and inference, annotation and labeling, and ongoing MLOps maintenance. Over the life of the system, these recurring costs can exceed the initial development cost.

How long does it take to go from prototype to production with an enterprise AI development company?

Timelines vary. Moving from prototype to production commonly takes 3–9 months, depending on data readiness, compliance needs, and integration complexity. Building robust monitoring and retraining pipelines can add further months.

Are there hybrid engagement models that combine traditional software firms with AI specialists?

Yes. Many organizations use a hybrid model where a traditional firm handles application UX and integration while a specialist AI partner supplies models, data pipelines, and MLops. This can balance speed and specialized capability but requires clear contracts and joint operational responsibility.