Practical Guide to Ethical AI for Enterprise Chatbots: Building Trust and Ensuring Fairness


Enterprise teams adopting conversational AI need to treat chatbot ethics as a priority in order to keep customer trust, reduce legal risk, and maintain brand safety. This guide explains practical controls, a named framework, testing steps, and governance checkpoints that teams can apply immediately.


What are ethical AI enterprise chatbots and why they matter

Ethical AI enterprise chatbots are conversational systems designed, deployed, and operated under explicit principles that protect users, promote fairness, and support accountable decision-making. Organizations build them to prevent biased outcomes, preserve privacy, and ensure transparent user interactions while delivering efficient service.

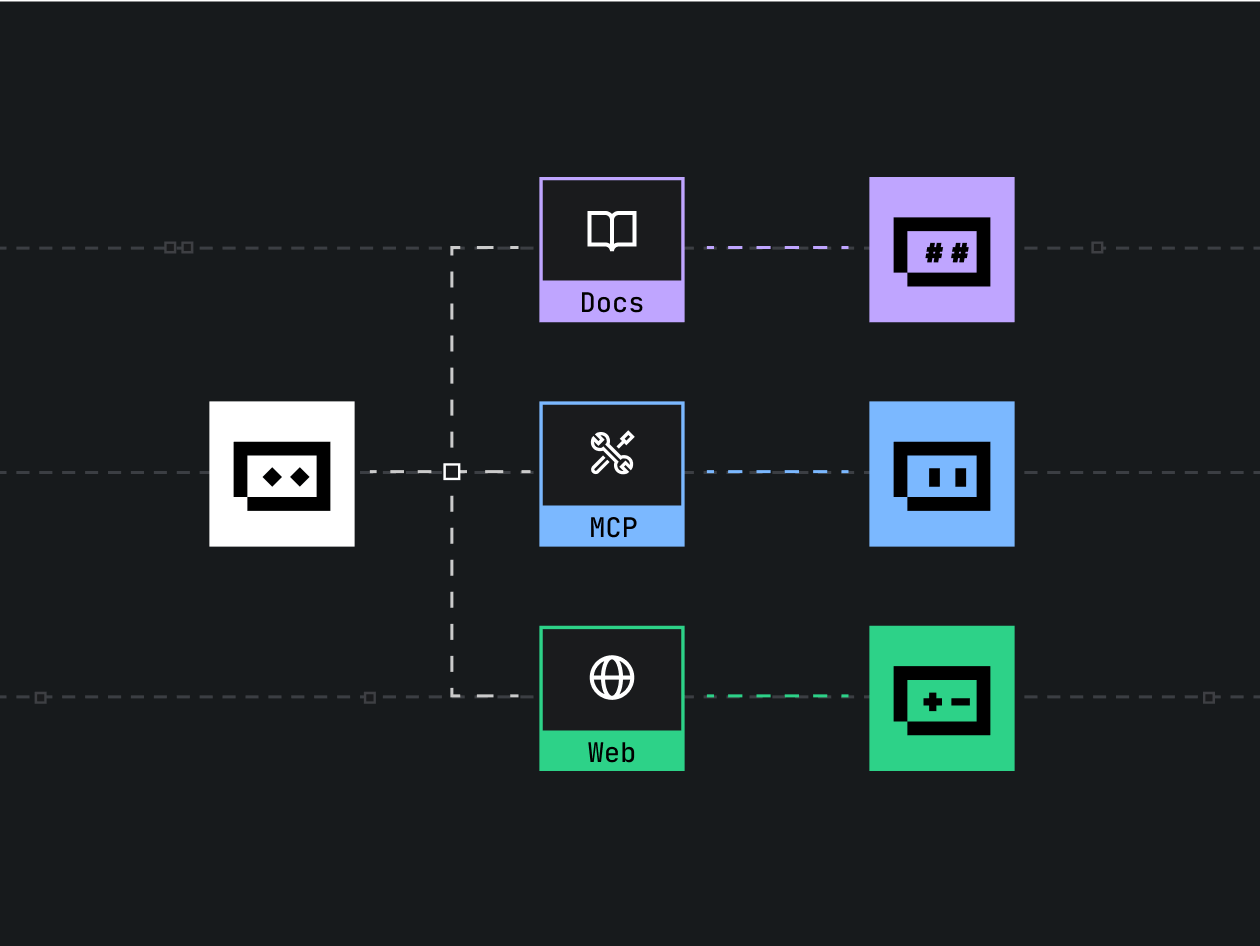

TRUST Checklist: a framework for operational ethics

The TRUST Checklist is a practical framework for engineering, product, and compliance teams. It is short, easy to adopt, and focused on measurable controls.

- Transparency: User-facing disclosures about chatbot capabilities, data usage, and when a human will take over.

- Responsibility: Clear ownership for model behavior, escalation paths, and incident response plans.

- Unbiased design: Bias audits, representative training data, and demographic performance metrics.

- Security: Data minimization, encryption standards, and access controls for sensitive data.

- Testing: Pre-deployment simulation tests, continuous monitoring, and feedback loops for model drift.

Design and governance steps for fairness and trust

AI governance for chatbots

Establish an AI governance body that sets policies, approves risk levels, and reviews audit logs. Governance should align with standards such as the NIST AI Risk Management Framework, which supports measurable risk assessments and lifecycle controls as part of enterprise risk management.

Data and model controls to ensure fairness in chatbot systems

Fairness in chatbot systems requires representative training data, label quality checks, and evaluation metrics beyond overall accuracy—use subgroup performance, false positive/negative rates per demographic, and calibration plots. Implement bias mitigation techniques (data balancing, reweighting, post-hoc calibration) and log decisions for auditability.
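As a minimal sketch of the subgroup metrics mentioned above, the following computes false positive and false negative rates per group. The function name `subgroup_error_rates`, the group labels, and the sample records are all hypothetical; a real audit would read labeled outcomes from evaluation logs.

```python
from collections import defaultdict

def subgroup_error_rates(records):
    """Return {group: {"fpr": ..., "fnr": ...}} from (group, y_true, y_pred) triples."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in records:
        if y_true and y_pred:
            counts[group]["tp"] += 1
        elif y_true and not y_pred:
            counts[group]["fn"] += 1
        elif not y_true and y_pred:
            counts[group]["fp"] += 1
        else:
            counts[group]["tn"] += 1

    rates = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["fp"] + c["tn"]
        rates[group] = {
            # None marks a rate that is undefined for this group's sample.
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
        }
    return rates

# Hypothetical evaluation records: (group, true label, predicted label).
sample = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 0), ("A", 0, 1),
    ("B", 1, 0), ("B", 1, 1), ("B", 0, 0), ("B", 0, 0),
]
rates = subgroup_error_rates(sample)
print(rates)
```

Comparing the per-group rates side by side makes gaps visible that a single overall accuracy figure would hide.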

Privacy, consent, and transparency

Provide concise, user-friendly disclosures when the chatbot collects personal data or performs decision-making affecting users. Offer opt-outs or human escalation paths and document data retention policies.

Practical deployment checklist

Checklist items map directly to the TRUST framework and are designed for sprint planning or production readiness reviews:

- Complete a risk assessment and record the owner and mitigation plan.

- Create a transparency notice and human handoff mechanism visible to users.

- Run bias audits across user subgroups and set acceptance thresholds.

- Enable logging, explainability metadata, and access controls for logs.

- Schedule post-deployment monitoring and automated alerts for drift or safety incidents.

Real-world example: resolving biased answers in a bank chatbot

Scenario: A bank deploys a chatbot to assist with loan inquiries. After launch, analytics reveal that the bot provides fewer pre-qualification suggestions to applicants from certain postal-code neighborhoods. Actions taken: the team removed the problematic response pathway as a stop-gap, reviewed the dataset and found that key income brackets were underrepresented, retrained with reweighted samples, recalibrated confidence thresholds, and added a human review flag for edge cases. Outcome: a measurable reduction in subgroup false negatives, with documented governance approval before re-release.
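The scenario does not say which reweighting scheme was used. One common option, shown here as an assumption rather than the bank's actual method, is inverse-frequency weighting, where each sample's weight is inversely proportional to the size of its group. The function name and the income-bracket labels are hypothetical.

```python
from collections import Counter

def inverse_frequency_weights(groups):
    """Weight each sample by n / (k * group_count) so every group
    contributes equally in total; weights sum to n."""
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]

# Hypothetical training set in which the "low" bracket is underrepresented.
groups = ["high", "high", "high", "low"]
weights = inverse_frequency_weights(groups)
print(weights)
```

Weights of this form can typically be passed to a training routine that accepts per-sample weights; whether this is appropriate depends on the model and on why the group was underrepresented in the first place.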

Core cluster questions

- How to audit bias in customer service chatbots?

- What metrics measure fairness in conversational AI?

- How to document human-in-the-loop processes for chatbots?

- Which controls reduce data leakage in chatbot logs?

- How to set escalation rules for automated chatbot decisions?

Practical tips for immediate impact

- Log decisions with context metadata (user locale, model version, confidence) to enable retrospective audits.

- Use lightweight A/B tests to compare fairness metrics before full rollout.

- Build a short human-review pathway for any high-risk or low-confidence cases.

- Automate alerts for shifts in subgroup performance to detect drift early.
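The first and third tips can be sketched together: a decision record that captures context metadata, plus a simple rule that routes high-risk or low-confidence cases to human review. The field names, the `CONFIDENCE_FLOOR` value, and the `route_and_log` function are illustrative assumptions, not a prescribed schema.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

CONFIDENCE_FLOOR = 0.7  # hypothetical threshold; set from your own risk policy

@dataclass
class DecisionRecord:
    timestamp: str
    model_version: str
    user_locale: str
    confidence: float
    high_risk: bool
    route: str

def route_and_log(model_version, user_locale, confidence, high_risk=False):
    """Choose a route and return the record plus its JSON log line."""
    needs_human = high_risk or confidence < CONFIDENCE_FLOOR
    record = DecisionRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        user_locale=user_locale,
        confidence=confidence,
        high_risk=high_risk,
        route="human_review" if needs_human else "automated",
    )
    return record, json.dumps(asdict(record))

record, line = route_and_log("v1.4.2", "en-GB", confidence=0.55)
print(line)
```

Keeping the record limited to metadata (locale, model version, confidence) rather than raw conversation text also fits the data minimization goal in the TRUST Checklist.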

Common mistakes and trade-offs

Common mistakes

- Assuming overall accuracy implies fairness—subgroup gaps often hide behind aggregate metrics.

- Skipping documentation of human oversight and escalation procedures.

- Logging everything without data minimization, increasing privacy risk.
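To illustrate the last mistake, the sketch below redacts obvious identifiers from a message before it is written to a log. The two regular expressions are deliberately simple assumptions (email addresses and digit runs of eight or more characters); they are not a complete PII detector, and production systems usually need a dedicated tool plus review.

```python
import re

# Illustrative patterns only; real PII detection needs broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_NUMBER = re.compile(r"\b\d{8,}\b")

def redact(text):
    """Replace email addresses and long digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_NUMBER.sub("[NUMBER]", text)

redacted = redact("Reach me at jane.doe@example.com about account 1234567890.")
print(redacted)
```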

Trade-offs to consider

Stricter safety filters reduce risky outputs but may also degrade helpfulness or frustrate users. More aggressive data retention minimization improves privacy but can limit root-cause analysis. Balancing these trade-offs requires policy decisions tied to business risk appetite and regulatory context.

Monitoring and continuous improvement

Operationalize monitoring: track uptime, user satisfaction, subgroup accuracy, and incident frequency. Set SLOs for human handoffs and incident response times. Review the TRUST Checklist regularly, and adjust the training data refresh cadence when drift exceeds thresholds.
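A drift check on subgroup accuracy can be as small as comparing current values against a stored baseline. The sketch below flags any group whose accuracy has dropped by more than a tolerance; the `max_drop` default, the locale-based groups, and the accuracy figures are all made-up assumptions for illustration.

```python
def subgroup_drift_alerts(baseline, current, max_drop=0.05):
    """Return {group: drop} for groups whose accuracy fell by more than max_drop.

    Groups missing from `current` are reported with a drop of None so that
    gaps in monitoring data are not silently ignored.
    """
    alerts = {}
    for group, base_acc in baseline.items():
        cur_acc = current.get(group)
        if cur_acc is None:
            alerts[group] = None
        elif base_acc - cur_acc > max_drop:
            alerts[group] = round(base_acc - cur_acc, 4)
    return alerts

# Hypothetical accuracy per user locale at release time and this week.
baseline = {"en-US": 0.92, "es-MX": 0.90}
current = {"en-US": 0.91, "es-MX": 0.82}
alerts = subgroup_drift_alerts(baseline, current)
print(alerts)
```

In practice the returned dictionary would feed whatever alerting channel the team already uses, with the tolerance set by the governance body.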

FAQ: Ethical AI enterprise chatbots — common questions

What are ethical AI enterprise chatbots and how do they differ from regular chatbots?

Ethical AI enterprise chatbots include explicit controls for transparency, fairness, security, and accountability that are documented and enforced through governance—unlike general-purpose chatbots that may focus only on functionality.

How should fairness in chatbot systems be measured?

Measure subgroup performance (precision/recall per demographic), false positive/negative disparity, calibration, and user satisfaction across segments. Use statistical and human evaluation to triangulate fairness issues.

What is the TRUST Checklist for building ethical chatbots?

The TRUST Checklist (Transparency, Responsibility, Unbiased design, Security, Testing) is a compact operational framework for teams to validate ethical controls from design through monitoring.

How to set up AI governance for chatbots?

Form a cross-functional oversight group, define risk categories, require documented approvals for high-risk use, and align policies with industry guidance such as the NIST AI Risk Management Framework.

Are there legal standards for ethical chatbots?

Regulatory regimes vary by jurisdiction. Follow industry standards and frameworks (for example, guidance from national standards bodies) and consult legal counsel for requirements such as data protection, discrimination law, and consumer protection.
