How to Scrape Grocery Price Data for Retail Intelligence: A Practical Guide

Grocery price data scraping is the process of collecting product pricing and availability from supermarket websites or apps to power analytics, price monitoring, or competitive intelligence. This guide explains methods, legal and technical considerations, an actionable checklist, and a real-world scenario for gathering grocery price data reliably while keeping server load and legal risk low.

Grocery price data scraping: what it is and why it matters

At its core, grocery price data scraping extracts structured information—price, unit price, promotion text, stock status, UPC/GTIN, and category—from retailer pages or APIs. Businesses use this data for price monitoring, assortment analysis, elasticity modeling, and dynamic pricing. Related terms include web scraping, screen scraping, price monitoring, product matching, GTIN/UPC lookup, and retail analytics.

Common data sources and methods

1. Public product pages

Retailer product pages are often HTML-rich and contain most of the fields needed. Extraction techniques include DOM parsing with CSS or XPath selectors and, when JavaScript loads prices dynamically, headless browser rendering before parsing.
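A minimal sketch of selector-style extraction, assuming the price sits in an element with a hypothetical class such as `price` (real retailers use their own markup). It uses only the standard-library `html.parser` so it runs without extra installs; with BeautifulSoup the equivalent lookup would be `soup.select(".price")`. The names `ClassTextExtractor` and `parse_price` are illustrative, and `parse_price` assumes US-style number formatting.

```python
from __future__ import annotations

import re
from decimal import Decimal
from html.parser import HTMLParser

# Elements that never have a closing tag; they must not change nesting depth.
VOID_TAGS = {"area", "br", "col", "embed", "hr", "img", "input", "link",
             "meta", "source", "wbr"}


class ClassTextExtractor(HTMLParser):
    """Collect the text of every element whose class list contains target_class."""

    def __init__(self, target_class: str) -> None:
        super().__init__()
        self.target_class = target_class
        self.values: list[str] = []
        self._depth = 0              # > 0 while inside a matching element
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self._depth:
            self._depth += 1         # nested element inside a match
        elif self.target_class in (dict(attrs).get("class") or "").split():
            self._depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self._depth:
            return
        self._depth -= 1
        if self._depth == 0:
            # Collapse internal whitespace and store the element's text.
            self.values.append(" ".join("".join(self._buffer).split()))
            self._buffer = []

    def handle_data(self, data):
        if self._depth:
            self._buffer.append(data)


_NUMBER = re.compile(r"\d+(?:\.\d+)?")


def parse_price(text: str) -> Decimal | None:
    """Turn text such as '$1,299.00' into Decimal('1299.00').

    Assumes a comma thousands separator and a dot decimal point.
    """
    match = _NUMBER.search(text.replace(",", ""))
    return Decimal(match.group()) if match else None
```

Using `Decimal` rather than `float` keeps prices exact, which matters later when comparing promotional and regular prices.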

2. Mobile app endpoints and APIs

Some grocery chains expose the APIs that power their own web or mobile apps. These endpoints can be faster and cleaner to parse than HTML, but check the retailer's terms of service and applicable law before calling them directly.
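Where access to such an endpoint is permitted, the parsing step is mostly a mapping from JSON to flat records. The sketch below assumes a hypothetical payload shape (`products`, `price.current`, `price.regular`, `inStock`, `gtin`); real endpoints differ, so treat the key names as placeholders.

```python
import json
from dataclasses import dataclass
from decimal import Decimal
from typing import List, Optional


@dataclass
class PriceRecord:
    retailer_sku: str
    title: str
    price: Optional[Decimal]
    regular_price: Optional[Decimal]
    in_stock: Optional[bool]
    gtin: Optional[str]


def _to_decimal(value) -> Optional[Decimal]:
    # Ints and Decimals both convert cleanly via str(); None stays None.
    return None if value is None else Decimal(str(value))


def records_from_payload(raw: str) -> List[PriceRecord]:
    """Map a hypothetical app-API JSON payload to flat price records."""
    # parse_float=Decimal keeps prices exact instead of binary floats.
    payload = json.loads(raw, parse_float=Decimal)
    records = []
    for item in payload.get("products", []):
        price = item.get("price") or {}
        records.append(PriceRecord(
            retailer_sku=str(item["id"]),   # a KeyError here signals a schema change
            title=item.get("name", ""),
            price=_to_decimal(price.get("current")),
            regular_price=_to_decimal(price.get("regular")),
            in_stock=item.get("inStock"),
            gtin=item.get("gtin"),
        ))
    return records
```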

3. Official feeds and partners

Where available, direct data feeds, EDI, or GS1-compliant product data are the most reliable sources. Consider partnerships or licensing to avoid legal risk.
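GS1 identifiers carry a check digit, so a cheap first validation step for feed or scraped GTIN/UPC values is to recompute it. This sketch implements the standard GS1 mod-10 check (weights 3 and 1 alternating from the rightmost data digit) and pads codes to 14 digits so UPC-A and EAN-13 values compare consistently; the function names are illustrative.

```python
def gtin_check_digit(body: str) -> int:
    """Compute the GS1 check digit for the data digits preceding it."""
    if not body.isdigit():
        raise ValueError("GTIN body must contain only digits")
    # Weight 3 for the rightmost data digit, then 1, 3, 1, ... moving left.
    total = sum(int(d) * (3 if i % 2 == 0 else 1)
                for i, d in enumerate(reversed(body)))
    return (10 - total % 10) % 10


def is_valid_gtin(code: str) -> bool:
    """Accept GTIN-8, GTIN-12 (UPC-A), GTIN-13 and GTIN-14 strings."""
    code = code.strip()
    if len(code) not in (8, 12, 13, 14) or not code.isdigit():
        return False
    return gtin_check_digit(code[:-1]) == int(code[-1])


def to_gtin14(code: str) -> str:
    """Left-pad a valid GTIN with zeros so every length compares as 14 digits."""
    if not is_valid_gtin(code):
        raise ValueError(f"invalid GTIN: {code!r}")
    return code.strip().zfill(14)
```

For example, the UPC-A code `036000291452` validates, and `to_gtin14` turns it into `00036000291452`.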

Technical stack essentials

Typical components: HTTP client libraries, rate limiting, rotating IP proxies, user-agent management, headless browser tools (e.g., headless Chromium), HTML parsers, product matching (fuzzy matching on titles, UPC/GTIN), and a storage layer (relational DB, search index, or data lake).
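Of these components, rate limiting is the one most directly tied to politeness. A minimal sketch of a per-host limiter, assuming a fixed minimum interval between requests to the same host (the interval value is your own politeness budget, not something retailers publish). The class name is hypothetical, and the clock and sleep functions are injectable so the logic can be tested without real waiting.

```python
import time
from typing import Callable, Dict
from urllib.parse import urlsplit


class PerHostRateLimiter:
    """Enforce a minimum interval between requests to the same host."""

    def __init__(self, min_interval: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Dict[str, float] = {}   # host -> time of last request slot

    def wait(self, url: str) -> float:
        """Block until url's host may be requested again; return seconds slept."""
        host = urlsplit(url).netloc.lower()
        now = self._clock()
        last = self._last.get(host)
        delay = 0.0
        if last is not None:
            delay = max(0.0, last + self.min_interval - now)
        if delay:
            self._sleep(delay)
        self._last[host] = now + delay
        return delay
```

Call `limiter.wait(url)` immediately before each fetch; different hosts are throttled independently, so a crawl across five competitors can proceed in parallel without hammering any single site.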

SCRAPE checklist

Use the SCRAPE checklist to plan and validate a scraping project:

- Scope: Define stores, SKUs, fields, cadence, and acceptable error rates.

- Compliance: Check robots.txt (the Robots Exclusion Protocol, RFC 9309), terms of service, and local laws.

- Reliability: Implement retries, backoff, monitoring, and data validation rules.

- Authentication: Plan for login barriers, token refresh, and session handling when required.

- Processing: Normalize units, extract numeric price fields, and store provenance metadata.

- Ethics & Efficiency: Minimize load, use caches, and prefer official feeds when possible.
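Two checklist items, Compliance and Reliability, translate directly into code. The sketch below checks an already-downloaded robots.txt with the standard-library `urllib.robotparser` (in production you would use `set_url()` and `read()`), and generates an exponential backoff schedule with full jitter for retries after errors such as HTTP 429 or 5xx. The function names and default values are assumptions. Note that the standard-library parser matches rule paths by simple prefix, so wildcard rules may not be honored.

```python
import random
from typing import List, Optional, Tuple
from urllib.robotparser import RobotFileParser


def robots_policy(robots_txt: str, user_agent: str,
                  url: str) -> Tuple[bool, Optional[float]]:
    """Return (allowed, crawl_delay_seconds) for url under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    delay = parser.crawl_delay(user_agent)   # None when no Crawl-delay applies
    allowed = parser.can_fetch(user_agent, url)
    return allowed, (float(delay) if delay is not None else None)


def backoff_delays(retries: int, base: float = 1.0, cap: float = 60.0,
                   rng: Optional[random.Random] = None) -> List[float]:
    """Delay before each retry: uniform in [0, min(cap, base * 2**n)] ("full jitter")."""
    rng = rng or random.Random()
    return [rng.uniform(0, min(cap, base * 2 ** n)) for n in range(retries)]
```

Jitter spreads retries out so that many workers hitting the same error do not all retry at the same moment.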

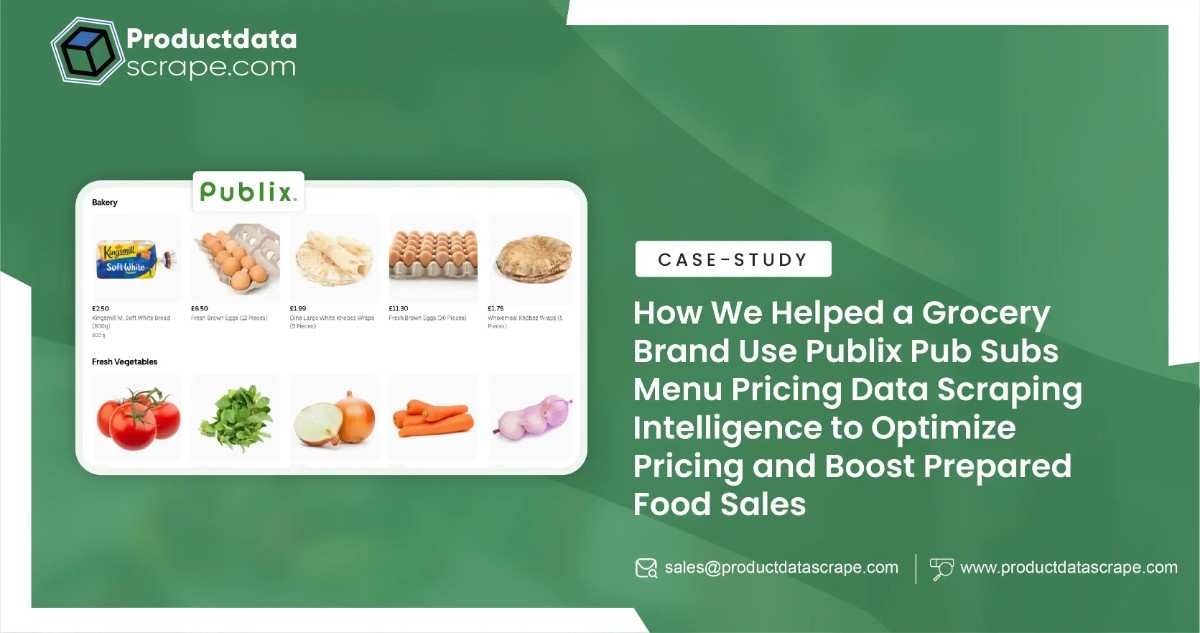

Example scenario: weekly competitor price monitoring

A regional grocery chain tracks 2,000 SKUs across five competitors every week. The pipeline uses an authenticated API where available, DOM parsing with headless rendering for JavaScript-driven pages, and a nightly job that respects rate limits. Prices, promotions, and stock status are normalized to a canonical SKU matched via UPC. Data flows to a BI tool for margin and promotion analysis. Provenance fields include timestamp, source URL, response headers, and parser version.
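The matching step in this scenario can be sketched as: exact UPC lookup first, with a fuzzy title comparison as a fallback when a competitor page exposes no UPC. This is an assumption about how such a pipeline might work, not the chain's actual method; it uses `difflib.SequenceMatcher` from the standard library, and the catalog dictionaries, function names, and the 0.85 threshold are hypothetical.

```python
import re
from difflib import SequenceMatcher
from typing import Dict, Optional


def normalize_title(title: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    return " ".join(re.sub(r"[^a-z0-9 ]+", " ", title.lower()).split())


def match_to_canonical(upc: Optional[str], title: str,
                       by_upc: Dict[str, str], titles: Dict[str, str],
                       min_ratio: float = 0.85) -> Optional[str]:
    """Return a canonical SKU, or None when no match is confident enough.

    by_upc maps UPC -> canonical SKU; titles maps canonical SKU -> title.
    min_ratio is an assumed threshold; tune it on hand-labelled pairs.
    """
    if upc and upc in by_upc:
        return by_upc[upc]
    target = normalize_title(title)
    best_sku, best_ratio = None, 0.0
    for sku, candidate in titles.items():
        ratio = SequenceMatcher(None, target, normalize_title(candidate)).ratio()
        if ratio > best_ratio:
            best_sku, best_ratio = sku, ratio
    return best_sku if best_ratio >= min_ratio else None
```

Returning `None` below the threshold, rather than the best guess, keeps uncertain matches out of the BI layer so they can be reviewed by hand.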

Practical tips for reliable scraping

- Respect robots.txt and site terms; treat those as the first compliance checkpoint.

- Rotate user-agents and IPs moderately; aggressive evasion increases legal and technical risk.

- Build robust parsing with schema validation: record nulls and parser errors for monitoring.

- Normalize prices to a unit measure (price per 100 g, per oz, per unit) for apples-to-apples comparison.
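Unit-price normalization is simple arithmetic once pack size has been parsed. A minimal sketch, assuming the quantity and a unit code are already extracted; the unit table, the reference quantities (100 g, 100 ml, 1 item), and the function name are illustrative choices. Ounces here are avoirdupois weight ounces; fluid ounces would need a volume conversion.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Tuple

# Conversion to a base unit per dimension: grams, millilitres, or items.
_TO_BASE = {
    "g": ("mass", Decimal("1")),
    "kg": ("mass", Decimal("1000")),
    "oz": ("mass", Decimal("28.349523125")),   # avoirdupois ounce in grams
    "lb": ("mass", Decimal("453.59237")),
    "ml": ("volume", Decimal("1")),
    "l": ("volume", Decimal("1000")),
    "each": ("count", Decimal("1")),
}

# Reference quantity each result is expressed per.
_PER = {"mass": Decimal("100"), "volume": Decimal("100"), "count": Decimal("1")}


def unit_price(price: Decimal, quantity: Decimal, unit: str,
               pack_count: int = 1) -> Tuple[str, Decimal]:
    """Return (dimension, price per 100 g / 100 ml / item), rounded to 4 places.

    Raises KeyError for unit codes missing from the table.
    """
    dimension, factor = _TO_BASE[unit.lower()]
    base_qty = quantity * factor * pack_count
    per_ref = price / base_qty * _PER[dimension]
    return dimension, per_ref.quantize(Decimal("0.0001"), rounding=ROUND_HALF_UP)
```

For example, a 1 L carton at 3.49 works out to 0.3490 per 100 ml, while a six-pack of 330 ml cans at 4.99 works out to 0.2520 per 100 ml, so the multipack is cheaper per unit despite the higher shelf price.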

Legal, ethical, and standards considerations

Legal risk varies by jurisdiction. Some courts have held that scraping publicly accessible pages is lawful; others have ruled against scraping content behind access controls. Follow published conventions such as the Robots Exclusion Protocol, and consult counsel before commercial use. Respect rate limits, don’t circumvent paywalls or authentication, and prefer licensed feeds where possible. If personal data is involved, also comply with data protection laws.

Trade-offs and common mistakes

Trade-offs

- Speed vs. politeness: Faster crawls give fresher data but increase blocking risk from retailers.

- Accuracy vs. coverage: Deep parsing and product matching increase accuracy but require more engineering effort.

- Proxy cost vs. reliability: High-quality proxy networks reduce IP bans but add budget pressure.

Common mistakes

- Assuming page HTML structure is stable—implement schema checks and alerts.

- Failing to store provenance—without source metadata, data audits are impossible.

- Not normalizing units—comparisons across pack sizes will be skewed.
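Storing provenance can be as simple as attaching a small record to every parsed row. A minimal sketch, assuming you keep the raw payload elsewhere and link to it by SHA-256 hash; the field names, the function name, and the choice of which response headers to keep are assumptions. Dropping headers such as `Set-Cookie` avoids storing session data that may count as personal data.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Dict

# Response headers worth keeping for audits (an assumed allow-list).
_KEEP_HEADERS = {"date", "etag", "last-modified", "content-type"}


@dataclass(frozen=True)
class Provenance:
    source_url: str
    fetched_at: str          # ISO-8601 UTC timestamp
    parser_version: str
    payload_sha256: str      # links the parsed record to the stored raw payload
    response_headers: Dict[str, str] = field(default_factory=dict)


def make_provenance(source_url: str, raw_payload: bytes,
                    headers: Dict[str, str], parser_version: str) -> Provenance:
    kept = {k: v for k, v in headers.items() if k.lower() in _KEEP_HEADERS}
    return Provenance(
        source_url=source_url,
        fetched_at=datetime.now(timezone.utc).isoformat(),
        parser_version=parser_version,
        payload_sha256=hashlib.sha256(raw_payload).hexdigest(),
        response_headers=kept,
    )
```

`json.dumps(asdict(record))` serializes the record for storage next to the parsed price row.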

Related questions

- How to match products across retailers using UPC/GTIN and fuzzy matching?

- What are safe rate limits and crawling schedules for price monitoring?

- How to normalize unit pricing across different pack sizes and promotions?

- What techniques detect dynamic pricing or flash promotions in grocery stores?

- How to build monitoring and alerts for parser failures or price anomalies?

Data quality, storage, and validation

Store raw HTML/JSON payloads alongside parsed records. Maintain a validation layer that checks numeric ranges, required fields, and cross-field consistency (e.g., promotional price < regular price). Use indexes on SKU, store, and timestamp to support time-series queries and change detection.
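The validation layer described above can start as a function that returns a list of problems per record. This sketch checks required fields, a numeric price range, and the promo-below-regular rule; the field names and the price bounds are assumptions to adapt to your own schema and catalog.

```python
from decimal import Decimal
from typing import Any, Dict, List

REQUIRED = ("sku", "store", "timestamp", "price")


def validate(record: Dict[str, Any],
             min_price: Decimal = Decimal("0.01"),
             max_price: Decimal = Decimal("1000")) -> List[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = [f"missing {name}" for name in REQUIRED if record.get(name) is None]
    price = record.get("price")
    if price is not None and not (min_price <= price <= max_price):
        problems.append(f"price {price} outside [{min_price}, {max_price}]")
    # Cross-field consistency: a promotion should undercut the regular price.
    promo, regular = record.get("promo_price"), record.get("regular_price")
    if promo is not None and regular is not None and promo >= regular:
        problems.append("promo_price is not below regular_price")
    return problems
```

Returning all problems instead of raising on the first one makes it easy to log failure counts per parser and per retailer.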

Monitoring and maintenance

Set up health checks for response times, parser error rates, and changes in DOM structure signatures. Trigger an alert automatically when a parser’s error rate exceeds a threshold, and version parsers so it’s clear which code produced which dataset.
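One way to build a DOM pattern signature is to hash the set of (tag, class) pairs on a page while ignoring text, so a price change leaves the signature intact but a renamed class changes it. This sketch is an assumed approach rather than a standard; the function names and the 5% error threshold are illustrative.

```python
import hashlib
from html.parser import HTMLParser


class _TagClassCollector(HTMLParser):
    """Record each distinct (tag, sorted class list) pair; text is ignored."""

    def __init__(self) -> None:
        super().__init__()
        self.pairs = set()

    def handle_starttag(self, tag, attrs):
        # Sort classes so their order in the attribute does not matter.
        classes = " ".join(sorted((dict(attrs).get("class") or "").split()))
        self.pairs.add((tag, classes))


def dom_signature(html: str) -> str:
    """Hash page structure so price or text changes do not alter the signature."""
    collector = _TagClassCollector()
    collector.feed(html)
    collector.close()
    canonical = "\n".join(f"{tag}.{cls}" for tag, cls in sorted(collector.pairs))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def should_alert(errors: int, total: int, threshold: float = 0.05) -> bool:
    """Alert when the share of failed parses in a run exceeds the threshold."""
    return total > 0 and errors / total > threshold
```

Using a set rather than counts means listing pages with a varying number of product tiles keep the same signature.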

FAQ

What is grocery price data scraping?

Grocery price data scraping is the automated extraction of pricing and availability information from grocery retailers' web pages, apps, or feeds for analytics, competitive monitoring, and pricing strategies.

Is scraping grocery websites legal?

Legality depends on jurisdiction, the retailer's terms of service, and whether access restrictions are circumvented. Respect robots.txt, avoid unauthorized access, and consult legal counsel for commercial projects.

How often should price monitoring run?

Cadence depends on goals: daily or weekly for strategic tracking, hourly for dynamic pricing, and near-real-time for promotion detection. Balance freshness with politeness and cost.

What tools help with supermarket price scraping?

Common tools: HTTP libraries, headless browsers (Puppeteer, Playwright), HTML parsers (BeautifulSoup, lxml), and data pipeline tools. Use proxy providers and monitoring for production reliability.

How to handle CAPTCHAs and login-protected price pages?

Do not bypass CAPTCHAs or use credential stuffing. Work with retailers for authorized access, or use partner feeds and APIs. If authentication is permitted, implement secure credential storage and session management.