How AI Builds Kids’ Empathy, Creativity & Curiosity: A Practical Guide


Artificial intelligence offers new ways to support child development across social-emotional learning, creative practice, and exploratory thinking. This article explains how AI can help teach empathy, creativity, and curiosity, which techniques are involved, and how parents and educators can put practical patterns into use without losing sight of safety and ethics.

- AI supports empathy through role-play, perspective-taking prompts, and emotionally intelligent feedback.

- Creativity is amplified with generative tools, collaborative prompts, and scaffolded remixing tasks.

- Curiosity is stoked by personalized question paths, adaptive puzzles, and safe exploration environments.

- Use a simple framework (C.A.R.E.) and a short checklist before deploying any AI activity with children.


How AI is teaching empathy, creativity and curiosity

Defining the terms

Treating these as separate concepts clarifies design choices. Empathy refers to perspective-taking and emotional recognition; creativity covers idea generation, flexible thinking, and combining concepts; curiosity is the motivation to explore, ask questions, and persist when answers are incomplete. AI systems can target each domain through specific interaction patterns, complementing human guidance rather than replacing it.

How it works: key techniques and technologies

Natural language role-play and perspective-taking

Chat-based agents and scripted role-play scenarios let children safely take on different viewpoints. Through guided prompts and branching responses, AI systems model emotional cues, offer gentle challenges, and ask reflective questions that build empathy and self-awareness. These approaches rely on natural language processing and dialog management to keep the conversation coherent.
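A branching role-play script can be pictured as a small graph: each node pairs a character's line with a reflective question, and the child's choice selects the next node. The sketch below is a minimal, hand-authored illustration; the character Sam, the node names, and the `run` helper are hypothetical and not taken from any product. A real system would typically generate or select lines with a language model and filter them for safety.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step in a hypothetical role-play script."""

    line: str     # what the character says
    reflect: str  # reflective question asked after the line
    choices: dict[str, str] = field(default_factory=dict)  # child's choice -> next node id


SCENARIO = {
    "start": Node(
        "Hi... I just moved here and I don't know anyone.",
        "How do you think Sam feels on the first day?",
        {"invite": "invited", "ignore": "ignored"},
    ),
    "invited": Node(
        "Really? Thanks! Can I sit with you at lunch?",
        "What changed for Sam when you invited them?",
    ),
    "ignored": Node(
        "Oh. Okay. I'll sit by myself, I guess.",
        "What could you say next time to help Sam feel welcome?",
    ),
}


def run(choices: list[str], start: str = "start") -> list[str]:
    """Walk the script along the child's choices and return the transcript."""
    node = SCENARIO[start]
    transcript = [f"Sam: {node.line}", f"Reflect: {node.reflect}"]
    for choice in choices:
        next_id = node.choices.get(choice)
        if next_id is None:  # unrecognized choice or end of script: stop here
            break
        node = SCENARIO[next_id]
        transcript += [f"Sam: {node.line}", f"Reflect: {node.reflect}"]
    return transcript


for entry in run(["invite"]):
    print(entry)
```

Every character line is followed by a reflection question, so the perspective-taking prompt is built into the structure rather than left to chance. An unrecognized choice simply ends the walk at the current node.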

Generative tools and creative scaffolding

Generative AI produces images, text, music, or code that children can remix. When combined with constraints and iterative prompts, these tools teach creative workflows—brainstorm, prototype, revise. Techniques include constrained prompts, suggestion lifts (offering one detail and asking the student to change or expand it), and collaborative co-creation where AI suggests alternatives.
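Constrained prompts and suggestion lifts can be expressed as simple prompt templates. This is a minimal sketch under stated assumptions: the function names and wording are hypothetical, and the code only builds text to show the child or pass to a generative tool; it does not call any model.

```python
import random


def constrained_prompt(topic: str, constraints: list[str]) -> str:
    """Narrow an open creative task with explicit, child-friendly rules."""
    return f"Write a short story about {topic}. Rules: {'; '.join(constraints)}."


def suggestion_lift(details: list[str], rng: random.Random) -> str:
    """Offer one detail and invite the child to change or expand it."""
    detail = rng.choice(details)
    return (
        f"Here is one idea: {detail}. "
        "Can you change one thing about it, or add what happens next?"
    )


rng = random.Random(7)  # seeded so a lesson plan can reproduce the same suggestion
print(constrained_prompt("a lost robot", ["under 100 words", "include one sound"]))
# prints: Write a short story about a lost robot. Rules: under 100 words; include one sound.
print(suggestion_lift(["the robot's antenna glows when it is sad",
                       "the robot can only walk backwards"], rng))
```

The suggestion lift deliberately hands over only one detail, leaving the child to do the changing and expanding, which keeps the AI in the role of collaborator rather than author.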

Adaptive curiosity paths and discovery-driven feedback

Adaptive learning algorithms adjust difficulty and hinting based on a child's responses, preserving a productive zone of challenge. Curiosity is reinforced when the system poses open-ended questions, creates micro-goals, and gives informative feedback that encourages further exploration rather than simply telling the answer.
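One simple way to keep a child in a productive zone of challenge is a staircase rule: raise difficulty after a streak of correct answers, give a hint after a miss, and step down only after repeated misses. The sketch below is a hypothetical rule-based controller, not the algorithm of any particular platform; the thresholds (two in a row, levels 1 to 5) are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveSession:
    """Hypothetical staircase controller for puzzle difficulty."""

    level: int = 1
    min_level: int = 1
    max_level: int = 5
    streak: int = 0  # consecutive correct answers at the current level
    misses: int = 0  # consecutive incorrect answers

    def record(self, correct: bool) -> str:
        """Update the level after one answer and return the next teaching move."""
        if correct:
            self.misses = 0
            self.streak += 1
            if self.streak >= 2 and self.level < self.max_level:
                self.level += 1
                self.streak = 0
                return "raise difficulty"
            return "ask an open-ended follow-up"
        self.streak = 0
        self.misses += 1
        if self.misses >= 2 and self.level > self.min_level:
            self.level -= 1
            self.misses = 0
            return "lower difficulty"
        return "give a hint"


session = AdaptiveSession()
for answer in [True, True, False, False]:
    print(session.record(answer), "-> level", session.level)
```

A single miss produces a hint rather than an easier task, and a correct answer that does not change the level yields an open-ended follow-up instead of the next answer, matching the goal of encouraging exploration over simply telling.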

Social-emotional signal processing

Some platforms evaluate sentiment, facial expressions, or vocal tone to offer emotionally sensitive responses. When used carefully and with consent, these signals help the system mirror feelings, ask check-in questions, and propose calming strategies—behaviors that support empathy-building exercises.
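The mirror-and-check-in pattern can be sketched with text alone, which avoids facial or voice data entirely. The keyword lists, replies, and `check_in` function below are hypothetical; keyword matching is far cruder than the models real platforms use, so the sketch routes anything unclear or mixed to an adult instead of guessing.

```python
import re

# Hypothetical word lists; real platforms use trained models, not keyword lists.
FEELING_WORDS = {
    "sad": {"sad", "lonely", "upset", "cry", "crying"},
    "angry": {"angry", "mad", "unfair"},
    "worried": {"worried", "scared", "nervous"},
}

# Replies that mirror the feeling and offer a check-in or a calming strategy.
REPLIES = {
    "sad": "It sounds like you might be feeling sad. Do you want to tell me more?",
    "angry": "It sounds like you might be feeling angry. Want to take three slow breaths together?",
    "worried": "It sounds like you might be worried. What is one thing that could help right now?",
}


def check_in(message: str) -> tuple[str, bool]:
    """Return (reply, needs_adult_review) for one message from the child."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    matches = [feeling for feeling, vocab in FEELING_WORDS.items() if words & vocab]
    if len(matches) == 1:
        return REPLIES[matches[0]], False
    # No signal or mixed signals: ask a neutral question and flag for a human.
    return "How are you feeling right now?", True


print(check_in("I feel lonely today."))
```

The second value in the result is the important design choice: the system only mirrors a feeling when the signal is unambiguous, and otherwise hands the judgment to an adult.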

The C.A.R.E. framework for safe, learning-centered AI activities

Use the C.A.R.E. checklist before introducing an AI activity:

- Context: Is the activity appropriate for the child's age and cultural background?

- Agency: Does the child retain control and choice in the task?

- Reflection: Is there a built-in reflection prompt to process learning and emotions?

- Ethics: Are privacy, safety, and bias minimization addressed?
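Teams that build or configure AI activities might record the C.A.R.E. review alongside each activity so that gaps are visible before launch. A minimal sketch, assuming each question is answered with a simple yes or no; the `CareReview` class is hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class CareReview:
    """Yes/no answers to the four C.A.R.E. questions for one planned activity."""

    context: bool     # appropriate for the child's age and cultural background?
    agency: bool      # does the child keep control and choice?
    reflection: bool  # is there a built-in reflection prompt?
    ethics: bool      # are privacy, safety, and bias minimization addressed?

    def gaps(self) -> list[str]:
        """Names of the C.A.R.E. items that are not yet satisfied."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready(self) -> bool:
        """True only when all four items are satisfied."""
        return not self.gaps()


review = CareReview(context=True, agency=True, reflection=False, ethics=True)
print(review.gaps())   # prints: ['reflection']
print(review.ready())  # prints: False
```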

Classroom example

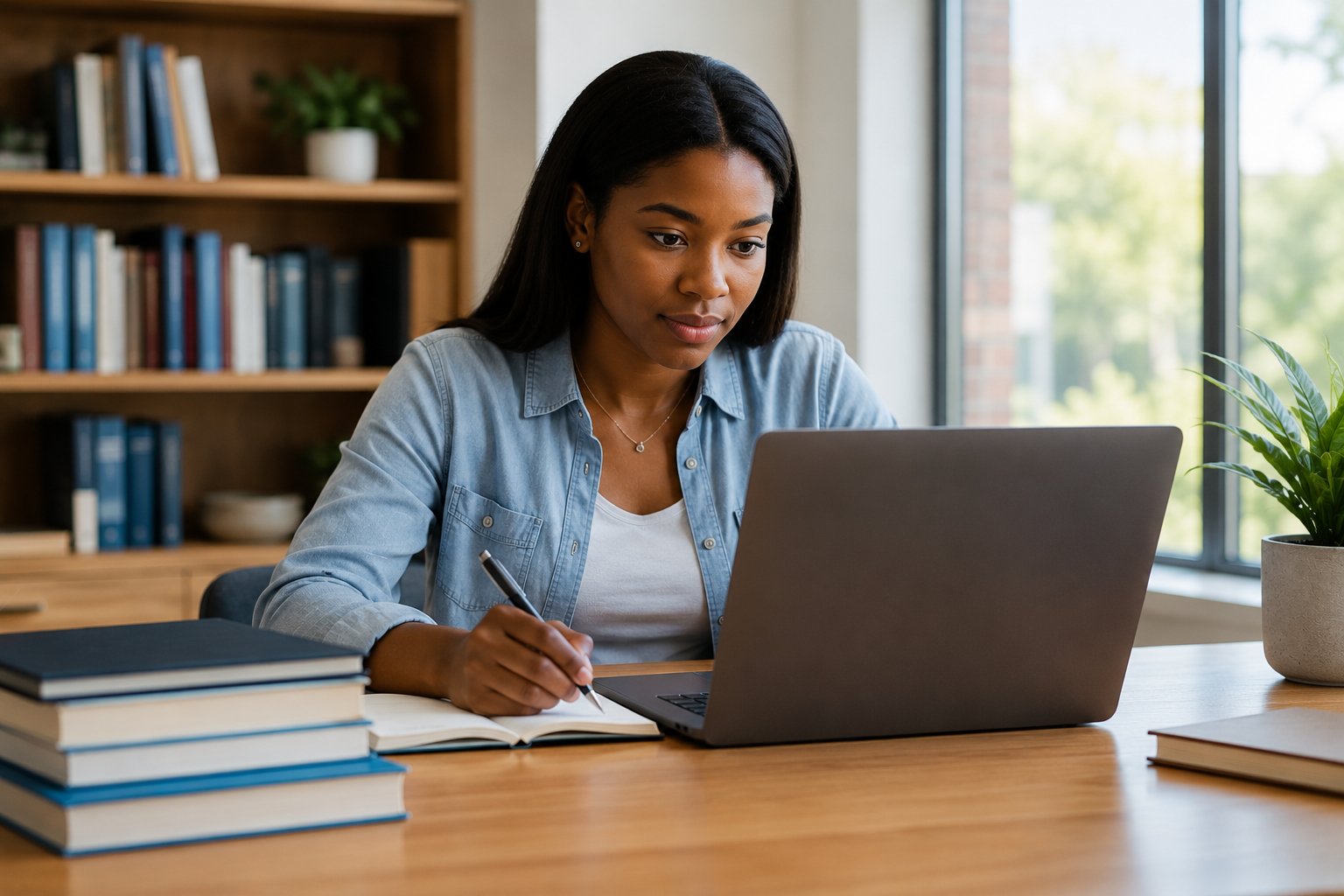

Scenario: A 3rd-grade teacher runs a two-day unit called 'A Day in Someone Else's Shoes.' Students use a chat-based story generator to write short diaries from another character's perspective: a refugee child, a new student, or an elderly neighbor. The AI provides starter sentences, suggests emotions to explore, and asks follow-up questions that prompt reflection (Why might they feel that way? What could help?). On day two, students remix their peers' stories into short role-play scenes and discuss the choices they made. Throughout, adaptive prompts keep the difficulty appropriate, and the unit ends with a reflection sheet where students note what surprised them.

Practical tips for parents and educators

- Set learning goals first: decide whether the focus is empathy, creative fluency, or curiosity-driven inquiry, and choose prompts accordingly.

- Use AI as an assistant, not the authority: pair AI responses with teacher-led debriefs and peer discussion to deepen learning.

- Limit personal data: avoid uploading identifiable photos or voice samples unless consent and secure storage are guaranteed.

- Design short cycles: 10–20 minute AI activities followed by human-guided reflection tend to work better than long unsupervised sessions.

Trade-offs and common mistakes

Trade-offs include:

- Engagement vs. dependency: Highly engaging AI might reduce effortful persistence if the system provides answers too quickly.

- Personalization vs. privacy: Tailored prompts help learning but require careful data governance and transparency.

- Scaffolding vs. challenge: Over-scaffolding can limit creative risk-taking; under-scaffolding can frustrate curiosity.

Common mistakes to avoid:

- Skipping the reflection phase: without reflection, emotional and cognitive gains are likely to be much smaller.

- Assuming AI interpretations of emotion are flawless: validate ambiguous assessments with human observation.

- Deploying tools without an exit plan: ensure students can continue the work without the AI and that learning transfers.

Related questions

- Can AI replace human-led social-emotional learning activities?

- Which age groups benefit most from AI-driven creative prompts?

- How do adaptive algorithms support sustained curiosity in learners?

- What privacy safeguards are recommended for AI tools used with children?

- How can you evaluate whether an AI activity increased empathy or creative thinking?

For design and policy guidance on AI in education, consult established international guidance such as UNESCO's resources on AI in education, which set out principles and frameworks for aligning practice with human-rights-based approaches.

One-page checklist before an AI activity

- Goal defined: empathy, creativity, or curiosity?

- Consent and privacy checked

- Reflection prompt prepared

- Human debrief scheduled

- Exit plan for offline continuation

Measuring success

Practical assessment combines qualitative reflection prompts, short rubrics for perspective-taking and idea fluency, and simple metrics such as number of follow-up questions asked or revisions made to a creative work. Use pre/post reflection prompts to detect shifts in thinking and feeling rather than relying solely on system-internal logs.
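The pre/post comparison can be computed with a few lines of code once counts and rubric scores are recorded for each student. The sketch below assumes hypothetical field names and a 1 to 4 rubric scale; it averages post-minus-pre changes only for students measured both times. Averages like these are a starting point for discussion, not proof that the AI activity caused the change, and they should sit alongside students' own reflections.

```python
from dataclasses import dataclass, fields
from statistics import mean


@dataclass
class Snapshot:
    """Hypothetical per-student measures recorded before or after an activity."""

    follow_up_questions: int  # questions the student asked
    revisions: int            # revisions made to a creative work
    rubric_score: int         # perspective-taking rubric, assumed 1-4 scale


def average_change(pre: dict[str, Snapshot], post: dict[str, Snapshot]) -> dict[str, float]:
    """Mean post-minus-pre change per measure, for students measured both times."""
    students = sorted(pre.keys() & post.keys())
    if not students:
        raise ValueError("no student has both pre and post measurements")
    return {
        f.name: mean(getattr(post[s], f.name) - getattr(pre[s], f.name) for s in students)
        for f in fields(Snapshot)
    }


pre = {"ana": Snapshot(1, 0, 2), "ben": Snapshot(2, 1, 2)}
post = {"ana": Snapshot(3, 2, 3), "ben": Snapshot(2, 2, 3), "cy": Snapshot(4, 3, 3)}
print(average_change(pre, post))  # "cy" has no pre measurement, so is excluded
```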

How is AI teaching empathy, creativity and curiosity in classrooms?

AI supports these outcomes by structuring interactions that encourage perspective-taking, offering generative prompts for creative iteration, and adapting tasks to maintain curiosity. Crucially, the best outcomes tend to occur when AI activities are paired with human facilitation, reflection, and safeguards for privacy and fairness.

Can AI accurately detect emotions in children?

Emotion detection models can identify cues like sentiment and facial expressions with varying accuracy. They are tools to inform human judgment, not replace it. Verification and adult oversight are essential, and consent plus transparent data handling must be in place.

What safety steps should parents take before letting kids use generative AI tools?

Check privacy policies, avoid uploading identifying data, set time limits, preview prompts and outputs, and plan a debrief to discuss why certain responses may be biased or inaccurate.

How can educators measure growth in empathy or creativity?

Use short rubrics, reflective journals, peer feedback, and performance tasks where students must show perspective-taking or produce multiple original ideas. Combine qualitative observations with simple quantitative counts (e.g., number of distinct ideas generated).

Is AI appropriate for very young children (preschool to early elementary)?

Age-appropriate AI activities can work if content is carefully curated, interactions are short and supervised, and any use of biometric sensing is avoided. Emphasis should be on guided play and human scaffolding rather than autonomous interactions.