Reduce Time to Market with AI: A Practical Guide for Software Teams


Reducing cycle time is a top priority for product teams. This guide explains how to reduce time to market with AI by mapping concrete levers—code generation, automated testing, CI/CD automation, and smarter planning—into everyday workflows. The goal is faster, safer delivery without introducing undue technical debt.


How AI reduces time to market: the main levers

AI shortens time-to-market by accelerating tasks that are repetitive, pattern-based, or data-driven. Primary levers include AI-assisted code and test generation, intelligent code review and linting, automated release orchestration, and insights-driven prioritization from telemetry and user data. These levers work best when combined with solid engineering practices such as CI/CD and automated testing.

FAST-to-Market framework

The FAST-to-Market framework organizes practices into four repeatable steps so teams can adopt AI safely and measurably:

- F (Feature focus): Limit scope to the smallest deliverable product (an MVP) and prioritize features by user value and risk.

- A (Automate pipeline): Apply CI/CD, automated builds, dependency scanning, and release gating.

- S (Shift-left quality): Move testing, security checks, and static analysis earlier in the cycle, using AI where it reduces manual effort.

- T (Telemetry & iterate): Instrument product usage and collect feedback to guide rapid iterations.
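To make "prioritize by user value and risk" concrete, here is a minimal Python sketch of scope trimming. The 1 to 5 scales, the `value / risk` score, and the names `Feature`, `priority`, and `pick_mvp` are all hypothetical. The sketch assumes lower-risk items should land in the MVP first; a team that prefers to retire risk early would invert the score.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    user_value: int  # hypothetical 1-5 scale, higher means more user value
    risk: int        # hypothetical 1-5 scale, higher means riskier to build


def priority(feature: Feature) -> float:
    # Hypothetical heuristic: favor high value and low risk.
    return feature.user_value / feature.risk


def pick_mvp(features: list[Feature], max_flows: int = 3) -> list[str]:
    # Rank by score (Python's sort is stable, so ties keep input order)
    # and keep only the top few flows for the MVP.
    ranked = sorted(features, key=priority, reverse=True)
    return [f.name for f in ranked[:max_flows]]


backlog = [
    Feature("login", user_value=5, risk=1),
    Feature("sso", user_value=5, risk=3),
    Feature("reports", user_value=3, risk=1),
    Feature("billing", user_value=4, risk=2),
    Feature("dark-mode", user_value=1, risk=1),
]
print(pick_mvp(backlog))  # ['login', 'reports', 'billing']
```

The cap of three mirrors a three-flow MVP; anything below the cut goes to the backlog for a later iteration informed by telemetry.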

FAST-to-Market checklist

- Define an MVP with no more than three core flows.

- Automate builds and tests for each pull request (CI with artifact storage).

- Use AI for small, repeatable tasks only: boilerplate, unit tests, and suggestion-based refactors.

- Gate merges with automated tests and security scans.

- Collect runtime telemetry and tie metrics back to product decisions.
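The "gate merges" item can be expressed as a single policy check. This is a minimal Python sketch, not any CI provider's API: `PullRequestStatus` and `merge_allowed` are hypothetical names, and it assumes your CI already reports test results, security-scan findings, and the number of human approvals.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PullRequestStatus:
    tests_passed: bool
    # Unresolved findings from dependency, license, or security scanners.
    security_findings: list[str] = field(default_factory=list)
    human_approvals: int = 0


def merge_allowed(pr: PullRequestStatus, required_approvals: int = 1) -> tuple[bool, list[str]]:
    """Return (allowed, reasons); reasons lists every gate that blocked the merge."""
    reasons: list[str] = []
    if not pr.tests_passed:
        reasons.append("automated tests failed")
    if pr.security_findings:
        reasons.append(f"{len(pr.security_findings)} unresolved security finding(s)")
    if pr.human_approvals < required_approvals:
        # AI-generated or not, every change needs a human reviewer.
        reasons.append("missing human review")
    return (not reasons, reasons)


print(merge_allowed(PullRequestStatus(tests_passed=True, human_approvals=1)))
# (True, [])
```

Collecting every failing reason, rather than stopping at the first, gives the author one complete list to fix in a single pass.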

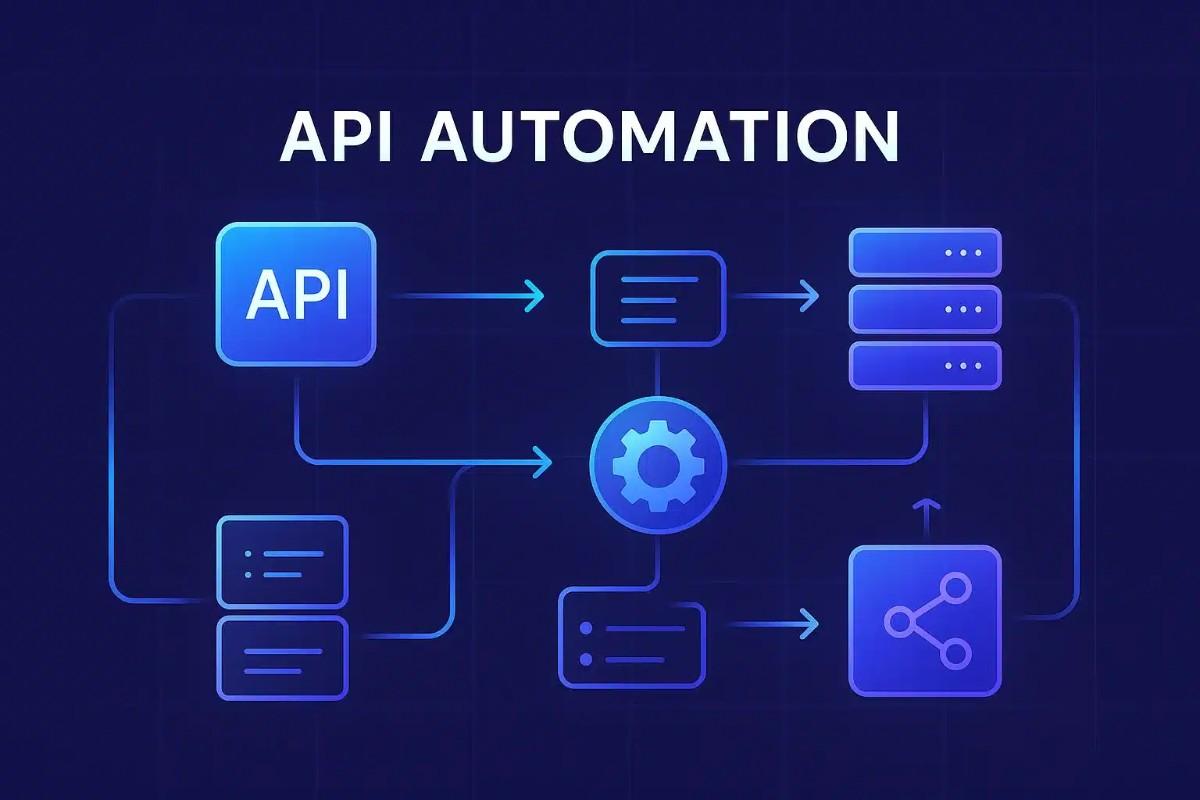

AI-driven development workflow and common integrations

Common points where AI reduces friction include:

- AI-assisted code completion and snippet generation to reduce boilerplate.

- Automated unit and integration test generation that produces starter tests and raises coverage quickly.

- Intelligent code review helpers that spot potential bugs, style issues, and risky patterns.

- Release automation that uses predictive gating and anomaly detection to reduce manual verification time.
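Predictive gating can be as sophisticated as a trained model, but the core idea is comparing a release candidate against a baseline. The sketch below swaps in a plain z-score threshold on canary error rates, a deliberately simple stand-in for whatever anomaly detection a release tool actually uses; the function name, the 3.0 threshold, and the sample rates are assumptions for illustration.

```python
from __future__ import annotations

import statistics


def canary_is_anomalous(baseline: list[float], canary: float, z_threshold: float = 3.0) -> bool:
    """Flag the canary if its error rate sits far above the baseline distribution.

    `baseline` holds error rates from recent healthy deploys (needs at least 2 values).
    """
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        # Perfectly flat baseline: any increase counts as anomalous.
        return canary > mean
    z_score = (canary - mean) / stdev
    return z_score > z_threshold


healthy = [0.010, 0.012, 0.011, 0.009, 0.010]
print(canary_is_anomalous(healthy, 0.011))  # False: within normal variation
print(canary_is_anomalous(healthy, 0.050))  # True: hold the rollout
```

In a pipeline, a `True` result would typically pause promotion and notify a human rather than act silently, which keeps the automated verification reviewable.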

Reference to best practices

These practices align with the Agile and continuous delivery principles promoted by organizations such as the Agile Alliance and by the broader continuous delivery community. For an overview of continuous delivery principles, see the Agile Alliance's published resources.

Real-world scenario

Scenario: A five-person SaaS product team needed an MVP to validate an enterprise integration. The team used the FAST-to-Market framework: trimmed the scope to two core integrations (Feature focus), set up CI with automated builds and container images (Automate pipeline), used AI to generate initial unit test scaffolds and repetitive API client code (Shift-left quality), and shipped telemetry to measure success criteria (Telemetry & iterate). Result: shorter feedback loops, earlier customer validation, and fewer manual release tasks—reducing calendar time to first usable release from months of ad-hoc work to a focused 8–10 week cycle.

Practical tips to safely reduce time to market with AI

- Start small: Apply AI to well-defined, low-risk tasks such as generating unit tests or documentation stubs, not core business logic.

- Instrument everything: Track PR-to-merge cycle time, test flakiness, and rollback rates to measure impact.

- Enforce human review: Treat AI output as a draft—require code review and static analysis before merging.

- Keep CI fast: Parallelize tests and run a quick smoke suite on PRs to preserve developer flow.

- Audit AI usage: Version AI prompts and record policy decisions so outputs are reproducible and accountable.
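Versioning prompts can start with a content hash. The minimal Python sketch below builds an audit record for one AI-assisted change; the field names and the `example-model` identifier are hypothetical, and it assumes records are appended to whatever log store the team already keeps.

```python
from __future__ import annotations

import hashlib
import json
from datetime import datetime, timezone


def sha256_text(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def prompt_audit_record(template: str, model: str, params: dict, output: str) -> dict:
    # Canonical JSON (sorted keys) so identical inputs always hash the same way.
    prompt_key = json.dumps(
        {"template": template, "model": model, "params": params}, sort_keys=True
    )
    return {
        "prompt_hash": sha256_text(prompt_key),
        "model": model,
        "params": params,
        "output_hash": sha256_text(output),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


record = prompt_audit_record(
    template="Write pytest unit tests for {function_name}.",
    model="example-model",  # hypothetical model identifier
    params={"temperature": 0.2},
    output="def test_add(): ...",
)
print(json.dumps(record, indent=2))
```

Records that share a `prompt_hash` came from the same template, model, and parameters, so differing `output_hash` values expose non-deterministic output that reviewers should know about.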

Trade-offs and common mistakes

Adopting AI accelerators introduces trade-offs that must be managed:

- Quality vs. Velocity: Relying too much on AI-generated code without review increases technical debt.

- Over-automation: Automating on top of fragile pipelines or flaky tests slows teams down, because every spurious failure needs manual triage; automation must be reliable before teams depend on it.

- Security and compliance: Unvetted AI outputs may introduce license or data leakage issues; enforce scanning and policy checks.

- False savings: Speed gains on small tasks can be offset by increased maintenance if AI suggestions are not curated.
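Before automating on top of a test suite, it helps to find the flaky tests. One common signal, used in the minimal Python sketch below, is a test that both passed and failed on the same commit; the `(commit, test, passed)` record format and the function name are assumptions.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Iterable


def find_flaky_tests(runs: Iterable[tuple[str, str, bool]]) -> list[str]:
    """Return tests that both passed and failed on the same commit.

    Each run is (commit_sha, test_name, passed).
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for commit_sha, test_name, passed in runs:
        outcomes[(commit_sha, test_name)].add(passed)
    # Same code, different results: the failure is not explained by a code change.
    return sorted({test for (_, test), seen in outcomes.items() if len(seen) == 2})


ci_history = [
    ("a1b2c3", "test_login", True),
    ("a1b2c3", "test_login", False),
    ("a1b2c3", "test_export", False),
    ("a1b2c3", "test_export", False),
    ("d4e5f6", "test_search", True),
]
print(find_flaky_tests(ci_history))  # ['test_login']
```

A consistently failing test such as `test_export` is not flagged, since it points to a real defect rather than flakiness. Flagged tests are candidates for quarantine or repair before they are allowed to block merges.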

Measuring impact

Key metrics to track after introducing AI into delivery workflows:

- Lead time for changes (PR opened → production deploy)

- Change failure rate (deploys that require rollback or hotfix)

- Mean time to recovery (MTTR)

- Developer cycle time for common tasks (e.g., scaffolding, writing tests)
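The first three metrics can be computed from deploy records alone. This minimal Python sketch assumes a hypothetical `Deploy` record per production deploy and approximates MTTR by measuring from the failing deploy to service restoration; real pipelines may timestamp incidents differently.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deploy:
    pr_opened: datetime
    deployed: datetime
    failed: bool = False                 # required a rollback or hotfix
    restored: Optional[datetime] = None  # when service recovered, if it failed


def delivery_metrics(deploys: list[Deploy]) -> dict:
    if not deploys:
        raise ValueError("need at least one deploy")
    lead_times = [d.deployed - d.pr_opened for d in deploys]
    failures = [d for d in deploys if d.failed]
    recoveries = [d.restored - d.deployed for d in failures if d.restored is not None]
    return {
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        # Mean recovery time; None when no failed deploy has recovered yet.
        "mttr": sum(recoveries, timedelta()) / len(recoveries) if recoveries else None,
    }


t0 = datetime(2024, 1, 1, 9, 0)
history = [
    Deploy(t0, t0 + timedelta(hours=10)),
    Deploy(t0, t0 + timedelta(hours=20), failed=True, restored=t0 + timedelta(hours=21)),
    Deploy(t0, t0 + timedelta(hours=30)),
    Deploy(t0, t0 + timedelta(hours=40), failed=True, restored=t0 + timedelta(hours=43)),
]
print(delivery_metrics(history))
```

Computing these figures for a baseline period before AI tooling is introduced makes later before-and-after comparisons meaningful.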

Related questions

- What AI tools most effectively automate software testing and QA?

- How to integrate AI-generated code into an existing CI/CD pipeline?

- What governance and security controls are needed for AI-assisted development?

- How to measure the ROI of AI in software delivery?

- When should teams avoid using AI for software development tasks?

Conclusion

AI reduces time to market when it complements disciplined engineering practices: focus on scope, automate reliably, shift quality left, and iterate with telemetry. Use the FAST-to-Market framework and checklist to introduce AI incrementally, measure results, and avoid common pitfalls like over-automation or unchecked AI outputs.

How can teams reduce time to market with AI?

Teams reduce time to market with AI by applying AI to specific, repeatable tasks (code scaffolding, test generation, and review), automating the release pipeline, and maintaining strict review and telemetry processes to control risk.

What are the risks of relying on AI for production code?

Risks include introducing subtle bugs, license or data leakage issues, and hidden technical debt. Mitigate these with code review, static analysis, license scanning, and conservative use of AI for low-risk tasks.

Which metrics show an AI-driven workflow is effective?

Primary metrics: reduced lead time for changes, stable or improved change failure rate, decreased MTTR, and measurable reductions in developer time on repetitive tasks.

Can AI replace QA and manual testing?

No. AI accelerates test creation and helps find edge cases, but human testers remain necessary for exploratory testing, usability, and complex acceptance criteria.