Practical Review: ChatGPT Prompt Toolkit for Effective AI Workflows

A ChatGPT prompt toolkit can change how non-experts and power users alike interact with large language models. This review evaluates usability and typical results, and explains how to adopt a prompt engineering checklist that produces reliable outputs in real work scenarios.

- What this covers: practical strengths, weaknesses, a named PACT prompt framework, and an actionable prompt engineering checklist

- Outcome: guidance for integrating a ChatGPT prompt toolkit into workflows with examples and 3–5 tactical tips

ChatGPT prompt toolkit: what this review covers

This review focuses on features that matter to practical AI users: clarity of instructions, support for structured outputs, reusable templates, and measurable quality controls such as examples-per-prompt and testing harnesses. The goal is to show which elements of a toolkit reduce ambiguous results and to present a concrete prompt engineering checklist for consistent outputs.

Why a toolkit matters for effective ChatGPT prompts

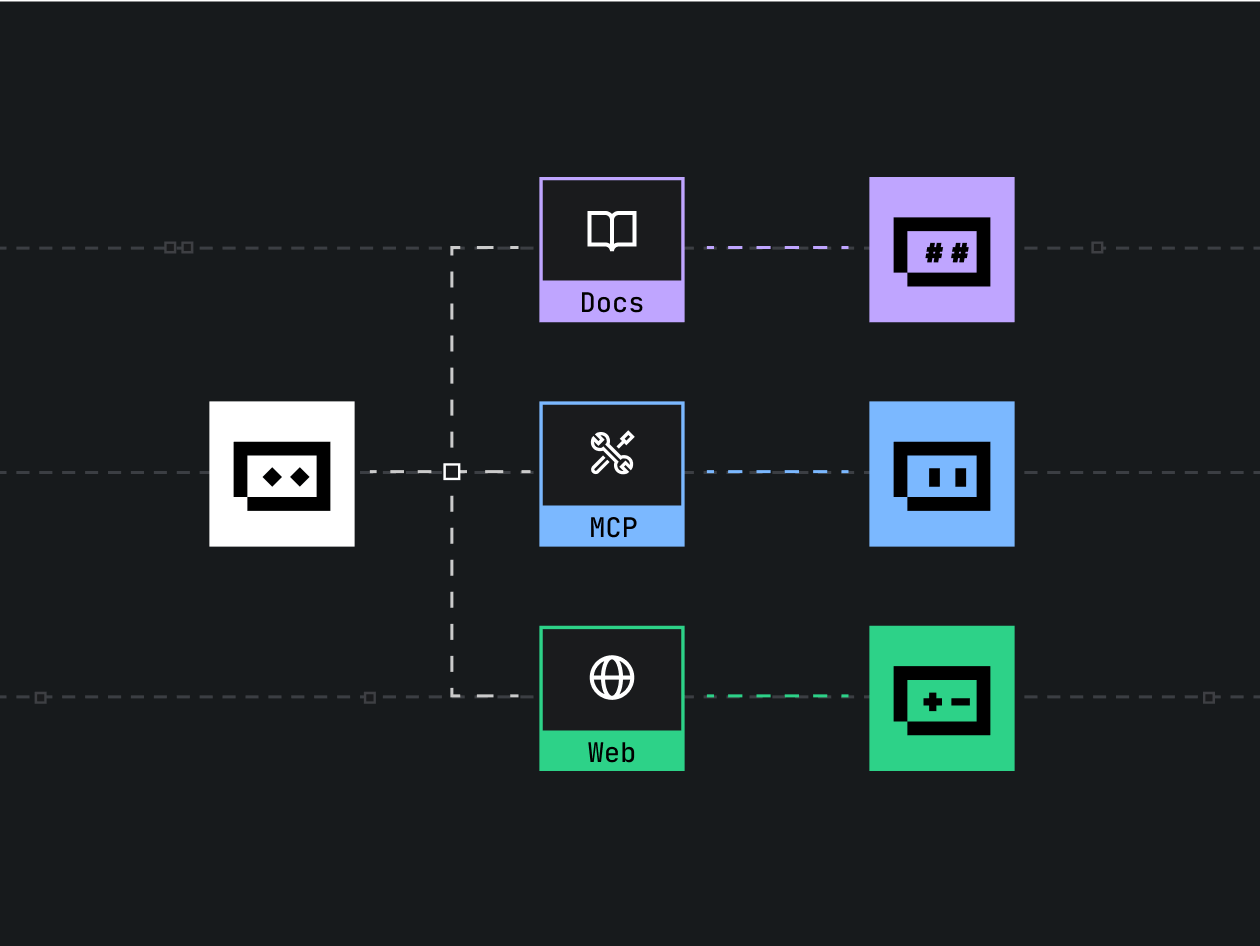

A ChatGPT prompt toolkit is more than a convenience: it provides repeatable patterns that cut down iteration time. Related concepts include prompt engineering, system messages, temperature and sampling controls, few-shot examples, and chain-of-thought techniques. These concepts are central to assessing how a toolkit improves output accuracy and reduces hallucination risk.

The PACT prompting framework

PACT is a simple, repeatable model for prompt design:

- Purpose — define the exact goal and success criteria

- Audience — set tone, technical level, and constraints

- Constraints — format, length, and forbidden content

- Tooling — include examples, system messages, and evaluation rules
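To make the four PACT fields concrete, here is a minimal Python sketch that stores them in a dataclass and renders them as a list of role/content chat messages, with few-shot examples placed as earlier user/assistant turns. The class name `PactPrompt`, its field names, and the way fields are laid out in the system message are illustrative assumptions, not part of any particular toolkit; the dict shape mirrors the message format used by common chat-completion APIs.

```python
from dataclasses import dataclass, field


@dataclass
class PactPrompt:
    """Hypothetical container for the four PACT fields."""

    purpose: str            # Purpose: the exact goal and success criteria
    audience: str           # Audience: tone, technical level, reader constraints
    constraints: list[str]  # Constraints: format, length, forbidden content
    # Tooling: few-shot (input, ideal output) pairs plus an evaluation rule
    examples: list[tuple[str, str]] = field(default_factory=list)
    evaluation: str = ""

    def to_messages(self, user_input: str) -> list[dict[str, str]]:
        """Render the PACT fields as a role/content chat message list."""
        system_lines = [
            f"Purpose: {self.purpose}",
            f"Audience: {self.audience}",
            "Constraints:",
            *(f"- {c}" for c in self.constraints),
        ]
        if self.evaluation:
            system_lines.append(f"Evaluation: {self.evaluation}")
        messages = [{"role": "system", "content": "\n".join(system_lines)}]
        # Few-shot examples become earlier user/assistant turns.
        for example_input, example_output in self.examples:
            messages.append({"role": "user", "content": example_input})
            messages.append({"role": "assistant", "content": example_output})
        messages.append({"role": "user", "content": user_input})
        return messages


# Example usage with placeholder text:
support_prompt = PactPrompt(
    purpose="Resolve a password-reset ticket in three sentences.",
    audience="Non-technical customers; professional but warm.",
    constraints=["Output JSON with subject, body, tags", "Never ask for the user's password"],
    evaluation="Reply must parse as JSON and contain all three fields.",
)
messages = support_prompt.to_messages("I can't log in after changing my email.")
```

The resulting list can then be passed to whichever chat API the team uses; keeping PACT fields as data rather than a hand-edited string makes templates easier to review and version.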

PACT Prompting Checklist

Use this checklist before running any prompt:

- Specify the Purpose in one sentence

- Set Audience and Tone (technical, lay, marketing, etc.)

- List required Constraints: output format (JSON, table), max tokens, and forbidden topics

- Add 1–3 few-shot examples demonstrating success and one negative example if relevant

- Define an automated evaluation test or human review rule
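The checklist can also be enforced mechanically before a prompt ships. The sketch below assumes each prompt spec is a plain dict with hypothetical keys (`purpose`, `audience`, `output_format`, `max_tokens`, `forbidden`, `good_examples`, `negative_examples`, `evaluation`) and returns the checklist items it fails. The one-sentence test for Purpose is a crude heuristic, and the negative example is only required when the caller asks for it, matching "if relevant" above.

```python
import re


def check_pact_spec(spec: dict, require_negative: bool = False) -> list[str]:
    """Return the checklist items a prompt spec fails; an empty list means it passes."""
    problems: list[str] = []
    purpose = spec.get("purpose", "").strip()
    if not purpose:
        problems.append("Purpose is missing")
    # Crude heuristic: sentence-ending punctuation followed by whitespace means 2+ sentences.
    elif len(re.split(r"[.!?]\s+", purpose)) > 1:
        problems.append("Purpose should be one sentence")
    if not spec.get("audience"):
        problems.append("Audience and tone are not set")
    if not spec.get("output_format"):
        problems.append("Output format constraint is missing")
    max_tokens = spec.get("max_tokens")
    if not isinstance(max_tokens, int) or max_tokens <= 0:
        problems.append("max_tokens must be a positive integer")
    if "forbidden" not in spec:
        problems.append("Forbidden topics are not listed")
    n_good = len(spec.get("good_examples", []))
    if not 1 <= n_good <= 3:
        problems.append(f"Expected 1-3 few-shot examples, found {n_good}")
    if require_negative and not spec.get("negative_examples"):
        problems.append("No negative example provided")
    if not spec.get("evaluation"):
        problems.append("No evaluation test or review rule defined")
    return problems
```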

Real-world example: customer support canned responses

Scenario: A small SaaS company needs consistent, branded email replies for common support questions. Using the PACT framework, create a template that includes the problem category, required data fields, tone, and a JSON output for integration into the helpdesk.

Example prompt template (conceptual):

System: You are a support assistant for Acme SaaS. Purpose: Write a 3-sentence resolution and a friendly closing. Tone: professional but warm. Output: JSON with fields {subject, body, tags}. Example: [provide 1 example].

Result: The toolkit produces structured JSON that can be parsed by automation, reducing manual edits and maintaining brand voice.
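Because the template asks for JSON with `subject`, `body`, and `tags`, the helpdesk integration should reject malformed replies before they reach a customer. A minimal parser under that assumption might look like this; `SupportReply` and `parse_support_reply` are hypothetical names, and the checks cover structure only, not tone or the three-sentence length.

```python
import json
from dataclasses import dataclass


@dataclass
class SupportReply:
    subject: str
    body: str
    tags: list[str]


def parse_support_reply(raw: str) -> SupportReply:
    """Parse the model's JSON reply; raise ValueError if fields are missing or mistyped."""
    data = json.loads(raw)  # json.JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("Expected a JSON object")
    for key in ("subject", "body"):
        if not isinstance(data.get(key), str) or not data[key].strip():
            raise ValueError(f"Field '{key}' must be a non-empty string")
    tags = data.get("tags")
    if not isinstance(tags, list) or not all(isinstance(t, str) for t in tags):
        raise ValueError("Field 'tags' must be a list of strings")
    return SupportReply(subject=data["subject"], body=data["body"], tags=tags)
```

Replies that raise `ValueError` can be routed to a human agent or regenerated instead of being sent.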

Practical tips for using a prompt toolkit and creating effective ChatGPT prompts

- Start with a strong system message: Set the model's behavior early by defining its role, constraints, and evaluation criteria in the system prompt.

- Provide a small set of few-shot examples: 1–3 good examples plus one counterexample help the model distinguish desired outputs from undesired ones.

- Use structured outputs where possible: Request JSON or CSV so downstream code can parse results mechanically.

- Instrument and test: Track accuracy on a small test set and iterate on prompts based on measurable failure modes.
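A small test harness is enough to instrument a prompt. In this sketch, `generate` stands in for whatever function sends the prompt to the model (an offline stub is used here so the example runs without an API), and each test case pairs an input with a hypothetical pass/fail check. Failing inputs are returned so they can be grouped into failure modes.

```python
from typing import Callable


def evaluate_prompt(
    generate: Callable[[str], str],
    test_cases: list[tuple[str, Callable[[str], bool]]],
) -> tuple[float, list[str]]:
    """Run every test input through `generate` and apply its check.

    Returns the pass rate and the inputs whose outputs failed.
    """
    failures = []
    for test_input, check in test_cases:
        output = generate(test_input)
        if not check(output):
            failures.append(test_input)
    if not test_cases:
        return 0.0, failures
    return (len(test_cases) - len(failures)) / len(test_cases), failures


# Offline stub standing in for a real model call (hypothetical).
def fake_generate(text: str) -> str:
    return text.upper()


cases = [
    ("refund", lambda out: out == "REFUND"),
    ("login", lambda out: "LOGIN" in out),
    ("billing", lambda out: out.startswith("{")),  # expects JSON, so the stub fails it
]
rate, failed = evaluate_prompt(fake_generate, cases)  # rate is 2/3, failed is ["billing"]
```

Re-running the same cases after each prompt change shows whether an edit fixed one failure mode without breaking another.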

Common mistakes and trade-offs

Common mistakes

- Vague goals: prompts that lack clear success criteria produce inconsistent outputs.

- No negative examples: omitting counterexamples can leave the model guessing about what content is forbidden.

- Over-reliance on long instructions: very long prompts can introduce noise; use concise, layered instructions instead.

Trade-offs to consider

More constraints increase reliability but reduce creative variation. Few-shot examples improve precision but increase token cost. Structured outputs (like strict JSON) simplify automation but require precise model compliance, which may need post-run validation. Balance these trade-offs based on safety, cost, and required fidelity.
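Post-run validation is often paired with a bounded retry. The sketch assumes a hypothetical `call_model` callable that returns raw text and a `validate` function that returns a parsed value or raises `ValueError`; `json.loads` fits that contract because its decode error subclasses `ValueError`. Each retry spends more tokens, so the attempt limit doubles as a cost control.

```python
import json
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def generate_validated(
    call_model: Callable[[], str],
    validate: Callable[[str], T],
    max_attempts: int = 3,
) -> T:
    """Call the model until `validate` accepts the output or attempts run out.

    `validate` must return the parsed value or raise ValueError.
    """
    last_error: Optional[ValueError] = None
    for _ in range(max_attempts):
        raw = call_model()
        try:
            return validate(raw)
        except ValueError as err:
            last_error = err  # remember the latest failure for the final error message
    raise RuntimeError(f"No valid output after {max_attempts} attempts: {last_error}")


# Stub model that returns malformed JSON first, then valid JSON (hypothetical).
replies = iter(["not json", '{"subject": "Re: reset", "tags": []}'])
result = generate_validated(lambda: next(replies), json.loads)
```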

Evaluation and integration: measuring toolkit value

Assess a toolkit with a small A/B test: run 50–100 prompts with and without toolkit templates and compare metrics such as correct-field completion rate, average edit time, and user satisfaction. For best-practice prompting guidance, consult the platform documentation and model guidelines, such as OpenAI's official prompting guide.
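The A/B comparison can be scored with a few lines of Python. This sketch assumes each logged result records how many fields were expected, how many came back correct, and how long a human spent editing (hypothetical keys `fields_expected`, `fields_correct`, `edit_seconds`); user satisfaction would need a separate survey metric. The sample records are placeholders for illustration, not measured results.

```python
from statistics import mean


def summarize_run(results: list[dict]) -> dict[str, float]:
    """Summarize one arm of an A/B test from per-prompt result records."""
    expected = sum(r["fields_expected"] for r in results)
    correct = sum(r["fields_correct"] for r in results)
    return {
        "field_completion_rate": correct / expected,
        "mean_edit_seconds": mean(r["edit_seconds"] for r in results),
    }


def compare_arms(baseline: list[dict], toolkit: list[dict]) -> dict[str, float]:
    """Return the toolkit-minus-baseline difference for each metric."""
    before, after = summarize_run(baseline), summarize_run(toolkit)
    return {metric: after[metric] - before[metric] for metric in before}


# Placeholder records for illustration only (not measured data).
baseline = [
    {"fields_expected": 3, "fields_correct": 2, "edit_seconds": 90},
    {"fields_expected": 3, "fields_correct": 1, "edit_seconds": 120},
]
with_toolkit = [
    {"fields_expected": 3, "fields_correct": 3, "edit_seconds": 30},
    {"fields_expected": 3, "fields_correct": 3, "edit_seconds": 45},
]
delta = compare_arms(baseline, with_toolkit)
```

With only 50–100 prompts per arm, small differences may be noise, so treat the deltas as a signal to investigate rather than a final verdict.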

Related questions

Follow-up topics worth exploring:

- How to structure few-shot examples for consistent outputs

- When to use system messages vs. inline instructions

- How to design automated tests for prompt outputs

- Best formats for structured outputs (JSON, CSV, Markdown tables)

- How to reduce hallucinations with constraints and checks

Implementation checklist before deploying a toolkit

- Define clear success metrics and a small validation dataset

- Create templates using the PACT framework

- Include at least one negative example per template

- Automate parsing and simple validation rules (schema checks)

- Set monitoring for drift and periodic human review
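For the monitoring step, one lightweight option is to feed each schema-check result into a rolling pass rate and flag when it drops. The class name, window size, and threshold below are illustrative assumptions; this tracks a falling validation pass rate and is not a statistical drift test.

```python
from collections import deque


class PassRateMonitor:
    """Rolling pass-rate tracker for validation results (hypothetical helper)."""

    def __init__(self, window: int = 50, alert_below: float = 0.9):
        self.results = deque(maxlen=window)  # oldest result drops off when full
        self.alert_below = alert_below

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def pass_rate(self) -> float:
        # Treat an empty window as healthy rather than dividing by zero.
        return sum(self.results) / len(self.results) if self.results else 1.0

    def drifting(self) -> bool:
        """True once the window is full and its pass rate is below the threshold."""
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.pass_rate() < self.alert_below


monitor = PassRateMonitor(window=10, alert_below=0.8)
for passed in [True] * 7 + [False] * 3:  # e.g. results of schema checks
    monitor.record(passed)
```

A flagged monitor is a cue to pull recent failures into the periodic human review rather than an automatic rollback.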

Final verdict: who benefits most

Teams that need reproducible, automatable outputs — such as support, content operations, and data extraction — benefit most from a ChatGPT prompt toolkit. Individual hobbyists may find templates useful but should expect an upfront setup cost. Overall, a toolkit raises baseline output quality when paired with a clear checklist and lightweight testing.

FAQ: Is a ChatGPT prompt toolkit worth adopting?

A toolkit is worth adopting when repeated tasks require consistent structure, fewer edits, or automation. Start small with one template and measure time saved.

FAQ: How do effective ChatGPT prompts differ from casual prompts?

Effective prompts include a clear purpose, target audience, constraints, and examples. Casual prompts often lack structure, leading to variable results.

FAQ: Can a prompt engineering checklist reduce hallucinations?

Yes, to a degree. A checklist that enforces constraints, verification steps, and output validation can reduce hallucinations by narrowing the range of acceptable answers and adding checks.

FAQ: How do you test a ChatGPT prompt toolkit before production?

Run an A/B test on a validation set, measure field completeness and edit time, and include human review for edge cases before full deployment.

FAQ: Where can you learn official best practices for prompting?

Official platform guides and model documentation are the primary sources for best practices; visit the platform's prompt guidance for details and examples.