How AI Content Creation Will Transform Online Education: Practical Guide & Checklist

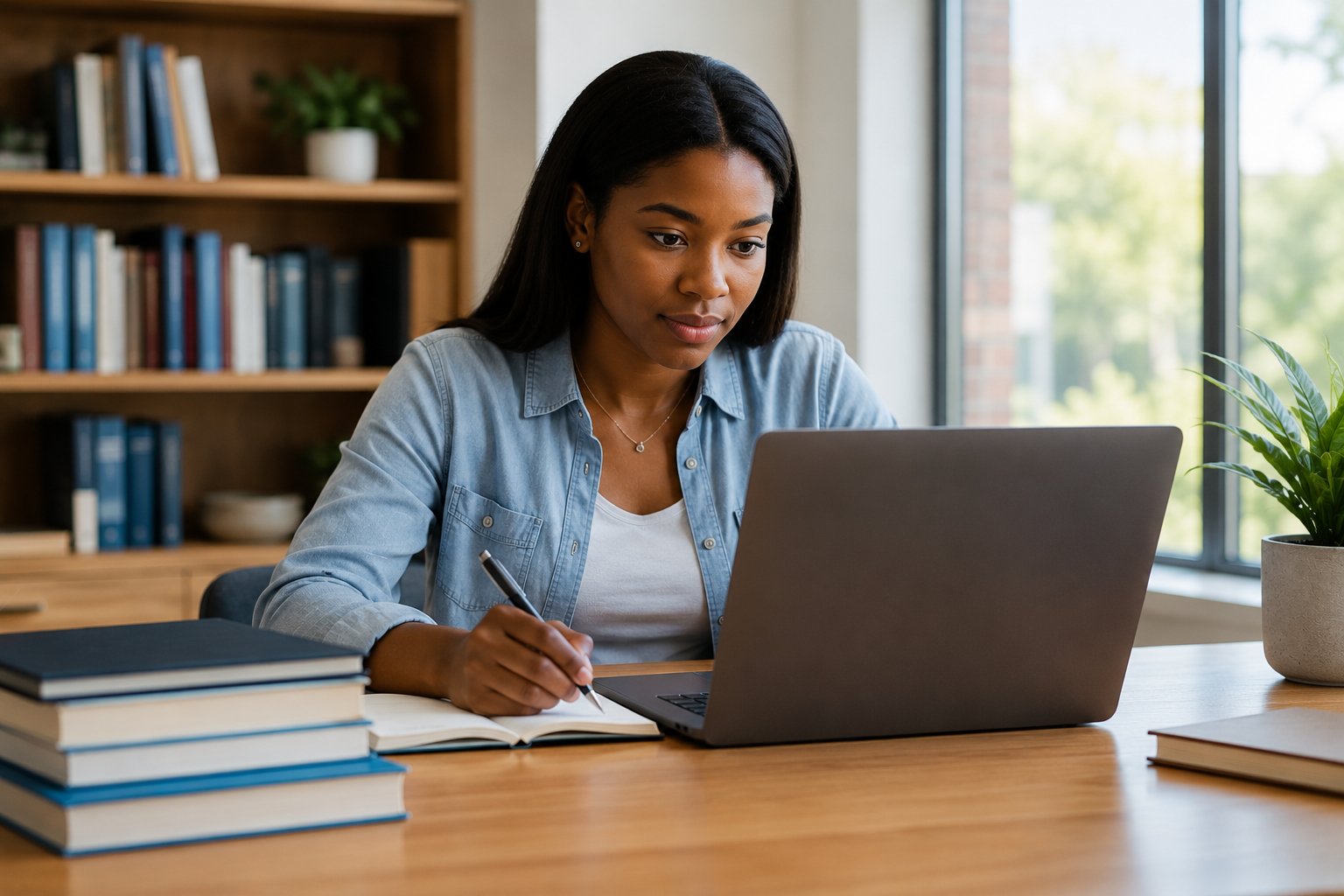

AI content creation in online education is reshaping how courses are designed, delivered, and assessed. This guide explains practical trends, a readiness checklist, implementation steps, and pitfalls to avoid for instructional designers, faculty, and administrators.

- Primary focus: practical adoption of AI-generated learning materials, adaptive content, and assessment automation.

- Includes the EDU-AI Readiness Checklist, a real-world scenario, four actionable tips, and common mistakes to avoid.

- References industry standards and ethical considerations to support sustainable deployment.

AI content creation in online education: trends, definitions, and practical value

AI content creation in online education refers to using generative AI, large language models (LLMs), and automation tools to produce lectures, quizzes, summaries, interactive exercises, and multimedia assets. Related terms include AI-generated course materials, adaptive learning content creation, personalization engines, learning management systems (LMS), xAPI, and LTI integrations. Key drivers are scalability, faster iteration, personalized pathways, and improved accessibility when paired with human oversight.

How it works: components and standards

Core components

- Content generators (text, audio, video) based on LLMs and multimodal models.

- Adaptive engines that select or modify content based on learner data.

- Assessment generators that create formative and summative items with metadata for difficulty and competency alignment.

- Integration middleware that connects AI outputs with LMS, student information systems, and analytics platforms.

Standards and governance

Adopt interoperability standards so AI outputs move cleanly between systems: LTI for launching and integrating external tools in the LMS, xAPI for recording learning activity, and SCORM for packaging and tracking course content. Follow guidance from standards bodies and ethical frameworks. Organizations such as UNESCO and IEEE publish recommendations for responsible AI deployment in education; for example, UNESCO's Recommendation on the Ethics of Artificial Intelligence sets out principles such as fairness, transparency, and privacy that can anchor institutional policies for automated content.
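
To make the role of xAPI concrete, the sketch below builds one learning record as a plain dictionary. The actor/verb/object/result layout and the "completed" verb IRI follow the xAPI statement format; the function name, the learner address, and the activity URL are hypothetical placeholders, and sending the statement to a Learning Record Store is deliberately left out.

```python
import json
import uuid
from datetime import datetime, timezone


def build_xapi_statement(learner_email: str, activity_id: str,
                         activity_name: str, scaled_score: float) -> dict:
    """Build a minimal xAPI 'completed' statement as a plain dict."""
    # xAPI defines a scaled score as a decimal between -1 and 1 inclusive.
    if not -1.0 <= scaled_score <= 1.0:
        raise ValueError("scaled_score must be between -1 and 1")
    return {
        "id": str(uuid.uuid4()),
        "actor": {"objectType": "Agent", "mbox": f"mailto:{learner_email}"},
        "verb": {
            "id": "http://adlnet.gov/expapi/verbs/completed",
            "display": {"en-US": "completed"},
        },
        "object": {
            "objectType": "Activity",
            "id": activity_id,
            "definition": {"name": {"en-US": activity_name}},
        },
        "result": {"completion": True, "score": {"scaled": scaled_score}},
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


# Hypothetical learner and activity, for illustration only.
statement = build_xapi_statement(
    "learner@example.edu",
    "https://example.edu/activities/week-1-quiz",
    "Week 1 practice quiz",
    0.8,
)
print(json.dumps(statement, indent=2))
```

Because the statement is ordinary JSON, the same record can feed analytics dashboards and adaptive engines without tying them to one LMS vendor.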

EDU-AI Readiness Checklist

Use the EDU-AI Readiness Checklist to evaluate projects before production rollout. The checklist is a compact framework for decision-makers:

- Objectives: Define learning outcomes that AI-generated materials must support.

- Data & Privacy: Verify consent, anonymization, and data retention policies.

- Quality Gates: Establish human review, accuracy thresholds, and revision cycles.

- Interoperability: Ensure outputs map to LMS taxonomy and use xAPI/LTI where possible.

- Accessibility & Equity: Validate WCAG compliance and bias mitigation processes.

- Monitoring: Set metrics for learning impact, engagement, and error rates.

- Training: Document faculty workflows and provide governance for updates.
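
One way to keep the checklist from becoming a one-time document is to track it as data. The sketch below is a minimal illustration, assuming each of the seven areas is simply marked done or open; the class and method names are hypothetical, and a real review would likely attach evidence and owners to each area.

```python
from dataclasses import dataclass, field

# The seven areas of the EDU-AI Readiness Checklist, in the order listed above.
CHECKLIST_AREAS = (
    "Objectives",
    "Data & Privacy",
    "Quality Gates",
    "Interoperability",
    "Accessibility & Equity",
    "Monitoring",
    "Training",
)


@dataclass
class ReadinessReview:
    project: str
    # Maps each checklist area to True (satisfied) or False (still open).
    status: dict = field(default_factory=dict)

    def mark(self, area: str, done: bool) -> None:
        if area not in CHECKLIST_AREAS:
            raise KeyError(f"Unknown checklist area: {area}")
        self.status[area] = done

    def open_items(self) -> list:
        # Areas never marked count as open.
        return [a for a in CHECKLIST_AREAS if not self.status.get(a, False)]

    def ready(self) -> bool:
        return not self.open_items()


review = ReadinessReview("Research methods pilot")
for area in CHECKLIST_AREAS[:-1]:
    review.mark(area, True)
print(review.open_items())  # the areas that still block rollout
print(review.ready())
```

Treating unmarked areas as open is a deliberate choice here: a project cannot pass by omission.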

Practical implementation steps

1. Pilot small, measure precisely

Start with a single course module. Generate draft lectures, summaries, and formative quizzes, then run an A/B test against existing materials. Track learning outcomes, completion rates, and student feedback.
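
To judge whether a difference between the two arms of the A/B test is more than noise, a pilot team might compare pass rates with a two-proportion z-test. The sketch below uses only the standard library; the function name is hypothetical and the counts in the usage lines are invented for illustration, not results from any study.

```python
import math


def two_proportion_z_test(pass_a: int, n_a: int, pass_b: int, n_b: int):
    """Compare pass rates of control (a) and AI-assisted (b) groups.

    Returns (difference in pass rate, z statistic, two-sided p-value).
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("Both groups need at least one learner")
    p_a = pass_a / n_a
    p_b = pass_b / n_b
    # Pooled pass rate under the null hypothesis of no difference.
    pooled = (pass_a + pass_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Everyone passed or everyone failed in both groups.
        return p_b - p_a, 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value


# Illustrative counts only: 60 of 100 pass with existing materials,
# 75 of 100 pass with AI-drafted, human-reviewed materials.
diff, z, p = two_proportion_z_test(60, 100, 75, 100)
print(f"difference={diff:.2f}, z={z:.2f}, p={p:.3f}")
```

A z-test of this kind assumes reasonably large, independent groups; for a small pilot cohort, an exact test or simply reporting the raw counts alongside student feedback may be more honest.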

2. Human-in-the-loop workflows

Design review stages where instructional designers validate factual accuracy, alignment to outcomes, and tone. Use AI for draft generation and human experts for curation and contextualization.
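
The review stages described above can be modeled as a small state machine so that nothing reaches learners without passing through a human. This is a minimal sketch, assuming four stages and a single reviewer per transition; the stage names and the `Artifact` class are hypothetical, not part of any LMS API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"          # AI-generated, not yet checked
    IN_REVIEW = "in_review"  # with an instructional designer or SME
    APPROVED = "approved"    # validated for accuracy, alignment, and tone
    REJECTED = "rejected"    # sent back for regeneration or rewriting


# Allowed transitions; there is no path from DRAFT straight to APPROVED.
ALLOWED = {
    Stage.DRAFT: {Stage.IN_REVIEW},
    Stage.IN_REVIEW: {Stage.APPROVED, Stage.REJECTED},
    Stage.REJECTED: {Stage.DRAFT},
    Stage.APPROVED: set(),
}


@dataclass
class Artifact:
    title: str
    stage: Stage = Stage.DRAFT
    history: list = field(default_factory=list)

    def move_to(self, new_stage: Stage, reviewer: str, note: str = "") -> None:
        if new_stage not in ALLOWED[self.stage]:
            raise ValueError(
                f"Cannot move from {self.stage.value} to {new_stage.value}"
            )
        # Keep an audit trail of who moved the artifact and why.
        self.history.append((self.stage, new_stage, reviewer, note))
        self.stage = new_stage

    def publishable(self) -> bool:
        return self.stage is Stage.APPROVED


summary = Artifact("Week 3 reading summary")
summary.move_to(Stage.IN_REVIEW, "designer", "check alignment to outcomes")
summary.move_to(Stage.APPROVED, "subject-matter expert", "citations added")
print(summary.publishable())
```

Encoding the allowed transitions in one table makes the policy easy to audit and to tighten later, for example by adding a second approval stage for summative assessments.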

3. Integration and metadata

Tag AI outputs with competency metadata so adaptive engines and analytics can measure mastery and recommend next steps. Map content to taxonomies used by the LMS or curriculum planning tools.
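
As an illustration of why tagging matters, the sketch below attaches a competency and difficulty label to each quiz item and then rolls learner responses up into a per-competency mastery estimate. The field names, the competency labels, and the 0.8 mastery threshold are assumptions made for the example, not values prescribed by any standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class QuizItem:
    item_id: str
    competency: str  # e.g. a code from the LMS or curriculum taxonomy
    difficulty: str  # "easy", "medium", or "hard"


def mastery_by_competency(items, responses, threshold=0.8):
    """Summarize responses (item_id -> answered correctly?) per competency."""
    totals = defaultdict(lambda: [0, 0])  # competency -> [correct, attempted]
    for item in items:
        if item.item_id in responses:
            totals[item.competency][1] += 1
            totals[item.competency][0] += int(responses[item.item_id])
    return {
        competency: {
            "score": correct / attempted,
            "mastered": correct / attempted >= threshold,
        }
        for competency, (correct, attempted) in totals.items()
    }


# Hypothetical items and one learner's responses.
items = [
    QuizItem("q1", "sampling", "easy"),
    QuizItem("q2", "sampling", "medium"),
    QuizItem("q3", "validity", "hard"),
]
report = mastery_by_competency(items, {"q1": True, "q2": True, "q3": False})
print(report)
```

Without the `competency` tag, the same responses would only yield a single overall score, which gives an adaptive engine nothing to sequence on.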

Real-world example: a university module scenario

Scenario: An online university automated week-by-week reading summaries and practice quizzes for a 12-week research methods course. The team used the EDU-AI Readiness Checklist to define objectives and data boundaries. AI-generated summaries were routed through a human review step in which subject-matter experts corrected technical nuances and added citations. Quizzes carried item metadata so the LMS could assign remediation pathways. After the pilot, quiz pass rates improved slightly and instructor preparation time dropped by half, while students reported clearer weekly expectations.

Practical tips for course designers and admins

- Embed human review checkpoints for every AI-generated artifact before publishing.

- Keep a changelog and version control for generated content to support audits and updates.

- Use small, measurable pilots with control groups to isolate the effect of AI-generated content on outcomes.

- Prioritize accessibility: auto-generate captions and structured transcripts to improve inclusion.
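
The changelog tip above can be sketched with a content hash per version, so an audit can confirm exactly which text was published and when. This is a minimal in-memory illustration; the function names and entry fields are hypothetical, and a production setup would more likely rely on an existing version control system or the LMS's own revision history.

```python
import hashlib
from datetime import datetime, timezone


def content_digest(text: str) -> str:
    """SHA-256 fingerprint of the exact text that was reviewed."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def record_version(changelog: list, artifact_id: str, text: str,
                   editor: str, note: str):
    """Append a changelog entry unless the content is unchanged."""
    digest = content_digest(text)
    previous = [e for e in changelog if e["artifact_id"] == artifact_id]
    if previous and previous[-1]["sha256"] == digest:
        return None  # nothing changed since the last recorded version
    entry = {
        "artifact_id": artifact_id,
        "version": len(previous) + 1,
        "sha256": digest,
        "editor": editor,
        "note": note,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    changelog.append(entry)
    return entry


changelog = []
record_version(changelog, "week-1-summary", "Draft text.", "ai-draft", "initial generation")
record_version(changelog, "week-1-summary", "Reviewed text.", "sme", "corrected terminology")
for entry in changelog:
    print(entry["version"], entry["editor"], entry["note"])
```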

Trade-offs and common mistakes

Trade-offs to consider

- Speed vs. accuracy: Faster generation saves time but increases the need for verification layers.

- Personalization vs. privacy: More tailored content requires more learner data and stricter governance.

- Automation vs. pedagogy: Over-automation can degrade pedagogical coherence if alignment to objectives is weak.

Common mistakes

- Skipping human review or assuming outputs are 'ready' without subject-matter validation.

- Not tagging or mapping competencies, which prevents meaningful analytics and adaptive sequencing.

- Ignoring accessibility and equity checks, which can reduce reach and disproportionately affect learners with disabilities.

Core cluster questions

- How can instructional designers validate AI-generated content for accuracy and alignment?

- What data privacy practices are essential when using learner data for adaptive AI?

- Which interoperability standards ensure AI outputs work with major LMS platforms?

- How should assessment items generated by AI be reviewed and calibrated?

- What metrics measure the learning impact of AI-generated course materials?

Related technologies and terms

Terms to be familiar with: generative AI, large language models (LLMs), adaptive learning engines, learning analytics, xAPI, SCORM, LTI, accessibility (WCAG), assessment calibration, bias mitigation, metadata-driven content.

FAQ

Will AI content creation in online education replace instructors?

No. AI augments instructors by automating repetitive tasks, creating drafts, and enabling personalization. Instructors remain essential for pedagogical design, critical feedback, and contextual judgment.

How can institutions ensure academic integrity when using AI-generated assessments?

Use item pools, randomized parameters, proctored or authenticated assessment modes, and integrity analytics. Combine AI generation with human review and item calibration to maintain standards.
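
As a rough illustration of item pools with randomization, the sketch below draws a different but reproducible set of items for each learner by seeding the random generator from the assessment and learner identifiers. The function name and the identifiers are hypothetical; the pool is assumed to contain only items that have already passed human review and calibration.

```python
import hashlib
import random


def select_items(pool: list, learner_id: str, assessment_id: str, k: int) -> list:
    """Pick k items for one learner, reproducibly for audits and regrades."""
    # Derive a stable integer seed from the assessment and learner identifiers.
    digest = hashlib.sha256(f"{assessment_id}:{learner_id}".encode("utf-8")).hexdigest()
    rng = random.Random(int(digest, 16))
    # sample() draws without replacement and raises ValueError if k > len(pool).
    return rng.sample(pool, k)


# Hypothetical pool of reviewed item identifiers.
pool = [f"item-{n:02d}" for n in range(1, 21)]
form_a = select_items(pool, "learner-001", "quiz-week-4", 5)
form_b = select_items(pool, "learner-002", "quiz-week-4", 5)
print(form_a)
print(form_b)
```

Seeding from the identifiers means the same learner sees the same form if the page reloads, and staff can regenerate any learner's form later without storing it separately.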

What are the best practices for adopting AI-generated course materials?

Run targeted pilots, apply the EDU-AI Readiness Checklist, require human-in-the-loop validation, track learning metrics, and ensure metadata and accessibility compliance.

How does AI-generated content affect accessibility and inclusion?

AI can improve accessibility by auto-generating captions, alternative text, and simplified summaries, but outputs must be checked for clarity and bias. Always validate against WCAG standards and consult disability services.

Can institutions use AI to create adaptive learning paths and AI-generated course materials?

Yes. Combining adaptive learning content creation with competency tagging and LMS integration enables personalized pathways, but it requires careful data governance, interoperability planning, and ongoing evaluation.