AI App Development Tools: Practical Platforms, Frameworks & Deployment Guide


Developers and product teams increasingly rely on AI app development tools to design, train, and deploy intelligent applications. This article explains core components, compares common frameworks and runtimes, and outlines practical steps for production-ready AI apps.

- AI app development tools include SDKs, ML frameworks, model hosting, and MLOps platforms.

- Key choices affect model training, inference latency, scalability, and operational monitoring.

- Select tools based on data needs, target devices (cloud vs edge), and compliance requirements.

AI app development tools: core platforms and frameworks

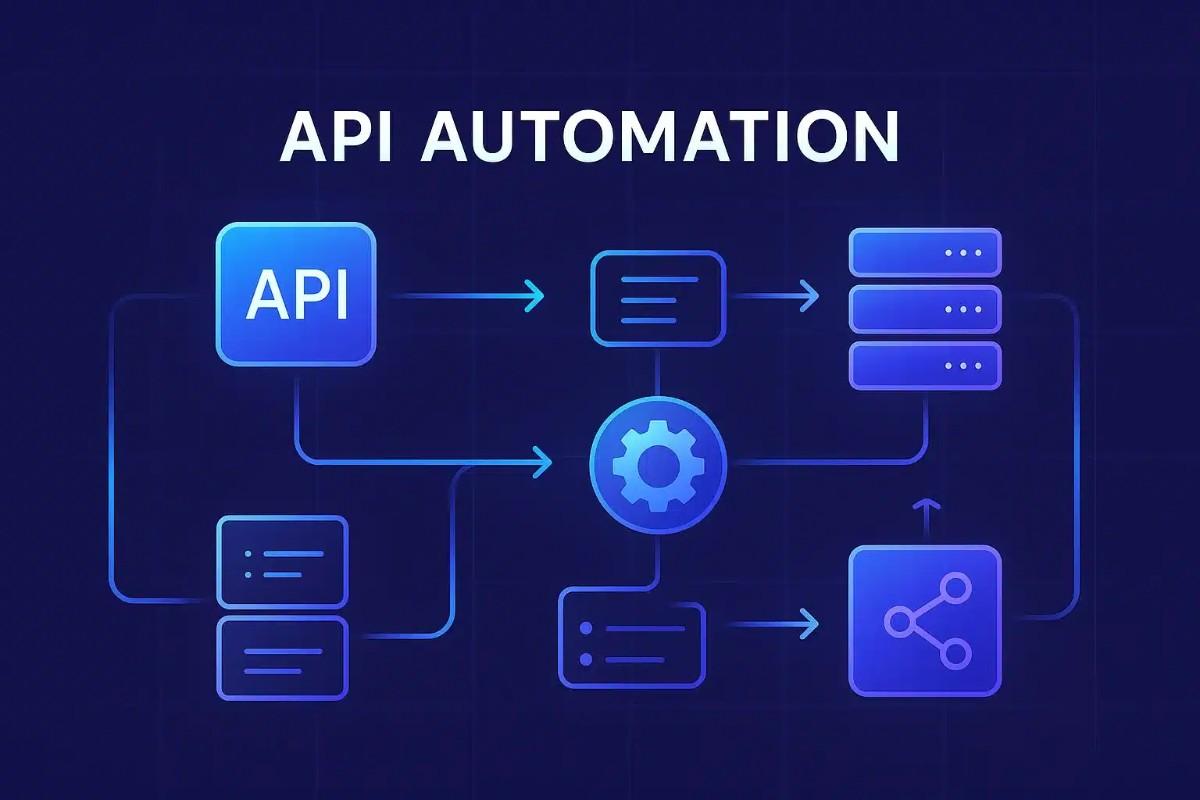

AI app development tools span a range of capabilities: data preprocessing utilities, machine learning frameworks for model creation, libraries for model export and interoperability, inference runtimes, and orchestration systems for deployment. Typical components include model training frameworks (for example, deep learning and classical ML libraries), model format converters, inference engines optimized for CPU/GPU/accelerator hardware, and APIs or SDKs that enable integration into mobile, web, or backend applications.

Key components of an AI app development toolchain

Data preparation and feature pipelines

Tools for data ingestion, cleaning, labeling, and feature engineering are foundational. Data pipelines can use ETL frameworks, data versioning systems, and labeling platforms. Validating data before training catches schema errors, missing values, and out-of-range inputs early, which helps limit bias and maintain model quality over time.
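
As a minimal sketch of the kind of check a data validation step performs, the following plain-Python example tests records against per-field rules. The `FieldRule` class, the `validate_record` function, and the field names are hypothetical and chosen for illustration; a real pipeline would more likely rely on a dedicated validation library.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FieldRule:
    """Hypothetical rule describing one expected field in a record."""
    name: str
    expected_type: type
    min_value: Optional[float] = None
    max_value: Optional[float] = None


def validate_record(record: dict, rules: List[FieldRule]) -> List[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    for rule in rules:
        value = record.get(rule.name)
        if value is None:
            problems.append(f"{rule.name}: missing")
            continue
        if not isinstance(value, rule.expected_type):
            problems.append(
                f"{rule.name}: expected {rule.expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
            continue
        # Range checks only run when a bound was configured for the field.
        if rule.min_value is not None and value < rule.min_value:
            problems.append(f"{rule.name}: {value} below minimum {rule.min_value}")
        if rule.max_value is not None and value > rule.max_value:
            problems.append(f"{rule.name}: {value} above maximum {rule.max_value}")
    return problems
```

Records that return a non-empty list can be rejected or routed to a review queue before they reach training.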

Model training frameworks

Machine learning and deep learning frameworks provide model architectures, training loops, and tooling for experiment tracking. Common paradigms include supervised learning, unsupervised learning, and transfer learning. Frameworks often support GPU acceleration and distributed training to handle large datasets.
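
To make the idea of a training loop concrete, here is a small sketch of supervised learning written in plain Python rather than in any particular framework. It fits a logistic regression classifier with batch gradient descent; the function names and default hyperparameters are assumptions for illustration, and production frameworks add automatic differentiation, GPU acceleration, and distributed execution on top of this basic pattern.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(features, labels, learning_rate=0.1, epochs=200, seed=0):
    """Fit weights and a bias with batch gradient descent on the log loss."""
    rng = random.Random(seed)  # seeded so repeated runs give the same result
    n_features = len(features[0])
    n_samples = len(features)
    weights = [rng.uniform(-0.01, 0.01) for _ in range(n_features)]
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(features, labels):
            prediction = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            error = prediction - y  # gradient of the log loss w.r.t. the logit
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        # Step against the average gradient over the whole dataset.
        weights = [w - learning_rate * g / n_samples for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n_samples
    return weights, bias


def predict(weights, bias, x):
    """Return the predicted class (0 or 1) for one feature vector."""
    score = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
    return 1 if score >= 0.5 else 0
```

For example, `train_logistic_regression([[0.0], [1.0], [3.0], [4.0]], [0, 0, 1, 1], learning_rate=0.5, epochs=2000)` returns parameters that `predict` can apply to new inputs.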

Model formats and interoperability

Model formats such as ONNX enable moving models between frameworks and runtimes. Exporting to portable formats helps support heterogeneous inference environments like cloud VMs, edge devices, and specialized accelerators.

Inference runtimes and optimization

Inference engines and compilers focus on latency and throughput. Techniques include quantization, pruning, batching, and compilation to device-specific instructions. Choosing the right runtime affects user experience for real-time applications.
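
Quantization, one of the techniques listed above, can be illustrated with a short sketch. The example below maps floating-point values to 8-bit integers using an affine (scale and zero-point) scheme and then maps them back. It is a simplified illustration, not the exact algorithm of any particular runtime, and the function names are hypothetical.

```python
def quantize_int8(values):
    """Affine quantization of floats into the int8 range [-128, 127]."""
    # Include 0.0 in the range so that zero stays exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 when all values are zero
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from the int8 representation."""
    return [(q - zero_point) * scale for q in quantized]
```

Each restored value differs from the original by a small rounding error on the order of `scale`. That error is the accuracy cost paid for a representation that takes a quarter of the memory of 32-bit floats.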

Deployment, orchestration, and MLOps

Deployment tooling covers containerization (Docker), orchestration (Kubernetes), CI/CD for models, monitoring, and governance. MLOps platforms automate retraining, canary releases, and performance monitoring in production.
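
A canary release sends a small share of traffic to a new model version while the rest continues to hit the stable one. The sketch below shows one possible way to split traffic deterministically by hashing a request identifier. The function name, the `"canary"` and `"stable"` labels, and the percentage parameter are assumptions for illustration; in practice this routing usually lives in a load balancer or service mesh.

```python
import hashlib


def choose_model_version(request_id: str, canary_percent: int) -> str:
    """Route a request to 'canary' or 'stable' based on a hash of its ID."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the first four bytes of the hash to a bucket in the range 0..99.
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "canary" if bucket < canary_percent else "stable"
```

Because the decision depends only on the identifier, the same request ID always reaches the same version, which keeps behavior consistent across retries.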

Popular frameworks, libraries, and SDKs

Model development: training and research

Frameworks designed for model development vary by use case: some emphasize research flexibility, while others prioritize production stability. Key features to evaluate are model zoo availability, ecosystem integrations, and tooling for experiment tracking and reproducibility.

Model packaging and deployment

Packaging tools help export trained models and create inference services. Formats and runtimes that support cross-platform deployment are valuable when targeting both cloud and edge devices.

Designing a development workflow

Prototyping and evaluation

Start with rapid prototyping to validate concepts and metrics. Use lightweight datasets for initial experiments, then scale to full datasets for final training runs. Maintain reproducible experiments by tracking hyperparameters and random seeds.
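
One way to keep experiments reproducible is to record the hyperparameters and seed of each run and to derive a stable run identifier from them. The following sketch assumes a hypothetical `run_experiment` function in which the "metric" is seeded noise standing in for a real training result; only the tracking pattern is the point.

```python
import hashlib
import json
import random


def run_experiment(hyperparams: dict, seed: int) -> dict:
    """Run a placeholder experiment and return a record describing it."""
    rng = random.Random(seed)  # local generator, so global random state is untouched
    # Stand-in for real training: the metric is just the mean of seeded noise.
    metric = round(sum(rng.random() for _ in range(100)) / 100, 6)
    config = {"hyperparams": hyperparams, "seed": seed}
    # Identical configurations always hash to the same run ID.
    run_id = hashlib.sha256(
        json.dumps(config, sort_keys=True).encode("utf-8")
    ).hexdigest()[:12]
    return {"run_id": run_id, "hyperparams": hyperparams, "seed": seed, "metric": metric}
```

Calling the function twice with the same hyperparameters and seed yields identical records, which is the property an experiment tracker relies on when comparing runs.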

Testing, validation, and monitoring

Testing should include unit tests for preprocessing, model validation on holdout data, and integration tests for inference APIs. Production monitoring tracks model drift, latency, error rates, and resource consumption.
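
Model drift is often detected by comparing the distribution of a feature in production against its distribution at training time. The sketch below computes the population stability index (PSI), one commonly used drift statistic, over equal-width bins. The bin count and the small floor used to avoid taking the logarithm of zero are assumptions made for this example.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of one feature; larger values mean more drift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if expected is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the training range fall into the edge bins.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A monitoring job could compute this value on a schedule and raise an alert when it exceeds a threshold chosen for the application.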

Deployment and scalability considerations

Cloud vs edge deployment

Cloud deployment enables scalable inference and centralized model management; edge deployment reduces latency and dependency on connectivity but requires model optimization. Evaluate trade-offs based on application constraints.

Scaling and cost control

Autoscaling, batching, and hardware choice (CPU, GPU, TPU, or custom accelerators) influence performance and cost. Profiling models under expected loads helps estimate infrastructure needs.
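
Profiling results can be turned into a rough capacity estimate with simple arithmetic. The sketch below assumes each replica processes one batch at a time and should run below a target utilization to leave headroom. The function name and the 70% default are illustrative assumptions, not recommendations.

```python
import math


def replicas_needed(peak_rps, latency_s, batch_size=1, target_utilization=0.7):
    """Estimate how many replicas are needed to serve a peak request rate.

    peak_rps: expected peak requests per second
    latency_s: measured time to process one batch, in seconds
    batch_size: number of requests handled per batch
    target_utilization: fraction of capacity to use, leaving headroom for spikes
    """
    per_replica_rps = batch_size / latency_s
    usable_rps = per_replica_rps * target_utilization
    return math.ceil(peak_rps / usable_rps)
```

With a measured latency of 50 ms per request and a peak of 200 requests per second, the estimate is 15 replicas. If batching 8 requests takes 100 ms, the same load needs about 4, which shows how batching trades per-request latency for throughput.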

Security, privacy, and compliance

Data governance and model risk

Tools should support data encryption, access controls, audit logs, and model explainability where required. Organizations often adopt standards and best practices from bodies such as IEEE and ACM for trustworthiness and accountability.

Regulatory guidance and standards

Consult national and international guidance for AI governance and risk management. For technical standards and assessment resources, refer to institutional guidance such as the NIST artificial intelligence program.

Choosing the right tools and best practices

Match tooling to project goals

Select tools based on model complexity, latency requirements, available hardware, team skillset, and long-term maintainability. Smaller teams may prioritize integrated platforms; larger teams may prefer modular systems that separate training and serving concerns.

Focus on reproducibility and observability

Implement version control for data and models, establish evaluation metrics, and integrate monitoring into production. Observability enables faster troubleshooting and improves reliability over time.
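
A lightweight way to version data and models together is to record a content hash of each artifact alongside the evaluation metrics. The following sketch builds such a manifest. The function names and manifest fields are hypothetical, and dedicated data versioning tools provide the same idea with storage and lineage features on top.

```python
import hashlib
import json


def content_hash(data: bytes) -> str:
    """Return the SHA-256 hex digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(dataset: bytes, model: bytes, metrics: dict) -> str:
    """Describe one trained model by the exact data and weights behind it."""
    manifest = {
        "dataset_sha256": content_hash(dataset),
        "model_sha256": content_hash(model),
        "metrics": metrics,
    }
    # Sorted keys keep the output stable, so manifests diff cleanly in version control.
    return json.dumps(manifest, sort_keys=True, indent=2)
```

Committing the manifest next to the code makes it possible to tell later which dataset and weights produced a given set of metrics.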

Learning resources and community

Educational materials and research

Community forums, academic papers, and open-source repositories provide examples, benchmarks, and implementation patterns. Engaging with developer communities and standards organizations supports continuous learning.

When to adopt new tools

New tools can accelerate development but introduce migration costs. Pilot new solutions on noncritical projects and evaluate long-term maintenance implications before committing to large-scale adoption.

Conclusion

AI app development tools form an ecosystem that covers data processing, model creation, packaging, inference, and operations. Choosing a coherent stack aligned with performance, cost, and compliance goals reduces time to production and improves application reliability.

What are the best AI app development tools for beginners?

Beginners benefit from tools with robust documentation, community support, and low setup overhead. Look for integrated SDKs, managed model-hosting services, and straightforward local runtimes that support quick prototyping and simple deployment pathways.

How do AI app development tools support model deployment and scaling?

Deployment tools provide containerization, orchestration, autoscaling, and monitoring. They enable models to run reliably under load while maintaining performance and observability.

Which AI app development tools help with model portability and interoperability?

Portable model formats and conversion tools enable interoperability across runtimes and hardware. Standards such as ONNX and compatible inference runtimes aid in moving models between frameworks and production environments.

What security and compliance features should be considered when selecting AI app development tools?

Consider encryption, role-based access control, audit logging, model explainability, and data governance features. Compliance requirements may vary by industry and jurisdiction, so align tool capabilities with regulatory obligations and organizational policies.

Where can authoritative guidance on AI development standards be found?

Refer to government and standards organizations for technical guidance and risk management frameworks. Institutions such as NIST publish resources and research on trustworthy AI practices that can inform tool selection and operational policies.