AI in Education: A Practical Guide to Personalized Learning and EdTech Strategy



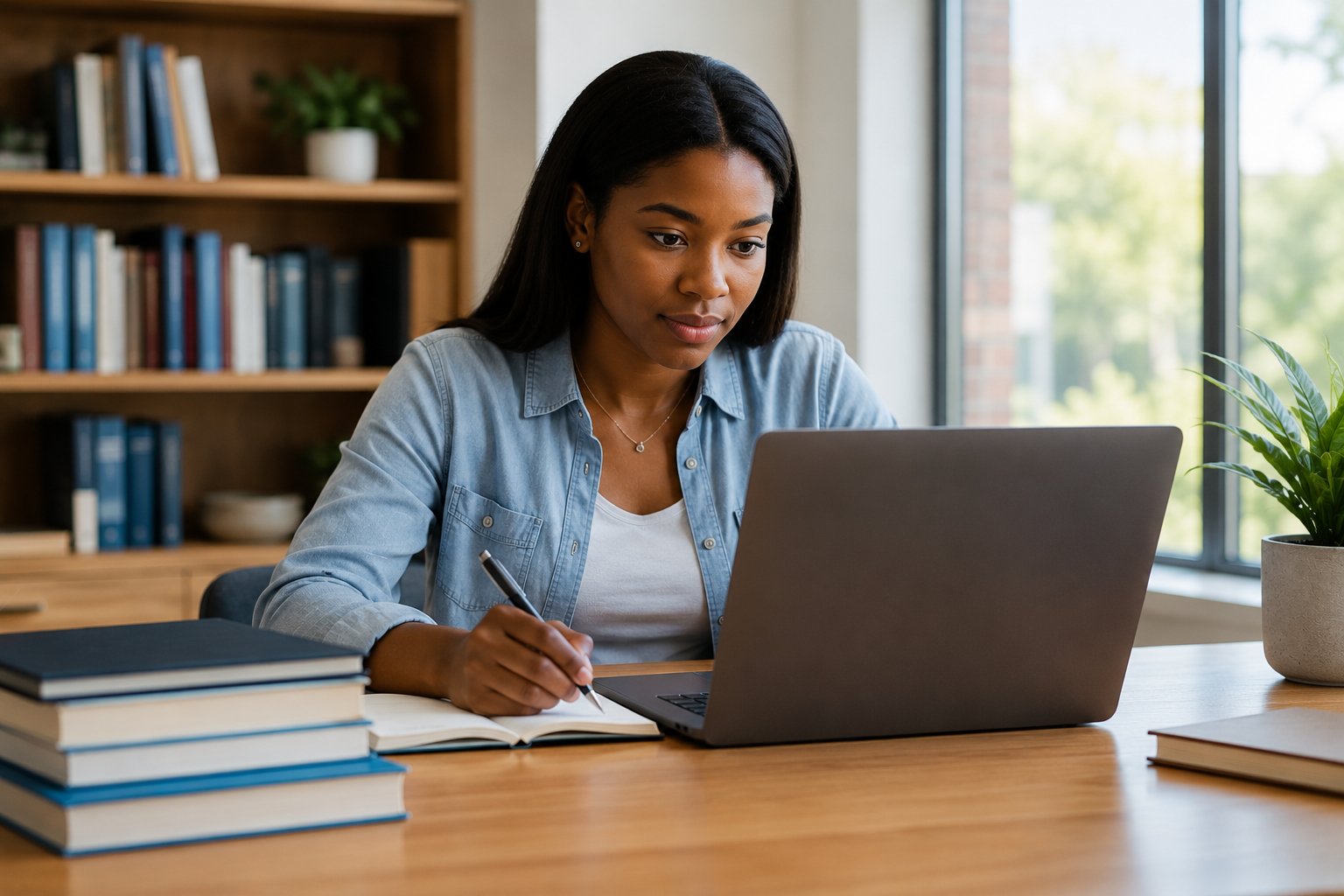

AI in education is reshaping how learners access content, how teachers assess growth, and how institutions plan technology investments. This guide explains practical uses, trade-offs, and an adoption framework (TEACH) that helps educators move from pilot to steady practice without losing sight of ethics and equity.

AI in education: What it means for schools and learners

AI in education refers to systems that use machine learning, natural language processing, or rule-based automation to support instruction, assessment, and administration. Common applications include intelligent tutoring, adaptive content sequencing, automated grading, predictive analytics for retention, and administrative automation. Key related terms include personalized learning, adaptive learning, AI tutoring systems, learning analytics, and ethical AI in education.

How AI changes teaching, assessment, and EdTech strategy

Teachers gain tools that automate routine tasks and surface student needs. Assessment can shift from one-off exams to continuous, formative signals when systems measure patterns of engagement and mastery. At the institutional level, EdTech strategy moves from one-off product purchases to platform orchestration and data governance. Successful changes depend on clear goals, reliable data pipelines, teacher training, and governance that protects privacy and fairness.

Common applications and related technologies

- Adaptive practice engines that tailor questions and pacing to proficiency

- AI tutoring systems that provide hints, explanations, and step-by-step scaffolding

- Automated scoring for objective questions and draft feedback for written work

- Learning analytics dashboards that surface at-risk students and skill gaps

- Natural language tools for reading support, language learning, and content summarization
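To make "tailoring questions and pacing to proficiency" concrete, the sketch below shows one very simple way an adaptive practice engine could work. It is an illustrative heuristic only, not how any particular product operates: the function names, the update rate of 0.2, and the five difficulty levels are all assumptions made for this example.

```python
def update_proficiency(estimate, correct, rate=0.2):
    """Nudge a proficiency estimate in [0, 1] toward 1.0 after a correct
    answer and toward 0.0 after an incorrect one (hypothetical rule)."""
    target = 1.0 if correct else 0.0
    return estimate + rate * (target - estimate)


def next_difficulty(estimate, levels=(1, 2, 3, 4, 5)):
    """Map a proficiency estimate in [0, 1] onto one of the difficulty levels."""
    index = min(int(estimate * len(levels)), len(levels) - 1)
    return levels[index]


# Made-up response sequence for one student, for illustration only.
estimate = 0.5
for correct in [True, True, False, True]:
    estimate = update_proficiency(estimate, correct)
    print(f"estimate={estimate:.2f} -> next level {next_difficulty(estimate)}")
```

Real systems typically use richer learner models, but even this toy version shows why teacher-facing controls matter: the update rate and level thresholds are design choices that shape pacing.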

TEACH framework: a named model for safe adoption

The TEACH framework is a concise model for guiding AI pilots and scale-up:

- Target outcomes: Define specific, measurable learning goals for each AI use case.

- Evidence & evaluation: Use small experiments with control measures and qualitative feedback to validate claims.

- Adaptive integration: Ensure AI augments teacher workflows rather than replacing them; require teacher-facing controls.

- Compliance & ethics: Implement data governance, privacy safeguards, and fairness checks aligned with standards such as those from UNESCO and other policy bodies.

- Human oversight: Maintain human decision points for grading, placement, and disciplinary actions.

Checklist based on TEACH

- Define 1–3 measurable outcomes per pilot (e.g., increase formative mastery by X%).

- Collect baseline data and design a 6–12 week pilot with comparison measures.

- Train staff on tool use and interpretation of AI signals before rollout.

- Create privacy and data-retention rules; document access roles.

- Schedule regular review cycles to audit fairness, accuracy, and learning impact.

Real-world example: a middle-school math pilot

Scenario: A 7th-grade team pilots an adaptive practice platform to improve algebra mastery. The system provides personalized practice, flags misconceptions, and generates weekly progress reports. Teachers use the dashboard to group students for targeted interventions and to plan small-group lessons. After the 8-week pilot, the team compares pre- and post-pilot formative assessments and reports a 12% increase in procedural fluency among students who completed the individualized practice. Throughout, teachers document the instructional changes that AI insights prompted and continue to verify grading and progression decisions themselves.
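A pre/post comparison like the one in this scenario is more convincing when the pilot group's gain is set against a comparison group's gain over the same period. The following is a minimal sketch of that arithmetic, assuming each student has one pre score and one post score; the function names and all scores are made up for illustration and are not data from the scenario.

```python
from statistics import mean


def mean_gain(pre, post):
    """Average per-student change from pre- to post-assessment, in score points."""
    if len(pre) != len(post) or not pre:
        raise ValueError("pre and post must be non-empty and the same length")
    return mean(after - before for before, after in zip(pre, post))


def pilot_effect(pilot_pre, pilot_post, comparison_pre, comparison_post):
    """Pilot group's mean gain minus the comparison group's mean gain."""
    return mean_gain(pilot_pre, pilot_post) - mean_gain(comparison_pre, comparison_post)


# Hypothetical percent-correct scores for three students per group.
pilot_pre = [50, 60, 70]
pilot_post = [62, 70, 81]
comparison_pre = [52, 58, 72]
comparison_post = [56, 62, 76]

effect = pilot_effect(pilot_pre, pilot_post, comparison_pre, comparison_post)
print(f"Pilot gain exceeds comparison gain by {effect:.1f} points")
```

With groups this small the difference says little on its own; a real pilot would pair the numbers with the qualitative teacher feedback described above.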

Practical implementation tips

Follow these actionable steps to increase the odds of meaningful impact:

- Start with a single, measurable problem (attendance, mastery of a skill, grading load) rather than a platform-wide rollout.

- Require teacher training and co-design: include educators in selection and configuration of AI tutoring systems so outputs are interpretable.

- Monitor for bias: review model predictions by subgroup and validate that interventions do not widen gaps.

- Limit the scope of automated decisions: keep high-stakes decisions under human control and use AI for recommendations.
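The last tip, keeping high-stakes decisions under human control, can be expressed as a simple routing rule. The sketch below is one possible shape for such a rule, not a prescribed design: the `Recommendation` fields, the set of high-stakes categories, and the 0.8 confidence threshold are all hypothetical choices for this example.

```python
from dataclasses import dataclass

# Hypothetical categories a school might treat as high-stakes.
HIGH_STAKES = {"grading", "placement", "discipline"}


@dataclass
class Recommendation:
    student_id: str
    category: str      # e.g. "practice", "grading", "placement"
    confidence: float  # model-reported confidence in [0, 1]


def route(rec, min_confidence=0.8):
    """Decide whether a recommendation needs explicit human review."""
    if rec.category in HIGH_STAKES:
        return "human_review"  # high-stakes items always go to a person
    if rec.confidence < min_confidence:
        return "human_review"  # low-confidence items also go to a person
    return "auto_suggest"      # shown to the teacher as a suggestion only


print(route(Recommendation("s1", "placement", 0.99)))
print(route(Recommendation("s2", "practice", 0.90)))
```

Note that even the `auto_suggest` path only surfaces a suggestion; nothing in this sketch acts on a student record without a teacher seeing it.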

Trade-offs and common mistakes

Deploying AI involves trade-offs and predictable missteps:

- Trade-offs: Personalization can improve engagement but risks overfitting to short-term behaviors; automation reduces teacher workload but may hide pedagogical context.

- Common mistakes: 1) Skipping baseline measurements; 2) Treating vendor claims as proof without independent evaluation; 3) Ignoring data governance or consent; 4) Over-relying on opaque recommendations without teacher mediation.

Core cluster questions

- How does AI personalize learning for different student needs?

- What are the privacy and data governance best practices for educational AI?

- How should schools evaluate claims from AI tutoring systems?

- What training do teachers need to use learning analytics effectively?

- How can AI be used to support equitable outcomes in diverse classrooms?

Resources and guidance

Policy frameworks and ethical guidance matter. Refer to international recommendations and ethics guidance for AI in public contexts. For example, UNESCO publishes principles and policy guidance on AI and education that can inform governance choices (see UNESCO: Ethics of Artificial Intelligence).

FAQ

How is AI in education already used in classrooms?

Common classroom uses include adaptive practice platforms that adjust difficulty based on responses, automated quizzes and formative assessments, reading supports that summarize or scaffold text, and dashboards that surface students who need interventions. Implementation varies by grade, subject, and local infrastructure.

Can AI replace teachers?

No. AI is a tool to augment teaching: it handles repetitive tasks, provides personalized practice, and highlights trends from data. Human teachers provide context, motivation, classroom management, and ethical judgment that AI cannot replicate.

What should schools measure to evaluate an AI pilot?

Measure learning outcomes tied to the pilot's goal (mastery checks, formative assessments), usage metrics, teacher time saved, and qualitative feedback from students and staff. Also track equity metrics to detect disparate impacts across groups.

How can schools address bias and fairness in educational AI?

Run subgroup analyses on predictions and outcomes, require vendors to disclose training data characteristics and evaluation results, and include diverse educator review panels during pilot assessment. Keep humans in the loop for high-stakes or ambiguous recommendations.
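A subgroup analysis can start very simply: compare how often the system flags students in each group. The sketch below assumes records arrive as (group, flagged) pairs; the group labels, the data, and the function names are invented for illustration. A gap between groups is a prompt for human review, not proof of bias on its own.

```python
from collections import defaultdict


def flag_rates_by_group(records):
    """records: iterable of (group, flagged) pairs.
    Returns a dict mapping each group to the share of its students flagged."""
    counts = defaultdict(lambda: [0, 0])  # group -> [flagged count, total count]
    for group, flagged in records:
        counts[group][0] += int(flagged)
        counts[group][1] += 1
    return {group: flagged / total for group, (flagged, total) in counts.items()}


def max_rate_gap(rates):
    """Largest difference in flag rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical "at-risk" flags for two student groups.
records = [
    ("A", True), ("A", False), ("A", False), ("A", False),
    ("B", True), ("B", True), ("B", False), ("B", False),
]

rates = flag_rates_by_group(records)
gap = max_rate_gap(rates)
print(rates, gap)
```

The same comparison can be repeated for outcomes (for example, mastery gains by group) so that reviewers see both who is flagged and who benefits.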

What steps should schools take before buying an AI tutoring system?

Define learning goals, require vendor documentation of evidence and privacy practices, run a small pilot with comparison measures, train teachers on interpretation, and create a data governance plan. Use the TEACH checklist to ensure alignment with outcomes and ethical safeguards.