Core Pillars of Cloud Computing: Architecture, Services, and Security

Cloud computing has become a foundational model for delivering computing resources over the internet, enabling on-demand access to servers, storage, databases, networking, and applications. This article explains the main pillars of cloud computing and how they relate to architecture, service models, security, compliance, and operations.

- Cloud computing rests on service models (IaaS, PaaS, SaaS), deployment models (public, private, hybrid, multi-cloud, edge), and technical foundations such as virtualization and containerization.

- Security, governance, and compliance are cross-cutting pillars that shape design and operations, informed by standards and guidance from organizations such as NIST and ISO.

- Operational practices—monitoring, automation, resilience, and cost management—translate architecture into reliable, scalable services.

Key pillars of cloud computing

Understanding the pillars of cloud computing helps organizations plan deployments, select technologies, and manage risk. These pillars group technical capabilities and management disciplines into a coherent framework.

Service models: IaaS, PaaS, SaaS

Service models define what level of abstraction is provided to users and administrators. Infrastructure as a Service (IaaS) offers virtualized compute, storage, and networking. Platform as a Service (PaaS) provides runtime environments and development tools. Software as a Service (SaaS) delivers fully managed applications. Each model shifts responsibility for maintenance, patching, and updates between provider and consumer.
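The shift in responsibility can be sketched as a simple lookup. The layer names and the cut-off points below are a deliberate simplification chosen for illustration, not any provider's official responsibility matrix; real offerings draw the lines differently, and the consumer remains responsible for its own data and access settings in every model.

```python
# Illustrative stack, ordered from the top (application) down to hardware.
LAYERS = ["application", "runtime", "operating_system", "virtualization", "hardware"]

# Index into LAYERS at which provider responsibility begins (assumed simplification).
PROVIDER_STARTS_AT = {"iaas": 3, "paas": 1, "saas": 0}


def responsibility(model: str) -> dict[str, str]:
    """Return a layer -> owner mapping for the given service model."""
    start = PROVIDER_STARTS_AT[model.lower()]
    return {
        layer: ("provider" if index >= start else "consumer")
        for index, layer in enumerate(LAYERS)
    }


for model in ("iaas", "paas", "saas"):
    print(model, responsibility(model))
```

Under these assumptions, an IaaS consumer still patches the operating system, while a PaaS consumer is left with only the application layer.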

Deployment models: public, private, hybrid, edge, and multi-cloud

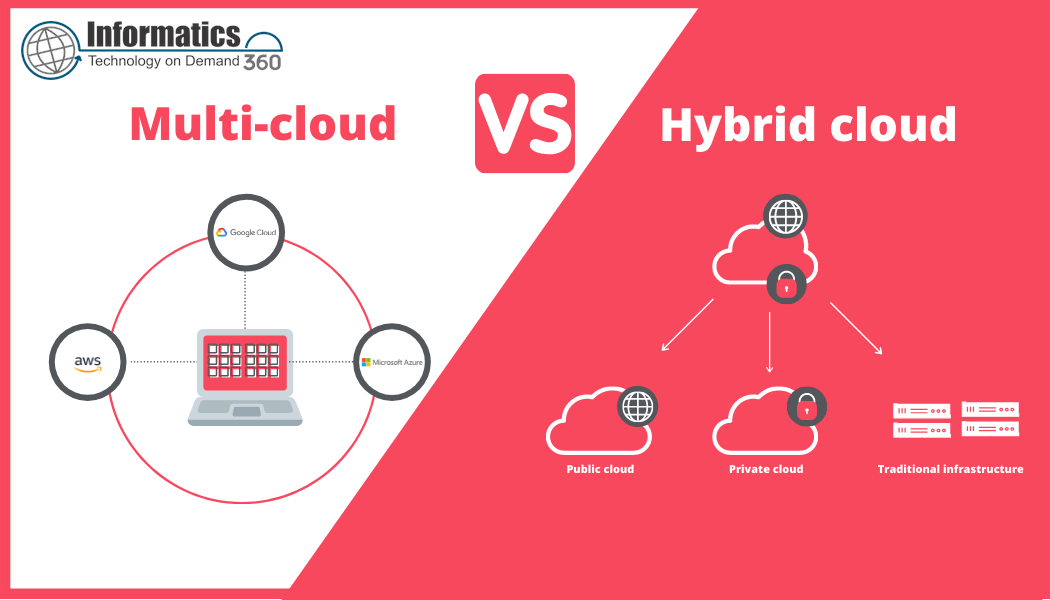

Deployment models affect control, latency, and compliance. Public cloud provides shared infrastructure hosted by third-party providers. Private cloud offers dedicated resources for a single organization. Hybrid cloud blends public and private environments to support workload placement and data residency. Edge computing extends cloud capabilities closer to users or devices for low-latency processing. Multi-cloud strategies distribute workloads across multiple providers to reduce vendor lock-in and increase resilience.

Core technology foundations

Virtualization, containerization, container orchestration, software-defined networking, and programmable APIs form the technical foundation of cloud computing. Virtualization runs multiple isolated environments on shared hardware; containers package applications with their dependencies for portability; orchestration automates deployment and scaling. Together these technologies enable elasticity, resource pooling, and rapid provisioning.

Security, compliance, and governance

Security, compliance, and governance are essential pillars that overlap technical controls and organizational policy. A robust approach includes identity and access management, encryption, network segmentation, secure configuration baselines, and continuous monitoring.

Identity and access management (IAM)

IAM enforces least-privilege access, supports multi-factor authentication, and manages service identities for automation. Proper role-based access control (RBAC) and just-in-time privileges reduce exposure.
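A minimal sketch of how least privilege, RBAC, and just-in-time access fit together is shown below. The role names, action strings, and the `Grant` class are hypothetical and do not correspond to any particular provider's IAM API; the point is the deny-by-default check and the expiring grant.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical role definitions: each role grants an explicit set of actions.
ROLE_PERMISSIONS = {
    "viewer": {"storage:read"},
    "operator": {"storage:read", "compute:restart"},
    "admin": {"storage:read", "storage:write", "compute:restart", "iam:manage"},
}


class Grant:
    """A role assignment that may expire (just-in-time access)."""

    def __init__(self, role, expires_at=None):
        self.role = role
        self.expires_at = expires_at  # None means a standing grant

    def active(self, now):
        return self.expires_at is None or now < self.expires_at


def is_allowed(grants, action, now=None):
    """Deny by default; allow only if an unexpired grant includes the action."""
    now = now or datetime.now(timezone.utc)
    return any(
        grant.active(now) and action in ROLE_PERMISSIONS.get(grant.role, set())
        for grant in grants
    )


start = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
grants = [Grant("viewer"), Grant("admin", expires_at=start + timedelta(hours=1))]
print(is_allowed(grants, "storage:write", now=start))                       # within the JIT window
print(is_allowed(grants, "storage:write", now=start + timedelta(hours=2)))  # after expiry
```

Keeping the standing role narrow and attaching the broad role only for a bounded window is what limits exposure if a credential leaks.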

Data protection and privacy

Data at rest and in transit should be encrypted with strong, well-vetted algorithms. Data residency and privacy requirements may influence the choice of deployment model; sensitive data often requires adherence to ISO/IEC standards and to the requirements of local regulators.
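For data in transit, one way to enforce a baseline on the client side is sketched below using Python's standard `ssl` module. The choice of TLS 1.2 as the floor is an assumption for illustration; the appropriate minimum depends on the organization's policy. Encryption at rest is usually handled by provider-managed keys or a dedicated cryptography library and is not shown here.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client-side TLS context with certificate checks and a version floor."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Assumed policy: refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = strict_tls_context()
print(context.minimum_version, context.verify_mode, context.check_hostname)
```

A context built this way can be passed to standard-library clients, for example through the `context` argument of `urllib.request.urlopen`.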

Compliance and standards

Guidance from official organizations helps align cloud deployments with recognized best practices. The National Institute of Standards and Technology (NIST) provides a widely cited definition of cloud computing, along with risk-management guidance that can inform architecture and controls. NIST Special Publication 800-145 gives the definition itself.

Operational resilience and reliability

Operational disciplines translate architecture into dependable services. Key operational pillars include monitoring, automation, backup and recovery, capacity planning, and incident response.

Monitoring and observability

Collecting metrics, logs, and traces provides observability into system health. Alerting thresholds, runbooks, and post-incident reviews help reduce mean time to detection (MTTD) and mean time to recovery (MTTR).
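Mean time to detection and mean time to recovery can be tracked from incident records. The sketch below assumes each incident stores three timestamps as minutes on a shared clock, and it measures recovery from the start of the incident; some teams measure it from detection instead, so the definition here is an assumption rather than a standard.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Incident:
    started_min: float   # when the fault began
    detected_min: float  # when an alert fired or someone noticed
    resolved_min: float  # when service was restored


def mean_time_to_detection(incidents):
    """Average gap between fault start and detection, in minutes."""
    return mean(i.detected_min - i.started_min for i in incidents)


def mean_time_to_recovery(incidents):
    """Average gap between fault start and resolution, in minutes."""
    return mean(i.resolved_min - i.started_min for i in incidents)


history = [Incident(0, 5, 35), Incident(100, 115, 165)]
print(mean_time_to_detection(history))  # 10 minutes on average
print(mean_time_to_recovery(history))   # 50 minutes on average
```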

Automation and infrastructure as code

Infrastructure as code, configuration management, and CI/CD pipelines enable repeatable deployments, reduce human error, and speed changes. Automated testing and policy-as-code help maintain consistency across environments.
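Policy-as-code can be as simple as a function that inspects declared resources before they are deployed. In the sketch below, the resource dictionary shape, the `storage_bucket` type, and the three rules are all hypothetical; dedicated policy engines exist for this purpose, and this is only meant to show the idea.

```python
def check_bucket_policy(resource):
    """Return a list of policy violations for one declared resource."""
    violations = []
    if resource.get("type") != "storage_bucket":
        return violations  # this policy only applies to storage buckets
    name = resource.get("name", "<unnamed>")
    if not resource.get("encrypted", False):
        violations.append(f"{name}: encryption at rest is not enabled")
    if resource.get("public_access", False):
        violations.append(f"{name}: public access is enabled")
    if "owner" not in resource.get("tags", {}):
        violations.append(f"{name}: missing required 'owner' tag")
    return violations


declared = [
    {"type": "storage_bucket", "name": "logs", "encrypted": True,
     "public_access": False, "tags": {"owner": "platform"}},
    {"type": "storage_bucket", "name": "scratch", "public_access": True},
]

for resource in declared:
    for violation in check_bucket_policy(resource):
        print(violation)
```

Running a check like this in a CI/CD pipeline, and failing the build when the list is non-empty, keeps every environment held to the same rules.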

Resilience and disaster recovery

Architectures should plan for region failures, network partitions, and cascading faults. Strategies include multi-region deployment, automated failover, regular backups, and disaster recovery testing to validate recovery time objectives (RTOs) and recovery point objectives (RPOs).
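A disaster recovery test can be compared against its objectives with very little code. The sketch below assumes the worst-case data loss equals the interval between backups (a failure just before the next backup runs) and that recovery time is taken from a timed drill; both are simplifications, and the function and parameter names are illustrative.

```python
from datetime import timedelta


def evaluate_recovery(backup_interval, measured_recovery, rpo, rto):
    """Compare a backup schedule and a drill result against RPO and RTO."""
    return {
        # Worst case: everything written since the last backup is lost.
        "meets_rpo": backup_interval <= rpo,
        # The drill's restore time must fit within the recovery time objective.
        "meets_rto": measured_recovery <= rto,
    }


result = evaluate_recovery(
    backup_interval=timedelta(hours=6),
    measured_recovery=timedelta(minutes=45),
    rpo=timedelta(hours=4),
    rto=timedelta(hours=1),
)
print(result)
```

In this example the drill restores within the RTO, but six-hourly backups cannot satisfy a four-hour RPO, so the backup frequency would need to increase.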

Cost management and performance optimization

Cost and performance are operational pillars that require continuous attention. Rightsizing resources, scheduling non-production workloads, using reserved capacity where appropriate, and monitoring consumption patterns help control costs while meeting performance targets.
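Whether reserved capacity is "appropriate" comes down to a break-even calculation. The sketch below assumes a simple pricing model in which reserved capacity is billed for every hour of the term whether or not it is used, while on-demand capacity is billed only for hours used. The prices are made up for illustration and real pricing schemes are often more complex.

```python
def break_even_utilization(on_demand_hourly, reserved_hourly):
    """Fraction of hours a resource must run for a reservation to pay off."""
    return reserved_hourly / on_demand_hourly


def should_reserve(expected_utilization, on_demand_hourly, reserved_hourly):
    """Reserve only if expected usage exceeds the break-even point."""
    return expected_utilization > break_even_utilization(
        on_demand_hourly, reserved_hourly
    )


# Hypothetical prices: 0.50 per hour on demand, 0.25 per hour effective when reserved.
print(break_even_utilization(0.50, 0.25))  # 0.5, i.e. half of all hours
print(should_reserve(0.80, 0.50, 0.25))    # an always-on service
print(should_reserve(0.30, 0.50, 0.25))    # a workload that runs part-time
```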

Capacity planning and elasticity

Elastic scaling matches resource allocation to demand. Autoscaling policies, load balancing, and caching reduce latency and improve user experience while optimizing resource use.
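One common shape for an autoscaling policy is target tracking: scale the instance count in proportion to how far a metric is from its target, then clamp to configured bounds. The function below is a minimal sketch of that rule; the parameter names and the example CPU figures are assumptions, and production autoscalers add cooldowns and smoothing that are omitted here.

```python
import math


def desired_instances(current, metric_value, target_value, minimum, maximum):
    """Proportional target-tracking rule, clamped to [minimum, maximum]."""
    raw = math.ceil(current * metric_value / target_value)
    return max(minimum, min(maximum, raw))


# Four instances at 90% average CPU, aiming for 60%: scale out.
print(desired_instances(4, 90.0, 60.0, minimum=2, maximum=10))  # 6
# Four instances at 30% average CPU: scale in, but not below the floor of 3.
print(desired_instances(4, 30.0, 60.0, minimum=3, maximum=10))  # 3
```

Rounding up biases the policy toward having slightly too much capacity rather than too little, which favors latency over cost.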

Tagging and chargeback

Resource tagging and billing reports enable cost allocation, show spending trends, and support chargeback or showback models within organizations.
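Cost allocation by tag amounts to a grouped sum over billing line items. The sketch below assumes each line item is a dictionary with a `cost` and an optional `tags` mapping; that shape and the `team` tag key are hypothetical rather than any provider's billing export format.

```python
from collections import defaultdict


def cost_by_tag(line_items, tag_key, untagged_label="untagged"):
    """Sum costs per value of the given tag; untagged items get their own bucket."""
    totals = defaultdict(float)
    for item in line_items:
        owner = item.get("tags", {}).get(tag_key, untagged_label)
        totals[owner] += item["cost"]
    return dict(totals)


billing = [
    {"resource": "vm-1", "cost": 120.0, "tags": {"team": "search"}},
    {"resource": "db-1", "cost": 30.0, "tags": {"team": "search"}},
    {"resource": "vm-2", "cost": 75.0, "tags": {"team": "payments"}},
    {"resource": "bucket-9", "cost": 12.5},  # no tags at all
]

print(cost_by_tag(billing, "team"))
```

Surfacing the untagged bucket explicitly is a useful design choice: it shows how much spending cannot yet be attributed and gives teams a reason to fix their tagging.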

Emerging considerations

Trends such as serverless computing, function-as-a-service, confidential computing, and increased use of containers and orchestration frameworks continue to reshape how cloud computing is implemented. Edge computing and AI workloads also introduce new architectural and governance needs.

Further reading and standards

For formal definitions and risk guidance, consult standards and publications from recognized authorities such as NIST and ISO/IEC. The NIST Special Publication on cloud computing is often used as a reference for definitions and service models.

NIST Special Publication 800-145: The NIST Definition of Cloud Computing

Practical checklist

- Map workloads to appropriate service and deployment models.

- Design identity, encryption, and network controls from the start.

- Automate provisioning and policy enforcement with infrastructure as code.

- Implement monitoring, backup, and tested disaster recovery plans.

- Use tagging and reporting to maintain cost transparency and governance.

Conclusion

The pillars of cloud computing—service and deployment models, foundational technologies, security and governance, operations, and cost management—provide a structured way to design, run, and govern cloud-based systems. Applying these pillars helps organizations balance agility, cost, and risk while meeting technical and regulatory requirements.

What is cloud computing and why does it matter?

Cloud computing is a model for enabling on-demand network access to shared configurable computing resources. It matters because it allows organizations to scale resources quickly, reduce upfront capital expenditure, and accelerate delivery of digital services while providing options for resilience and geographic distribution.

What are the main service models in cloud computing?

The main service models are Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS). Each model offers different levels of management responsibility and operational overhead.

How should security be approached in cloud environments?

Security should be approached as a shared responsibility between provider and consumer. Establish identity and access controls, encrypt data, implement network segmentation, apply secure configuration baselines, and monitor continuously. Align controls with regulatory and standards-based guidance.

How can costs be controlled in cloud deployments?

Control costs by rightsizing instances, using autoscaling, scheduling non-critical workloads, adopting reserved capacity where appropriate, and using tagging and reporting to track and optimize spending.