Edge Computing Myths — Clear Facts, Practical Guidance, and What Really Matters

Edge computing myths are widespread: claims that edge always reduces cost, eliminates the cloud, or guarantees security can mislead teams making architecture decisions. This guide debunks the top 10 misconceptions about edge computing, explains when edge makes sense, and provides a practical checklist to evaluate real projects.

- Edge computing can solve latency and bandwidth problems but is not a universal replacement for cloud.

- Evaluate use cases with a lightweight checklist: latency tolerance, data volume, resilience, cost model, and manageability.

- Common mistakes: treating edge as a security cure-all, ignoring operations complexity, or over-provisioning hardware.

Why these edge computing myths deserve correction

Misconceptions about edge computing often arise because the term covers many deployments, from device-level inference to multi-access edge computing (MEC) in telco networks. Accurate understanding is essential for cost control, performance, and security planning. This article explains the most common myths and provides a named evaluation framework, a short real-world example, core cluster questions for deeper research, and practical tips for teams assessing edge projects.

Edge computing myths: top 10 debunked

Myth 1 — Edge always lowers total cost

Reality: Edge can reduce bandwidth and cloud processing costs for high-volume telemetry, but adds capital, site, maintenance, and operations costs. A total cost of ownership (TCO) analysis must include hardware lifecycle, facility power and cooling, and remote management overhead.

Myth 2 — Edge eliminates the need for cloud

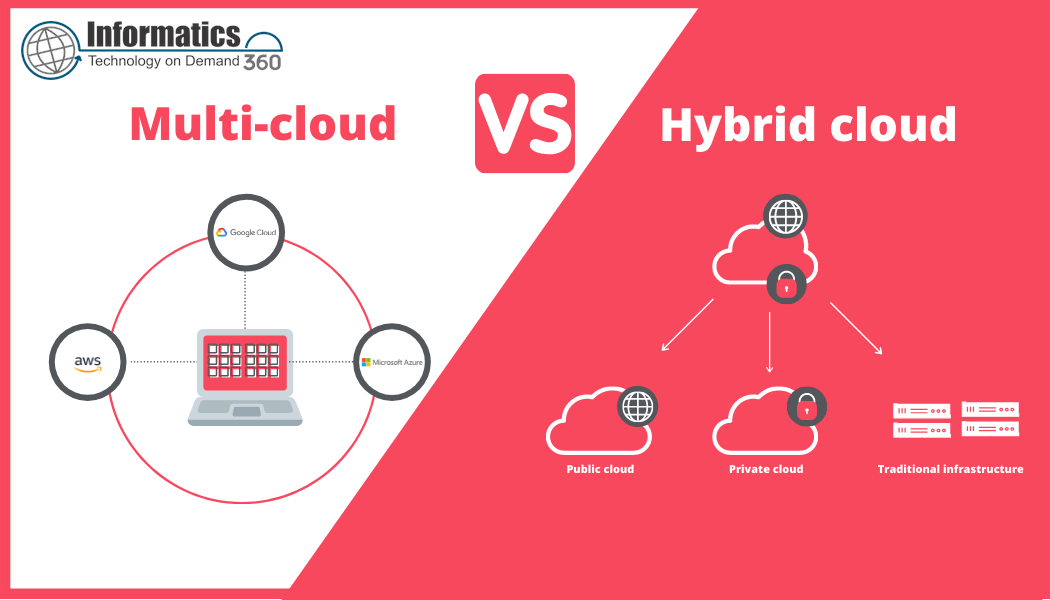

Reality: Edge complements the cloud. Cloud remains essential for long-term storage, heavy analytics, model training, coordination, and centralized control. Hybrid architectures (cloud + edge) are common and often optimal.

Myth 3 — Edge removes latency issues completely

Reality: Edge reduces network latency by placing compute close to where data is produced, but application design still matters. Local processing patterns, queuing, and device constraints can add latency of their own. Measure end-to-end latency under realistic load, not just network round-trip time (RTT).
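To illustrate why network RTT alone can mislead, here is a minimal Python sketch. It computes nearest-rank percentiles for RTT and for the full end-to-end path from per-request stage timings. The stage names (`network_rtt`, `queue`, `inference`) and the sample values are hypothetical. In a real test, the timings would come from instrumentation under production-like load.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile (0 < pct <= 100) of a non-empty list."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    # Smallest value such that at least pct% of samples are <= it.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def summarize(requests):
    """requests: list of dicts mapping stage name -> milliseconds.

    Compares network RTT alone against the full end-to-end path
    (sum of all stages) at the median and the 99th percentile.
    """
    rtt = [r["network_rtt"] for r in requests]
    total = [sum(r.values()) for r in requests]
    return {
        "rtt_p50": percentile(rtt, 50),
        "rtt_p99": percentile(rtt, 99),
        "e2e_p50": percentile(total, 50),
        "e2e_p99": percentile(total, 99),
    }

# Illustrative, made-up timings: RTT stays low, but 2 of 100 requests
# hit a queueing spike under load.
samples = [{"network_rtt": 2, "queue": 1, "inference": 8} for _ in range(98)]
samples += [{"network_rtt": 3, "queue": 40, "inference": 9} for _ in range(2)]
print(summarize(samples))
```

With these illustrative samples, p99 RTT is only 3 ms, while end-to-end p99 is 52 ms because of the queueing spike. Reporting tail percentiles rather than averages keeps such spikes visible.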

Myth 4 — Edge is inherently more secure

Reality: Edge reduces exposure for some data flows but introduces a distributed attack surface and physical vulnerabilities. Strong device authentication, secure boot, encryption, and centralized patching are required. For MEC architecture guidance, see the ETSI Multi-access Edge Computing (MEC) specifications.
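One small piece of device authentication is checking that each message really comes from a known node. The sketch below assumes every node holds its own pre-provisioned secret key. It uses HMAC-SHA256 from Python's standard `hmac` module, and the key registry and device ID are hypothetical. It complements mutual TLS, secure boot, and patching rather than replacing them. It also has no replay protection; a production design would additionally check timestamps or nonces.

```python
import hashlib
import hmac
import json

# Hypothetical registry of per-device secrets. Real keys would be provisioned
# securely (e.g. held in a secure element or TPM), never hard-coded.
DEVICE_KEYS = {"store-042-node-1": b"example-secret-do-not-use"}

def canonical(payload):
    """Serialize deterministically so sender and receiver MAC identical bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()

def sign(key, payload):
    """HMAC-SHA256 tag over the canonical payload, as a hex string."""
    return hmac.new(key, canonical(payload), hashlib.sha256).hexdigest()

def accept_message(device_id, payload, tag):
    """Accept only messages from known devices that carry a valid tag."""
    key = DEVICE_KEYS.get(device_id)
    if key is None:
        return False
    # compare_digest is designed to resist timing analysis of the tag.
    return hmac.compare_digest(sign(key, payload), tag)

msg = {"event": "door_open", "ts": 1700000000}
tag = sign(DEVICE_KEYS["store-042-node-1"], msg)
print(accept_message("store-042-node-1", msg, tag))               # True
print(accept_message("store-042-node-1", {**msg, "ts": 1}, tag))  # False
```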

Myth 5 — Edge means identical hardware everywhere

Reality: Edge nodes vary from tiny gateways to micro data centers. Choosing the right compute class depends on CPU/GPU requirements, power profile, and environmental constraints.

Myth 6 — Edge deployment is a one-time effort

Reality: Edge requires ongoing operations: remote monitoring, updates, security patching, and capacity tuning. Plan for lifecycle management tools and processes from the start.

Myth 7 — Edge solves all IoT problems

Reality: Edge helps with local processing and connectivity, but IoT projects still face challenges with device management, data governance, and sensor reliability that edge alone does not fix.

Myth 8 — Edge equals 5G

Reality: 5G and edge are complementary. 5G can provide low-latency connectivity and network slicing, while edge provides compute near the source. Both are separate technologies and neither is required for the other.

Myth 9 — Containers make edge trivial

Reality: Containers and orchestration frameworks (like Kubernetes) simplify deployment patterns but do not remove network unreliability, hardware variance, or remote debugging challenges. Lightweight runtimes and careful image sizing are often necessary.

Myth 10 — Edge is only for performance-hungry AI

Reality: Edge use cases include latency-sensitive controls, privacy-sensitive data aggregation, regulatory data residency, and bandwidth optimization—AI inference is one of many valid reasons to place compute at the edge.

Named framework: THE EDGE Checklist (Test • Handle • Evaluate • Govern • Execute)

Use THE EDGE Checklist to evaluate any candidate edge deployment:

- Test — Measure real-world latency, throughput, and failure modes.

- Handle — Define data handling policy (what stays local vs. what goes to cloud).

- Evaluate — Run a TCO and risk assessment including ops costs.

- Govern — Establish security, compliance, and update policies for distributed nodes.

- Execute — Start with a small pilot, monitor, then iterate.

Short real-world scenario

A retail chain piloted cashier-less checkout. Cameras and local inference detected purchases at each store. Edge nodes processed video locally to avoid sending raw footage to the cloud, which reduced bandwidth and protected customer privacy. Aggregated telemetry synced periodically to the cloud for analytics, and updated models were pushed back to the stores. The deployment used THE EDGE Checklist: latency tests validated local inference, data handling rules limited cloud uploads to anonymized events, and lifecycle tools automated model updates across 120 stores.
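A data handling rule like the one in this scenario can be enforced in code with an allow-list: raw footage stays in the store, and only anonymized events go to the cloud. This minimal sketch assumes a hypothetical `Detection` schema produced by local inference. It copies only approved fields and aggregates quantities per store and SKU before upload. Whether the output qualifies as anonymized under a given regulation still needs separate review.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class Detection:
    """One purchase detection from local inference (hypothetical schema)."""
    store_id: str
    sku: str
    quantity: int
    frame: bytes            # raw video frame: stays on the edge node
    shopper_track_id: str   # per-visit tracking ID: treated as personal data

# Allow-list of fields approved for cloud upload; everything else is dropped.
UPLOAD_FIELDS = ("store_id", "sku", "quantity")

def to_cloud_events(detections):
    """Keep only approved fields, then aggregate quantities per (store, SKU)."""
    totals = Counter()
    for d in detections:
        event = {name: getattr(d, name) for name in UPLOAD_FIELDS}
        totals[(event["store_id"], event["sku"])] += event["quantity"]
    return [
        {"store_id": store, "sku": sku, "quantity": qty}
        for (store, sku), qty in sorted(totals.items())
    ]

dets = [
    Detection("s1", "milk", 1, b"<jpeg bytes>", "t-9"),
    Detection("s1", "milk", 2, b"<jpeg bytes>", "t-7"),
    Detection("s1", "bread", 1, b"<jpeg bytes>", "t-9"),
]
print(to_cloud_events(dets))
```

An allow-list fails safe: if the detection schema later gains a new field, that field is not uploaded until someone explicitly approves it.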

Core cluster questions

- When should a workload be placed at the edge versus the cloud?

- How do edge deployments affect security and compliance responsibilities?

- What monitoring and update patterns work reliably for distributed edge nodes?

- How should teams estimate total cost of ownership for edge versus centralized cloud?

- Which orchestration options scale best for heterogeneous edge hardware?

Practical tips for evaluating and running edge projects

- Start small: pilot a single site with production traffic to validate assumptions before roll-out.

- Automate updates and telemetry: implement secure over-the-air updates and centralized logging to minimize on-site intervention.

- Design for intermittent connectivity: build store-and-forward and graceful degradation into applications.

- Measure end-to-end performance: include sensor, processing, and actuation delays when testing latency.

- Plan lifecycle and spares: include hardware replacement, remote troubleshooting tools, and SLA-aware vendors.
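Store-and-forward, one of the tips above, can be as simple as a bounded local queue that is flushed when the uplink returns. The following Python sketch assumes a hypothetical `send` callable that returns `True` once the cloud acknowledges a record. When the buffer fills, the oldest records are dropped and counted. This is a deliberate graceful-degradation choice that avoids unbounded memory growth.

```python
from collections import deque

class StoreAndForward:
    """Bounded local buffer that holds records while the uplink is down."""

    def __init__(self, send, max_items=1000):
        self._send = send          # callable(record) -> bool (True = acknowledged)
        self._queue = deque()
        self._max = max_items
        self.dropped = 0           # records discarded because the buffer was full

    def enqueue(self, record):
        if len(self._queue) >= self._max:
            self._queue.popleft()  # degrade gracefully: drop the oldest record
            self.dropped += 1
        self._queue.append(record)

    def flush(self):
        """Send records in order; stop at the first failure to keep ordering."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break
            self._queue.popleft()  # remove only after acknowledgment
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)

# Usage with a simulated uplink.
link_up = False
delivered = []

def fake_send(record):
    if link_up:
        delivered.append(record)
    return link_up

buf = StoreAndForward(fake_send, max_items=3)
for i in range(5):
    buf.enqueue(i)
print(buf.flush(), len(buf), buf.dropped)  # 0 3 2
link_up = True
print(buf.flush(), delivered)              # 3 [2, 3, 4]
```

Records are removed only after acknowledgment, so delivery is at-least-once. If an acknowledgment is lost, the record is resent, so the cloud side should deduplicate or process records idempotently.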

Trade-offs and common mistakes

Common trade-offs include:

- Cost vs. performance: Higher upfront hardware and ops cost can yield better latency and reduced bandwidth spend.

- Consistency vs. locality: Centralized cloud offers consistent platform features; edge requires handling heterogeneity.

- Security surface vs. privacy: Local processing can reduce sensitive data transmission, but increases the number of endpoints to secure.

Common mistakes

- Skipping realistic load and failure testing—assumptions about local performance often fail under load.

- Treating edge as a feature rather than an operational model—operations complexity must be planned and budgeted.

- Overgeneralizing a single success—what works in one environment (e.g., factory floor) may not translate to another (e.g., retail).

Implementation checklist (compact)

- Define clear success metrics: latency, availability, data reduction, cost targets.

- Run a 30–90 day pilot with representative traffic.

- Select management tooling for images, secrets, and telemetry.

- Document update and rollback procedures for on-site nodes.

- Verify compliance and data retention policies for local data.
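Update and rollback procedures are easier to follow reliably when the node itself enforces them, and a common pattern for this is the A/B (dual-slot) update. The sketch below models one in Python under simplifying assumptions. The image is checked against an expected SHA-256 digest, installed into the inactive slot, and activated. If a hypothetical `health_check` callable fails, the node rolls back to the previous slot. Real systems perform the slot switch in the bootloader. A hash check also proves only integrity; authenticity requires a signature verified against a trusted key.

```python
import hashlib

class ABUpdater:
    """Toy model of an A/B (dual-slot) update with automatic rollback."""

    def __init__(self, version):
        self.slots = {"A": version, "B": None}
        self.active = "A"

    @property
    def running(self):
        return self.slots[self.active]

    def apply_update(self, image, expected_sha256, version, health_check):
        """Return 'rejected', 'committed', or 'rolled_back'."""
        # 1. Integrity check before touching any slot.
        if hashlib.sha256(image).hexdigest() != expected_sha256:
            return "rejected"
        previous = self.active
        target = "B" if previous == "A" else "A"
        # 2. Install into the inactive slot; the running slot is untouched.
        #    (This sketch records only the version, not the image bytes.)
        self.slots[target] = version
        # 3. Switch slots, standing in for a reboot into the new image.
        self.active = target
        # 4. If the post-update health check fails, fall back to the known-good slot.
        if health_check():
            return "committed"
        self.active = previous
        return "rolled_back"

node = ABUpdater("1.4.0")
image = b"firmware-1.5.0"
digest = hashlib.sha256(image).hexdigest()
print(node.apply_update(image, "0" * 64, "1.5.0", lambda: True), node.running)  # rejected 1.4.0
print(node.apply_update(image, digest, "1.5.0", lambda: False), node.running)   # rolled_back 1.4.0
print(node.apply_update(image, digest, "1.5.0", lambda: True), node.running)    # committed 1.5.0
```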

FAQ

What are common edge computing myths?

Several myths persist: that edge always cuts cost, replaces cloud, or automatically improves security. Each claim may be true in specific contexts but not as universal rules—assessment should be case-by-case.

How does edge differ from fog or CDN approaches?

Edge typically refers to compute placed near data sources; fog computing emphasizes distributed layering between devices and cloud; CDNs cache static content closer to users. Each addresses different problems—use case dictates the right approach.

Are there standard best practices for edge security?

Yes. Implement hardware root of trust, secure boot, device identity management, encrypted communication, and centralized patching. Industry standards and bodies provide architecture guidance, such as the ETSI MEC specifications.

Which workloads benefit most from edge deployments?

Latency-sensitive controls, privacy-sensitive data processing, high-bandwidth sensor pre-processing, and situations requiring offline operation are strong candidates for edge placement.
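The criteria in this answer can be turned into a first-pass screening function. The following Python sketch uses a hypothetical `Workload` record. It flags edge placement by comparing measured values (latency budget vs. cloud round trip, data rate vs. uplink) and checking two yes/no requirements. It deliberately ignores cost and manageability, which need a separate TCO analysis.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    name: str
    latency_budget_ms: float    # end-to-end budget the application tolerates
    cloud_rtt_ms: float         # measured round trip to the nearest cloud region
    raw_data_mbps: float        # sustained raw sensor data rate
    uplink_mbps: float          # usable site uplink capacity
    must_run_offline: bool      # must keep working when connectivity drops
    data_must_stay_local: bool  # privacy or data-residency requirement

def edge_reasons(w):
    """Return the hard constraints (possibly none) that favor edge placement."""
    reasons = []
    if w.cloud_rtt_ms >= w.latency_budget_ms:
        reasons.append("latency: cloud round trip alone uses the whole budget")
    if w.raw_data_mbps > w.uplink_mbps:
        reasons.append("bandwidth: raw data rate exceeds the uplink")
    if w.must_run_offline:
        reasons.append("resilience: must operate without connectivity")
    if w.data_must_stay_local:
        reasons.append("privacy/residency: data must stay on site")
    return reasons

camera = Workload("shelf-camera-inference", 50, 80, 40, 20, False, True)
report = Workload("weekly-sales-report", 60_000, 80, 0.1, 20, False, False)
print(edge_reasons(camera))  # latency, bandwidth, and privacy reasons
print(edge_reasons(report))  # []
```

An empty list does not prove the cloud is the better choice. It only means none of these hard constraints forces the workload to the edge.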

How can teams estimate edge vs. cloud total cost of ownership?

Include hardware procurement, site power, maintenance, remote management, personnel, bandwidth reductions, cloud storage/ingest costs, and expected lifecycle replacements. Run a multi-year TCO model rather than comparing capital-only numbers.
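A multi-year model can start as a simple undiscounted sum. This Python sketch assumes per-site annual figures for the cost categories listed above. It charges a full hardware purchase at the start of each hardware lifecycle. All field names and example numbers are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass

@dataclass
class EdgeSiteCosts:
    """Per-site cost inputs (hypothetical categories, any single currency)."""
    hardware: float                 # purchase price of the site's edge hardware
    hardware_life_years: int        # hardware is replaced after this many years
    power_per_year: float           # site power and cooling
    maintenance_per_year: float     # on-site visits, spares
    remote_mgmt_per_year: float     # monitoring and management tooling
    staff_per_year: float           # share of operations personnel
    residual_cloud_per_year: float  # cloud ingest/storage still needed with edge

def edge_tco(c, years):
    """Undiscounted total cost of one edge site over `years` years."""
    total = 0.0
    for year in range(years):
        if year % c.hardware_life_years == 0:
            total += c.hardware  # initial purchase and each lifecycle replacement
        total += (c.power_per_year + c.maintenance_per_year
                  + c.remote_mgmt_per_year + c.staff_per_year
                  + c.residual_cloud_per_year)
    return total

def cloud_only_tco(cloud_per_year, years):
    """Undiscounted cloud-only cost, including full ingest, bandwidth, and storage."""
    return cloud_per_year * years

site = EdgeSiteCosts(hardware=6000, hardware_life_years=4, power_per_year=300,
                     maintenance_per_year=500, remote_mgmt_per_year=400,
                     staff_per_year=1000, residual_cloud_per_year=800)
print(edge_tco(site, 5), cloud_only_tco(5000.0, 5))  # 27000.0 25000.0
```

With these placeholder numbers, the edge site costs 27,000 over five years against 25,000 for cloud-only. The gap comes largely from a full hardware replacement landing in year five. A fuller model would discount future costs and credit residual hardware value at the end of the horizon.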