LLM-Powered Agent Tools for Automation: Practical Guide, Checklist, and Implementation Steps


Introduction

LLM-powered agent tools for automation are changing how organizations design workflows, integrate systems, and scale routine decision-making. This guide explains what these agentic tools do, how they differ from traditional automation, and how to evaluate and implement them in production. It focuses on real-world application, clear checklists, and common trade-offs so that technical and non-technical stakeholders can plan effectively.

What this guide covers: an overview of how LLM-based agents operate, a named checklist (AUTOMATE) for planning and deployment, a short real-world scenario, practical tips, and common mistakes to avoid.

Core cluster questions (for follow-up reading):

- How do LLM agents differ from RPA and scripted automations?

- What are reliable evaluation metrics for autonomous AI agents?

- How can multiple AI agents be orchestrated across a business workflow?

- What safety controls are required when deploying agentic automation?

- When should a company choose a hybrid agent-plus-human workflow?

LLM-powered agent tools for automation: What they are and why they matter

LLM-powered agents combine a large language model (LLM) with tool use, task planning, and external API access to perform multi-step tasks with varying degrees of autonomy. Instead of running a single scripted action, an agent can decompose a goal, query databases, call external services, and produce structured outputs. These tools bridge natural language intent and programmatic systems, enabling new productivity patterns across customer support, data preparation, business intelligence, and operations.
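The plan-act loop described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `plan_next_step` is a hypothetical stand-in for the LLM call, and `lookup_order` and `send_reply` are made-up tools that a real deployment would replace with API or database wrappers.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical tools; real ones would wrap APIs, databases, or services.
def lookup_order(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}

def send_reply(text: str) -> dict:
    return {"sent": True, "text": text}

TOOLS: dict[str, Callable[..., dict]] = {
    "lookup_order": lookup_order,
    "send_reply": send_reply,
}

@dataclass
class Step:
    tool: str
    args: dict

def plan_next_step(goal: str, history: list[dict]) -> Optional[Step]:
    """Stand-in for the LLM planner: returns the next tool call, or None when done."""
    if not history:
        return Step("lookup_order", {"order_id": "A-100"})
    if len(history) == 1:
        status = history[0]["result"]["status"]
        return Step("send_reply", {"text": f"Your order is {status}."})
    return None

def run_agent(goal: str, max_steps: int = 5) -> list[dict]:
    """Ask the planner for a step, run the tool, record the result, repeat."""
    history: list[dict] = []
    for _ in range(max_steps):  # hard cap so a confused planner cannot loop forever
        step = plan_next_step(goal, history)
        if step is None:
            break
        result = TOOLS[step.tool](**step.args)
        history.append({"tool": step.tool, "args": step.args, "result": result})
    return history

trace = run_agent("Tell the customer where order A-100 is")
for entry in trace:
    print(entry["tool"], entry["result"])
```

The `max_steps` cap and the explicit tool registry are design choices in this sketch: the agent can only call what is registered, and it cannot run unbounded.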

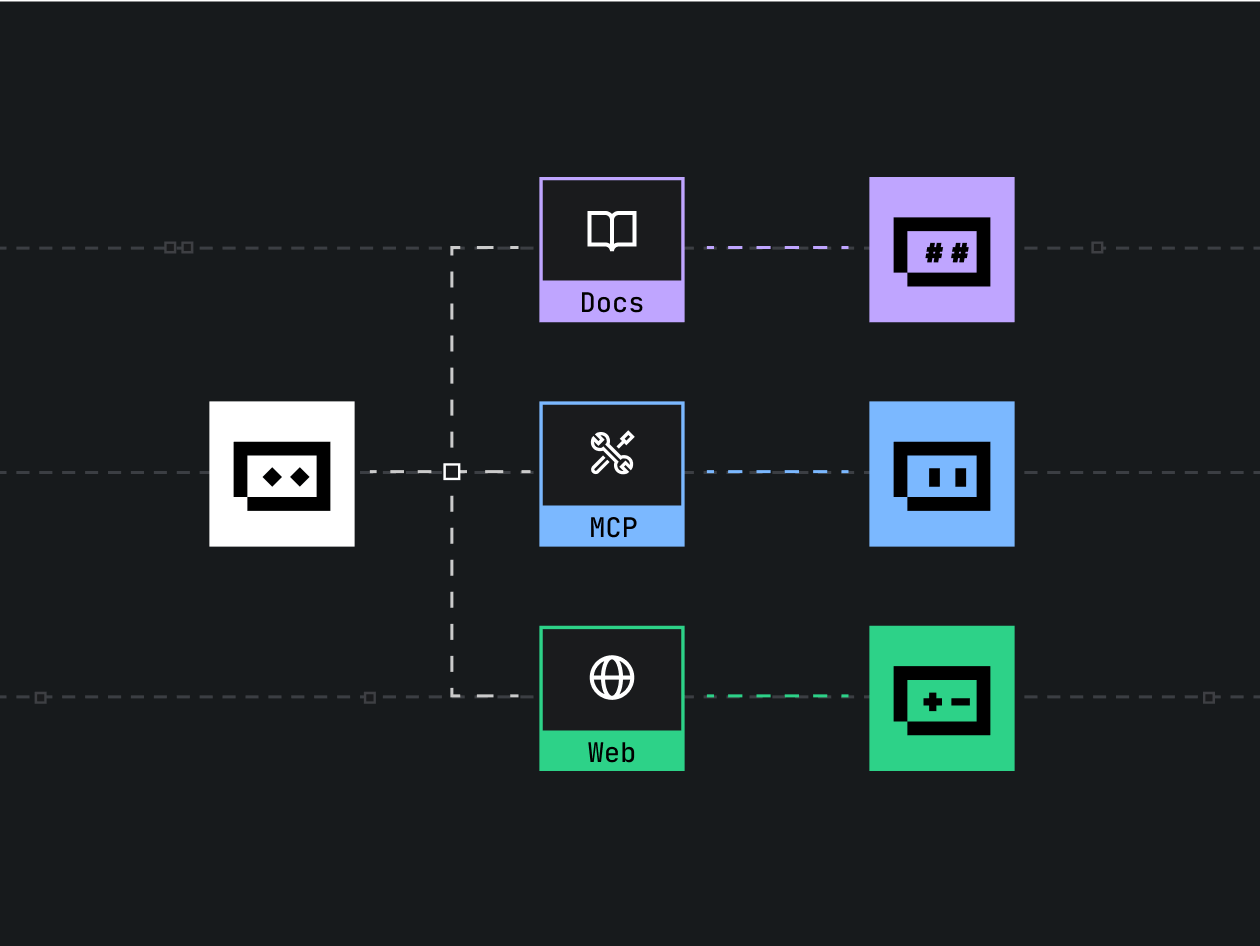

Key components and related terms

- Large language models (LLMs): models that interpret and generate natural language as the reasoning core.

- Tool interfaces: APIs, web browsers, databases, and specialized function calls available to the agent.

- Orchestration layer: the runtime that sequences actions, manages state, and handles retries.

- Prompt engineering and chain-of-thought control: methods to structure agent reasoning.

- Autonomy and guardrails: policies, validation, and human-in-the-loop controls to manage risk.

Autonomous AI agents for business workflows

In business contexts, autonomous AI agents can perform tasks such as triaging support tickets, preparing draft reports from raw data, or coordinating between SaaS systems. The combination of natural language understanding, planning, and API access makes these agents suited to unstructured or partially structured work that was previously done manually or was brittle when scripted.

AUTOMATE checklist: A named framework for evaluating and deploying agents

Use the AUTOMATE checklist to move from idea to production with clear milestones.

- Assess: Identify objectives, success metrics, and stakeholders.

- Use-case fit: Validate that the task benefits from language understanding, multi-step planning, or dynamic tool use.

- Tooling: Select the LLM, connectors, orchestration platform, and observability stack.

- Orchestrate: Design how agents will sequence tasks, call APIs, and hand off to humans.

- Model tuning & prompting: Prepare prompts, examples, and fine-tuning as required.

- Acceptance tests: Define automated tests and human review gates for correctness and safety.

- Telemetry & monitoring: Implement logging, metrics, and error tracking for agents.

- Evolve: Plan iterations based on metrics, user feedback, and changing data.
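The "Acceptance tests" item in the checklist can be made concrete with a small automated gate. The sketch below assumes a hypothetical `triage` function standing in for the agent under test and an illustrative accuracy threshold; the cases and labels are invented for the example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Case:
    ticket: str
    expected: str

def triage(ticket: str) -> str:
    """Stand-in for the agent under test; a real one would call the LLM."""
    text = ticket.lower()
    if "refund" in text:
        return "billing"
    if "password" in text:
        return "account"
    return "general"

def acceptance_gate(cases: list[Case], min_accuracy: float):
    """Return (passed, accuracy, failures) for a labeled test set."""
    failures = [c for c in cases if triage(c.ticket) != c.expected]
    accuracy = 1 - len(failures) / len(cases)
    return accuracy >= min_accuracy, accuracy, failures

CASES = [
    Case("I want a refund for my last invoice", "billing"),
    Case("Cannot reset my password", "account"),
    Case("Where is your office?", "general"),
    Case("Charged twice this month", "billing"),
]

passed, accuracy, failures = acceptance_gate(CASES, min_accuracy=0.9)
print(f"accuracy={accuracy:.2f} passed={passed}")
for case in failures:
    print("needs review:", case.ticket)
```

Returning the failing cases alongside the pass/fail flag gives human reviewers a direct list to inspect before a release is approved.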

Practical implementation steps

Follow these steps to reduce risk and accelerate value:

- Start with a narrow, measurable pilot—pick a task with clear input and output.

- Define acceptance criteria, including accuracy thresholds and allowed failure modes.

- Build an orchestration prototype that limits external access and logs each action for auditability.

- Run a shadow phase where agents suggest actions but humans approve changes.

- Move to staged rollout with automated rollback and human override controls.
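The shadow phase and audit logging from the steps above might look like the following sketch. All names here (`propose_action`, `shadow_step`, the ticket IDs) are hypothetical, and the in-memory list stands in for whatever durable audit store a real system would use.

```python
import json
from datetime import datetime, timezone
from typing import Callable

AUDIT_LOG: list[str] = []  # assumption: a real system would write to durable storage

def log_action(event: str, **details) -> None:
    """Append one timestamped JSON record per agent action."""
    record = {"ts": datetime.now(timezone.utc).isoformat(), "event": event, **details}
    AUDIT_LOG.append(json.dumps(record, sort_keys=True))

def propose_action(ticket_id: str) -> dict:
    """Stand-in for the agent's suggestion."""
    return {"ticket_id": ticket_id, "action": "close", "reason": "duplicate"}

def shadow_step(ticket_id: str, state: dict, approve: Callable[[dict], bool]) -> bool:
    """The agent only suggests; the change is applied only if a human approves."""
    proposal = propose_action(ticket_id)
    log_action("proposed", **proposal)
    if approve(proposal):
        state[ticket_id] = proposal["action"]
        log_action("applied", ticket_id=ticket_id)
        return True
    log_action("rejected", ticket_id=ticket_id)
    return False

state: dict[str, str] = {}
# The lambda simulates a reviewer who rejects the suggestion for ticket T-2.
reviewer = lambda proposal: proposal["ticket_id"] != "T-2"
shadow_step("T-1", state, reviewer)
shadow_step("T-2", state, reviewer)
print(state)
print(len(AUDIT_LOG), "audit records")
```

Rejected proposals are logged as well as applied ones, so the shadow phase produces the correction data needed to judge whether the agent is ready for a staged rollout.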

Real-world example: Automated contract intake and routing

A legal operations team needs to process incoming vendor contracts and route them for review based on clauses, value, and counterparty type. An LLM-powered agent reads the incoming contract text (using document ingestion and OCR tooling), extracts structured metadata (effective date, renewal terms, indemnity clauses), consults a pricing database via API, and proposes a routing decision (legal review, procurement approval, or auto-accept). The initial deployment runs the agent in suggestion mode: a human reviewer approves each routing decision while the system collects correction labels to improve extraction accuracy.
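As an illustration of the routing step in this scenario, the sketch below takes already-extracted metadata and proposes one of the three routes. The field names, the rule order, and the 10,000 threshold are assumptions made for the example; the scenario does not specify the actual routing rules.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ContractMetadata:
    counterparty_type: str      # e.g. "vendor"; hypothetical field
    annual_value: float         # in the organization's base currency
    has_indemnity_clause: bool
    auto_renews: bool

def propose_route(meta: ContractMetadata, auto_accept_limit: float = 10_000.0) -> str:
    """Return a proposed route; a human reviewer still approves it."""
    if meta.has_indemnity_clause:
        return "legal_review"
    if meta.annual_value > auto_accept_limit or meta.auto_renews:
        return "procurement_approval"
    return "auto_accept"

samples = [
    ContractMetadata("vendor", 2_500.0, has_indemnity_clause=False, auto_renews=False),
    ContractMetadata("vendor", 50_000.0, has_indemnity_clause=False, auto_renews=False),
    ContractMetadata("vendor", 2_500.0, has_indemnity_clause=True, auto_renews=True),
]
routes = [propose_route(m) for m in samples]
print(routes)
```

Keeping the routing rules in plain code, separate from the LLM extraction step, means the part of the pipeline that decides what happens next stays deterministic and easy to test.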

AI agent orchestration platforms

Orchestration platforms provide the runtime for action sequencing, retry logic, rate limiting, and secret management. When evaluating these platforms, prioritize robust observability, easy connector development, and clear security boundaries between agents and sensitive systems.
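Retry logic is one of the runtime features mentioned above. A minimal sketch of retry with exponential backoff follows; `TransientError`, `flaky_call`, and the delay values are hypothetical, and an orchestration platform would normally provide its own equivalent.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")

class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""

def call_with_retry(
    fn: Callable[[], T],
    *,
    attempts: int = 3,
    base_delay: float = 0.01,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, doubling the wait after each transient failure."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == attempts:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError("attempts must be at least 1")

calls = {"count": 0}

def flaky_call() -> str:
    """Fails twice, then succeeds, to simulate a temporarily unavailable API."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("service busy")
    return "ok"

delays: list[float] = []
result = call_with_retry(flaky_call, sleep=delays.append)  # record delays instead of sleeping
print(result, calls["count"], delays)
```

Passing `sleep` as a parameter is a testing convenience in this sketch: the backoff schedule can be checked without actually waiting.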

Practical tips for success

- Limit scope initially: pick one workflow and instrument it for measurement.

- Design for explainability: capture the agent’s chain-of-thought and decision reasons in logs.

- Implement human-in-the-loop gates for high-risk decisions and a defined escalation path.

- Use structured prompts and examples rather than long free-form prompts to improve stability.

- Monitor performance drift and retrain or adjust prompts when input distributions change.
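The tip about structured prompts and examples can be illustrated with a prompt builder and a strict parser for the reply. This is a sketch under stated assumptions: the key names, the allowed priorities, and the example tickets are invented, and no particular LLM API is assumed.

```python
import json
from typing import Optional

REQUIRED_KEYS = {"category", "priority"}
ALLOWED_PRIORITIES = {"low", "medium", "high"}

EXAMPLES = [
    ("I was charged twice", {"category": "billing", "priority": "high"}),
    ("How do I change my avatar?", {"category": "account", "priority": "low"}),
]

def build_prompt(ticket: str, examples: list[tuple[str, dict]]) -> str:
    """Assemble a short instruction, worked examples, and the new input."""
    lines = ["Classify the ticket. Reply with JSON containing keys: category, priority."]
    for text, answer in examples:
        lines.append(f"Ticket: {text}")
        lines.append(f"Answer: {json.dumps(answer, sort_keys=True)}")
    lines.append(f"Ticket: {ticket}")
    lines.append("Answer:")
    return "\n".join(lines)

def parse_reply(raw: str) -> Optional[dict]:
    """Return the validated reply, or None if it is malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict) or set(data) != REQUIRED_KEYS:
        return None
    if data["priority"] not in ALLOWED_PRIORITIES:
        return None
    return data

prompt = build_prompt("Card declined", EXAMPLES)
good = parse_reply('{"category": "billing", "priority": "high"}')
bad = parse_reply("Sure, here is the classification you asked for")
print(good, bad)
```

Rejecting malformed replies outright, rather than trying to repair them, gives a clean signal to count: a rising rejection rate is one simple indicator of the drift mentioned in the last tip.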

Trade-offs and common mistakes

LLM-powered agent tools enable flexibility but introduce new trade-offs:

- Reliability vs. flexibility: Agents handle ambiguous tasks but may be less deterministic than scripted automations.

- Observability requirement: The need for detailed logging and explainability increases operational overhead.

- Security surface: Giving an agent API access risks unintended actions if controls are weak.

- Over-automation: Automating tasks that require human judgment can create costly errors—use staged adoption.

Common mistakes include skipping acceptance tests, insufficient monitoring, and poor versioning of prompt templates and connector code.
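One way to address poor versioning of prompt templates is to derive the version from the template text itself, so that any edit produces a new identifier. The sketch below is one possible approach, not a standard; `PromptRegistry` and the template strings are hypothetical.

```python
import hashlib

def template_version(template: str) -> str:
    """Derive a short, stable version id from the template text."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]

class PromptRegistry:
    """Keeps every registered template, keyed by name and content hash."""

    def __init__(self) -> None:
        self._templates: dict[tuple[str, str], str] = {}

    def register(self, name: str, template: str) -> str:
        version = template_version(template)
        self._templates[(name, version)] = template
        return version

    def render(self, name: str, version: str, **values: str) -> str:
        return self._templates[(name, version)].format(**values)

registry = PromptRegistry()
v1 = registry.register("triage", "Classify this ticket: {ticket}")
v2 = registry.register("triage", "Classify this ticket and give a priority: {ticket}")

# Logging the version with each agent run makes outputs traceable to an exact prompt.
prompt = registry.render("triage", v1, ticket="Card declined")
print(v1 != v2, prompt)
```

Because old versions stay in the registry, a rollback is a matter of rendering with the previous version id rather than reconstructing an earlier prompt from memory.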

Governance and standards

Adopt recognized frameworks for risk assessment and model governance. For guidance on responsible AI practices and risk management, consult official resources such as the NIST AI Risk Management Framework for baseline controls and best practices.

Core cluster questions

These related topics are useful for further reading:

- How to compare LLM agents versus traditional RPA for routine tasks

- Checklist for safe API access and credential handling in autonomous agents

- Best practices for testing and validating agent outputs in production

- Design patterns for multi-agent orchestration and communication

- Metrics and alerting strategies for monitoring agent performance

FAQ

What are LLM-powered agent tools for automation and when should they be used?

LLM-powered agent tools combine language models with external tools and orchestrators to perform multi-step tasks. They are best used where tasks involve unstructured inputs, require flexible reasoning, or must adapt to changing contexts—examples include document parsing, search-driven workflows, and multi-system coordination.

How do LLM agents differ from traditional RPA (Robotic Process Automation)?

Traditional RPA automates deterministic GUI or API interactions based on explicit rules. LLM agents add natural language understanding, planning, and dynamic tool selection, enabling them to handle ambiguous or variable inputs that RPA would struggle with.

What safety controls are essential when deploying agentic automation?

Key controls include role-based API scopes, human approval gates for high-impact actions, isolated sandbox environments, detailed action logging, and automated anomaly detection. Acceptance tests and rollback mechanisms are also critical.
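Scoped access, approval gates, and action logging can be combined in a single check that runs before any action executes. The following is a minimal sketch; the scope strings, action names, and the `execute` function are hypothetical and do not correspond to any specific platform.

```python
from dataclasses import dataclass
from typing import Optional

class ActionDenied(Exception):
    """Raised when a guardrail blocks an action."""

@dataclass(frozen=True)
class Action:
    name: str
    required_scope: str
    high_impact: bool = False

ACTION_LOG: list[dict] = []  # assumption: stands in for a durable audit log

def execute(action: Action, granted_scopes: set[str], approved_by: Optional[str] = None) -> str:
    """Check scope first, then human approval, and log every outcome."""
    if action.required_scope not in granted_scopes:
        ACTION_LOG.append({"action": action.name, "outcome": "denied_scope"})
        raise ActionDenied(f"missing scope {action.required_scope!r}")
    if action.high_impact and approved_by is None:
        ACTION_LOG.append({"action": action.name, "outcome": "needs_approval"})
        raise ActionDenied("human approval required")
    ACTION_LOG.append({"action": action.name, "outcome": "executed", "approved_by": approved_by})
    return f"{action.name} executed"

scopes = {"tickets:read", "refunds:write"}
read = Action("read_ticket", "tickets:read")
refund = Action("issue_refund", "refunds:write", high_impact=True)
delete = Action("delete_account", "accounts:admin", high_impact=True)

print(execute(read, scopes))
print(execute(refund, scopes, approved_by="reviewer@example.com"))
for blocked in (refund, delete):
    try:
        execute(blocked, scopes)
    except ActionDenied as exc:
        print(f"{blocked.name}: {exc}")
```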

What metrics should be tracked to evaluate agent performance?

Track accuracy of structured outputs, task completion rate, mean time to resolution, human override frequency, and false positive/negative rates for critical decisions. Also monitor system-level metrics like latency and API error rates.
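Several of these metrics can be computed directly from per-task records. The sketch below assumes a hypothetical `TaskRecord` shape and invented sample data; a production system would read the same fields from its telemetry store.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TaskRecord:
    completed: bool
    overridden: bool           # True if a human changed the agent's decision
    resolution_seconds: float  # only meaningful when completed is True

def summarize(records: list[TaskRecord]) -> dict:
    """Compute completion rate, override rate, and mean time to resolution."""
    total = len(records)
    done = [r for r in records if r.completed]
    mttr = sum(r.resolution_seconds for r in done) / len(done) if done else None
    return {
        "completion_rate": len(done) / total,
        "override_rate": sum(r.overridden for r in records) / total,
        "mean_time_to_resolution": mttr,
    }

records = [
    TaskRecord(completed=True, overridden=False, resolution_seconds=30.0),
    TaskRecord(completed=True, overridden=True, resolution_seconds=90.0),
    TaskRecord(completed=False, overridden=False, resolution_seconds=0.0),
    TaskRecord(completed=True, overridden=False, resolution_seconds=60.0),
]
summary = summarize(records)
print(summary)
```

Mean time to resolution is computed over completed tasks only in this sketch, so unfinished tasks do not pull the average toward zero.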

Can LLM agents replace human decision-makers entirely?

In most organizational contexts, full replacement is not recommended. Agents can augment human operators, reduce routine workload, and improve consistency, but humans should retain oversight for novel, high-risk, or ethical decisions. Hybrid workflows are the safest early path to production.