How ChatGPT Evolved: From GPT-3 Breakthroughs to Next-Generation AI


ChatGPT's evolution has reshaped expectations for conversational artificial intelligence, progressing from the GPT-3 milestone to refined systems that incorporate multimodality, safety tooling, and alignment techniques. This article summarizes key technical advances, practical applications, governance issues, and likely future directions for large language models and conversational agents.

- GPT-3 demonstrated few-shot learning at large scale and popularized transformer-based language models.

- Subsequent versions focused on alignment, safety, multimodal input, and efficiency.

- Techniques such as reinforcement learning from human feedback (RLHF) and fine-tuning improved conversational behavior.

- Regulators and researchers increasingly emphasize transparency, robustness, and responsible deployment.

Evolution of ChatGPT

Milestones from GPT-3 onward

The GPT-3 release (presented in the paper "Language Models are Few-Shot Learners") marked a practical turning point by demonstrating that massively scaled transformer models could perform new tasks from minimal examples. Later iterations introduced better instruction-following, safety mitigations, and multimodal capabilities. Research and engineering efforts combined larger datasets, refined training objectives, and human-in-the-loop methods to improve coherence, factuality, and usability.
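To make "new tasks from minimal examples" concrete, here is a minimal sketch of how a few-shot prompt can be assembled: a handful of demonstration pairs followed by an unanswered query. The function name `build_few_shot_prompt`, the `Input:`/`Output:` labels, and the translation examples are illustrative assumptions, not a format prescribed by the GPT-3 paper or any particular API.

```python
def build_few_shot_prompt(examples, query, instruction=""):
    """Format (input, output) demonstration pairs followed by an unanswered query."""
    lines = []
    if instruction:
        lines.append(instruction)
        lines.append("")
    # Each demonstration shows the model the task by example.
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The final item is left open for the model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical demonstrations for an English-to-French task.
demos = [("cheese", "fromage"), ("house", "maison")]
prompt = build_few_shot_prompt(demos, "book", "Translate English to French.")
print(prompt)
```

No model weights change in this setup; the task is specified entirely through the text of the prompt.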

Key research papers and technical reports on model scaling, transformer architecture, and alignment have appeared on preprint servers and in conference proceedings, and they provide the evidence base for many of these shifts. For the original GPT-3 reference, see the arXiv preprint "Language Models are Few-Shot Learners" (Brown et al., 2020, arXiv:2005.14165).

Technical advances that shaped ChatGPT

Transformer architecture and foundation models

The transformer architecture, introduced by Vaswani et al. (2017) in "Attention Is All You Need", underpins modern language models. Self-attention mechanisms and parallelizable training enabled the development of foundation models: large pretrained networks that are later adapted for many tasks through fine-tuning or prompt-based techniques.
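The self-attention step can be sketched in a few lines of plain Python. This is a simplified illustration of scaled dot-product attention: it assumes a single head and uses the token vectors directly as queries, keys, and values, whereas real transformers first apply learned projection matrices. The function names and the toy vectors are assumptions made for the example.

```python
import math


def softmax(row):
    """Convert raw scores into weights that sum to 1 (shifted for stability)."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy 2-dimensional token vectors (made up for illustration).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
print(weights[0])
```

Each query position is processed independently of the others, which is why the computation parallelizes well across a sequence.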

Scaling laws, parameters, and compute

Empirical scaling laws showed that performance improves predictably, roughly following a power law, as model size, data, and compute increase. These relationships guided investments in larger parameter counts and longer training runs while spurring research into efficiency, sparse models, and distillation to lower inference costs.
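As a rough illustration of how such a relationship can be used, the sketch below fits a single-variable power law, loss = a * N^(-alpha), by linear regression in log-log space and extrapolates to a larger model. The data points are synthetic and the constants `true_a` and `true_alpha` are invented for the example; published scaling-law studies use richer functional forms and real training runs.

```python
import math


def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(a) - alpha * log(size)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(l) for l in losses]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(ys) / count
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, alpha)


# Synthetic measurements generated from an assumed power law (not real data).
true_a, true_alpha = 10.0, 0.08
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [true_a * n ** -true_alpha for n in sizes]

a, alpha = fit_power_law(sizes, losses)
predicted_loss = a * 1e10 ** -alpha  # extrapolate to a 10x larger model
print(f"alpha={alpha:.3f}, predicted loss at 1e10 params={predicted_loss:.3f}")
```

On a log-log plot a power law is a straight line, which is what makes this kind of extrapolation tractable.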

Reinforcement learning from human feedback (RLHF) and alignment

Techniques such as RLHF combine supervised fine-tuning with human preference data to shape behavior and reduce harmful outputs. Alignment research targets robustness, truthfulness, and the mitigation of biases, drawing on human evaluators, adversarial testing, and model audits.
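One common way to turn human preference data into a training signal is a pairwise loss on a reward model: the response the evaluator preferred should score higher than the rejected one. The sketch below shows that loss in isolation, assuming scalar reward scores are already available; the function name and the example scores are hypothetical, and a full RLHF pipeline also includes a policy-optimization stage not shown here.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss -log(sigmoid(r_chosen - r_rejected)), computed stably."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward-model scores for two responses to the same prompt.
agrees_with_human = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_human = preference_loss(reward_chosen=0.0, reward_rejected=2.0)
print(agrees_with_human, disagrees_with_human)
```

The loss is small when the reward model already ranks the preferred response higher and grows as the ranking disagrees with the human label.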

Multimodality, retrieval, and context management

Later systems integrated multimodal inputs (text, images, audio) and external retrieval mechanisms to access up-to-date or domain-specific information. Improved context windows and retrieval-augmented generation address limitations in long-term coherence and factuality.
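The retrieval-augmented pattern can be sketched as two steps: rank stored passages against the query, then place the best matches in the prompt. The example below is a toy version that assumes bag-of-words cosine similarity in place of the learned embeddings and vector indexes that production systems typically use; the function names, prompt wording, and sample passages are all illustrative.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lowercased word counts; punctuation is discarded."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(documents, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Prepend retrieved passages so the model can ground its answer in them."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Use the context to answer.\nContext:\n{context}\nQuestion: {query}\nAnswer:"


# Made-up passages standing in for an external knowledge store.
docs = [
    "The transformer architecture relies on self-attention.",
    "Quantization stores weights in fewer bits.",
    "RLHF uses human preference data.",
]
question = "What does quantization do to weights?"
prompt = build_prompt(question, docs)
print(prompt)
```

Because the passages are supplied at query time, the knowledge store can be updated without retraining the model.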

Applications, limitations, and societal impact

Common use cases

Conversational models are applied to drafting text, coding assistance, customer support, creative writing, tutoring, and accessibility tools. Domain-specific fine-tuning and safety layers are commonly used before deployment in professional settings.

Limitations and known risks

Limitations include hallucinations (fabricated or inaccurate outputs), sensitivity to prompt phrasing, data biases inherited from training corpora, and the potential for misuse. Robust evaluation frameworks and factuality checks are active areas of research to address these challenges.

Governance, standards, and regulation

Regulatory attention on AI systems has increased. Examples include policymaking initiatives in the European Union, such as the EU AI Act, and guidelines from national consumer protection agencies. Research organizations and standards bodies publish best practices for evaluation, transparency, and reporting, and academic reviews contribute evidence on societal impacts.

Future frontiers and research directions

Efficiency, accessibility, and edge deployment

Research aims to reduce compute and energy footprints through quantization, model pruning, and distillation so capable models can run on consumer devices or edge hardware. This trend supports broader access while lowering environmental and economic costs.
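Quantization is the easiest of these techniques to show in miniature. The sketch below applies symmetric per-tensor 8-bit quantization to a short list of weights and measures the round-trip error. It is a simplified illustration under assumed conditions: one shared scale, round-to-nearest, and made-up weight values; practical schemes often use per-channel scales, calibration data, or lower bit widths.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


# Illustrative weights (not taken from any real model).
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, max_error)
```

Each weight then needs one byte instead of four (for 32-bit floats), at the cost of a rounding error bounded by half the scale.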

Improving safety, interpretability, and evaluation

Future work focuses on interpretable architectures, better uncertainty quantification, benchmarks for harmful behavior, and standardized evaluations developed by academic and regulatory communities.

Interoperability and multimodal user experiences

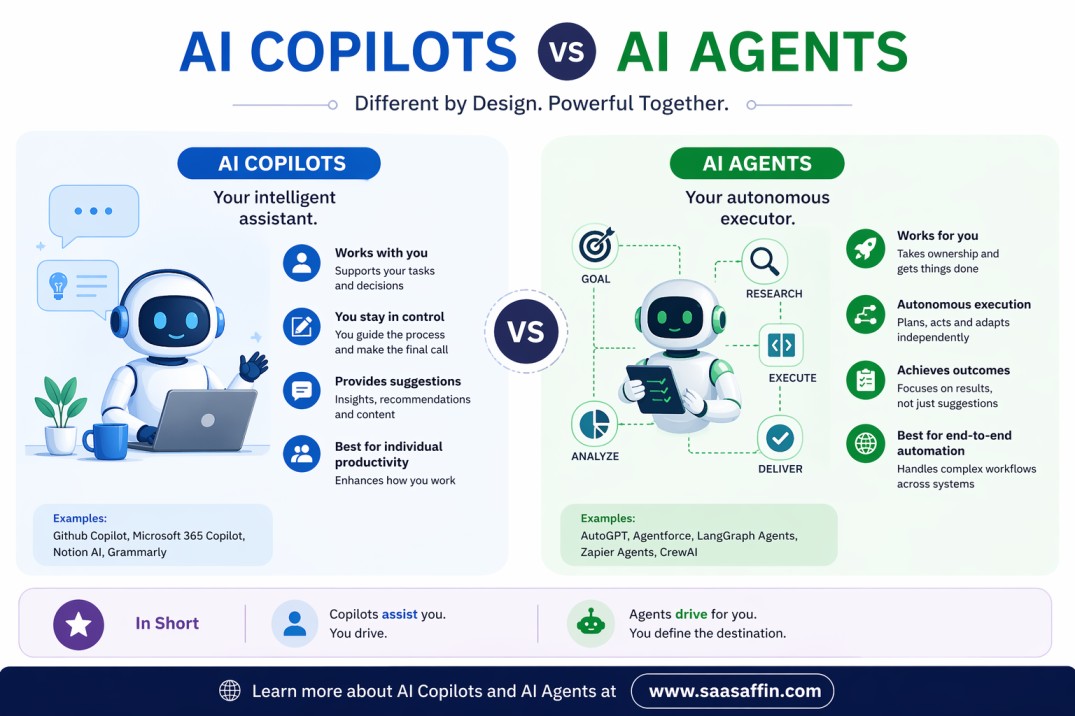

Advances in multimodal reasoning and tool use are expected to create more interactive, context-aware agents that can consult databases, execute code, or manage multimedia content while preserving guardrails for safety and privacy.

Research and reference notes

Foundational literature includes the transformer paper by Vaswani et al., scaling law studies, and model-specific preprints and technical reports deposited in preprint archives or published in conference proceedings. Organizations involved in research and standard-setting include university labs, regional regulators, and international standards bodies.

FAQ

What is the evolution of ChatGPT?

The evolution of ChatGPT began with transformer-based language models like GPT-3, which demonstrated few-shot capabilities at large scale. Subsequent stages added instruction tuning, RLHF for alignment, multimodal inputs, larger context windows, and deployment-focused improvements such as retrieval augmentation and safety filters.

How do techniques like RLHF improve conversational models?

RLHF uses preference data from human evaluators to guide model outputs toward more helpful, less harmful responses. It typically follows supervised fine-tuning and helps reduce undesirable behaviors, although it does not eliminate all failure modes.

What are the main concerns regulators and researchers focus on?

Key concerns include transparency, accountability, data governance, robustness against misuse, bias mitigation, and ensuring models meet applicable safety and privacy standards before deployment in sensitive contexts.