Practical Guide: How NAS Data Archiving Improves Scientific Research Storage


Research teams and institutional IT departments increasingly adopt NAS data archiving to preserve large scientific datasets reliably while keeping files accessible for analysis and reuse. This guide explains how NAS supports scientific archives, lifecycle management, and reproducible research without vendor hype.


How NAS data archiving improves scientific data preservation

Network Attached Storage (NAS) provides POSIX-style file access, native metadata handling, and integrated features such as snapshotting, replication, and automated tiering—capabilities that align with common scientific data archival needs. NAS often acts as the primary on-premises layer in a multi-tier archival architecture, making it easier to apply checksum verification, lifecycle policies, and access controls consistent with institutional requirements like the FAIR principles and records-management standards.

Key questions this guide addresses

- What are the lifecycle stages for research data on NAS?

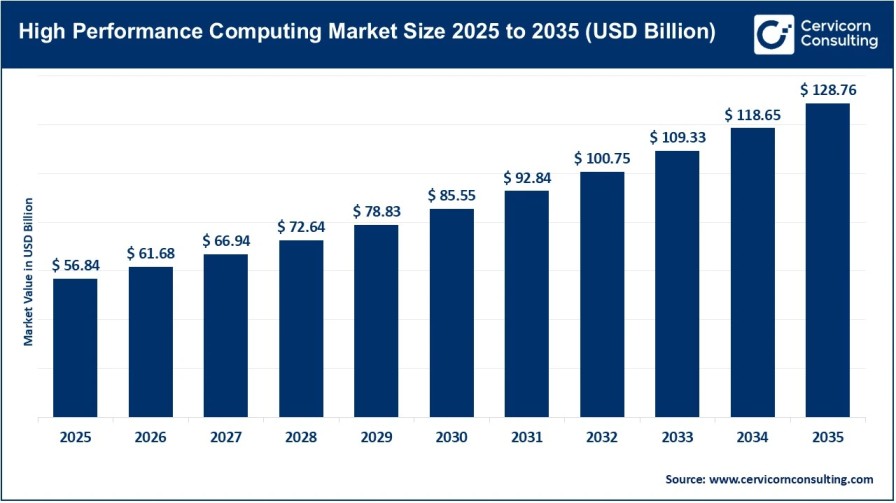

- How to design capacity planning for petabyte-scale NAS environments?

- Which metadata and checksum strategies ensure long-term integrity?

- How to integrate NAS with object storage and tape for cold archives?

- What access control and auditing practices meet research compliance needs?

Key benefits and components

Familiar file access and researcher workflows

NAS uses standard protocols (NFS, SMB) that match existing analysis pipelines and instruments, reducing friction when storing large files such as sequencing runs, microscopy images, or simulation outputs.

Data integrity, metadata, and indexing

Many modern NAS platforms provide built-in checksum validation, extended attributes for metadata, and searchable indexes, although the exact feature set varies by product. Combining these features with institutional metadata schemas improves discoverability and supports reproducibility.

Tiering and lifecycle management

NAS fits in a tiered strategy: hot active storage on NAS, warm object storage (S3-compatible) for medium-term access, and tape or deep cold repositories for long-term retention. Policies can automatically move files based on age, size, or access patterns—integral to scientific data archival strategies.

ARCHIVE checklist (named framework)

Use the ARCHIVE checklist to plan NAS-based archives:

- Assess: quantify data volumes, growth rate, and access patterns.

- Retention: define retention schedules, legal holds, and embargo periods.

- Checksums: implement end-to-end verification and periodic scrubbing.

- Hierarchy: design tiering rules between NAS, object storage, and tape.

- Identifiers & metadata: apply persistent identifiers and rich metadata schemas.

- Verification & monitoring: set up alerts, audit logs, and restore tests.

- Encryption & access: enforce encryption at rest/in transit and role-based access controls.
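
The "Checksums" item in the checklist can be illustrated with a minimal sketch that records a checksum for every file at ingestion and re-verifies the files later. It assumes SHA-256 as the hash and a simple in-memory mapping as the manifest; the function names are hypothetical, and a production archive would typically rely on the NAS platform's own scrubbing in addition to something like this.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Stream a file through SHA-256 so large files never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its checksum at ingestion."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_manifest(root: Path, manifest: dict[str, str]) -> list[str]:
    """Return relative paths that are missing or whose checksum no longer matches."""
    problems = []
    for rel_path, expected in manifest.items():
        target = root / rel_path
        if not target.is_file() or sha256_of(target) != expected:
            problems.append(rel_path)
    return problems
```

Running `verify_manifest` on a schedule, and again after every restore test, gives a simple end-to-end check: an empty list means every file recorded at ingestion is still present and unchanged.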

Choosing network attached storage for research: capacity, protocols, and performance

Design decisions should balance throughput requirements, concurrency, and capacity. High-throughput compute clusters may need NVMe or SSD cache in front of spinning-disk NAS. For archival workloads, prioritize sustained throughput for large sequential reads and efficient metadata operations to support indexing and search.

Real-world scenario

An academic genomics center produces 1 PB of raw sequence data per year. The center deploys NAS as the active archive, with automated policies that move data older than 12 months to an object storage tier and data older than five years to tape. Checksums are computed at ingestion and verified quarterly; a catalog service stores sample metadata and DOIs for published datasets. This keeps restore times short for active projects while limiting tape retrievals to rarely used datasets.
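
The age-based policy in this scenario can be sketched as a small decision function. The 12-month and five-year thresholds come from the scenario; measuring age in days from an ingestion date, and the tier names used here, are assumptions made for illustration rather than how any particular NAS product expresses its rules.

```python
from datetime import date

# Thresholds taken from the scenario; measuring age in days from an
# ingestion date is an assumption made for this sketch.
OBJECT_TIER_AFTER_DAYS = 365       # roughly 12 months
TAPE_TIER_AFTER_DAYS = 5 * 365     # roughly five years


def target_tier(ingested_on: date, today: date) -> str:
    """Return the tier a dataset belongs on, given its age in days."""
    age_days = (today - ingested_on).days
    if age_days > TAPE_TIER_AFTER_DAYS:
        return "tape"
    if age_days > OBJECT_TIER_AFTER_DAYS:
        return "object"
    return "nas"
```

A policy engine that keys on last access time instead of ingestion date would use the same structure with a different input.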

Practical tips for implementing NAS data archiving

- Plan for growth: size storage and metadata databases for multi-year growth, not just current needs.

- Automate verification: enable regular checksum scrubbing and test restores to detect silent corruption.

- Adopt metadata standards: align metadata with community standards (for example, domain-specific schemas) to improve reuse.

- Use lifecycle policies: configure automated tiering to reduce costs and simplify data management.
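
The "Plan for growth" tip can be made concrete with a rough projection. The sketch below assumes a fixed yearly ingest volume that itself grows by a constant rate each year; both the function name and the growth rate are hypothetical inputs, and the result is raw data volume only, before replication or protection overhead.

```python
def project_capacity(current_pb: float, annual_ingest_pb: float,
                     ingest_growth: float, years: int) -> list[float]:
    """Return the projected stored volume (PB) at the end of each year.

    ingest_growth is the yearly increase in ingest as a fraction (0.5 = 50%).
    """
    totals = []
    stored = current_pb
    ingest = annual_ingest_pb
    for _ in range(years):
        stored += ingest
        totals.append(round(stored, 2))
        ingest *= 1 + ingest_growth  # next year's ingest is larger
    return totals
```

For example, starting from an empty archive with 1 PB of ingest in the first year and an assumed 50% yearly increase in ingest, `project_capacity(0.0, 1.0, 0.5, 3)` returns `[1.0, 2.5, 4.75]`.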

Trade-offs and common mistakes

Trade-offs include:

- Cost vs. accessibility: keeping everything on high-performance NAS is fast but expensive; tiering lowers costs but increases restore latency.

- Complexity vs. control: integrated NAS features simplify operations, while bespoke stacks (separate object store, metadata catalog, tape library) offer more flexibility but require more staff expertise.

Common mistakes:

- Underestimating metadata needs—files without searchable metadata are hard to reuse.

- Skipping verification—without checksums and scrubbing, bit rot can go undetected.

- Ignoring access patterns—treating all data the same increases costs and degrades performance.

Standards, compliance, and governance

Align archival policies with recognized frameworks (for example, OAIS concepts) and institutional governance. Refer to community guidance from research data organizations when defining retention, access, and preservation policies. For community best practices and research-data policy guidance, see the Research Data Alliance resource hub.
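
Retention and access rules are easier to audit when they are written down as explicit checks. The following is a minimal sketch of how retention dates, legal holds, and embargo periods might be evaluated for one dataset; the `RetentionRecord` structure and its field names are hypothetical, and actual rules should come from institutional policy.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class RetentionRecord:
    """Hypothetical policy record for one dataset."""
    retain_until: date
    legal_hold: bool = False
    embargo_until: Optional[date] = None


def may_delete(record: RetentionRecord, today: date) -> bool:
    """Deletable only after the retention date has passed and with no legal hold."""
    return not record.legal_hold and today > record.retain_until


def may_publish(record: RetentionRecord, today: date) -> bool:
    """Releasable once any embargo has ended (or if there never was one)."""
    return record.embargo_until is None or today >= record.embargo_until
```

Keeping the legal-hold check separate from the date comparison means a hold blocks deletion regardless of how old the dataset is.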

Integration and automation

Integrate NAS with workflow managers, data catalogs, and authentication services (LDAP, SAML). Use APIs or S3 gateways to allow tools that require object semantics to use NAS-backed archives. Schedule automated lifecycle actions and regular restore drills to validate policies.

How does NAS data archiving support long-term scientific data access?

NAS supports long-term access by providing stable file interfaces, metadata support, checksum verification, and automated tiering to less expensive media. Combined with governance and persistent identifiers, NAS can be the active layer that preserves discoverability and reproducibility.

Can NAS replace object storage or tape for archival needs?

NAS can serve as the active archive but rarely replaces object storage or tape for deep cold storage at scale. Object storage and tape offer cost advantages for infrequently accessed data; NAS is stronger for direct, low-latency file access and integration with analysis workflows.

What metrics should institutions monitor for NAS archives?

Monitor storage growth, ingest rates, read/write throughput, checksum error rates, restore times, and metadata completeness. These metrics indicate health and inform capacity and budget planning.
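
Two of these metrics, metadata completeness and checksum error rate, are simple ratios. The sketch below shows one way to compute them; the list of required fields is a hypothetical minimum schema, and the inputs are assumed to come from a catalog export and a scrub report respectively.

```python
REQUIRED_FIELDS = ("title", "creator", "identifier")  # hypothetical minimum schema


def metadata_completeness(records: list[dict]) -> float:
    """Fraction of records in which every required field is present and non-empty."""
    if not records:
        return 1.0  # nothing to check, so nothing is incomplete
    complete = sum(
        all(str(rec.get(field) or "").strip() for field in REQUIRED_FIELDS)
        for rec in records
    )
    return complete / len(records)


def checksum_error_rate(files_checked: int, mismatches: int) -> float:
    """Mismatches per file verified in a scrub cycle; 0.0 when nothing was checked."""
    return mismatches / files_checked if files_checked else 0.0
```

Tracking both values over time is more informative than a single reading: a falling completeness ratio or a rising error rate points to a process or hardware problem worth investigating.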

How to integrate NAS with cloud or hybrid archival strategies?

Use automated tiering, replication, or S3 gateways to move or copy data to cloud object storage. Evaluate egress costs, access latency, and compliance before moving regulated or embargoed datasets to public cloud providers.

What are common implementation mistakes to avoid when planning NAS data archiving?

Avoid underprovisioning metadata services, failing to implement regular integrity checks, and keeping all data on premium tiers. Plan lifecycle policies from the start and validate restores frequently to ensure archival reliability.