Practical Guide to Runway ML Video Editing: Tools, Workflow, and Tips


Runway ML video editing brings generative AI and neural tools into standard post-production tasks: background removal, inpainting, motion tracking, and creative generative passes. This guide explains what these tools do, how they change common workflows, and which practical steps produce stable, usable results for short-form and long-form projects.

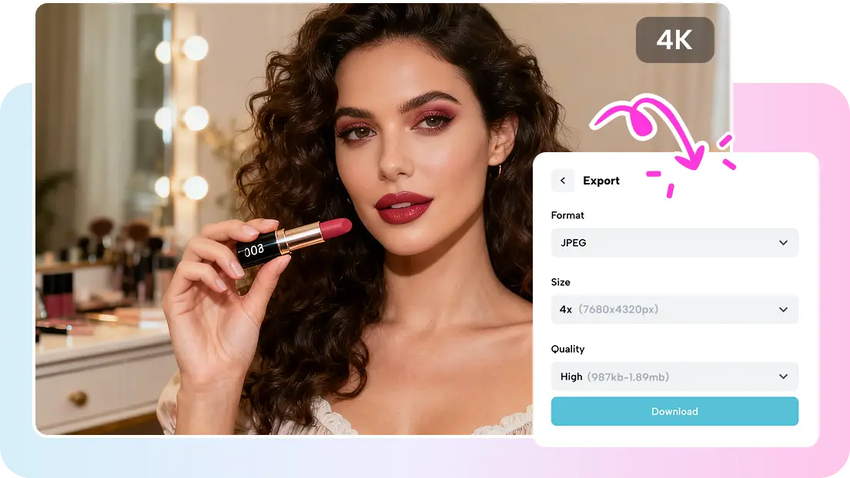

- Key features: generative inpainting, background removal, Gen-2 style transfer, and upscaling.

- Use the EDIT Workflow Checklist for consistent results: Evaluate, Isolate, Define, Test.

- Watch for temporal flicker, data size, and compute cost; test on short proxies before full renders.

Runway ML video editing: core capabilities and where they fit

Runway ML combines model-based operations that address common editing problems: rotoscoping and mask generation, background removal and replacement, frame-aware inpainting (object removal), generative style transfer (e.g., Gen-2), and AI upscaling. These tools map to stages in a standard NLE (non-linear editor) pipeline—prep, cleanup, creative pass, and final delivery—so treat them as part of the same workflow rather than standalone effects.

When to choose AI tools vs. traditional methods

AI video editing tools accelerate tasks that are tedious by hand, like long rotoscoping passes or rebuilding missing frames. They work best when footage is high quality and stable. Traditional methods (manual tracking, keying, hand-painted fixes) still win for absolute control, precise color science, or complicated motion with frequent occlusion. Expect trade-offs: time saved versus potential model artifacts and higher compute/export requirements.

Related terms and technologies

- Rotoscoping, masking, and temporal consistency

- Inpainting and object removal (frame-aware models)

- Style transfer / generative models (Gen-2)

- AI upscaling and frame interpolation

EDIT Workflow Checklist (named framework)

Use the EDIT checklist to bring AI into an established editing pipeline:

- Evaluate — Inspect footage for noise, motion blur, and occlusions; make test clips.

- Isolate — Create clean proxies, separate foreground and background layers, export alpha/mask passes when needed.

- Define — Set objective for each AI pass (remove object, replace background, stylize), and lock parameters for batch processing.

- Test — Render short segments, check temporal coherence, and iterate settings before full export.
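The Define step's advice to lock parameters for batch processing can be sketched in a few lines. This is an illustration only: `PassConfig`, its field names, and the clip file names are hypothetical and do not correspond to any Runway setting or API.

```python
from dataclasses import FrozenInstanceError, asdict, dataclass


@dataclass(frozen=True)
class PassConfig:
    """Settings for one AI pass; frozen so they cannot drift mid-batch."""
    objective: str        # e.g. "remove_object", "replace_background", "stylize"
    mask_feather_px: int  # hypothetical parameter name
    output_height: int    # hypothetical parameter name


def plan_batch(config: PassConfig, clips: list[str]) -> list[dict]:
    """Pair every clip with the same locked settings."""
    return [{"clip": clip, **asdict(config)} for clip in clips]


config = PassConfig(objective="replace_background", mask_feather_px=4, output_height=1080)
jobs = plan_batch(config, ["interview_a.mov", "interview_b.mov"])

try:
    config.mask_feather_px = 8  # any attempt to change a locked value fails
except FrozenInstanceError:
    print("config is locked; create a new PassConfig for a new test")
```

Freezing the settings object means a changed value has to become a new, named configuration, which keeps test renders comparable.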

Practical example: editing an interview clip

Scenario: A 2-minute interview was shot against a visually noisy conference-room background. The goal is a clean studio look with stabilized framing and consistent color.

- Export a 5–10 second proxy segment that includes challenging frames (subject moving, background motion).

- Run a mask-generation pass to isolate the subject; refine masks where auto-masks fail.

- Apply background removal and replace with a neutral plate; use spatially consistent lighting adjustments to match subject to plate.

- Apply a temporal-aware denoise/inpainting pass on problem frames (e.g., sensor noise during motion); check for flicker across cuts.

- Upscale or finish the color grade in the NLE, then export the master at a 10–15% higher bitrate than usual so that encoder compression does not compound artifacts introduced by the AI passes.
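The first and last steps above involve simple, repeatable arithmetic, so they are easy to script. The sketch below assumes ffmpeg is installed and uses hypothetical file names; it only builds the command and the bitrate figure, and the 720-pixel proxy height and CRF value are illustrative choices rather than recommendations from Runway.

```python
def proxy_command(src: str, dst: str, start_s: float, duration_s: float,
                  height: int = 720) -> list[str]:
    """Build an ffmpeg argument list that cuts a short, downscaled proxy."""
    return [
        "ffmpeg",
        "-ss", str(start_s),          # seek to the start of the tricky section
        "-i", src,
        "-t", str(duration_s),        # keep only this many seconds
        "-vf", f"scale=-2:{height}",  # downscale, keep aspect ratio, even width
        "-c:v", "libx264", "-crf", "18",
        "-an",                        # drop audio; the proxy is for picture tests
        dst,
    ]


def master_bitrate_kbps(usual_kbps: int, headroom_pct: int = 10) -> int:
    """Raise the usual master bitrate by a whole-number percentage."""
    return usual_kbps * (100 + headroom_pct) // 100


cmd = proxy_command("interview.mov", "interview_proxy.mp4", start_s=42, duration_s=8)
print(" ".join(cmd))
print(master_bitrate_kbps(50_000, 10), master_bitrate_kbps(50_000, 15))
```

To actually cut the proxy, the list can be passed to `subprocess.run(cmd, check=True)`.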

Runway's official documentation and model descriptions are useful reference points.

Practical tips for reliable results

- Export short, representative proxies before committing to long renders—this makes iteration fast and cheaper.

- Prefer consistent frame rates and locked shutter settings; model artifacts often appear where motion blur varies frame-to-frame.

- Generate and save mask passes; manually refine masks for tricky occlusions rather than relying on a single auto-pass.

- Use GPU-accelerated instances or local hardware where possible; AI effects can be compute- and memory-heavy.

Common mistakes and trade-offs

Expect several common pitfalls when using AI for video editing:

- Over-reliance on automatic masking—models can miss fine details like strands of hair or transparent objects.

- Temporal flicker—single-frame inpainting or inconsistent model outputs can cause flicker across consecutive frames; always test continuity.

- File size and render time—AI passes often increase intermediate file sizes and export times; budget accordingly.

- Color and detail loss—generative passes can alter fine texture; preserve original plates and always compare before-and-after.
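Temporal flicker is easier to catch with a number than by eye alone. The following is a deliberately crude check, not a Runway feature: it assumes frames have already been decoded into rows of luminance values (0 to 255) and flags any frame whose average brightness jumps from the previous one. The threshold is a hypothetical starting value, and genuine cuts or lighting changes will also trigger it.

```python
def mean_luma(frame: list[list[float]]) -> float:
    """Average luminance of one frame given as rows of pixel values."""
    total = sum(sum(row) for row in frame)
    count = sum(len(row) for row in frame)
    return total / count


def flicker_frames(frames: list[list[list[float]]], threshold: float = 8.0) -> list[int]:
    """Return indices of frames whose mean brightness jumps from the previous frame."""
    means = [mean_luma(frame) for frame in frames]
    return [i for i in range(1, len(means))
            if abs(means[i] - means[i - 1]) > threshold]


def flat_frame(value: float, width: int = 4, height: int = 3) -> list[list[float]]:
    """Tiny uniform test frame standing in for real decoded footage."""
    return [[value] * width for _ in range(height)]


clip = [flat_frame(100), flat_frame(101), flat_frame(130), flat_frame(100), flat_frame(100)]
print(flicker_frames(clip))  # [2, 3]: the bright frame and the drop back
```

Flagged indices are candidates for a side-by-side look against the original plate, not proof of a model artifact.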

Output and delivery considerations

Render masters at high bitrate and preserve project files and mask layers. For distribution, test the final deliverable on target devices (streaming, broadcast, mobile) to spot compression interactions. Keep an archive of mask exports and AI input clips to reproduce or re-run passes with updated models.
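One lightweight way to keep that archive reproducible is a manifest that records each file's role, size, and checksum. The sketch below is an assumption about how such a manifest could look; the file names and role labels are hypothetical, and the demo writes tiny stand-in files to a temporary folder so it runs without real footage.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large video files are not loaded into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(folder: Path, roles: dict[str, str]) -> list[dict]:
    """Describe each listed file; `roles` maps file name to its role."""
    entries = []
    for name, role in sorted(roles.items()):
        path = folder / name
        entries.append({"file": name, "role": role,
                        "bytes": path.stat().st_size,
                        "sha256": sha256_of(path)})
    return entries


# Demo with stand-in files in place of real clips and mask exports.
with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    (folder / "interview_input.mov").write_bytes(b"stand-in for source clip")
    (folder / "subject_mask.mov").write_bytes(b"stand-in for mask export")
    manifest = build_manifest(folder, {
        "interview_input.mov": "ai_input",
        "subject_mask.mov": "mask_export",
    })
    (folder / "manifest.json").write_text(json.dumps(manifest, indent=2))

print(json.dumps(manifest, indent=2))
```

When a pass is re-run with an updated model, matching checksums confirm that the inputs and masks are the same ones used originally.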

When to avoid Runway ML tools

Avoid AI passes when absolute fidelity is required (e.g., archival footage restoration where original pixel history matters) or where legal/forensic authenticity is required. For these use cases, manual or specialized restoration workflows remain preferable.

Monitoring results and iteration

Track artifacts over multiple test renders and keep a short change log of parameter settings. If using generative style transfer, lock the random seed or export deterministic parameters to ensure consistent results across renders.
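A log like this can be as simple as one record per test render plus a helper that shows what changed between two runs. The sketch below is illustrative: `RenderNote`, `style_strength`, and `temporal_smoothing` are hypothetical names and are not taken from Runway's interface.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class RenderNote:
    """One test render: the settings used and what was observed."""
    render_id: str
    seed: int
    params: dict
    artifacts: str  # free-text observation


def changed_params(previous: RenderNote, current: RenderNote) -> dict:
    """Return {name: (old, new)} for every setting that differs between two renders."""
    keys = previous.params.keys() | current.params.keys()
    return {key: (previous.params.get(key), current.params.get(key))
            for key in sorted(keys)
            if previous.params.get(key) != current.params.get(key)}


log = [
    RenderNote("test_01", seed=1234,
               params={"style_strength": 0.8, "temporal_smoothing": 0.2},
               artifacts="flicker on hair edge, frames 40-55"),
    RenderNote("test_02", seed=1234,
               params={"style_strength": 0.8, "temporal_smoothing": 0.5},
               artifacts="flicker reduced, slight softening"),
]

print(changed_params(log[0], log[1]))
same_seed = log[0].seed == log[1].seed  # confirms the seed stayed locked
log_json = json.dumps([asdict(note) for note in log], indent=2)
```

Keeping the seed fixed while changing one setting at a time makes it clearer which change caused a difference in the output.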

FAQ

What is Runway ML video editing and what can it do for a project?

Runway ML video editing refers to using Runway's generative and video models for tasks such as background removal, inpainting, rotoscoping, upscaling, and style transfer. These tools speed up repetitive tasks and enable creative passes, but require testing for temporal consistency and artifacts.

How should footage be prepared before running AI passes?

Stabilize and denoise footage where possible, export short proxies for testing, and generate tracking/geometry references. Stable exposure and consistent frame rate produce much more reliable AI outputs.

Can AI replace manual rotoscoping and color grading?

AI reduces manual effort but does not fully replace human oversight. Use AI for initial passes to save time, then refine masks, color, and compositing manually for final quality control.

How can temporal consistency be maintained across long clips?

Use frame-aware models with temporal constraints, lock model seeds, and test on contiguous segments. When flicker appears, adjust temporal smoothing or combine AI passes with manual stabilization and frequency separation techniques.

Which export settings are recommended after AI edits?

Export a high-bitrate master (ProRes or high-bitrate H.264/H.265), preserve alpha/mask layers, and keep intermediate files for re-rendering. Deliver compressed variants only after verifying the master on target platforms.

Practical application of the EDIT checklist—and testing short proxies—delivers the best balance between speed and image quality when using AI video editing tools. Maintain a clear archive of inputs, masks, and parameters to reproduce results or update passes as models evolve.