Software & Application Development Overview: Process, Models, and Practical Checklist

This software and application development overview covers the core concepts, stages, and practical choices teams face when building reliable applications. The goal is to explain what development involves, how to choose an approach, and which practices reduce risk while accelerating delivery.

At a high level, it walks through the phases of the Software Development Life Cycle (SDLC), architecture options, testing and deployment patterns, a named SDLC Checklist, and a short scenario that shows the trade-offs in a real project.

Software and application development overview: core components and lifecycle

Software and application development typically follows a repeatable structure: requirements, architecture and design, implementation, testing, deployment, and maintenance. This sequence is often represented by the Software Development Life Cycle (SDLC). Understanding each phase, the common models (waterfall, iterative, agile), and how they connect to testing, security, and operations reduces surprises during delivery.

The SDLC Checklist

- Define scope and acceptance criteria

- Choose architecture and technology stack

- Create a minimum viable design and prototype

- Implement in small, testable increments

- Automate tests, builds, and deployment pipelines

- Monitor, measure, and iterate after release

Key stages: requirements, design, implementation, and operations

Requirements and product definition

Clear acceptance criteria and prioritized user stories limit rework. For regulated environments, include compliance and audit requirements up front. Reference standards and industry guidance (for web accessibility, security, and APIs) when writing requirements.

Architecture and design

Decisions here shape performance, maintainability, and cost. Common architectures include monoliths, modular services, and microservices. The C4 model and component diagrams help document architecture at the right level for developers and stakeholders.

Implementation and testing

Implement in small increments and apply a testing pyramid: many fast unit tests at the base, fewer integration tests above them, and a small number of end-to-end tests at the top. Continuous integration (CI) that runs automated tests on every change catches regressions early, before they reach users.
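
As an illustration of the base of the pyramid, here is a minimal sketch of a pure function with unit tests written in the plain `test_*` style that a runner such as pytest collects. The function `slots_overlap` and the booking scenario are hypothetical, chosen only to show how small and fast this layer of tests can be.

```python
from datetime import datetime, timedelta


def slots_overlap(start_a, end_a, start_b, end_b):
    """Return True if two half-open intervals [start, end) overlap.

    Hypothetical helper for illustration; not part of any real library.
    """
    return start_a < end_b and start_b < end_a


NINE = datetime(2024, 1, 15, 9, 0)
HOUR = timedelta(hours=1)


def test_overlapping_slots_conflict():
    # 09:00-10:00 and 09:30-10:30 share half an hour.
    assert slots_overlap(NINE, NINE + HOUR, NINE + HOUR / 2, NINE + HOUR * 1.5)


def test_back_to_back_slots_do_not_conflict():
    # 09:00-10:00 and 10:00-11:00 touch but do not overlap.
    assert not slots_overlap(NINE, NINE + HOUR, NINE + HOUR, NINE + 2 * HOUR)


def test_overlap_is_symmetric():
    a = (NINE, NINE + 2 * HOUR)
    b = (NINE + HOUR, NINE + 3 * HOUR)
    assert slots_overlap(*a, *b) == slots_overlap(*b, *a)
```

Tests like these need no database, network, or UI, which is why they can run on every commit. Integration and end-to-end tests would sit above them and exercise real dependencies.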

Deployment and operations

Deployment models range from single-server releases to container orchestration and serverless functions. Operational practices—logging, metrics, error tracking, and runbooks—ensure systems stay observable and recoverable in production.
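
One way to make logs easier to search and alert on is to emit them as structured records. The sketch below uses only Python's standard `logging` and `json` modules. The names `JsonFormatter` and `build_logger`, and the choice of fields, are assumptions made for illustration rather than a prescribed format.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Include the traceback text so error tracking can group failures.
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name: str, stream) -> logging.Logger:
    """Create a logger that writes JSON lines to the given stream."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False      # avoid duplicate output via the root logger
    logger.handlers.clear()       # keep repeated calls from stacking handlers
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


# Demo: write to an in-memory buffer instead of stderr.
buffer = io.StringIO()
log = build_logger("app", buffer)
log.info("request handled in %d ms", 42)
```

In production the stream would typically be stdout or stderr so that the platform's log collector can pick the lines up. The in-memory buffer here just keeps the example self-contained.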

Comparing approaches: agile, iterative, and plan-driven trade-offs

Different projects benefit from different approaches. Agile emphasizes frequent delivery and change tolerance; plan-driven (waterfall) emphasizes upfront certainty. Use the following trade-offs to choose an approach:

- Speed vs. predictability: Agile delivers faster feedback; waterfall offers clearer early estimates.

- Flexibility vs. compliance: Iterative models accommodate evolving scope; regulated projects may require heavier upfront documentation.

- Team size and distribution: Architecture interacts with process. Microservices add operational complexity but let large, distributed teams build and release independently.

Common mistakes when choosing a model

- Assuming one model fits all projects—adapt process to product risk, regulatory needs, and team skills.

- Skipping architecture thinking because of tight timelines—technical debt compounds quickly.

- Underinvesting in testing and automation—manual gates slow delivery and increase defects.

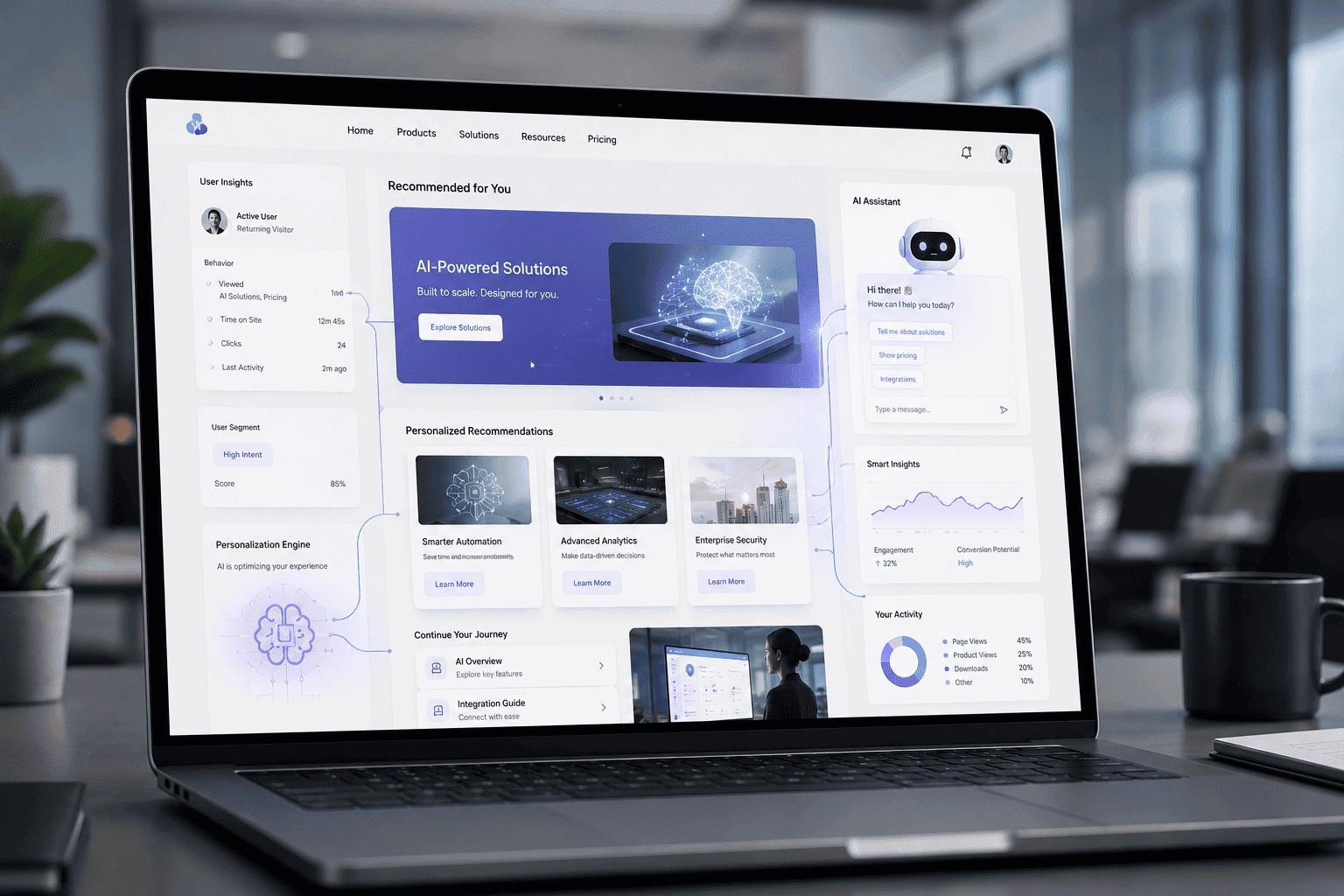

Design and delivery patterns for web and mobile projects

When building web and mobile applications, combine frontend patterns (single-page apps, progressive web apps) with backend choices (server-rendered, API-first) and deployment approaches (containers, serverless). Choose based on user expectations, offline needs, and device constraints.

Security and compliance

Integrate security into each SDLC phase (shift-left). Threat modeling, secure coding practices, dependency scanning, and runtime protections reduce exposure. Follow security baselines and secure development recommendations from recognized industry groups and standards organizations, such as the OWASP Top 10 and the NIST Secure Software Development Framework.

For web standards and best-practice references, see the W3C standards overview.

Practical tips for reliable delivery

- Automate the build and test pipeline: ensure every commit triggers CI and a test suite that gates merging.

- Define a lightweight, living architecture document: update it alongside code to keep design and implementation aligned.

- Use feature flags for safe rollouts: decouple code deployment from feature exposure to limit blast radius.

- Measure key indicators: deployment frequency, lead time for changes, mean time to recovery (MTTR), and defect escape rate.
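
The feature-flag tip above can be sketched as a percentage rollout. This is a minimal illustration, assuming flags are identified by name and users by a string ID. The functions `rollout_bucket` and `is_enabled` are hypothetical and not taken from any particular flag service.

```python
import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0-99.

    Hashing keeps the assignment deterministic, so a given user sees the
    same behavior on every request without storing per-user state.
    """
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int) -> bool:
    """Expose the feature to roughly rollout_percent of users."""
    return rollout_bucket(flag_name, user_id) < rollout_percent


def render_checkout(user_id: str, rollout_percent: int) -> str:
    # The new code path is deployed but only reachable behind the flag.
    if is_enabled("new-checkout", user_id, rollout_percent):
        return "new checkout"
    return "current checkout"
```

Raising `rollout_percent` from 0 toward 100 widens exposure without a new deployment, and setting it back to 0 acts as a kill switch. Including the flag name in the hash means different flags select different user cohorts.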

Real-world example: building an appointment-booking app

A team of four needs to build a mobile appointment booking app in three months. Using the SDLC Checklist, the team defines minimal features (booking, calendar sync, notifications), picks an API-first approach, and chooses a single-page web admin and native mobile apps. To reduce risk, the team delivers an MVP in iterative sprints, automates unit and integration tests, and deploys initially to a small user cohort behind feature flags. The team trades some architectural generality for speed by starting with a single backend service and migrating to microservices later when usage and complexity justify it.

Common mistakes and trade-offs

Common mistakes include prioritizing features over maintainability, ignoring observability until production, and not investing in automated testing. Trade-offs often require explicit discussion: for example, investing time in modular architecture increases short-term delivery cost but reduces long-term maintenance effort. Document trade-offs in the project charter so future teams understand why decisions were made.

Related practices and models

Terms and models to explore further: SDLC, CI/CD, feature flags, the C4 model, MVP, test automation, and agile practices. Together they form a practical toolbox for teams of any size.

Next steps for teams starting a project

- Run a short discovery to validate assumptions and define acceptance criteria.

- Create the SDLC Checklist items for the first two releases and assign owners.

- Set up CI and basic monitoring before the first public release.
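
As a rough sketch of the second step, the SDLC Checklist can be kept as simple structured data so that items without an owner are easy to spot. The class `ChecklistItem`, the helper functions, and the owner name are hypothetical. The step names come from the checklist earlier in this article.

```python
from dataclasses import dataclass
from typing import List, Optional

CHECKLIST_STEPS = [
    "Define scope and acceptance criteria",
    "Choose architecture and technology stack",
    "Create a minimum viable design and prototype",
    "Implement in small, testable increments",
    "Automate tests, builds, and deployment pipelines",
    "Monitor, measure, and iterate after release",
]


@dataclass
class ChecklistItem:
    step: str
    release: int
    owner: Optional[str] = None   # None means nobody is accountable yet
    done: bool = False


def build_checklist(releases: List[int]) -> List[ChecklistItem]:
    """Create one item per checklist step for each planned release."""
    return [ChecklistItem(step, release)
            for release in releases
            for step in CHECKLIST_STEPS]


def unowned(items: List[ChecklistItem]) -> List[ChecklistItem]:
    """Return the items that still need an owner."""
    return [item for item in items if item.owner is None]


# Plan the first two releases and assign one owner as an example.
checklist = build_checklist([1, 2])
checklist[0].owner = "product lead"   # hypothetical role name
```

A spreadsheet or issue tracker serves the same purpose. The point is that every item has exactly one named owner before work starts.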

What is a software and application development overview?

A software and application development overview summarizes the lifecycle stages, common architectures, delivery practices, and trade-offs involved in producing and operating software products. It helps teams align on process, scope, and priorities before committing to implementation.

How do software development lifecycle phases differ from each other?

Phases differ by primary focus and outputs: requirements create the product definition, design produces architecture and plans, implementation delivers working code, testing verifies behavior, deployment releases the product, and maintenance handles change and incident resolution.

When should a team choose agile software development practices?

Agile is a good fit when requirements are expected to evolve, rapid feedback is valuable, and the team can deliver in short iterations. It is less suitable when strict regulatory documentation and fixed-scope contracts demand heavy upfront planning.

What are common metrics to track for application health?

Track deployment frequency, lead time for changes, change failure rate, MTTR, error rates, latency, and user-facing metrics such as conversion or retention. These metrics connect development efficiency with user experience and reliability.
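
The delivery-side metrics can be computed from a simple deployment log. The sketch below assumes each deployment record carries a commit time, a deploy time, a failure flag, and a restore time. The `Deployment` class and `delivery_metrics` function are hypothetical, and recovery time is measured from deployment to restoration, which is a simplification.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import List, Optional


@dataclass
class Deployment:
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None   # set once service is restored


def delivery_metrics(deployments: List[Deployment], period_days: int) -> dict:
    """Summarize deployment frequency, lead time, failure rate, and MTTR."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_hours = [(d.deployed_at - d.committed_at).total_seconds() / 3600
                  for d in deployments]
    failures = [d for d in deployments if d.failed]
    recovery_hours = [(d.restored_at - d.deployed_at).total_seconds() / 3600
                      for d in failures if d.restored_at is not None]

    return {
        "deployments_per_day": len(deployments) / period_days,
        "mean_lead_time_hours": mean(lead_hours),
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has been restored yet.
        "mttr_hours": mean(recovery_hours) if recovery_hours else None,
    }
```

Runtime metrics such as error rate and latency usually come from the monitoring system rather than the deployment log, so they are left out of this sketch.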

How should teams approach web and mobile application development costs?

Estimate costs across development, infrastructure, and ongoing operations. Early-stage projects often benefit from building an MVP to validate demand before investing in performance optimizations or complex architectures that increase long-term cost.