How Video Streaming Servers Power Reliable Content Delivery: Architecture, Roles, and Best Practices

How video streaming servers deliver reliable video at scale

Understanding video streaming servers is essential when planning reliable playback for audiences across locations and devices. This guide explains what video streaming servers do, the typical server types and architecture patterns, and practical steps to design or evaluate a streaming delivery chain. Along the way, it shows how each server role contributes to availability, latency, and quality.

- Video streaming servers include origin, transcoding, packager, CDN/edge, and manifest servers; each has a specific role.

- Key trade-offs are latency vs. stability, cost vs. reach, and complexity vs. flexibility.

- Follow the STREAMS checklist to design or audit a streaming architecture.

Video Streaming Servers: Roles and architecture

At a high level, video streaming servers form a pipeline that moves media from capture or storage to playback. The pipeline commonly includes origin servers (storage and web serving), transcoding/transmuxing servers (format and bitrate conversion), packager/manifest servers (HLS, DASH), and edge servers that cache segments close to viewers. CDN and edge servers reduce both viewer-facing latency and the load on origin infrastructure.

Common server types and responsibilities

- Origin server: stores master files and serves initial requests. Responsible for durability and origin access control.

- Transcoding/transmuxing: converts source video into multiple bitrates and codecs for adaptive streaming.

- Packager/manifest server: creates HLS/DASH manifests and segments for playback clients.

- Edge/CDN servers: cache segments and serve them from locations closer to viewers to reduce hop count and improve throughput.

- Live ingest and origin clusters: accept live streams (RTMP, SRT, WebRTC) and hand off to transcoders and packagers.

- Monitoring and analytics: collect QoS metrics, error rates, and viewer experience data.
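As a rough illustration of the packager/manifest role listed above, the sketch below builds an HLS multivariant (master) playlist from a bitrate ladder. The `Rendition` class, the `build_master_playlist` function, the ladder values, and the `<name>/index.m3u8` path layout are assumptions made for this example; only the `#EXTM3U`, `#EXT-X-VERSION`, and `#EXT-X-STREAM-INF` tags come from the HLS format itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rendition:
    """One rung of a bitrate ladder (all values here are illustrative)."""
    name: str        # hypothetical variant label, also used as a directory name
    bandwidth: int   # peak bits per second advertised to the player
    width: int
    height: int


def build_master_playlist(renditions):
    """Return the text of an HLS multivariant playlist, lowest bitrate first."""
    lines = ["#EXTM3U", "#EXT-X-VERSION:3"]
    for r in sorted(renditions, key=lambda r: r.bandwidth):
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={r.bandwidth},"
            f"RESOLUTION={r.width}x{r.height}"
        )
        # Assumed layout: each variant has its own media playlist.
        lines.append(f"{r.name}/index.m3u8")
    return "\n".join(lines) + "\n"


ladder = [
    Rendition("1080p", 6_000_000, 1920, 1080),
    Rendition("720p", 3_000_000, 1280, 720),
    Rendition("360p", 800_000, 640, 360),
]
print(build_master_playlist(ladder))
```

A player reads this playlist first, then picks one variant playlist at a time based on measured throughput, which is what makes adaptive streaming possible.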

Edge servers for streaming and content delivery network servers

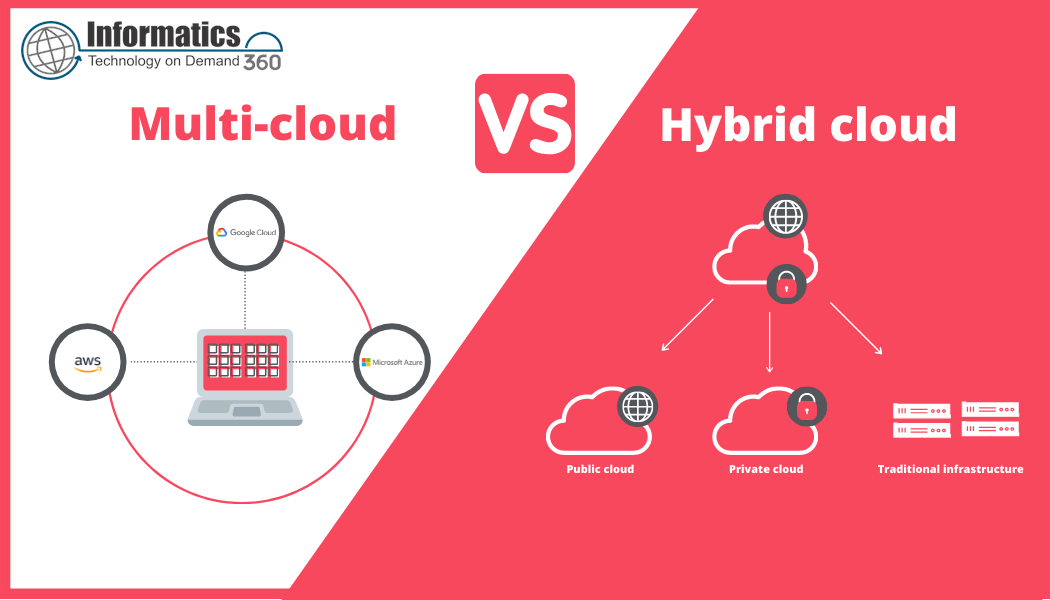

Edge servers cache frequently requested segments and reduce origin load. Content delivery network servers operate a distributed edge layer, often with global POPs, routing, and DDoS protection. For large-scale delivery, using distributed caching and intelligent routing is standard practice; combining multiple CDNs (multi-CDN) can improve resilience and global performance.
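To make the origin-offload effect concrete, here is a minimal sketch of a TTL-based edge cache. It is a toy model, not how any particular CDN is implemented: the `EdgeCache` class, its parameters, and the injected `clock` are all assumptions for illustration, and real edges also handle eviction, request collapsing, and cache-control headers.

```python
import time


class EdgeCache:
    """Toy edge cache: serves from memory until an entry's TTL expires."""

    def __init__(self, fetch_from_origin, ttl_seconds, clock=time.monotonic):
        self._fetch = fetch_from_origin   # callable(key) -> bytes
        self._ttl = ttl_seconds
        self._clock = clock               # injectable so tests can control time
        self._store = {}                  # key -> (expires_at, body)
        self.hits = 0
        self.misses = 0

    def get(self, key):
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: go back to the origin and refresh the cache.
        self.misses += 1
        body = self._fetch(key)
        self._store[key] = (now + self._ttl, body)
        return body

    @property
    def hit_ratio(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

If 1,000 viewers behind one edge request the same segment within its TTL, this model sends a single request to the origin and serves the other 999 from cache.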

Design checklist: the STREAMS framework

Use the STREAMS checklist to evaluate or design a streaming deployment. STREAMS is an acronym that captures core areas to inspect:

- Server types mapped (origin, transcoder, packager, edge)

- Transcoding strategy (codecs, bitrate ladders, hardware vs. software)

- Reliability (auto-scaling, redundancy, multi-CDN)

- Edge placement (POP locations, TTL settings, cache keys)

- Adaptive bitrate setup (HLS, DASH, ABR rules)

- Monitoring & metrics (startup time, rebuffering, error ratios)

- Security & compliance (DRM, token auth, geo-restrictions)

Practical example: live sports stream with sudden demand spike

A regional broadcaster streams a live sports final expecting 50,000 concurrent viewers but faces a viral surge to 600,000. An origin-only setup overloads quickly, because every segment request lands on the same servers. A resilient architecture routes ingest to a cluster of live encoders, pushes fragmented MP4 segments to origin storage, and relies on multiple CDN POPs where edge servers cache those segments. Auto-scaling keeps enough transcoding instances running to produce every rung of the bitrate ladder, and a health-aware load balancer shifts traffic between CDNs. When monitoring dashboards surface rebuffer spikes, operators apply a temporary bitrate cap to reduce bandwidth demand and keep playback stable for latency-sensitive viewers.
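A back-of-the-envelope calculation shows why the surge in this example matters. The viewer counts come from the scenario; the 4 Mbps average bitrate, the 99% byte hit ratio, and the 2.5 Mbps cap are hypothetical figures chosen only to make the arithmetic concrete.

```python
def egress_gbps(viewers, avg_bitrate_mbps):
    """Total delivery bandwidth in Gbps for a given audience and average bitrate."""
    return viewers * avg_bitrate_mbps / 1000


def origin_gbps(total_gbps, byte_hit_ratio):
    """Share of the delivery bandwidth that misses the edge and reaches the origin."""
    return total_gbps * (1 - byte_hit_ratio)


planned = egress_gbps(50_000, 4.0)     # 200 Gbps at the expected audience
surge = egress_gbps(600_000, 4.0)      # 2,400 Gbps at the viral peak
at_origin = origin_gbps(surge, 0.99)   # roughly 24 Gbps still reaches the origin
capped = egress_gbps(600_000, 2.5)     # 1,500 Gbps with a temporary bitrate cap

print(f"planned={planned:.0f} surge={surge:.0f} "
      f"origin={at_origin:.0f} capped={capped:.0f} (Gbps)")
```

Under these assumptions, the surge multiplies delivery bandwidth twelvefold, edge caching absorbs all but about 1% of it, and a bitrate cap trims total demand by more than a third.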

Practical tips for production-grade delivery

- Use adaptive bitrate streaming and a well-chosen bitrate ladder to balance quality with buffer resilience.

- Design cache keys around stable identifiers such as the manifest, variant, and segment, and keep per-session tokens out of the key (validate them at the edge instead) so that all viewers of a segment share one cached copy.

- Implement multi-region origins or read-replicas to lower origin latency for geographically diverse viewers.

- Leverage health checks and autoscaling for transcoding and ingest services—transcoding is CPU/GPU intensive and needs headroom.

- Instrument end-to-end telemetry (player, edge, origin) to correlate viewer QoE with infrastructure events.
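One way to read the cache-key tip above is that per-session query parameters are validated but left out of the key, so every viewer of a segment maps to the same cached object. The sketch below shows that idea with Python's standard `urllib.parse`; the parameter names in `SESSION_PARAMS`, the `cache_key` function, and the example URLs are hypothetical, and real CDNs expose this through their own configuration rather than application code.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Hypothetical names for per-session parameters that must not split the cache.
SESSION_PARAMS = {"token", "expires", "session_id"}


def cache_key(url):
    """Build a cache key from the path plus non-session query parameters."""
    parts = urlsplit(url)
    kept = sorted(
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name not in SESSION_PARAMS
    )
    query = urlencode(kept)
    return parts.path + ("?" + query if query else "")


a = cache_key("https://cdn.example.com/live/final/720p/seg_00042.m4s?token=abc&expires=100")
b = cache_key("https://cdn.example.com/live/final/720p/seg_00042.m4s?token=xyz&expires=200")
assert a == b == "/live/final/720p/seg_00042.m4s"
```

Sorting the remaining parameters keeps the key stable when clients send the same parameters in a different order.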

Common mistakes and trade-offs

Design choices require clear trade-offs:

- Cache lifetime vs. freshness: Long TTLs reduce origin load but can delay content updates or ad-targeting changes.

- Cost vs. latency: More edge POPs reduce latency but increase CDN and operational cost.

- Complexity vs. flexibility: Multi-CDN and multi-region origins improve resilience but add routing complexity and debugging overhead.
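Part of the routing complexity mentioned above is deciding, per request or per session, which CDN should serve a viewer. The following is a minimal sketch of health-aware weighted selection; the `CdnStatus` fields, the 2% error threshold, and the fallback rule are assumptions for illustration, not a description of any commercial traffic-steering product.

```python
import random
from dataclasses import dataclass


@dataclass
class CdnStatus:
    name: str
    weight: float        # intended traffic share while healthy (must be > 0)
    error_rate: float    # recent fraction of 5xx responses
    healthy: bool = True # result of an external health check


def eligible(cdns, max_error_rate=0.02):
    """CDNs that pass the health check and stay under the error threshold."""
    return [c for c in cdns if c.healthy and c.error_rate <= max_error_rate]


def pick_cdn(cdns, rng=random, max_error_rate=0.02):
    """Weighted random choice among eligible CDNs, with a least-bad fallback."""
    candidates = eligible(cdns, max_error_rate)
    if not candidates:
        # Nothing qualifies: serve from the lowest error rate rather than fail.
        return min(cdns, key=lambda c: c.error_rate)
    weights = [c.weight for c in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]


cdns = [
    CdnStatus("cdn-a", weight=0.7, error_rate=0.001),
    CdnStatus("cdn-b", weight=0.3, error_rate=0.001, healthy=False),
]
print(pick_cdn(cdns).name)  # cdn-b is unhealthy, so only cdn-a is eligible
```

Even this small version has three tunable inputs (weights, thresholds, fallback policy), which hints at the debugging overhead a production multi-CDN setup can add.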

Operational best practices and standards

Protocols and standards influence server behavior. For example, HTTP/3 runs over QUIC, which reduces connection setup time and avoids the transport-level head-of-line blocking of HTTP/2 over TCP, benefiting interactive and low-latency streaming. For details on QUIC and transport-layer behavior, see the IETF QUIC specification.

IETF RFC 9000 — QUIC: A UDP-Based Multiplexed and Secure Transport
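The connection-setup saving can be estimated by counting handshake round trips before the first HTTP request can be sent. The counts below assume full handshakes with no TCP Fast Open or TLS False Start, and the 80 ms round-trip time is a hypothetical value for a distant viewer.

```python
# Round trips completed before the client can send its first HTTP request.
HANDSHAKE_ROUND_TRIPS = {
    "TCP + TLS 1.2": 3,            # 1 for TCP, 2 for the TLS 1.2 handshake
    "TCP + TLS 1.3": 2,            # 1 for TCP, 1 for the TLS 1.3 handshake
    "QUIC (first connection)": 1,  # transport and TLS 1.3 handshake combined
    "QUIC (0-RTT resumption)": 0,  # request travels with the first flight
}


def setup_delay_ms(rtt_ms, round_trips):
    """Time spent on handshakes before the first request leaves the client."""
    return rtt_ms * round_trips


for stack, trips in HANDSHAKE_ROUND_TRIPS.items():
    print(f"{stack}: {setup_delay_ms(80, trips)} ms before first request")
```

For a player that opens new connections when switching CDNs or networks, saving one or two round trips per connection feeds directly into startup time.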

Monitoring checklist

- Track startup time, time to first frame, rebuffer ratio, and bitrate switches.

- Monitor origin and edge error rates (5xx/4xx) and cache hit ratios.

- Alert on transcoder queue depth and ingestion latency.
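The checklist above can be turned into simple threshold checks. The sketch below is one possible shape: the rebuffer-ratio definition (stall time divided by stall plus play time) is a common convention rather than a standard, and every metric name and limit in `THRESHOLDS` is a hypothetical starting point that would need tuning per service.

```python
def rebuffer_ratio(stall_seconds, play_seconds):
    """Fraction of a session spent stalled (assumed definition, see lead-in)."""
    total = stall_seconds + play_seconds
    return stall_seconds / total if total else 0.0


# metric name -> (kind, limit); "max" alerts above the limit, "min" below it.
THRESHOLDS = {
    "rebuffer_ratio": ("max", 0.01),
    "startup_seconds_p95": ("max", 3.0),
    "edge_5xx_rate": ("max", 0.005),
    "cache_hit_ratio": ("min", 0.90),
    "transcoder_queue_depth": ("max", 20),
}


def breached(metrics, thresholds=THRESHOLDS):
    """Return the names of reported metrics that violate their threshold."""
    alerts = []
    for name, (kind, limit) in thresholds.items():
        if name not in metrics:
            continue  # metric not reported in this interval
        value = metrics[name]
        if (kind == "max" and value > limit) or (kind == "min" and value < limit):
            alerts.append(name)
    return alerts


sample = {
    "rebuffer_ratio": rebuffer_ratio(stall_seconds=18, play_seconds=582),
    "cache_hit_ratio": 0.95,
    "transcoder_queue_depth": 50,
}
print(breached(sample))
```

In this sample, 18 seconds of stalling in a 600-second session gives a 3% rebuffer ratio, so that metric and the transcoder queue depth both exceed their assumed limits while the cache hit ratio does not.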

Related questions

- How does adaptive bitrate streaming work end-to-end?

- What differences exist between live and on-demand server pipelines?

- How should a multi-CDN strategy be configured for failover?

- What are best practices for secure token-based access to stream segments?

- How to measure and improve viewer Quality of Experience (QoE)?

Practical debugging flow

When a stream reports high rebuffering, follow this flow: verify player logs for network errors, check edge cache hit ratio, inspect origin CPU/memory and disk I/O, and review transcoder queue times. Narrowing issues by layer (player & network, edge, origin, transcoder) accelerates resolution.
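The layer-by-layer flow above can be expressed as an ordered list of checks that stops at the first suspect layer. This is only a sketch: the signal names and every threshold are hypothetical, and a real runbook would use the service's own baselines.

```python
# Checks run in the same order as the debugging flow: outermost layer first.
CHECKS = [
    ("player & network", lambda s: s["player_network_error_rate"] > 0.05),
    ("edge", lambda s: s["edge_cache_hit_ratio"] < 0.85),
    ("origin", lambda s: s["origin_cpu"] > 0.85 or s["origin_io_wait"] > 0.20),
    ("transcoder", lambda s: s["transcoder_queue_seconds"] > 10),
]


def first_suspect_layer(signals):
    """Return the first layer whose check fails, or None if all look normal."""
    for layer, is_suspect in CHECKS:
        if is_suspect(signals):
            return layer
    return None


signals = {
    "player_network_error_rate": 0.01,
    "edge_cache_hit_ratio": 0.62,   # unusually low for segment traffic
    "origin_cpu": 0.91,
    "origin_io_wait": 0.05,
    "transcoder_queue_seconds": 2,
}
print(first_suspect_layer(signals))  # edge
```

Here the origin is also busy, but the low edge hit ratio is flagged first because a cache problem upstream is a likely cause of the extra origin load.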

What are video streaming servers and how do they work?

Video streaming servers are the set of server roles—origin, transcoder, packager, and edge/CDN—that prepare, store, and deliver media to playback clients. They work together to transcode media into multiple representations, package segments and manifests, cache content at the edge, and serve requests with low latency and high availability.

How do content delivery network servers differ from origin servers?

Content delivery network servers are distributed caches optimized for serving a high volume of small requests (media segments, sometimes fetched as byte ranges) from locations close to end users. Origin servers hold the master files and handle content updates, authentication, and long-tail fetches that miss the cache.

When should edge caching be bypassed?

Edge caching should be bypassed when content must be strictly fresh or unique per viewer (personalized streams, live DVR segments with tight ordering requirements) or when legal or regulatory constraints require origin-only delivery. Where a full bypass is not needed, short TTLs limit how long content stays cached, and token-based or signed URLs limit who can fetch it.
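As an illustration of the signed-URL idea, the sketch below signs a path and expiry time with HMAC-SHA256 and verifies both at request time. The `expires` and `sig` parameter names and the `path:expires` message format are assumptions invented for this example; each CDN defines its own signing scheme, so this should not be read as any vendor's format.

```python
import hashlib
import hmac
import time
from urllib.parse import parse_qs, urlencode, urlsplit


def _signature(path, expires_at, secret):
    message = f"{path}:{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_url(path, secret, expires_at):
    """Append an expiry timestamp and an HMAC signature to a segment path."""
    query = urlencode({"expires": expires_at, "sig": _signature(path, expires_at, secret)})
    return f"{path}?{query}"


def verify_url(url, secret, now=None):
    """Accept the URL only if it is unexpired and the signature matches."""
    now = time.time() if now is None else now
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    try:
        expires_at = int(params["expires"][0])
        provided = params["sig"][0]
    except (KeyError, ValueError):
        return False
    if expires_at < now:
        return False
    expected = _signature(parts.path, expires_at, secret)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(provided.encode(), expected.encode())


secret = b"demo-secret"  # placeholder; a real key would come from a secret store
url = sign_url("/live/final/720p/seg_00042.m4s", secret, expires_at=1_000)
assert verify_url(url, secret, now=999)
assert not verify_url(url, secret, now=1_001)
```

Because the signature covers only the path and expiry, the edge can validate the token and still serve every authorized viewer from the same cached segment.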

How to choose between hardware and software transcoding?

Hardware transcoding (GPU/ASIC) offers cost-efficient, high-throughput processing for large, consistent workloads. Software transcoding provides flexibility for codec support, feature updates, and smaller or variable workloads. Evaluate workload predictability, codec roadmap, and latency requirements.

How to measure success for video streaming servers?

Key metrics include startup time, rebuffering rate, average bitrate, play failures, cache hit ratio, and server error rates. Combine player-side telemetry with server logs and CDN analytics to get a complete view of viewer experience and infrastructure health.