AI Objection Handler Generator for Sales Teams: Build, Train, and Deploy


An AI objection handler generator can speed up the creation of rebuttals, role-play scripts, and coached responses for sellers. This guide explains how to set one up, what data and prompts it requires, and how to measure results so that training time translates into pipeline impact.

AI objection handler generator: what it is and when to use it

An AI objection handler generator is a system—often powered by large language models—that produces objection responses, rebuttal scripts, and coaching prompts for sales teams. Use it when onboarding new reps, scaling objection libraries across product lines, or shortening the feedback loop between rejected deals and coaching updates. Related terms include objection handling, rebuttal scripts, response templates, natural language generation, and prompt engineering.

How the generator works and required inputs

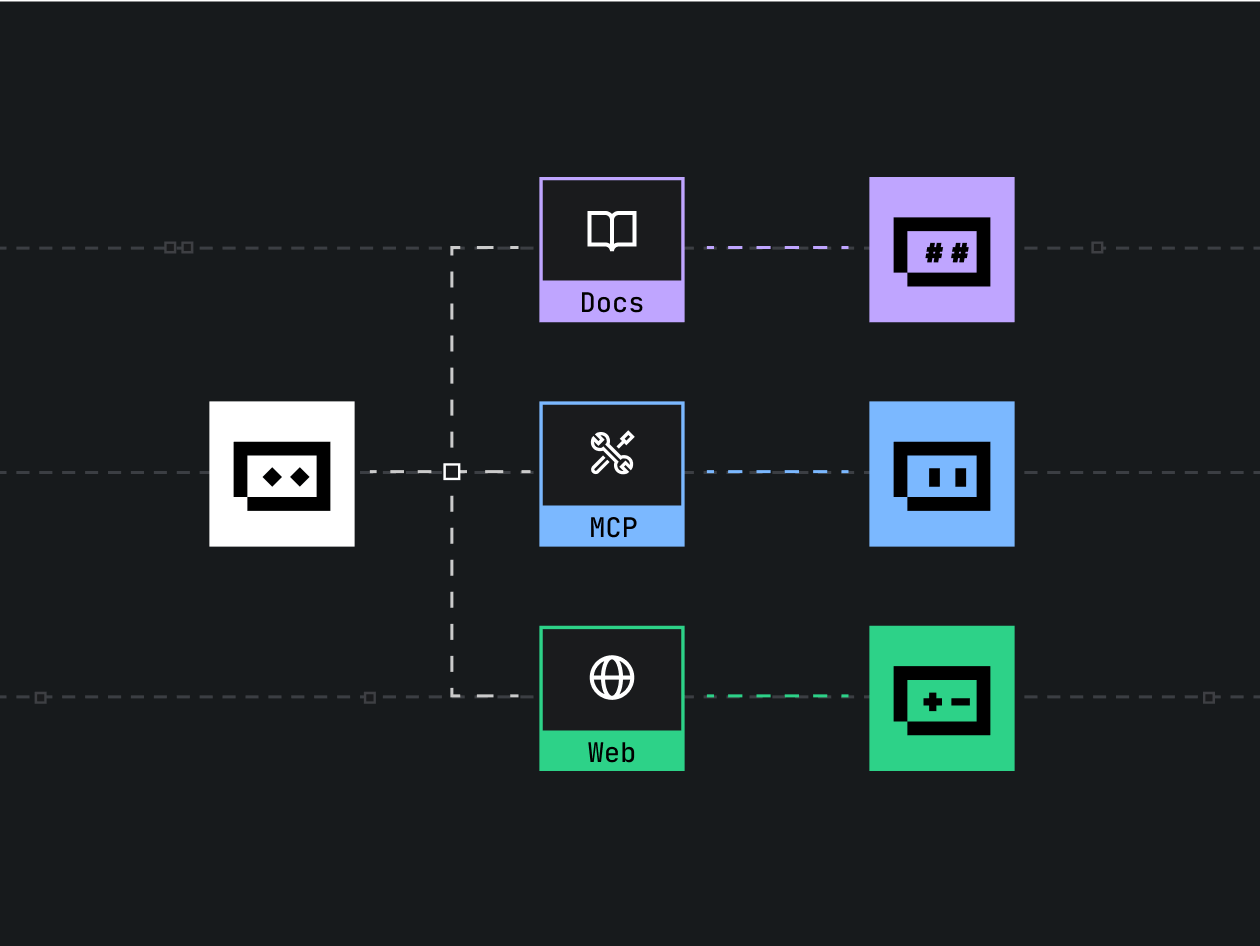

Core components

- Data layer: historical objection transcripts, CRM notes, call recordings (transcribed), email threads.

- Model layer: a base language model, optionally fine-tuned on internal dialogues and industry vocabulary.

- Prompt templates: parameterized prompts for different objection categories and customer personas.

- Delivery: export to playbooks, an LMS, a CRM widget, or a practice chat tool used in role-play.
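As a rough illustration of the prompt-template component, the sketch below fills a parameterized prompt for one objection category and persona. The template wording, the field names, and the `build_prompt` helper are assumptions made for this example, not a prescribed format; the resulting string would be sent to whichever language model the team uses.

```python
from string import Template

# Hypothetical template: the wording and field names are illustrative assumptions.
OBJECTION_PROMPT = Template(
    "You are coaching a sales rep.\n"
    "Persona: $persona\n"
    "Objection category: $category\n"
    "Buyer said: \"$objection\"\n"
    "Write a $response_format that acknowledges the concern, "
    "asks one clarifying question, and proposes a next step."
)


def build_prompt(persona: str, category: str, objection: str, response_format: str) -> str:
    """Fill the template; substitute() raises KeyError if a field is missing."""
    return OBJECTION_PROMPT.substitute(
        persona=persona,
        category=category,
        objection=objection,
        response_format=response_format,
    )


prompt = build_prompt(
    persona="mid-market finance lead",
    category="price",
    objection="The price is too high compared to Competitor X.",
    response_format="short live-chat reply",
)
print(prompt)
```

Keeping the persona, category, and response format as separate parameters means one template can serve several delivery targets without duplicating the instructions.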

Needed data and quality checks

Collect 100–1,000 real objection examples per product or persona where possible. Annotate each with objection type (price, timing, product-fit, competition), sentiment intensity, and outcome. Remove personal data and check transcripts against the privacy requirements that apply to your organization. For reproducible evaluation, hold out a test set of objections that the model never sees during prompt tuning or fine-tuning.
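The annotation, redaction, and hold-out steps could look roughly like the following. This is a minimal sketch: the `LabeledObjection` fields, the 1-5 intensity scale, the regular expressions, and the 20% test fraction are all assumptions, and the regex pass is only a first filter, not a complete personal-data scrubber.

```python
import random
import re
from dataclasses import dataclass


@dataclass
class LabeledObjection:
    text: str
    objection_type: str  # price, timing, product-fit, competition
    intensity: int       # assumed 1-5 scale for sentiment intensity
    outcome: str         # e.g. closed-won, lost, nurtured


# Rough patterns for emails and phone-like numbers (illustrative, not exhaustive).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Mask emails and phone-like numbers before the text enters the dataset."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def split_holdout(records, test_fraction=0.2, seed=42):
    """Shuffle with a fixed seed and hold out a test set of unseen objections."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


records = [
    LabeledObjection(redact(f"Objection {i}: too expensive"), "price", 3, "lost")
    for i in range(10)
]
train, test = split_holdout(records)
print(len(train), len(test))
print(redact("Reach me at jane.doe@example.com or +1 (555) 123-4567."))
```

Fixing the shuffle seed keeps the same examples in the test set across runs, which is what makes before-and-after comparisons meaningful.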

CLEAR framework: checklist for building and validating an objection handler

Use the CLEAR framework to structure development and rollout.

- Catalog: Collect and tag objections by type, persona, and stage.

- Label: Add outcome labels (closed-won, lost, nurtured) and conversation context.

- Engineer: Create prompt templates, then tune the prompts or fine-tune the model.

- Assess: Validate responses with role-play, A/B testing in enablement tools, and user feedback.

- Refine: Iterate on prompts, update the dataset, and retrain on new objections.

Practical example: pricing objection scenario

Scenario: A mid-market prospect says "The price is too high compared to Competitor X." The generator creates three structured responses: a short sales reply for live chat, a consultative script for discovery calls, and a coach note that explains the value trade-offs for role-play practice. The script suggests clarifying questions ("What part of the price feels high: total cost of ownership or monthly cost?") and a concession template for negotiation. Use the script in practice and compare conversion rates across ten deals as an initial check on efficacy.

Tips for deployment and training

- Start small: pilot the generator on one team and one objection type (for example, pricing) before expanding.

- Include human review in the loop: require a manager sign-off for new rebuttals before they become standard playbook items.

- Version prompts and datasets: track prompt changes and dataset snapshots so performance shifts can be traced.

- Measure impact: track win rate, objection recurrence rate, and average deal velocity before and after rollout.
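One lightweight way to version prompts and datasets, offered here as a sketch and not as the only approach, is to store a content hash of each alongside every evaluation run. The `fingerprint` helper and the record layout below are hypothetical; teams that already keep these assets in a version control system can use its revision identifiers instead.

```python
import hashlib
import json


def fingerprint(obj) -> str:
    """Return a short, stable SHA-256 digest of any JSON-serializable object."""
    canonical = json.dumps(obj, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


prompt_template = "Persona: {persona}\nObjection: {objection}\nWrite a short reply."
dataset_snapshot = [
    {"text": "The price is too high.", "type": "price", "outcome": "lost"},
    {"text": "We are not ready this quarter.", "type": "timing", "outcome": "nurtured"},
]

# Attach both fingerprints to each evaluation run so KPI shifts can be traced
# back to the exact prompt and data that produced them.
run_record = {
    "prompt_version": fingerprint(prompt_template),
    "dataset_version": fingerprint(dataset_snapshot),
}
print(run_record)
```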

Trade-offs and common mistakes

Trade-offs

Speed vs. accuracy: rapid generation can produce helpful drafts but may need human polishing to fit brand tone and compliance. Specificity vs. coverage: highly tailored responses perform better per persona but require more labeled data.

Common mistakes

- Feeding noisy transcripts without cleaning—results inherit errors and lead to incorrect rebuttals.

- Over-automation—publishing AI outputs without manager review can damage credibility with buyers.

- Ignoring evaluation—failing to A/B test or measure KPI changes leaves ROI unknown.

Evaluation metrics and ongoing governance

Track quantitative metrics: win rate by objection type, objection recurrence, average deal size, and time to close. Use qualitative measures as well: seller confidence in rebuttals and manager ratings of AI-generated scripts. For industry guidance on sales best practices and objection handling, refer to an established sales enablement resource such as HubSpot's sales objection strategies.
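Win rate by objection type can be computed from a simple export of deals. The sketch below assumes each deal is reduced to an `(objection_type, outcome)` pair and that `closed-won` marks a win; the sample data is invented purely for illustration.

```python
from collections import defaultdict


def win_rate_by_type(deals):
    """deals: iterable of (objection_type, outcome) pairs. Returns {type: win rate}."""
    wins = defaultdict(int)
    totals = defaultdict(int)
    for objection_type, outcome in deals:
        totals[objection_type] += 1
        if outcome == "closed-won":
            wins[objection_type] += 1
    return {t: wins[t] / totals[t] for t in totals}


# Invented sample data, not real results.
sample_deals = [
    ("price", "closed-won"),
    ("price", "lost"),
    ("price", "lost"),
    ("price", "nurtured"),
    ("timing", "closed-won"),
    ("timing", "lost"),
]
rates = win_rate_by_type(sample_deals)
print(rates)
```

Running the same function on pre-rollout and post-rollout exports gives a per-objection comparison instead of a single blended win rate.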

Practical integration patterns

Embed in coaching

Use the generator output as practice prompts for role-play sessions and record improvements in rep scoring rubrics.

Embed in tools

Expose short rebuttal snippets inside the CRM sidebar or chat templates so sellers can copy tested replies during live interactions.

Checklist to launch a pilot

- Gather 200+ labeled objections for the pilot scope.

- Create 3 prompt templates (short reply, discovery follow-up, negotiation script).

- Run a 4‑week role-play validation with manager approvals.

- Measure baseline KPIs and run an A/B test for 8–12 weeks.
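For the A/B test in the last step, one common way to check whether a difference in win rates is larger than chance would explain is a two-proportion z-test. The sketch below uses only the standard library; the cohort sizes and win counts are invented, and the normal approximation it relies on is only reasonable when each cohort has a fair number of deals.

```python
import math


def two_proportion_z_test(wins_a, n_a, wins_b, n_b):
    """Return (z, two-sided p-value) for the difference in win rates between cohorts."""
    p_a, p_b = wins_a / n_a, wins_b / n_b
    pooled = (wins_a + wins_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented example: control cohort wins 20 of 100 deals, AI-assisted cohort wins 30 of 100.
z, p = two_proportion_z_test(20, 100, 30, 100)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the lift is unlikely to be noise, but it does not rule out other differences between the cohorts, such as deal size or rep experience.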

FAQ

What is an AI objection handler generator and how does it fit into sales training?

An AI objection handler generator produces structured responses and coaching prompts for common buyer objections. It fits into training as a content source for role-play, playbooks, and CRM snippets that shorten ramp time and standardize effective responses.

How much data is needed to train a sales objection handling generator?

Start with 100–300 high-quality, labeled examples for a single objection type. For broader coverage across personas and products, scale to several hundred or several thousand examples and maintain a validation set for testing.

Can the automated objection response builder adapt to different customer personas?

Yes—include persona labels in the training data and design prompts that request persona-specific tone and priorities. Validate outputs with sales reps from each persona segment.

What are quick ways to validate generated rebuttals during a pilot?

Use manager-reviewed role-play, A/B testing in controlled deal cohorts, and rep ratings. Track conversion lift and reduced objection recurrence as the primary signals.

How do you ensure the AI objection handler generator stays compliant and on-brand?

Require human review for published responses, keep a compliance glossary in prompts, and maintain version control for prompt and dataset changes. Retrain or adjust prompts when product positioning or pricing changes.