Grok AI Assistant: Practical Guide to Using a Free AI Conversational Assistant


The Grok AI assistant is a conversational AI designed to simplify digital interactions — from customer support triage to content drafting and internal knowledge retrieval. This guide explains what the Grok AI assistant does, realistic expectations for accuracy and privacy, common integration patterns, and actionable steps for safe, useful deployment.

Grok AI offers a free AI conversational assistant experience with quick setup paths, API and widget options, and trade-offs around accuracy, data handling, and moderation. Use the G.R.O.K. evaluation checklist, follow security and governance practices, and match use cases to the model's strengths (summarization, routing, lightweight automation) rather than heavy decision-making.


Grok AI assistant overview: core capabilities and realistic expectations

The Grok AI assistant is built to handle natural language conversations, perform quick summarization, answer factual questions with provided context, and automate simple workflows. It typically runs as a cloud-hosted large language model (LLM) with a conversational interface. Expect strong performance on paraphrasing and context-aware replies, while recognizing limits: hallucination risk, sensitivity to prompt phrasing, and variable domain expertise.

Key terms and related concepts

Terms to know: conversational AI, large language model (LLM), prompt engineering, context window, intent detection, fallback routing, moderation/guardrails, and privacy controls. These concepts inform deployment choices and operational safeguards.

When to choose a free AI conversational assistant

Use cases well suited to a free AI conversational assistant include knowledge retrieval from curated documents, first‑tier customer triage, content drafting, and developer prototyping. Avoid relying on it for high‑stakes decisions, legal or medical diagnosis, or handling sensitive personal data without additional controls.

How Grok AI fits into common workflows

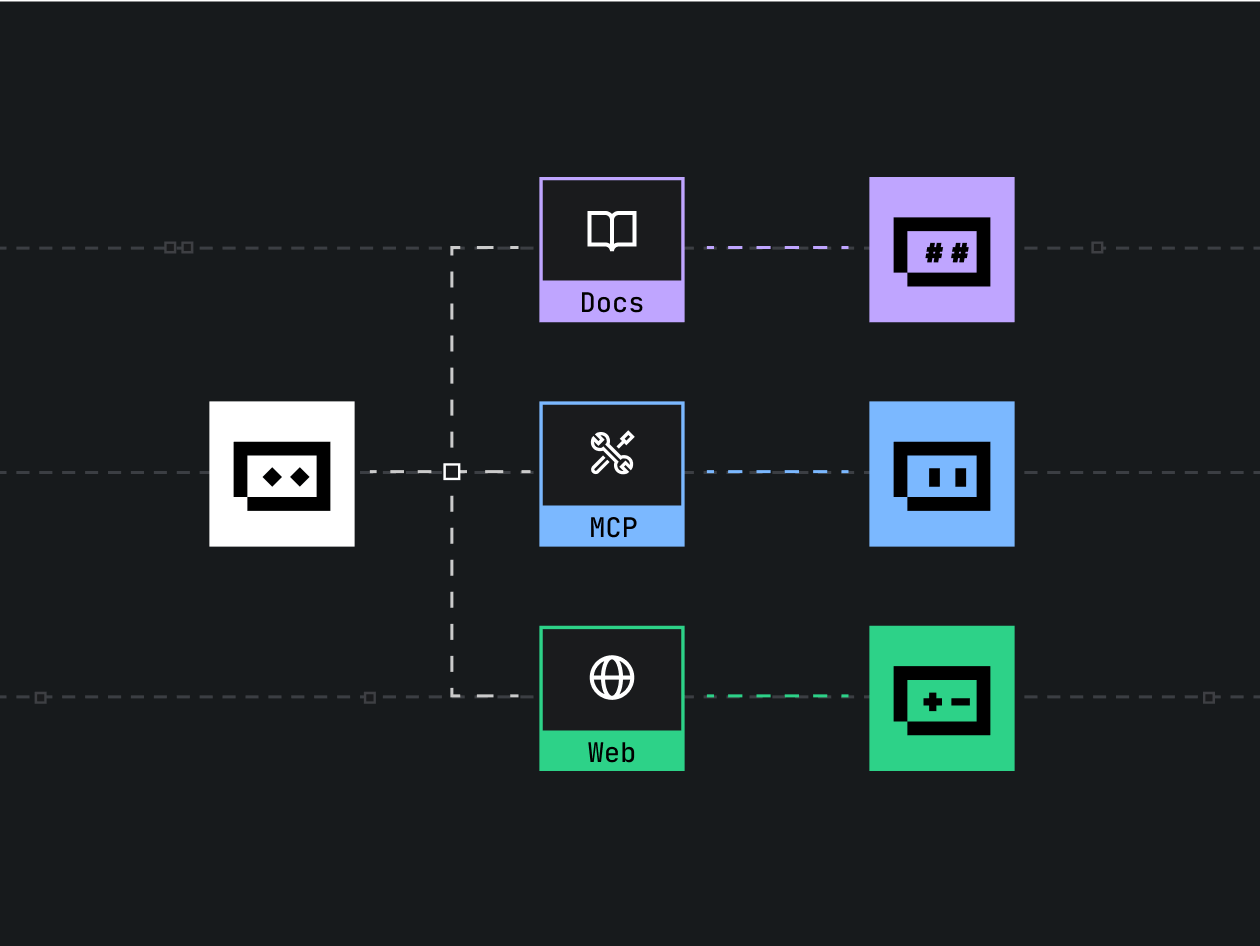

Integration patterns include embedding a chat widget for customer support, connecting to a knowledge base via retrieval-augmented generation (RAG), or using an API to generate templates and summaries. In a typical architecture, model responses pass through application logic that verifies them and triggers any follow-up actions.

G.R.O.K. evaluation checklist for deploying conversational AI

Use the G.R.O.K. checklist to evaluate any conversational assistant before production deployment. The acronym covers four areas:

- Governance — Policies for data retention, human review, and escalation paths.

- Reliability — Monitoring, fallback responses, uptime SLAs, and testing for edge cases.

- Openness — Transparent disclosures to users about AI use and limits.

- Knowledge quality — Source control for knowledge bases, citation practices, and update cadence.

Practical example: customer support triage

Scenario: A mid-sized e-commerce site uses the Grok AI assistant as the first contact point. The assistant collects the order ID and issue type, attempts basic resolution (tracking a package, resetting a password), and creates a structured ticket for human agents when confidence falls below a threshold. This can reduce average handling time and give agents clearer diagnostics while preserving escalation control.
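The routing step in this scenario can be sketched in a few lines of Python. This is a minimal illustration, not Grok's API: the `TriageResult` type, the `route` function, the 0.75 threshold, and the assumption that the application computes its own confidence score are all hypothetical.

```python
from dataclasses import dataclass, asdict
from typing import Optional

# Hypothetical starting threshold; tune it against real escalation data.
CONFIDENCE_THRESHOLD = 0.75

# Issue types the assistant is allowed to resolve on its own (from the scenario).
SELF_SERVICE_ISSUES = {"track_package", "reset_password"}


@dataclass
class TriageResult:
    order_id: Optional[str]
    issue_type: str
    confidence: float  # assumed to come from the application's own scoring, 0.0 to 1.0


def route(result: TriageResult, threshold: float = CONFIDENCE_THRESHOLD) -> dict:
    """Resolve simple, high-confidence issues; otherwise build a structured ticket."""
    if result.confidence >= threshold and result.issue_type in SELF_SERVICE_ISSUES:
        return {"action": "resolve", "issue_type": result.issue_type}
    # Low confidence or unsupported issue: hand off with everything collected so far.
    return {"action": "escalate", "ticket": asdict(result)}
```

Keeping the escalation payload structured means the human agent receives the order ID and issue type without having to reread the transcript.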

How to use Grok AI: setup, integration, and monitoring

Setup begins with a clear scope: define supported intents, permitted data types, and success metrics. Integration methods may include embedding a web widget, calling an API from backend services, or connecting the assistant to a document store for RAG. Implement confidence scoring to route uncertain cases to humans.

Integration checklist

- Map user intents and design dialog flows with fallbacks.

- Implement input sanitization and output filtering to reduce harmful or sensitive disclosures.

- Log interactions, but segregate sensitive fields to comply with data minimization rules.

- Measure KPIs: deflection rate, time to resolution, escalation rate, and user satisfaction.
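As one way to approach the sanitization and logging items above, the sketch below redacts email addresses and card-like numbers before a message is written to a log. The patterns and the `redact` and `build_log_record` helpers are illustrative assumptions; regex redaction of this kind is a first line of defense and will not catch every form of personal data.

```python
import re

# Simplified patterns for illustration; real deployments usually need broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Replace email addresses and card-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


def build_log_record(user_message: str, intent: str) -> dict:
    """Build a log entry that stores only the redacted message and the intent."""
    return {"intent": intent, "message": redact(user_message)}
```

Short identifiers such as order numbers pass through unchanged, so the logs stay useful for debugging.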

Privacy, safety, and governance

Privacy obligations vary by region and sector. Follow industry best practices such as data minimization, secure storage, and explicit user consent when collecting personal data. For structured guidance on risk management and governance, consult recognized frameworks and standards in AI safety and risk management. The AI resources published by the National Institute of Standards and Technology (NIST), for example, provide a starting point for risk frameworks and controls.

Common mistakes and trade-offs

Common mistakes include:

- Overtrusting model outputs — failing to validate facts before action.

- Insufficient testing across dialects and edge cases, leading to biased or inconsistent behavior.

- Collecting excessive logs that expose personal data without purpose limitation.

Trade-offs often involve balancing response coverage against safety: opening the assistant to more data can improve accuracy but increases privacy risk. Adding strict filters reduces risk but can frustrate users if legitimate requests are blocked.

Practical tips for maximizing value

- Start small: pilot the assistant on specific intents and measure impact before scaling to full support.

- Use retrieval-augmented generation (RAG) for factual domains: connect to curated documents and cite sources in replies.

- Set conservative confidence thresholds and clear escalation rules to human agents for ambiguous cases.

- Monitor performance and user feedback continuously; add targeted training data for recurring failure modes.
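The RAG tip above can be illustrated with a toy example. The sketch below uses simple keyword overlap in place of the embedding search a real system would likely use, and the sample documents, `retrieve`, and `build_prompt` are hypothetical names invented for this illustration. It shows only how retrieved passages and their source IDs might be placed into a prompt so the reply can cite them.

```python
import re
from typing import List, Tuple

# Hypothetical curated documents: (source_id, text).
DOCS: List[Tuple[str, str]] = [
    ("returns-policy", "Items can be returned within 30 days of delivery."),
    ("shipping-faq", "Standard shipping takes 3 to 5 business days."),
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: List[Tuple[str, str]], k: int = 1) -> List[Tuple[str, str]]:
    """Rank documents by keyword overlap with the question (a stand-in for vector search)."""
    q_tokens = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q_tokens & tokenize(d[1])), reverse=True)
    return ranked[:k]


def build_prompt(question: str, docs: List[Tuple[str, str]], k: int = 1) -> str:
    """Assemble a prompt that asks the model to answer only from cited sources."""
    passages = retrieve(question, docs, k)
    context = "\n".join(f"[{source_id}] {text}" for source_id, text in passages)
    return (
        "Answer using only the sources below and cite the source id in brackets.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )
```

Because each passage carries its source ID, a reviewer can check a cited answer against the original document.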

Related questions

- How accurate is Grok AI in understanding context across multiple turns?

- Can Grok AI be integrated into existing helpdesk software and chat widgets?

- What are the privacy implications of using a free AI conversational assistant?

- How should teams design guardrails and human-in-the-loop escalation for conversational AI?

- What limitations should be expected when using the assistant for specialized domains?

Measuring success and operating at scale

Key metrics for conversational assistants include intent recognition accuracy, deflection rate (the percentage of contacts resolved without human handoff), average response time, and user satisfaction (CSAT). Log anonymized performance metrics and perform periodic audits to detect drift or coverage gaps.
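These metrics are simple ratios over logged conversations. The sketch below assumes a hypothetical `Conversation` record with an `escalated` flag and an optional CSAT score; the field names are illustrative and not part of any Grok logging format.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Conversation:
    escalated: bool             # True if the contact was handed off to a human
    csat: Optional[int] = None  # survey score from 1 to 5, or None if not answered


def summarize(conversations: Iterable[Conversation]) -> dict:
    """Compute deflection rate, escalation rate, and average CSAT."""
    convs = list(conversations)
    total = len(convs)
    if total == 0:
        return {"deflection_rate": 0.0, "escalation_rate": 0.0, "avg_csat": None}
    escalated = sum(c.escalated for c in convs)
    scores = [c.csat for c in convs if c.csat is not None]
    return {
        # Share of contacts resolved without human handoff.
        "deflection_rate": (total - escalated) / total,
        "escalation_rate": escalated / total,
        "avg_csat": sum(scores) / len(scores) if scores else None,
    }
```

Running the same summary on a weekly basis and comparing the results is one simple way to spot drift.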

Common deployment patterns and developer tips

Developers may choose one of three patterns: widget-first (fast user-facing launch), API-first (tight backend automation), or hybrid (widget with backend verification). Use typed schemas for API responses when automating actions, and include human verification steps for irreversible actions like refunds.
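A typed schema with a human-approval flag might look like the following sketch. It assumes the model has been prompted to return a JSON object with `action` and `order_id` fields; that format, the action names, and the `parse_action` helper are hypothetical choices for illustration.

```python
import json
from dataclasses import dataclass

# Hypothetical action vocabulary; anything else is rejected.
ALLOWED_ACTIONS = {"track_package", "reset_password", "refund"}
# Actions that must never run without a human sign-off.
IRREVERSIBLE_ACTIONS = {"refund"}


@dataclass(frozen=True)
class ActionRequest:
    action: str
    order_id: str
    needs_human_approval: bool


def parse_action(raw: str) -> ActionRequest:
    """Validate model output against the schema; raise ValueError on any mismatch."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    action = data.get("action")
    order_id = data.get("order_id")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    if not isinstance(order_id, str) or not order_id:
        raise ValueError("order_id must be a non-empty string")
    return ActionRequest(action, order_id, action in IRREVERSIBLE_ACTIONS)
```

Rejecting anything outside the schema keeps free-form model text from triggering backend actions, and the approval flag gives the application a single place to enforce human review.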

Short checklist before launch

- Complete the G.R.O.K. checklist items.

- Set up monitoring dashboards for key KPIs and alerts for spikes in fallback responses.

- Run a privacy impact assessment and remove unnecessary data capture fields.

Conclusion

The Grok AI assistant can accelerate common conversational tasks and improve user experience when used within clear boundaries. Emphasize governance, monitoring, and incremental rollout to reduce risk. Pair the assistant with strong human-in-the-loop processes and a curated knowledge base to get the best outcomes.

What is the Grok AI assistant and how does it differ from other chatbots?

The Grok AI assistant is a conversational model focused on natural language understanding and quick responses. Differences from rule-based chatbots include higher flexibility, broader language comprehension, and reliance on model inference rather than purely scripted flows. This yields more natural interactions but requires additional validation to ensure factual accuracy.

Is Grok AI safe to use for customer support and what safeguards are recommended?

Using Grok AI for customer support is common, provided safeguards are in place: confidence thresholds, manual escalation, content moderation, and data minimization. Regular audits and user feedback loops help maintain safety and performance.

How can Grok AI be integrated into a website or app?

Integration options include embedding a chat widget, calling an API for backend workflows, or a hybrid approach that verifies model suggestions before action. Ensure proper input/output sanitization and structured logging for automated flows.

What are typical limitations and how can they be mitigated?

Limitations include occasional hallucinations, sensitivity to ambiguous prompts, and domain knowledge gaps. Mitigation strategies include RAG with citations, human verification for critical actions, prompt templates, and targeted retraining or data curation.

Can Grok AI be used for sensitive data and what compliance steps are necessary?

Use with sensitive data requires strict controls: encryption in transit and at rest, minimal data retention, role-based access, and documented consent. Consult legal and compliance teams for sector-specific obligations before processing personal, health, or financial data.