AI for Business Growth: Practical Strategies to Uncover Hidden Opportunities

AI for business growth is a practical playbook, not a slogan. This guide explains how organizations identify and scale hidden revenue, efficiency, and customer experience opportunities using machine learning, predictive analytics, automation, and natural language processing.

This article presents a concise framework, a short real-world example, five core cluster questions for further content, a checklist, practical tips, and a section on trade-offs and common mistakes for leaders and practitioners evaluating AI-driven growth strategies.

AI for business growth: where hidden opportunities live

AI can surface patterns that are hard to spot through manual review, such as purchase-behavior segments, process bottlenecks, churn predictors, and product bundling opportunities. Successful initiatives focus on measurable outcomes (revenue lift, cost reduction, retention) and use controlled, data-driven tests to validate impact.

DISCOVER framework: a practical model to find and scale AI opportunities

Use the DISCOVER framework to move from idea to production with guardrails and measurable outcomes.

- Define the business outcome — specify metric and target (e.g., increase average order value by 7%).

- Inventory data and constraints — map data sources, quality, latency, and privacy rules.

- Select the hypothesis — pick a testable idea with clear success criteria.

- Create prototypes — rapid models or rules to estimate lift with limited data.

- Orchestrate experiments — A/B tests, holdouts, or phased rollouts to measure real impact.

- Verify robustness — monitor drift, fairness, and operational metrics.

- Enable scale — automate model deployment, retraining, and measurement.

- Refine and repeat — capture learnings, update the backlog, and prioritize next bets.

How AI for business growth works in practice

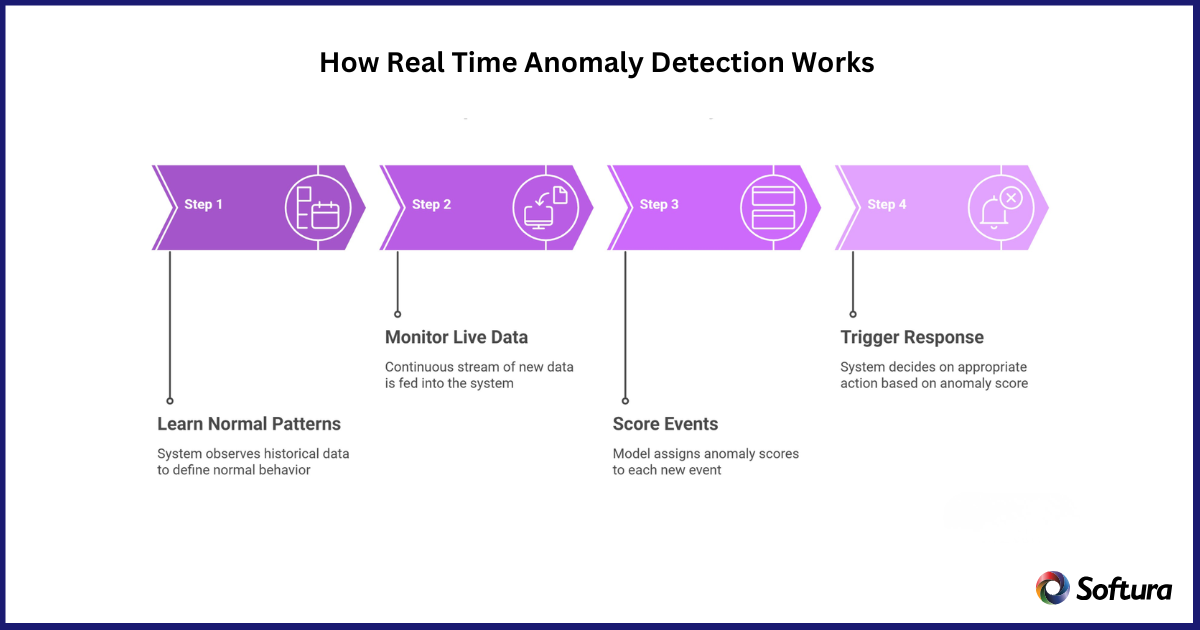

AI-driven growth strategies start with targeted experiments, not broad automation. A model identifies high-value segments or actions, a controlled test measures the financial impact, and engineering then integrates the winning logic into operations with ongoing monitoring.
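One common way to set up the controlled test described above is to assign customers to a holdout or treatment group deterministically, so the same customer always lands in the same group. The sketch below assumes string customer IDs and a 10% holdout. The function name `assign_group` and the experiment label are hypothetical, chosen only for illustration.

```python
import hashlib


def assign_group(customer_id: str, experiment: str, holdout_share: float = 0.1) -> str:
    """Deterministically assign a customer to 'holdout' or 'treatment'."""
    if not 0.0 < holdout_share < 1.0:
        raise ValueError("holdout_share must be strictly between 0 and 1")
    # Salt the hash with the experiment name so that assignments in one
    # experiment do not line up with assignments in another.
    digest = hashlib.sha256(f"{experiment}:{customer_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "holdout" if bucket < holdout_share else "treatment"


# Illustrative usage with made-up customer IDs.
customer_ids = [f"cust-{i}" for i in range(10_000)]
groups = [assign_group(cid, "retention-offer-v1") for cid in customer_ids]
print(f"holdout share: {groups.count('holdout') / len(groups):.3f}")
```

Hash-based assignment needs no stored lookup table and is reproducible across systems. Whether it suits a given experiment depends on how customers are identified in practice.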

Short real-world example

A mid-sized online retailer used a churn-prediction model to identify customers at high risk of leaving. A targeted retention campaign (personalized discounts plus tailored product recommendations) sent to the predicted cohort produced a 12% reduction in churn, an 8% lift in repeat purchase rate, and positive ROI within two quarters. The initiative followed the DISCOVER framework and included a holdout group to validate causality.
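To make the holdout comparison concrete, the sketch below computes a relative churn reduction and a two-sided two-proportion z-test. The counts are invented for illustration and are not the retailer's data. The function name `churn_lift` is hypothetical, and the z-test is one reasonable choice among several, not necessarily the method used in the example above.

```python
from math import sqrt
from statistics import NormalDist


def churn_lift(churned_treated: int, n_treated: int,
               churned_holdout: int, n_holdout: int) -> tuple[float, float, float]:
    """Return (relative churn reduction, z statistic, two-sided p-value)."""
    p_treated = churned_treated / n_treated
    p_holdout = churned_holdout / n_holdout
    # Relative reduction in churn versus the untreated holdout group.
    relative_reduction = (p_holdout - p_treated) / p_holdout
    # Pooled standard error under the null hypothesis of equal churn rates.
    pooled = (churned_treated + churned_holdout) / (n_treated + n_holdout)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_treated + 1 / n_holdout))
    z = (p_holdout - p_treated) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return relative_reduction, z, p_value


# Hypothetical counts: 5,000 customers per group.
reduction, z, p_value = churn_lift(440, 5000, 500, 5000)
print(f"relative churn reduction: {reduction:.1%}, z = {z:.2f}, p = {p_value:.3f}")
```

Reporting the test statistic alongside the lift helps separate a real effect from noise, which is the purpose of the holdout group.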

Checklist: minimum requirements before launching a growth experiment

- Clear success metric and baseline measurement

- Accessible, documented data sources with ownership

- Small-scale prototype or predictive model with explainability notes

- Experiment design (A/B or holdout) and required sample size

- Post-launch monitoring plan for performance, bias, and data drift
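The "required sample size" item in the checklist can be estimated before launch. The sketch below uses the standard normal-approximation formula for comparing two proportions. It assumes a two-sided test, equal group sizes, and conventional defaults of 5% significance and 80% power. The function name and the example rates are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, expected_rate: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate customers needed per group to detect the given rate change."""
    if baseline_rate == expected_rate:
        raise ValueError("baseline_rate and expected_rate must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)          # desired statistical power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = baseline_rate - expected_rate
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Hypothetical: detect a drop in churn from 10% to 8.8%.
print(sample_size_per_group(0.10, 0.088))
```

Small expected effects require large groups, so it is worth running this calculation before committing to an experiment timeline.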

Practical tips to accelerate value

- Prioritize experiments by expected value and implementation cost—use an economic impact score to rank ideas.

- Start with features that require minimal upstream changes (recommendation widgets, email personalization) to prove value quickly.

- Instrument metrics and events from day one—accurate telemetry shortens the feedback loop.

- Design experiments with guardrails for fairness and privacy; consult legal and compliance early.

- Use model monitoring and alerting to catch drift and maintain trust with stakeholders.
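The first tip mentions an economic impact score without defining one. A minimal sketch follows, assuming the score is risk-adjusted expected annual value divided by implementation cost. That formula, the `Idea` class, and all figures are illustrative assumptions, not a standard definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    name: str
    expected_annual_value: float  # estimated yearly benefit if the idea works
    success_probability: float    # subjective chance the experiment succeeds
    implementation_cost: float    # estimated cost to build and run

    def __post_init__(self) -> None:
        if self.implementation_cost <= 0:
            raise ValueError("implementation_cost must be positive")
        if not 0.0 <= self.success_probability <= 1.0:
            raise ValueError("success_probability must be between 0 and 1")

    @property
    def impact_score(self) -> float:
        # Assumed scoring rule: risk-adjusted value per unit of cost.
        return (self.expected_annual_value * self.success_probability
                / self.implementation_cost)


def rank_ideas(ideas: list[Idea]) -> list[Idea]:
    """Return ideas sorted from highest to lowest impact score."""
    return sorted(ideas, key=lambda idea: idea.impact_score, reverse=True)


# Made-up backlog for illustration only.
backlog = [
    Idea("dynamic pricing", 500_000, 0.3, 250_000),
    Idea("email personalization", 120_000, 0.6, 20_000),
    Idea("recommendation widget", 200_000, 0.5, 40_000),
]
for idea in rank_ideas(backlog):
    print(f"{idea.name}: {idea.impact_score:.2f}")
```

A single ratio like this is deliberately crude. Its value lies in forcing explicit estimates that the team can revisit after each experiment.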

Common mistakes and trade-offs

Trade-offs occur between speed and robustness. Rapid prototypes can show early signals but risk being brittle in production. Heavy engineering investment upfront reduces operational risk but delays learning. Common mistakes include:

- Measuring model performance (accuracy) instead of business impact (revenue, retention).

- Skipping proper A/B or holdout testing and assuming causality from correlated lift.

- Neglecting data quality and lineage, which increases long-term maintenance cost.

- Building complex models when simple heuristics would achieve the required uplift.

Core cluster questions

- How to prioritize AI use cases that drive revenue?

- What metrics best measure AI-driven growth experiments?

- How to design an A/B test for a predictive model?

- When should AI-driven automation replace manual processes?

- How to maintain AI models in production to prevent performance drift?

Responsible practices and standards

Incorporate accepted standards for risk management, transparency, and data governance. For authoritative guidance on AI risk management and responsible use, consult NIST's AI resources, including its AI Risk Management Framework (AI RMF). Aligning with such standards can reduce regulatory and reputational risk and improve stakeholder confidence.

Next steps for leaders and teams

Form a cross-functional growth squad with product, data science, engineering, and measurement ownership. Use the DISCOVER framework and checklist to run 2–4 focused experiments in a 90-day cycle and evaluate which ideas meet the defined economic thresholds for scale.

FAQ

What are the first steps to use AI for business growth?

Start by defining a clear, measurable outcome; inventory available data; and design a small experiment that isolates impact. Ensure legal and compliance checks for data usage before launching.

How do AI-driven growth strategies differ from traditional analytics?

Traditional analytics often explains past behavior. AI-driven strategies instead emphasize predictive models and automated actions that change operations going forward, for example through real-time personalization.

How can AI for business growth be measured reliably?

Measure business-level KPIs through controlled experiments (A/B tests, holdouts) and report both short-term lifts and long-term retention or cost impacts. Track model health metrics (precision, recall, calibration) alongside financial metrics.

What are common pitfalls when scaling AI initiatives?

Scaling without monitoring, failing to manage data drift, and not investing in retraining pipelines are frequent causes of model decay and lost value. Plan for operational ownership and continuous measurement.
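One simple way to watch for data drift is the population stability index (PSI), which compares the distribution of a feature or model score at training time with its recent distribution. The sketch below assumes equal-width bins taken from the baseline range. The function name and sample data are hypothetical, and the commonly quoted alert thresholds (around 0.1 and 0.25) are rules of thumb that should be tuned per use case.

```python
from math import log


def population_stability_index(expected: list[float], actual: list[float],
                               bins: int = 10) -> float:
    """PSI between a baseline sample (expected) and a recent sample (actual)."""
    low, high = min(expected), max(expected)
    if low == high:
        raise ValueError("baseline values must not all be identical")
    width = (high - low) / bins

    def bin_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(index, 0), bins - 1)] += 1
        # Floor each share at a small epsilon to avoid log(0) for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    expected_shares = bin_shares(expected)
    actual_shares = bin_shares(actual)
    return sum((a - e) * log(a / e) for e, a in zip(expected_shares, actual_shares))


# Synthetic scores: a uniform baseline and a copy shifted upward by 0.3.
baseline = [i / 1000 for i in range(1000)]
shifted = [score + 0.3 for score in baseline]
print(f"PSI, no drift: {population_stability_index(baseline, baseline):.3f}")
print(f"PSI, shifted:  {population_stability_index(baseline, shifted):.3f}")
```

A check like this can run on a schedule and feed an alert, giving the operational owner an early signal that retraining may be needed.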

How to balance quick wins with long-term AI investments?

Use a portfolio approach: allocate resources to quick experiments that validate demand and to foundational investments (data quality, deployment pipelines) that reduce future cost and risk. Reassess priorities quarterly based on measured outcomes.