How Video Annotation Improves Action Recognition in AI Models

Get a free topical map and start building content authority today.

Action recognition has a tougher task than object detection because objects remain relatively stable in the labelers' environment. Actions, however, occur over seconds and even small variations in timing may completely change the meaning of an action. For example, a quick bending motion could be interpreted as a person falling down, a squat, or tying their shoes. Thus, if a model's labels do not clearly indicate the "when," then the model will learn a vague version of the action.

Furthermore, this issue continues to grow due to the rapid growth of video content. According to a 2024 report issued by Sandvine, video represents the largest category of downstream Internet traffic across all regions at approximately 41% to 48%. Additionally, according to YouTube, users were uploading over 500 hours of new video content per minute in 2020. So, the amount of new motion data on which you can put your hands is growing almost exponentially year on year.

This is one reason why video annotation is so important. Action recognition requires both motion and sequence information, including consistent start and stop points, transitions, and environmental context. By labeling these elements correctly, you are not merely preparing the data, but rather you are educating the model of what an action actually is.

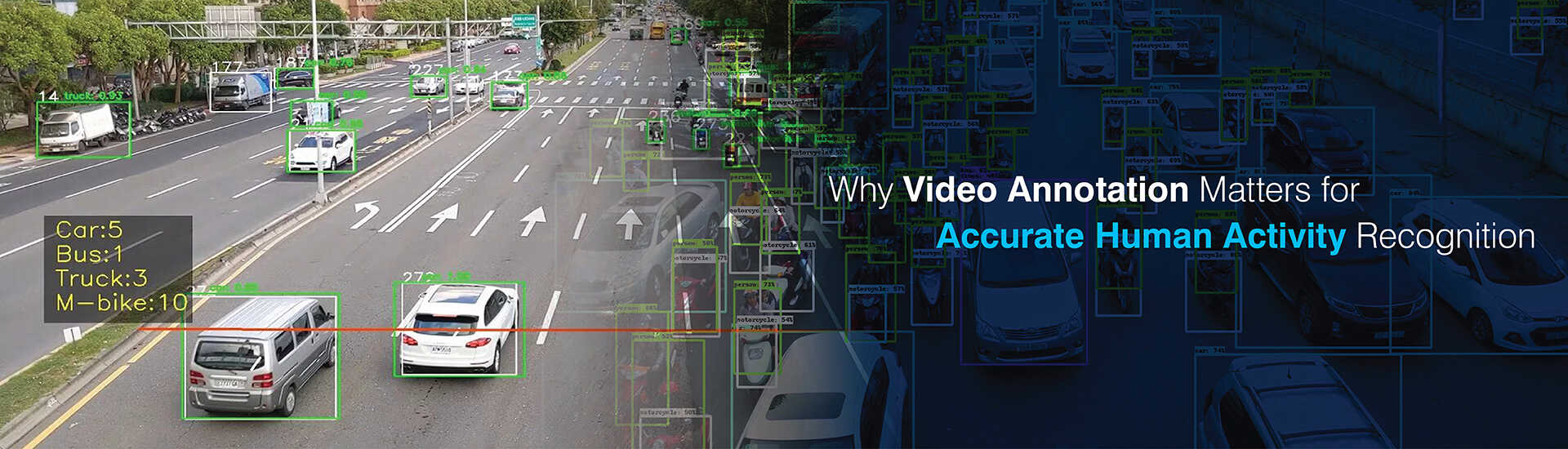

What Video Annotation Means in AI

Video annotation in AI means labeling video data so models can learn from motion and sequences, not just single images. It turns raw footage into annotated video datasets for AI that can train and evaluate systems for video action recognition and related tasks.

- What gets labeled: frames, clips, sequences, trajectories, temporal segments

- What’s inside the labels: actions, activities, interactions, objects in motion

- Why it’s different: time becomes part of the label, not just the pixels

Types of labels usually come in two layers: what you draw, and what you time-stamp.

- Spatial labels: bounding boxes, polygons, masks, keypoints

- Temporal labels: action tags, timestamps, temporal boundaries

- Common setup: video frame annotation tied to timelines for action classes

The Core Techniques Used in Video Recognition Tasks

All of the most commonly used methods for performing video action recognition today are based upon deep learning. However, there are some distinct differences between these methods and how they are applied to extract the necessary spatiotemporal information needed to identify relationships between frames.

- 3D Convolutional Neural Networks (CNN): Learn spatiotemporal features from frame stacks

- Two-Stream Networks: Combine RGB with optical flow to obtain motion-related signals

- Video Transformers: Use attention mechanisms to identify relevant frames and segments

- CNN-LSTM / Temporal Conv Nets: Utilize temporal layers to model sequential behavior

Why temporal modelling is central for action recognition in AI

Because video action recognition is concerned with identifying changes occurring between frames, temporal modeling is essential to the success of the method. If the labels associated with frames vary significantly across time, even robust architectures tend to produce confused results. Therefore, deep learning-based video annotation requires consistent labeling of sequences of frames, rather than simply clean shapes identified on key frames.

Why Video Annotation Quality Decides Model Accuracy

In almost all cases, improving accuracy requires improving the quality of the labels used during the training process. While models can tolerate moderate levels of noise, action recognition tends to become brittle when time boundaries and categories are poorly defined.

The Things that Will Break a Model:

- Poorly defined action boundaries

- Label Noise (Incorrect Class, Incorrect Time Stamp)

- Missing Context (Interaction, Tools, Environmental Surroundings)

Improved video annotation results in better training for the model through improved supervisory signals. Deep learning video annotation also reduces confusion between similar actions. Improved video annotation also enables better cross-person, view-angle, lighting, and layout generalization. Many teams have experienced significant improvements in performance when transitioning the model from the laboratory to real-world footage.

The Things that Improve with Better Labels:

- Better Separation Between Similar Actions

- Better Temporal Localization

- Fewer False Positives Caused by Background Bias

The Building Blocks of Action Recognition Datasets

A good action recognition dataset is a structured product, not a pile of clips. Teams define how the dataset behaves before they even start labeling.

- Dataset structure basics: classes, clip length, fps, resolution, camera viewpoint

- Splits: train/val/test splits and class balance

- Coverage: people diversity, environments, angles, and motion variety

Label formats depend on the task you are training for.

- Clip classification: single-label clip classification

- Sequence labeling: multi-label sequences where actions overlap

- Temporal localisation: identify when the action happens

- Segmentation: action segmentation in videos with boundaries and transitions

The more you move toward localisation and segmentation, the more you depend on precise timing rules. If timing rules stay vague, the dataset becomes inconsistent, and so does the model.

Annotation Methods That Best Support Action Recognition

Organizations typically use an approach to perform their annotation. The method of annotating things should match the goal of the organization and the type of annotation they wish to accomplish. In large-scale projects, many teams collaborate with an experienced video annotation company to manage complex annotation methods such as frame-by-frame labeling, keyframe tracking, and multi-object annotation at scale. Some common types of annotation used to help organizations improve action recognition performance are listed below.

1. Frame-by-Frame Annotation of Video

Video frame-by-frame annotation provides dense supervision, while it is very slow and can capture small motions and quick transition of objects that keyframe annotation cannot.

- Need: fine detail about motion, micro-actions, dense supervision.

- Best for: Footage with many instances of occlusions, crowded scenes, shaky cam.

- Cost/Benefit: High accuracy at a high cost.

2. Video Keyframe Annotation

Video keyframe annotation gives labels to key frames and then uses either tracking or interpolation to give labels to the other frames. This method saves time when there are no abrupt changes in motion and all objects in view remain trackable throughout the video.

- How: Label keyframes; Propagate labels to remaining frames; Correct any errors.

- Best for: Smooth motion; Trackable subject(s); Stable cam.

- Dangers: Errors caused by interpolation of rapidly moving objects or occluded subjects.

3. Segmentation of Actions in Videos

Segmentation of actions in videos is focused on "when" rather than "where". Labels are given to indicate the beginning and ending of each action along with transitions between each action. This is the most commonly used method when accurate timeline information is required.

What gets labeled: Action begins; Action ends; Transitions.

Aids with: Temporal localization; Sequential tasks; Long duration videos.

Requires: Strict boundaries and review loops.

4. Multiple Object Video Annotation

Deep learning video annotation becomes necessary when the meaning of an action is dependent upon the interactions between two or more entities. Single actor labels are insufficient for team sports, crowds, and care work flows.

- What is being tracked: Multiple objects/individuals with consistent identifiers over time.

- Additional information: Interaction labels and contextual cues.

- Applications: Team sports; Crowd monitoring; Patient-care work flows.

5. Skeleton/key point-based labeling (For Human Activity Recognition)

Skeleton and keypoint labels are concerned with pose and joint motion. These can be superior to appearance-based labels for human activity recognition datasets, particularly where there are variations in environment.

- What gets labeled: Joints; Pose sequences; Interaction cues.

- Advantages: Privacy friendly; Less dependent on background.

- Disadvantage: May need additional object/context labels for object-based actions.

How Annotated Video Improves Deep Learning Models

Annotated video enhances deep learning models through several practical means. Annotated video enhances the models ability to learn and enhance evaluation of the model as well as allow for tasks that depend on temporal precision.

Better Spatio-Temporal Feature Learning:

- motion cues and micro-actions

- transitions and action boundaries

- fewer blended classes in training

It also allows for improved measurement and debugging of the model's performance. Teams can identify if a model has trouble with similar classes or if the model identifies action boundaries too early or too late. That feedback loop is important as action recognition models can appear fine on accuracy but have problems with timing.

Much Improved Evaluation and Error Analysis:

- confusion matrix for similar actions

- errors from incorrect identification of boundaries (too early or too late)

- segment-level metrics for localization and segmentation

With quality labels, teams can also develop advanced results.

Enable Advanced Tasks:

- Action localization and segmentation

- Action anticipation

- Anomaly detection using temporal patterns

Common Mistakes and How to Avoid Them

Several frequent errors occur in the majority of action recognition projects, including projects developed by experienced professionals.

Confused labels and poorly defined class definitions: Include/exclude rules and example classes

Varying frame rates and/or varying timestamp values: Standardize frame rates or normalize timestamps

Failing to account for background bias: Use a variety of scene contexts for different classes so the model learns the action and not the background

Wrongly selecting negative samples: Include "non-action" and near-actions that look like the actions

Failing to properly test the QA process: Do not select large volumes of low trust data for testing. Establish review loops early to build trust.

Most fixes are simple and are frequently ignored due to the lack of perceived importance. However, simple fixes are generally the largest contributors to increased accuracy versus changing architectures.

Action recognition gains the most where timing mistakes are costly. These are also the cases where annotation depth needs to match the end goal. When teams treat context and time as first-class labels, these use cases become more reliable in the real world, not just on benchmarks.

Closing: What to Invest in First

Invest in your label taxonomy and your QA process first. These foundations also help organizations make better decisions when choosing right video annotation partner, ensuring that the selected team can follow strict annotation guidelines and deliver reliable datasets for training action recognition models. Get these correct before scaling. Select the level of annotation complexity based on the desired output: classification, localization, or action segmentation in videos.

Focus on first investments that will pay off quickly including the definition of clear class definitions with include/exclude rules, calibration rounds and gold standards, consistent QA checks and review loops and the appropriate level of annotation based on the desired task.