How GenAI Chatbots Are Changing Health and Fitness Apps: Practical Benefits and Risks

Introduction

GenAI chatbots for health apps are reshaping how people access coaching, tracking, and clinical guidance. This article explains why these systems are effective, what trade-offs to expect, and how product teams and users can evaluate them.

GenAI chatbots combine large language models, context from device sensors and profiles, and product logic to deliver personalized advice, triage, and motivation inside health and fitness apps. This guide covers the practical benefits, the CARE checklist for safe design, a short real-world scenario, five core cluster questions for content planning, and actionable tips and common mistakes to avoid.

How GenAI chatbots for health apps deliver value

GenAI chatbots integrate natural language processing, behavior-change techniques, and user data (with consent) to create conversational experiences that feel personalized and adaptive. Common roles include AI fitness assistant features for workout planning, symptom triage, medication reminders, and mental health support. These systems use large language models (LLMs), fine-tuned domain models, and rule-based safety layers to balance fluency with accuracy.

Key capabilities and real-world benefits

Personalization and adaptive coaching

By combining user history, sensor data (like steps and heart rate), and stated goals, a personalized health chatbot can propose tailored workouts, dietary adjustments, and micro-goals that fit a user's schedule and readiness to change.
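As a rough illustration of the idea above, the sketch below turns a few consented signals into a small daily goal. The `UserContext` fields, the `propose_micro_goal` function, and every threshold are hypothetical placeholders chosen for the example. They are not clinical guidance and not taken from any specific product.

```python
from dataclasses import dataclass


@dataclass
class UserContext:
    """Hypothetical snapshot of consented user data."""
    goal_minutes_per_day: int      # stated goal
    avg_steps_last_7_days: int     # from a wearable
    sleep_hours_last_night: float  # from a wearable
    free_minutes_today: int        # from the user's schedule


def propose_micro_goal(ctx: UserContext) -> dict:
    """Return a small, adjusted goal for today.

    All thresholds are illustrative assumptions, not clinical guidance.
    """
    # Never plan more than the user has time for.
    minutes = min(ctx.goal_minutes_per_day, ctx.free_minutes_today)
    intensity = "moderate"

    if ctx.sleep_hours_last_night < 6.0:
        # Poor sleep: halve the session and lower the intensity.
        minutes = minutes // 2
        intensity = "low"
    elif ctx.avg_steps_last_7_days < 4000:
        # Low recent activity: ramp up gradually instead of jumping to the full goal.
        minutes = int(minutes * 0.75)
        intensity = "low"

    return {"minutes": minutes, "intensity": intensity}
```

In a real app, a structured result like this would more likely feed the chatbot's prompt or response template than be shown to the user directly.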

Scalable triage and information delivery

GenAI chatbots can provide initial symptom assessment, explain medical concepts in plain language, and escalate to human clinicians or recommend seeking emergency care when red flags appear. Careful design is required to avoid misclassification; many teams follow FDA guidance on clinical decision support for high-risk flows.
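A minimal sketch of a red-flag check is shown below, assuming a simple keyword approach. The phrase lists and the `triage` function are hypothetical; a real list would need to come from clinicians, and plain keyword matching will misclassify some messages, which is why the careful design mentioned above matters.

```python
import re
from enum import Enum


class Triage(Enum):
    EMERGENCY = "emergency"   # recommend emergency care
    CLINICIAN = "clinician"   # escalate to a human clinician
    SELF_CARE = "self_care"   # continue the normal conversation


# Hypothetical red-flag phrases for illustration only.
EMERGENCY_PATTERNS = [r"\bchest pain\b", r"\bcan(?:'|no)?t breathe\b", r"\bfainted\b"]
CLINICIAN_PATTERNS = [r"\bswelling\b", r"\bworsening\b", r"\bnumbness\b"]


def triage(message: str) -> Triage:
    """Classify a user message, checking the most severe level first."""
    text = message.lower()
    if any(re.search(p, text) for p in EMERGENCY_PATTERNS):
        return Triage.EMERGENCY
    if any(re.search(p, text) for p in CLINICIAN_PATTERNS):
        return Triage.CLINICIAN
    return Triage.SELF_CARE
```

Checking the emergency patterns first means a message that matches both lists gets the more cautious outcome.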

Engagement and behavior change

Conversational nudges, motivational interviewing techniques, and contextual reminders (a hallmark of modern AI fitness assistant features) can increase adherence by making interventions timely and relevant.

CARE checklist: a practical framework for safe, useful chatbots

Use the CARE checklist when designing or evaluating GenAI chatbots for health and fitness apps:

- Consent & privacy — Explicitly collect consent, log data uses, and support data deletion; follow HIPAA or local privacy laws where applicable.

- Accuracy & sources — Surface confidence scores, cite sources, and maintain a verifiable knowledge base for medical claims.

- Relevance & personalization — Use profile and sensor data responsibly to tailor recommendations and avoid generic responses.

- Explainability & escalation — Provide clear explanations, acknowledge limitations, and offer escalation paths to clinicians when needed.

Short real-world example

A fitness app integrates a personalized health chatbot that reads a user's recent runs, sleep patterns, and stated knee pain. The chatbot suggests a 20-minute low-impact training plan, explains why cross-training helps recovery, and asks follow-up questions. When the user reports worsening pain and swelling, the system displays red-flag guidance and prompts the user to contact a clinician, while offering to schedule a telehealth visit if the app supports it.

Core cluster questions (use as internal link targets)

- How do GenAI chatbots personalize fitness plans using wearable data?

- What privacy rules apply to conversational health data?

- When should a chatbot escalate to a clinician?

- How are LLMs fine-tuned for medical or fitness advice?

- What metrics measure effectiveness of AI-driven health coaching?

Practical tips for product teams and users

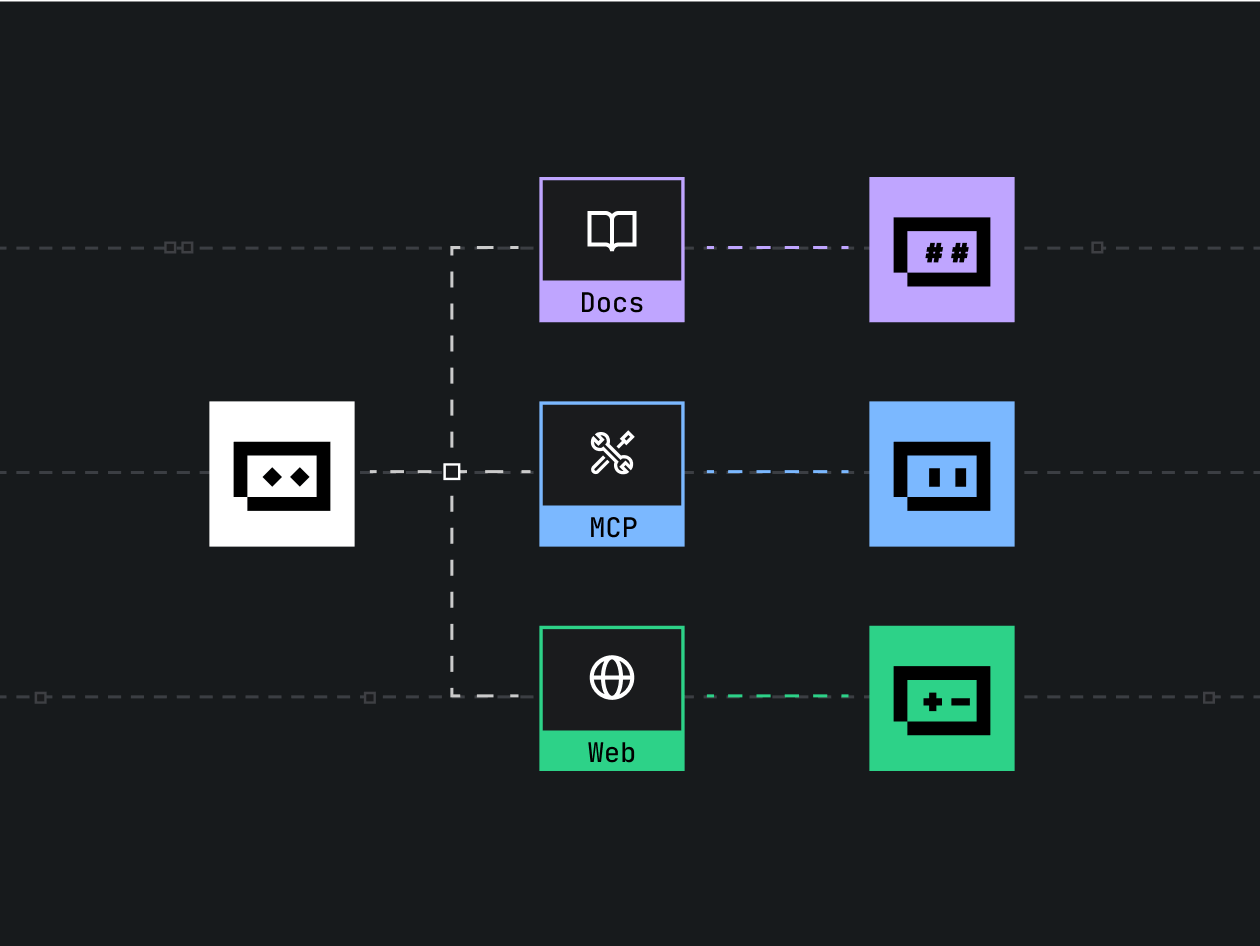

- Implement a hybrid architecture: combine LLM responses with deterministic rules for safety-critical guidance.

- Log model outputs and user interactions for auditability and continuous improvement while protecting PHI per legal requirements.

- Expose uncertainty: surface confidence or source citations when making clinical or diet recommendations.

- Design clear escalation flows and human-in-the-loop review for ambiguous or high-risk conversations.
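The tips above can be combined in one small sketch, under several assumptions. Deterministic rules are checked before any model call, low-confidence model replies are flagged for human review, and only metadata is written to the audit log. The `Reply` type, `SAFETY_RULES`, `REVIEW_THRESHOLD`, and `fake_llm` are all hypothetical names, and the rule text is placeholder wording rather than clinician-approved copy.

```python
import json
import re
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Reply:
    text: str
    source: str        # "rule" or "llm"
    confidence: float  # 0.0 to 1.0; how this is estimated is an assumption
    citations: List[str] = field(default_factory=list)
    needs_human_review: bool = False


# Deterministic safety rules: pattern -> fixed response (placeholder wording).
SAFETY_RULES = {
    r"\bchest pain\b": "Chest pain can be serious. Please stop exercising and seek emergency care.",
}

REVIEW_THRESHOLD = 0.6  # assumed cut-off for human-in-the-loop review


def answer(message: str, llm: Callable[[str], Reply], audit_log: list) -> Reply:
    """Check safety rules first, then fall back to the model."""
    reply = None
    for pattern, fixed_text in SAFETY_RULES.items():
        if re.search(pattern, message.lower()):
            reply = Reply(text=fixed_text, source="rule", confidence=1.0)
            break
    if reply is None:
        reply = llm(message)
        reply.needs_human_review = reply.confidence < REVIEW_THRESHOLD
    # Log metadata only, not the raw message, to limit PHI in the audit trail.
    audit_log.append(json.dumps({
        "source": reply.source,
        "confidence": reply.confidence,
        "needs_human_review": reply.needs_human_review,
    }))
    return reply


def fake_llm(message: str) -> Reply:
    """Stand-in for a real model call."""
    return Reply(text="Try a 20-minute low-impact session.", source="llm", confidence=0.4)
```

Calling `answer("I get chest pain on hills", fake_llm, log)` returns the fixed rule text without touching the model, while an ordinary question goes to `fake_llm` and is flagged for review because its confidence is below the threshold.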

Trade-offs and common mistakes

Trade-offs

Higher model fluency improves user engagement but can increase hallucination risk; stricter rule-based answers reduce hallucination but may feel less natural. Personalization boosts relevance but raises privacy and consent complexity. Striking the right balance depends on the app's risk profile and regulatory context.

Common mistakes

- Over-reliance on a single LLM without a safety toolkit (filters, fact-checkers, domain knowledge graph).

- Failing to provide clear escalation or emergency instructions for red-flag symptoms.

- Collecting more personal data than necessary for the feature, increasing legal and trust risk.

Standards, verification, and one trusted resource

Design teams should consult the regulatory guidance that applies in their region. Examples include the US Food and Drug Administration (FDA) for clinical decision support and global public health guidance on digital health. For broader digital health considerations, refer to the World Health Organization's digital health resources (WHO: Digital Health).

Measuring success: KPIs that matter

Track engagement (sessions per user), behavior-change metrics (goal completion, retention), clinical safety metrics (false-negative triage rate), and operational metrics (escalation response time). Combine quantitative KPIs with qualitative user feedback to validate that conversations are useful and trustworthy.
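Two of these KPIs can be computed as in the sketch below. It assumes each triage conversation has been labeled by a clinician after the fact; the `TriageCase` type and both function names are hypothetical. The false-negative triage rate is taken here as the share of clinician-labeled urgent cases that the chatbot did not escalate.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TriageCase:
    clinician_says_urgent: bool  # reference label from clinician review
    chatbot_escalated: bool      # what the chatbot actually did


def false_negative_rate(cases: List[TriageCase]) -> float:
    """Missed urgent cases divided by all urgent cases."""
    urgent = [c for c in cases if c.clinician_says_urgent]
    if not urgent:
        return 0.0  # no urgent cases in the sample
    missed = sum(1 for c in urgent if not c.chatbot_escalated)
    return missed / len(urgent)


def goal_completion_rate(goals_set: int, goals_completed: int) -> float:
    """Completed goals divided by goals set, or 0.0 if none were set."""
    return goals_completed / goals_set if goals_set else 0.0
```

For example, if clinicians label four cases as urgent and the chatbot escalated three of them, `false_negative_rate` returns 0.25.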

Integration checklist before launch

- Privacy and consent flows tested and documented.

- Safety rules and red-flag triggers implemented and reviewed by clinicians when required.

- Audit logging, rollback, and model-monitoring in place.

- User-facing explanations and opt-out options available.

Conclusion

GenAI chatbots for health apps offer practical gains: personalization, scalable education, and improved engagement. However, they require careful design—privacy protections, fact-checking, and escalation paths are essential to avoid harm. Using the CARE checklist and the trade-offs above helps teams build systems that are both helpful and safe.

FAQ

What are GenAI chatbots for health apps and how do they work?

GenAI chatbots are conversational systems powered by large language models that combine user data, domain knowledge, and product rules to produce personalized responses. They work by interpreting user input, retrieving relevant context (history, sensors, knowledge bases), generating a response, and applying safety filters before delivering the message.

Are GenAI chatbots safe for medical advice?

They can be safe when designed with safety layers: clinical review, clear limitations, citations, and escalation flows. For high-risk decision support, follow regulatory guidance, include human oversight, and validate performance with real-world testing.

How should apps protect user privacy when using AI chatbots?

Minimize data collection, obtain explicit consent, encrypt data at rest and in transit, and offer deletion. Comply with laws like HIPAA where applicable and apply role-based access controls for PHI.
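The minimization, consent, and deletion points above can be sketched with an in-memory store. Everything here is an assumption made for illustration: the `UserDataStore` class, the `FEATURE_FIELDS` mapping, and the field names. Encryption and role-based access control are left out and would be handled by the storage and identity layers in a real system.

```python
from typing import Dict, Set

# Hypothetical mapping of each feature to the only fields it needs.
FEATURE_FIELDS: Dict[str, Set[str]] = {
    "workout_planning": {"steps", "heart_rate", "goals"},
    "sleep_coaching": {"sleep_hours", "goals"},
}


class UserDataStore:
    """In-memory stand-in for a real, encrypted data store."""

    def __init__(self) -> None:
        self._data: Dict[str, dict] = {}
        self._consent: Dict[str, Set[str]] = {}

    def grant_consent(self, user_id: str, feature: str) -> None:
        self._consent.setdefault(user_id, set()).add(feature)

    def save(self, user_id: str, record: dict) -> None:
        self._data[user_id] = dict(record)

    def read_for_feature(self, user_id: str, feature: str) -> dict:
        """Return only the fields a consented feature needs."""
        if feature not in self._consent.get(user_id, set()):
            raise PermissionError(f"no consent for {feature}")
        allowed = FEATURE_FIELDS[feature]
        record = self._data.get(user_id, {})
        return {k: v for k, v in record.items() if k in allowed}

    def delete_user(self, user_id: str) -> None:
        """Remove both the data and the consent records."""
        self._data.pop(user_id, None)
        self._consent.pop(user_id, None)
```

Filtering by feature at read time means the chatbot never sees fields such as weight unless a consented feature explicitly lists them.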

How do AI fitness assistant features improve adherence and outcomes?

AI fitness assistant features deliver tailored, timely recommendations that match a user's readiness, context, and history. By creating micro-goals, adapting intensity, and providing motivational dialogue, these features increase consistency and long-term engagement.

How can teams evaluate whether a personalized health chatbot is working?

Measure behavior-change KPIs (goal completion, retention), safety KPIs (incorrect triage incidents), and satisfaction scores. Combine A/B testing with clinician review to validate clinical quality and user benefit.