Hybrid Cloud Storage for Big Data: Practical Benefits, Architecture, and a SCALE Checklist


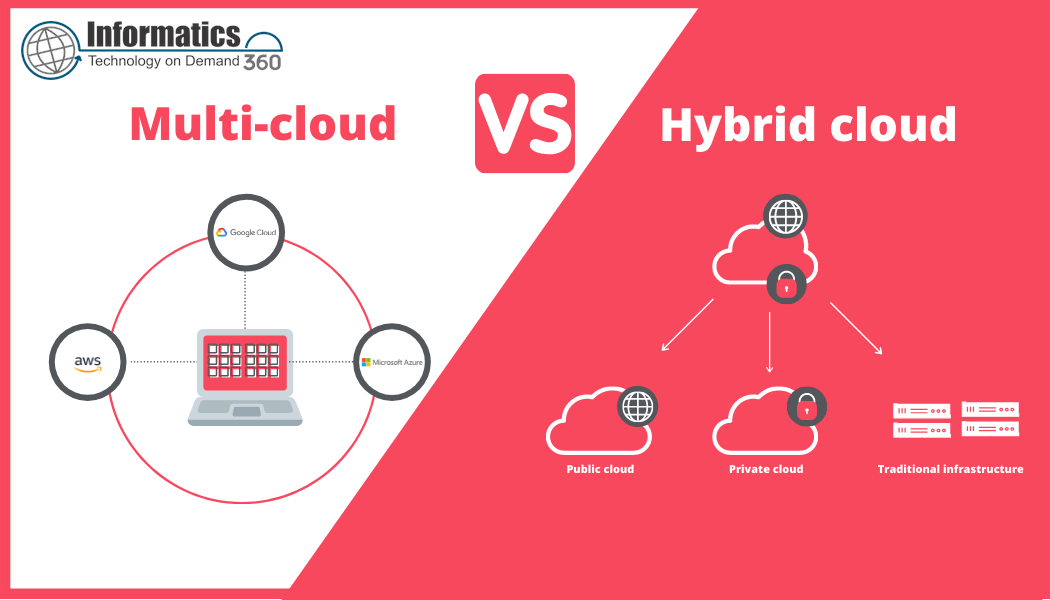

Hybrid cloud storage for big data combines on-premises systems and public or private cloud storage to meet the scale, cost, and performance needs of modern analytics workloads. This guide explains what makes hybrid architectures ideal for large datasets, how to design a practical deployment, and what pitfalls to avoid.


Key takeaway: Hybrid cloud storage offers cost control, performance tuning, and regulatory flexibility for big data when combined with clear policies for data tiering, security, and lifecycle management. Read the SCALE checklist and deployment steps for an actionable plan.

Why hybrid cloud storage for big data is a practical choice

Big data workloads (streaming clickstreams, IoT telemetry, genomics pipelines, and large-scale ML training) have different storage needs: high-throughput block and file storage for active processing, object storage for large immutable datasets, and archival solutions for long-term retention. A hybrid approach lets organizations place data where it makes the most sense: low-latency on-premises storage for hot data used by active compute, cost-effective cloud object stores for warm data, and archival tiers for cold data. This mix balances performance, compliance, and cost.

Core benefits and use cases

Hybrid environments unlock several advantages for big data projects:

- Performance: Keep latency-sensitive datasets close to analytics clusters (Hadoop, Spark, Kubernetes) while offloading bulk storage to cloud S3-compatible object stores.

- Cost optimization: Use data tiering and lifecycle policies to move infrequently accessed data to lower-cost cloud or tape archives.

- Regulatory control: Retain sensitive datasets on-premises or in private cloud regions to meet data residency rules and audit requirements.

- Scalable burst capacity: Handle unpredictable spikes by bursting compute to the public cloud instead of permanently expanding on-prem hardware.

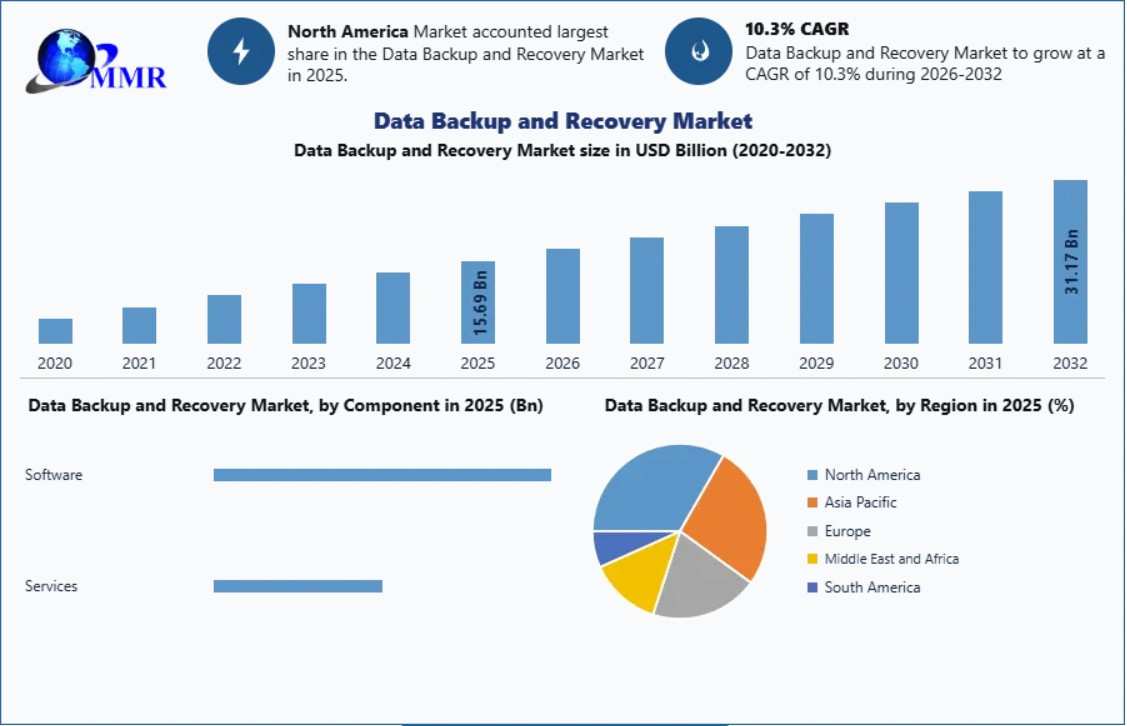

- Resilience and backup: Distribute copies across on-prem, cloud, and DR sites for faster recovery and geo-redundancy.

Key architecture patterns for hybrid storage

Common hybrid storage architectures include:

- Tiered storage with lifecycle policies: Active data remains on-prem; warm/cold data migrates to cloud object storage or archive.

- Transparent caching/gateway: Use an on-premises gateway that presents a local filesystem while syncing to cloud object storage for capacity.

- Data virtualization: A logical data layer provides unified access across on-prem and cloud stores without moving raw data.

- Cloud-burst compute: Primary storage remains local; ephemeral cloud compute mounts remote object storage for short-term processing.
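The transparent caching/gateway pattern is essentially a read-through cache: reads are served locally when possible and fetched from the cloud object store on a miss. The sketch below is a minimal, hypothetical illustration of that idea with least-recently-used (LRU) eviction; `ReadThroughCache` and the `fetch_remote` callable are illustrative names, and a real gateway would wrap an S3-compatible GET and persist the cache on local disk rather than in memory.

```python
from collections import OrderedDict
from typing import Callable


class ReadThroughCache:
    """LRU read-through cache in front of a slower remote object store.

    Hypothetical gateway sketch: `fetch_remote` is any callable that returns an
    object's bytes for a key; a real gateway would wrap an S3-compatible GET.
    """

    def __init__(self, fetch_remote: Callable[[str], bytes], capacity_bytes: int) -> None:
        self._fetch_remote = fetch_remote
        self._capacity = capacity_bytes
        self._used = 0
        self._entries: "OrderedDict[str, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def __contains__(self, key: str) -> bool:
        return key in self._entries

    def get(self, key: str) -> bytes:
        if key in self._entries:
            # Hit: serve from local capacity and mark as most recently used.
            self._entries.move_to_end(key)
            self.hits += 1
            return self._entries[key]
        # Miss: read from the remote store, then keep a local copy.
        self.misses += 1
        data = self._fetch_remote(key)
        self._admit(key, data)
        return data

    def _admit(self, key: str, data: bytes) -> None:
        if len(data) > self._capacity:
            return  # Larger than the whole cache: pass through without caching.
        self._entries[key] = data
        self._used += len(data)
        # Evict least recently used objects until the cache fits its capacity.
        while self._used > self._capacity:
            _, evicted = self._entries.popitem(last=False)
            self._used -= len(evicted)


if __name__ == "__main__":
    remote_store = {"a": b"x" * 40, "b": b"y" * 40, "c": b"z" * 40}
    cache = ReadThroughCache(remote_store.__getitem__, capacity_bytes=100)
    for key in ["a", "b", "a", "c", "a"]:
        cache.get(key)
    print(cache.hits, cache.misses, "b" in cache)  # 2 3 False
```

In the usage example, reading "c" pushes the cache over 100 bytes, so "b" (the least recently used object) is evicted while the recently re-read "a" stays local.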

SCALE Checklist: a framework for planning hybrid deployments

Use the SCALE checklist to evaluate and plan hybrid cloud storage for big data:

- Security & compliance — encryption, access controls, and audit trails that meet industry standards (reference NIST guidelines where applicable).

- Cost & capacity — tiering strategy, lifecycle policies, and chargeback accounting for cloud egress and storage rates.

- Access patterns — identify hot, warm, cold data and select storage types (block, file, object) accordingly.

- Latency & locality — ensure compute and data locality for low-latency analytics; consider caching and edge storage.

- Elasticity & operations — automation for provisioning, metadata cataloging, and backup/DR workflows across environments.
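For the Access patterns item, a first-pass hot/warm/cold classification can be as simple as a couple of thresholds on read counts and idle time. The sketch below assumes per-dataset access statistics are already available (for example, from access logs); `DatasetStats`, `classify_tier`, and the default thresholds are hypothetical and should be tuned against your own workloads and SLAs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DatasetStats:
    name: str
    reads_last_30_days: int  # assumed to come from access logs or storage analytics
    last_access: datetime


def classify_tier(stats: DatasetStats, now: datetime,
                  hot_min_reads: int = 100, warm_max_idle_days: int = 90) -> str:
    """Return 'hot', 'warm', or 'cold'. Thresholds are illustrative defaults."""
    if stats.reads_last_30_days >= hot_min_reads:
        return "hot"   # frequently read: keep on low-latency local storage
    if now - stats.last_access <= timedelta(days=warm_max_idle_days):
        return "warm"  # occasional reads: cloud object storage
    return "cold"      # idle beyond the warm window: archive tier


if __name__ == "__main__":
    now = datetime(2024, 6, 1, tzinfo=timezone.utc)
    datasets = [
        DatasetStats("clickstream_sessions", 5_000, now - timedelta(hours=1)),
        DatasetStats("q1_aggregates", 12, now - timedelta(days=20)),
        DatasetStats("raw_logs_2021", 0, now - timedelta(days=400)),
    ]
    for ds in datasets:
        print(ds.name, classify_tier(ds, now))
```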

Practical deployment steps

Follow these steps to put hybrid cloud storage for big data into production:

- Map data flows and classify datasets by access frequency, sensitivity, and retention requirements.

- Choose storage types: local SSD/HDD for active workloads, NAS or distributed file systems for shared access, and S3-compatible object stores for large immutable datasets.

- Implement data tiering and lifecycle rules that automatically move data between tiers.

- Deploy secure transfer mechanisms (TLS, VPN, private links) and key management for encryption at rest and in transit.

- Integrate metadata and catalog tools (for example, the AWS Glue Data Catalog or a Hive Metastore) so analytics engines can discover data regardless of location.
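The tiering and lifecycle step can be expressed as configuration rather than custom movers. The sketch below builds a lifecycle rule in the shape accepted by the Amazon S3 lifecycle API (`Rules`, `Filter`, `Transitions`, `Expiration`); the helper name, the `clickstream/` prefix, the day thresholds, and the `analytics-archive` bucket are hypothetical. The storage-class names `STANDARD_IA` and `GLACIER` are AWS S3's; other S3-compatible stores may name or support tiers differently.

```python
import json
from typing import Optional


def build_lifecycle_config(prefix: str, warm_after_days: int = 30,
                           archive_after_days: int = 180,
                           expire_after_days: Optional[int] = None) -> dict:
    """Build an S3-style lifecycle configuration for objects under `prefix`."""
    if not 0 < warm_after_days < archive_after_days:
        raise ValueError("require 0 < warm_after_days < archive_after_days")
    if expire_after_days is not None and expire_after_days <= archive_after_days:
        raise ValueError("expiration must come after the archive transition")
    rule = {
        "ID": "tier-" + (prefix.strip("/") or "all"),
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            # Warm tier: infrequent-access object storage.
            {"Days": warm_after_days, "StorageClass": "STANDARD_IA"},
            # Cold tier: archival storage.
            {"Days": archive_after_days, "StorageClass": "GLACIER"},
        ],
    }
    if expire_after_days is not None:
        rule["Expiration"] = {"Days": expire_after_days}
    return {"Rules": [rule]}


if __name__ == "__main__":
    config = build_lifecycle_config("clickstream/", expire_after_days=730)
    print(json.dumps(config, indent=2))
    # With boto3 installed and credentials configured, the rule could be applied via
    # (bucket name is hypothetical):
    # boto3.client("s3").put_bucket_lifecycle_configuration(
    #     Bucket="analytics-archive", LifecycleConfiguration=config)
```

Generating the rule from code keeps thresholds reviewable and versioned, and the ordering checks catch rules that would archive data before it reaches the warm tier.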

Practical tips

- Benchmark end-to-end throughput and tail latency with representative workloads before committing to a tiering policy.

- Use checksums and data validation during migration to prevent silent data corruption (consider object storage versioning).

- Automate lifecycle transitions and include cost alerts tied to data egress and cloud storage growth.

- Co-locate metadata services with compute to avoid metadata lookup latency when the data itself is remote.
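The checksum tip can be sketched with the standard library: hash the source, transfer, re-read the destination, and reject the copy on mismatch. In this minimal sketch a local `shutil.copyfile` stands in for the real transfer; `copy_and_verify` is a hypothetical helper, and in a real migration the destination would be read back from the target store or compared with a checksum the store reports.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 8 * 1024 * 1024) -> str:
    """Stream a file through SHA-256 so large objects never load fully into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_and_verify(src: Path, dst: Path) -> str:
    """Copy src to dst, re-read the destination, and fail loudly on any mismatch."""
    expected = sha256_of(src)
    dst.parent.mkdir(parents=True, exist_ok=True)
    shutil.copyfile(src, dst)  # stand-in for the real transfer step
    actual = sha256_of(dst)
    if actual != expected:
        dst.unlink()  # never leave a corrupt copy behind
        raise RuntimeError(f"checksum mismatch: {src} -> {dst}")
    return expected  # record this digest in the migration manifest
```

Returning the digest lets the caller record it in a manifest, which later makes incremental re-syncs and audits cheap.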

Real-world example: retail analytics at scale

A retail company processes millions of clickstream events per hour for real-time personalization and historical BI. Hot session data is stored on-premises in a high-throughput file system attached to the analytics cluster for sub-second lookups. After 24 hours, session aggregates are migrated to an S3-compatible cloud object store using lifecycle policies. When models need training, the required data is copied on demand to a GPU-enabled cloud environment for burst processing; the resulting models and derived features are stored in the cloud, with backups kept on-prem for compliance. This hybrid approach reduced on-prem storage costs by 60% while retaining low-latency access for online personalization.

Trade-offs and common mistakes

Hybrid architectures introduce complexity. Common mistakes include:

- Underestimating data egress costs when frequent readbacks from cloud storage are needed.

- Failing to align storage classes with access patterns—storing 'warm' data in cold-optimized tiers can slow analytics and increase compute time.

- Neglecting metadata synchronization, which makes discovery and governance hard across environments.

- Overlooking security and key management differences between on-prem and cloud, creating compliance gaps.

Standards, governance, and recommended resources

Follow established frameworks for data governance and security. For guidance on big data practices and interoperability, consult resources from NIST and similar standards bodies. For example, the NIST Big Data Interoperability Framework (the NIST SP 1500 series) provides high-level guidance on risk, privacy, and data management.

Related questions

- How does data tiering work in hybrid storage architectures?

- What are the latency implications of accessing cloud object storage for analytics?

- How should backup and disaster recovery be designed across on-prem and cloud?

- Which metadata and catalog solutions work best with hybrid environments?

- How can cloud egress and storage costs for big data be estimated and controlled?

Monitoring and operations

Operational visibility is critical. Track capacity usage, egress volume, access frequency, and latency. Use logging and monitoring that aggregates metrics from on-prem storage controllers and cloud APIs into a unified dashboard. Automate alerts for anomalous access patterns that could indicate data leakage or runaway costs.
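One simple way to automate alerts for runaway egress is to compare each day's volume against a trailing baseline. The sketch below uses a mean plus k standard deviations rule over a trailing window; this is a deliberately basic technique chosen for illustration (production monitoring may need seasonality-aware baselines), and `egress_alerts`, the 7-day window, and `k = 3` are hypothetical choices.

```python
from statistics import mean, pstdev
from typing import List


def egress_alerts(daily_egress_gb: List[float], window: int = 7, k: float = 3.0) -> List[int]:
    """Return indexes of days whose egress exceeds mean + k * std of the trailing window."""
    alerts = []
    for day in range(window, len(daily_egress_gb)):
        history = daily_egress_gb[day - window:day]
        threshold = mean(history) + k * pstdev(history)
        if daily_egress_gb[day] > threshold:
            alerts.append(day)
    return alerts


if __name__ == "__main__":
    egress = [100, 102, 98, 101, 99, 100, 103, 100, 450, 101]
    print(egress_alerts(egress))  # [8]
```

Note that the spike itself inflates the baseline for the following week, which is why the day after the spike is not flagged; that is usually acceptable for alerting but worth knowing when tuning `window` and `k`.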

FAQ: Is hybrid cloud storage for big data the right choice?

Hybrid cloud storage for big data is a good fit when workloads require a balance of low-latency access, cost efficiency, and compliance control. Evaluate it based on data sensitivity, access patterns, and total cost of ownership, including egress and management overhead.

How should data be tiered between on-prem and cloud?

Tiering should be driven by access frequency and SLAs: hot data on low-latency local storage, warm data in cloud object storage with occasional recall, and cold data in archive tiers. Automate transitions using lifecycle policies tied to metadata.

What security controls are required in a hybrid model?

Implement encryption at rest and in transit, centralized identity and access management (IAM), role-based access controls, and key management that spans environments. Audit trails and logging are essential for compliance and incident response.

How can costs for a hybrid deployment be estimated?

Model storage costs (per GB-month), egress charges, request-based API costs (object store GET/PUT), and cloud compute for burst processing. Include on-prem hardware, maintenance, and staffing in the total cost of ownership.
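The cost components above can be combined into a simple monthly model. In the sketch below, the dataclass and function names are hypothetical, the hardware cost is amortized straight-line, and every price in the usage example is a made-up placeholder rather than any provider's actual rate; substitute current pricing for your provider and region.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    stored_gb: float
    egress_gb: float
    get_requests: int
    put_requests: int
    burst_compute_hours: float


@dataclass
class CloudPrices:
    # Placeholders: substitute your provider's current rates for your region.
    storage_per_gb_month: float
    egress_per_gb: float
    get_per_1000: float
    put_per_1000: float
    compute_per_hour: float


def cloud_monthly_cost(u: MonthlyUsage, p: CloudPrices) -> dict:
    """Break down one month of cloud spend by cost component."""
    cost = {
        "storage": u.stored_gb * p.storage_per_gb_month,
        "egress": u.egress_gb * p.egress_per_gb,
        "requests": (u.get_requests / 1000) * p.get_per_1000
                    + (u.put_requests / 1000) * p.put_per_1000,
        "burst_compute": u.burst_compute_hours * p.compute_per_hour,
    }
    cost["total"] = sum(cost.values())
    return cost


def onprem_monthly_cost(hardware_capex: float, amortization_months: int,
                        maintenance_per_month: float, staffing_per_month: float) -> float:
    # Straight-line amortization of hardware plus recurring operating costs.
    return hardware_capex / amortization_months + maintenance_per_month + staffing_per_month


if __name__ == "__main__":
    usage = MonthlyUsage(stored_gb=1000, egress_gb=500, get_requests=1_000_000,
                         put_requests=100_000, burst_compute_hours=10)
    prices = CloudPrices(storage_per_gb_month=0.02, egress_per_gb=0.09,
                         get_per_1000=0.0004, put_per_1000=0.005, compute_per_hour=3.0)
    cloud = cloud_monthly_cost(usage, prices)
    onprem = onprem_monthly_cost(120_000, 60, 500, 3_000)
    print(cloud, onprem, cloud["total"] + onprem)
```

Keeping each component separate makes it easy to see which lever matters; with these placeholder numbers, egress already costs more than storage, which is the pattern the trade-offs section warns about.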

What are common migration strategies for moving petabyte-scale data?

Use phased migration with representative datasets, bulk transfer tools, secure physical transfer services if available, and validation checksums. Plan for incremental syncs and cutover windows to minimize downtime.
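Incremental syncs before cutover reduce to comparing manifests: anything missing or changed on the target is re-copied, and anything only on the target is flagged for review. The sketch below assumes each side can produce a key-to-checksum manifest (for example, digests recorded during the initial bulk copy); `plan_incremental_sync` is a hypothetical helper, and whether orphaned target keys are deleted is a policy decision it deliberately leaves to the caller.

```python
from typing import Dict, List, Tuple

# Manifest: object key -> checksum (for example, the SHA-256 recorded at copy time).
Manifest = Dict[str, str]


def plan_incremental_sync(source: Manifest, target: Manifest) -> Tuple[List[str], List[str]]:
    """Return (keys to copy or re-copy, keys present only on the target)."""
    # Missing on the target, or present with a different checksum: copy again.
    to_copy = sorted(key for key, digest in source.items() if target.get(key) != digest)
    # Present only on the target: review before deleting.
    orphaned = sorted(key for key in target if key not in source)
    return to_copy, orphaned


if __name__ == "__main__":
    source = {"a": "1", "b": "2", "c": "3"}
    target = {"a": "1", "b": "stale", "d": "4"}
    print(plan_incremental_sync(source, target))  # (['b', 'c'], ['d'])
```

Running this repeatedly shrinks the delta with each pass, so the final sync inside the cutover window only has to move the last few changed objects.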