Chatbots as Healthcare Assistants: Benefits, Risks, and How They Work


Can Chatbots Be Your Next Healthcare Assistant?

Chatbots are increasingly used as healthcare assistants in both clinical and administrative settings. These conversational AI systems use natural language processing, often combined with machine learning. They answer questions, guide triage, schedule appointments, send medication reminders, and in some cases provide clinical decision support to clinicians. Understanding what chatbots can and cannot do helps patients and healthcare organizations decide when these tools are appropriate.

- Chatbots can increase access to information, automate routine tasks, and support patient engagement.

- They typically combine natural language processing with rule-based logic or machine learning models, and they may integrate with electronic health records.

- Limitations include accuracy concerns, potential bias, privacy risks, and regulatory considerations such as HIPAA compliance and FDA oversight.

- Patients and providers should verify clinical oversight and data protections before relying on a chatbot for health decisions.

Chatbots as healthcare assistants: Roles, capabilities, and typical uses

Healthcare assistant chatbots vary by design and setting. Common roles include:

- administrative support, such as appointment booking and insurance verification;
- patient engagement, such as follow-up messages and medication reminders;
- symptom checkers and triage;
- clinician-facing tools that summarize patient information or flag abnormal results.

In telemedicine workflows, chatbots may collect pre-visit information and share educational materials to make encounters more efficient.

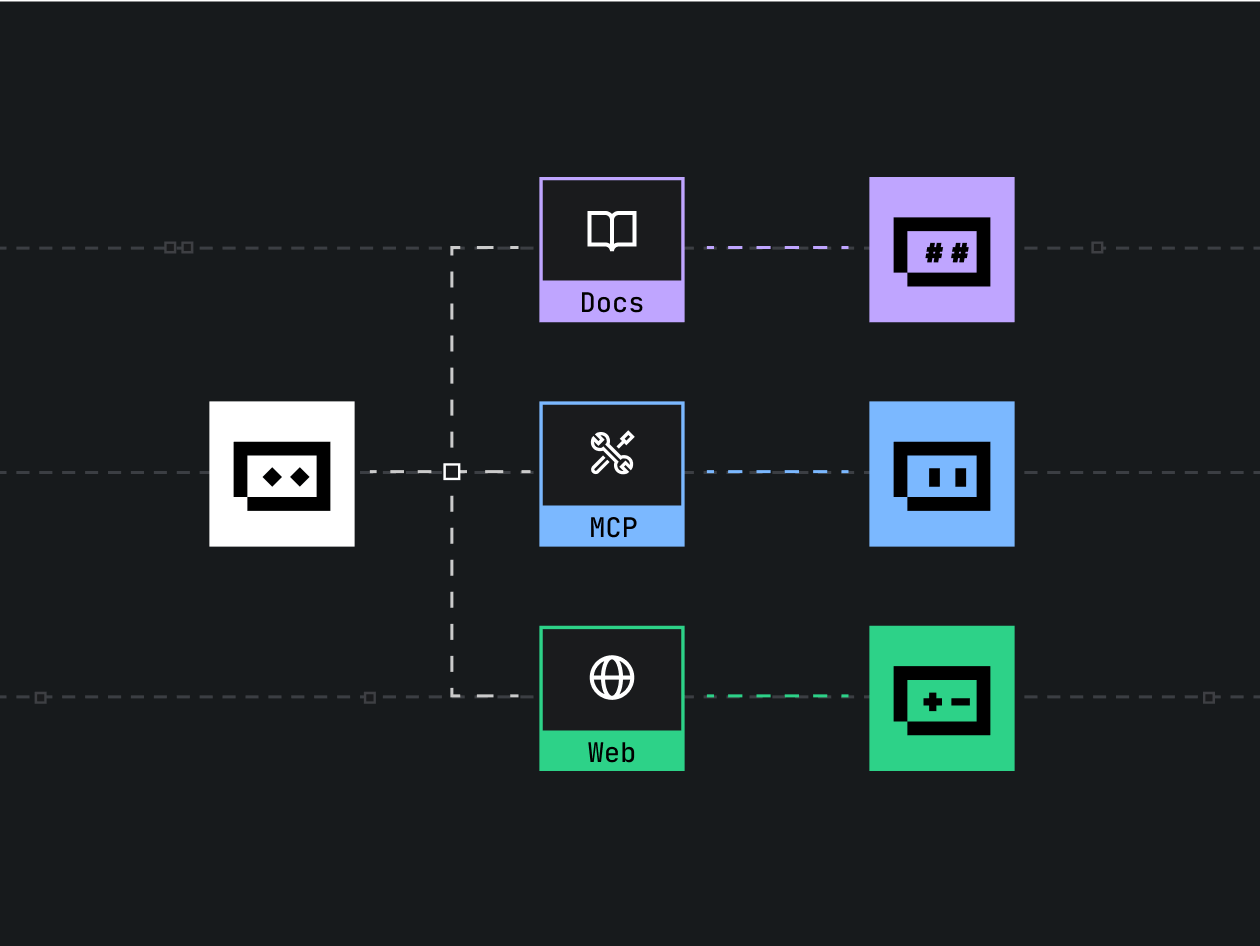

How they work

Most chatbots rely on a combination of natural language processing (NLP) to interpret user input and either rule-based logic or machine learning models to generate responses. Integration with electronic health records (EHRs) and APIs can enable personalized reminders or retrieval of medication lists. Some systems are supervised by clinicians and incorporate clinical decision support logic, while others operate as general informational agents.
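The following minimal Python sketch illustrates the rule-based approach with keyword-based intent routing. The intent names, patterns, and the `classify_intent` function are hypothetical and for illustration only. A deployed system would more likely use a trained NLP model or a much larger rule set. A message that matches no rule falls through to a human handoff rather than a guessed answer.

```python
import re

# Hypothetical intents and trigger patterns, for illustration only.
# Dict order is preserved, so earlier intents take priority.
INTENT_PATTERNS = {
    "schedule_appointment": re.compile(
        r"\b(book|schedule|reschedule)\b.*\b(appointment|visit)\b", re.IGNORECASE
    ),
    "medication_list": re.compile(
        r"\b(medications?|meds|prescriptions?)\b", re.IGNORECASE
    ),
    "clinic_hours": re.compile(r"\b(hours|open|closed?|closing)\b", re.IGNORECASE),
}

# Anything the rules do not recognize goes to a person, not a guess.
FALLBACK_INTENT = "handoff_to_staff"


def classify_intent(message: str) -> str:
    """Return the first intent whose pattern matches, else the human-handoff fallback."""
    for intent, pattern in INTENT_PATTERNS.items():
        if pattern.search(message):
            return intent
    return FALLBACK_INTENT
```

For example, `classify_intent("Can I book an appointment for Tuesday?")` returns `"schedule_appointment"`. By contrast, `"I have chest pain"` matches no rule and returns `"handoff_to_staff"`.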

Common use cases

Typical use cases include triage assistants that suggest whether urgent care is needed, scheduling and reminders that reduce missed appointments, mental health conversation agents that provide coping strategies, and patient education chatbots that explain procedures or test results. Research in journals such as the Journal of Medical Internet Research documents increasing adoption and varied effectiveness depending on design and clinical oversight.
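Appointment reminders are among the simpler tasks to automate. The sketch below computes when to send reminders for a given appointment. It assumes hypothetical offsets of 48 hours and 2 hours before the visit. Actual timing, channels, and message content would be set by each organization. Reminders whose send time has already passed are skipped.

```python
from datetime import datetime, timedelta

# Hypothetical reminder offsets; real schedules follow clinic policy.
REMINDER_OFFSETS = (timedelta(hours=48), timedelta(hours=2))


def reminder_times(appointment: datetime, now: datetime) -> list[datetime]:
    """Return the reminder send times that are still in the future, earliest first."""
    times = [appointment - offset for offset in REMINDER_OFFSETS]
    # Drop reminders whose send time is already past (e.g. a same-day booking).
    return sorted(t for t in times if t > now)
```

Consider an appointment at 09:00 on June 10 that is booked at 08:00 on June 9. Only the 07:00 June 10 reminder is returned, because the 48-hour reminder would already be in the past.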

Benefits and limitations

Potential benefits

Chatbots can provide 24/7 availability, standardize information delivery, reduce administrative workload, and extend access in areas with clinician shortages. When integrated with telemedicine and EHR systems, they can streamline workflows and improve patient engagement metrics such as medication adherence or follow-up rates.
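Adherence can be measured in several ways. One commonly used measure is the proportion of days covered (PDC). PDC is the share of days in a period on which the patient had medication on hand, based on fill dates and days' supply. The sketch below is a simplified illustration, and the function name and input format are assumptions. It does not carry overlapping supply forward, although some PDC definitions do.

```python
from collections.abc import Iterable
from datetime import date, timedelta


def proportion_of_days_covered(
    fills: Iterable[tuple[date, int]], start: date, end: date
) -> float:
    """Share of days in [start, end] covered by at least one fill.

    Each fill is a (fill_date, days_supply) pair. Overlapping supply is simply
    counted once rather than shifted forward, which this sketch omits.
    """
    if end < start:
        raise ValueError("end must not be before start")
    period_days = (end - start).days + 1
    covered: set[date] = set()
    for fill_date, days_supply in fills:
        for offset in range(days_supply):
            day = fill_date + timedelta(days=offset)
            if start <= day <= end:
                covered.add(day)
    return len(covered) / period_days
```

As an example, take two 10-day fills starting January 1 and January 16. Together they cover 20 of the 30 days from January 1 to 30, which gives a PDC of about 0.67.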

Key limitations

Limitations include risk of incorrect or incomplete recommendations, lack of empathy or nuance in sensitive conversations, potential bias in training data, and challenges in interpreting ambiguous symptoms. Chatbots are not a replacement for professional clinical judgment. Clinically actionable recommendations should involve clinician review or be supported by validated clinical decision support systems.
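One way to put clinician review into practice is to limit what the chatbot may say on its own. In the hypothetical sketch below, any clinically actionable suggestion is routed to a clinician. Other answers go to staff review when the model's confidence falls below a threshold. The 0.85 threshold, the labels, and the `route` function are illustrative assumptions. In practice, thresholds would be set through validation on local data.

```python
from dataclasses import dataclass

# Hypothetical threshold; a real value would come from local validation.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class ModelOutput:
    label: str         # suggested category from some classifier (illustrative)
    confidence: float  # model probability for that label, between 0 and 1


def route(output: ModelOutput, clinically_actionable: bool) -> str:
    """Decide whether a chatbot answer can be sent directly or needs human review."""
    if clinically_actionable:
        # Clinically actionable recommendations always go to a clinician.
        return "clinician_review"
    if output.confidence < CONFIDENCE_THRESHOLD:
        # Low-confidence, non-clinical answers are checked by staff first.
        return "staff_review"
    return "auto_respond"
```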

Privacy, security, and regulation

Handling personal health information requires attention to privacy and security. In the United States, the Health Insurance Portability and Accountability Act (HIPAA) sets standards for protecting medical information. Organizations deploying chatbots should ensure appropriate safeguards are in place and sign business associate agreements where applicable. Official guidance on HIPAA requirements is available from the U.S. Department of Health & Human Services (HHS).
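One common technical safeguard is keeping raw patient identifiers out of chatbot logs. The sketch below replaces an identifier with a keyed hash (HMAC-SHA256 from Python's standard library). Log entries for the same patient can still be linked, but the raw ID is not stored. The function name and key handling are assumptions, and key management is out of scope here. Pseudonymization alone is not a substitute for the de-identification standards HIPAA defines.

```python
import hashlib
import hmac


def pseudonymize(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient identifier with a keyed hash before it is written to logs.

    The same ID and key always produce the same token, so entries can be linked
    without storing the raw ID. The key must be stored and rotated separately.
    """
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()
```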

Regulatory oversight of clinical AI varies by jurisdiction. In the United States, the Food and Drug Administration (FDA) has issued guidance on clinical decision support software and software as a medical device (SaMD). This guidance may apply to a chatbot depending on its intended use. Developers and healthcare organizations should monitor regulatory updates and follow best practices for validation, monitoring, and reporting of safety issues.

Practical considerations for patients and providers

For patients

When interacting with a chatbot, verify whether the tool is intended for general information or clinical decision support. Look for transparency about the chatbot's data handling, whether conversations are stored, and how personal data are protected. Always follow up with a licensed clinician for serious, urgent, or unclear symptoms.

For providers and health systems

Selecting or deploying a chatbot should include evaluation of clinical validity, data security measures, interoperability with EHRs, and governance processes for monitoring performance and addressing errors. Institutional review, clinician oversight, and patient consent practices help mitigate risk. Peer-reviewed evaluations and pilot studies can inform procurement decisions.

Evidence and ongoing research

Evidence on effectiveness varies by application. Randomized trials and observational studies have shown benefits for certain tasks such as appointment reminders and behavioral interventions, while diagnostic triage tools show variable sensitivity and specificity. Academic and clinical research continues to evaluate long-term outcomes, equity impacts, and best practices for safe deployment.
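Sensitivity and specificity summarize how a triage tool performs against a reference standard such as clinician assessment. Sensitivity is the share of truly urgent cases the tool flags as urgent, TP / (TP + FN). Specificity is the share of non-urgent cases the tool correctly leaves unflagged, TN / (TN + FP). The counts in the example below are hypothetical, not results from any study.

```python
def sensitivity_specificity(tp: int, fn: int, tn: int, fp: int) -> tuple[float, float]:
    """Compute sensitivity and specificity from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # urgent cases correctly flagged
    specificity = tn / (tn + fp)  # non-urgent cases correctly not flagged
    return sensitivity, specificity


# Hypothetical counts for illustration only.
sens, spec = sensitivity_specificity(tp=45, fn=5, tn=120, fp=30)
```

With these made-up counts, the tool misses 5 of 50 urgent cases (sensitivity 0.9). It also flags 30 of 150 non-urgent cases unnecessarily (specificity 0.8).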

Are healthcare assistant chatbots reliable for medical advice?

Chatbots can provide general information and support for routine administrative tasks, but they are not a substitute for professional medical advice. Reliance on chatbots for diagnosis or treatment decisions without clinician oversight is not recommended. For urgent or serious health concerns, contacting a licensed healthcare provider or emergency services is essential.

How do chatbots handle private health data?

Handling of private health data depends on the system design and applicable laws. Secure transmission, encryption, access controls, and clear policies on data retention help protect information. Patients should review privacy notices and ask providers how conversational data will be used and stored.
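A data retention policy ultimately has to be enforced in code. The sketch below keeps only conversation transcripts newer than a retention window. The 30-day window and the `purge_expired` function are hypothetical, as is the record layout (a dict with a timezone-aware `created_at`). A real deployment would delete records from primary storage and backups according to its documented policy. This sketch only filters an in-memory list.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention window; the real value comes from policy and law.
RETENTION = timedelta(days=30)


def purge_expired(transcripts: list[dict], now: datetime) -> list[dict]:
    """Return only transcripts created within the retention window.

    Each transcript is assumed to carry a timezone-aware 'created_at' datetime.
    """
    cutoff = now - RETENTION
    return [t for t in transcripts if t["created_at"] >= cutoff]


now = datetime(2024, 7, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "created_at": datetime(2024, 5, 1, tzinfo=timezone.utc)},
    {"id": 2, "created_at": datetime(2024, 6, 20, tzinfo=timezone.utc)},
]
kept = purge_expired(records, now)  # only record 2 falls inside the window
```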

Will chatbots replace clinicians?

Chatbots are designed to augment, not replace, clinicians by automating routine tasks and supporting decision-making. Clinical judgment, hands-on examination, and complex decision-making remain the domain of trained healthcare professionals.

What regulations apply to healthcare chatbots?

Regulation depends on the function of the chatbot and the jurisdiction. Tools that qualify as medical devices or provide diagnostic/therapeutic recommendations may fall under medical device regulations. Data protection laws such as HIPAA in the United States govern handling of protected health information. Providers and developers should consult legal and regulatory experts when deploying clinical tools.

How should a chatbot be evaluated before use?

Check for documented clinical validation, clear disclosures about limitations, privacy and security safeguards, and mechanisms for escalation to human clinicians. Patient reviews, pilot study results, and institutional endorsements provide additional context but should not replace formal evaluation.
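Evaluation teams may find it useful to track these criteria as a structured checklist. The sketch below uses hypothetical criterion names that mirror the checks above. It reports which required items are missing or unmet. It is a bookkeeping aid, not a substitute for formal evaluation.

```python
# Hypothetical criterion keys mirroring the checks described above.
REQUIRED_CRITERIA = (
    "clinical_validation_documented",
    "limitations_disclosed",
    "privacy_security_safeguards",
    "human_escalation_path",
)


def missing_criteria(evaluation: dict[str, bool]) -> list[str]:
    """Return required criteria that are absent from the evaluation or marked unmet."""
    return [c for c in REQUIRED_CRITERIA if not evaluation.get(c, False)]
```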

For detailed evaluations and current standards, consult guidance from national health regulators and the peer-reviewed literature.