Cloud Availability and Reliability: Practical Guide to Uptime, Redundancy, and High Availability


Cloud availability and reliability are the foundations of any service that must stay online and responsive. This guide explains key terms, uptime metrics, redundancy patterns, and practical steps to design systems that meet business availability goals while balancing cost and complexity.

- Availability and reliability measure uptime, fault tolerance, and recovery behavior.

- Common patterns: replication, failover, load balancing, isolation across fault domains.

- Use an explicit checklist, such as the 3-2-1 Redundancy Checklist, and set RTO/RPO targets tied to SLAs.

Cloud availability and reliability: core concepts

Definitions and metrics

Availability is the proportion of time a system is operational and accessible; reliability is the probability that a system will function without failure over a given time period. Key metrics include uptime percentage ("five 9s" means 99.999%), mean time between failures (MTBF), and mean time to repair (MTTR). Service-level objectives (SLOs) are internal targets for these metrics, and service-level agreements (SLAs) are the commitments made to customers. RTO (Recovery Time Objective) and RPO (Recovery Point Objective) translate business tolerance for downtime and data loss into technical targets.
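To make these metrics concrete, here is a minimal sketch that converts an availability target into a yearly downtime allowance and estimates steady-state availability as MTBF / (MTBF + MTTR). The function names and the 365-day year are assumptions made for illustration, not part of any standard tooling.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # assumption: non-leap year


def steady_state_availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Long-run fraction of time in service: MTBF / (MTBF + MTTR)."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def allowed_downtime_minutes(availability: float,
                             period_minutes: float = MINUTES_PER_YEAR) -> float:
    """Downtime allowed in the period for an availability given as a fraction."""
    return (1.0 - availability) * period_minutes


if __name__ == "__main__":
    for target in (0.999, 0.9995, 0.9999, 0.99999):
        minutes = allowed_downtime_minutes(target)
        print(f"{target:.3%} -> {minutes:.1f} minutes of downtime per year")
    # Hypothetical component: fails every 999 hours on average, 1 hour to repair.
    print(steady_state_availability(mtbf_hours=999.0, mttr_hours=1.0))
```

Five 9s leaves roughly five minutes of downtime per year, which is why each additional 9 tends to demand a different class of architecture rather than incremental tuning.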

Related terms and components

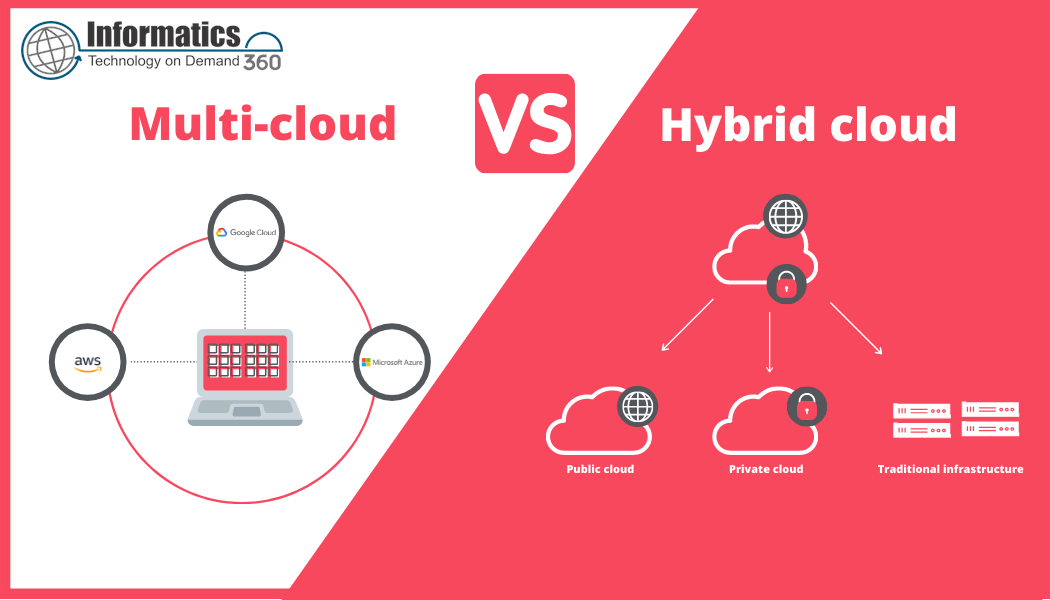

Important concepts include fault domains (hardware, rack, AZ), redundancy (active-active, active-passive), replication (synchronous vs asynchronous), failover, load balancing, and disaster recovery (DR). These terms appear across architecture documents and compliance standards from bodies such as NIST; see the NIST Cloud Computing program for definitions and best practices.

Design patterns: uptime and redundancy strategies

Common redundancy patterns

Typical patterns to increase availability include:

- Active-active multi-zone deployments: distribute traffic across zones so that one zone can be drained for maintenance without downtime.

- Active-passive failover: standby instances take over when the primary fails (simpler, but with a longer RTO).

- Read replicas and sharding: offload reads and isolate failures in the data layer.

- Stateless services with externalized session state: enables horizontal scaling and easier failover.
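The effect of these patterns on availability can be estimated with basic probability. The sketch below assumes component failures are independent, which real zones and services only approximate, so treat the results as upper bounds. The helper names `serial` and `parallel` are illustrative.

```python
from math import prod


def serial(*availabilities: float) -> float:
    """Availability of a chain where every component must be up."""
    return prod(availabilities)


def parallel(*availabilities: float) -> float:
    """Availability of redundant components where any one being up is enough.

    Assumes independent failures; correlated outages make the real figure lower.
    """
    return 1.0 - prod(1.0 - a for a in availabilities)


if __name__ == "__main__":
    # Hypothetical figures: each zone is 99.9% available on its own.
    two_zones = parallel(0.999, 0.999)
    # The redundant pair still depends on a single load balancer at 99.99%.
    end_to_end = serial(two_zones, 0.9999)
    print(f"two zones: {two_zones:.6f}, with load balancer: {end_to_end:.6f}")
```

Note how the non-redundant load balancer caps the end-to-end figure: redundancy in one layer does not help if a serial dependency remains a single point of failure.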

Trade-offs between consistency and availability

Synchronous replication improves durability but can increase write latency, because each write waits for a replica to acknowledge it. Asynchronous replication lowers write latency but accepts a short window of potential data loss if the primary fails before recent writes reach the replica. Align these trade-offs with RTO and RPO targets and business tolerance for data loss.
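One way to reason about this trade-off is to compare the worst-case data-loss window of a replication link against the RPO. The sketch below is a simplification: it treats synchronous replication as having no loss window for acknowledged writes and uses peak observed lag as the window for asynchronous links. The `ReplicaLink` type and the sample figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReplicaLink:
    name: str
    synchronous: bool
    peak_lag_seconds: float  # worst observed lag; ignored for synchronous links


def data_loss_window_seconds(link: ReplicaLink) -> float:
    """Approximate worst-case window of writes lost if the primary fails."""
    return 0.0 if link.synchronous else link.peak_lag_seconds


def meets_rpo(link: ReplicaLink, rpo_seconds: float) -> bool:
    return data_loss_window_seconds(link) <= rpo_seconds


if __name__ == "__main__":
    links = [
        ReplicaLink("same-region-sync", synchronous=True, peak_lag_seconds=0.0),
        ReplicaLink("cross-region-async", synchronous=False, peak_lag_seconds=45.0),
    ]
    for link in links:
        print(link.name, "meets 30 s RPO:", meets_rpo(link, rpo_seconds=30.0))
```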

3-2-1 Redundancy Checklist

The 3-2-1 Redundancy Checklist adapts the backup principle to availability planning:

- 3 copies of critical services or data (primary + 2 replicas).

- 2 different fault domains (separate racks or availability zones).

- 1 offsite copy or separate region for disaster recovery.

Apply this checklist to compute, data, and networking layers. For example, keep 3 database copies (a primary and 2 replicas) across 2 AZs, plus a cross-region backup for full DR.
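The checklist is simple enough to verify automatically against an inventory of where copies live. The following is a minimal sketch; the `Copy` type, the region and zone names, and the choice to treat a (region, zone) pair as one fault domain are all assumptions made for illustration. It uses "at least" comparisons, so the offsite copy may be one of the three or an additional one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str
    region: str
    zone: str


def check_3_2_1(copies: list[Copy], primary_region: str) -> dict[str, bool]:
    """Evaluate each rule of the 3-2-1 Redundancy Checklist separately."""
    fault_domains = {(c.region, c.zone) for c in copies}
    return {
        "three_copies": len(copies) >= 3,
        "two_fault_domains": len(fault_domains) >= 2,
        "one_offsite": any(c.region != primary_region for c in copies),
    }


if __name__ == "__main__":
    # Hypothetical layout matching the example in the text.
    inventory = [
        Copy("db-primary", "region-a", "az-1"),
        Copy("db-replica-1", "region-a", "az-1"),
        Copy("db-replica-2", "region-a", "az-2"),
        Copy("db-backup", "region-b", "az-1"),
    ]
    for rule, passed in check_3_2_1(inventory, primary_region="region-a").items():
        print(f"{rule}: {'ok' if passed else 'MISSING'}")
```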

Practical implementation: steps and checklist

Step-by-step actions

- Define business SLAs, translate to SLOs, then to technical RTO and RPO targets.

- Map dependencies and fault domains; identify single points of failure.

- Select redundancy patterns (active-active, active-passive, geographic replication) that meet targets.

- Design automated health checks and failover playbooks; test them regularly.

- Monitor MTTR and iterate: use post-incident reviews to harden the design.
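The automated health check and failover step can be sketched as a small state machine that fails over only after several consecutive failed checks, which avoids flapping on a single transient error. The `FailoverMonitor` class, the threshold of 3, and the manual failback policy are assumptions for illustration; real load balancers and orchestrators provide their own equivalents.

```python
class FailoverMonitor:
    """Tracks health-check results and decides which target serves traffic."""

    def __init__(self, failure_threshold: int = 3) -> None:
        if failure_threshold < 1:
            raise ValueError("failure_threshold must be at least 1")
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0
        self.active = "primary"

    def record(self, healthy: bool) -> str:
        """Record one health-check result and return the active target."""
        if self.active != "primary":
            # Already failed over; failback is a manual step in this sketch.
            return self.active
        if healthy:
            self.consecutive_failures = 0
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.failure_threshold:
                self.active = "standby"
        return self.active


if __name__ == "__main__":
    monitor = FailoverMonitor(failure_threshold=3)
    for result in (True, False, False, True, False, False, False):
        print(result, "->", monitor.record(result))
```

Because the decision logic is a pure function of the check results, it can be exercised in a unit test long before a real outage does so in production.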

Real-world example

Scenario: An e-commerce platform requires 99.95% availability during business hours and an RTO of 15 minutes for the checkout service.

Architecture choice: a stateless checkout service deployed active-active across two availability zones, a distributed session store with cross-zone replication, and a primary database replicated asynchronously to a standby in a second region. Automated health checks and DNS failover reduce RTO; offline backups follow the 3-2-1 checklist for recovery from a catastrophic region failure.
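A quick sanity check for a design like this is to add up the stages of a regional failover and compare the total against the RTO. The breakdown below (detection, standby promotion, DNS TTL expiry) and every number in it are assumptions chosen for illustration, not measurements from the scenario.

```python
def estimated_failover_seconds(check_interval_s: int,
                               failure_threshold: int,
                               promotion_s: int,
                               dns_ttl_s: int) -> int:
    """Rough worst-case failover time: detect, promote standby, wait out DNS TTL."""
    detection_s = check_interval_s * failure_threshold
    return detection_s + promotion_s + dns_ttl_s


if __name__ == "__main__":
    rto_seconds = 15 * 60  # the 15-minute RTO from the scenario
    estimate = estimated_failover_seconds(
        check_interval_s=30,   # hypothetical health-check interval
        failure_threshold=3,   # hypothetical consecutive failures before failover
        promotion_s=120,       # hypothetical time to promote the standby database
        dns_ttl_s=300,         # hypothetical DNS record TTL
    )
    print(f"estimated failover: {estimate} s, within RTO: {estimate <= rto_seconds}")
```

With these figures the DNS TTL is the largest contributor, so lowering it would shorten failover more than tightening the health-check interval.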

Practical tips

- Instrument and measure: collect uptime, MTTR, and error budgets before making architecture changes.

- Test failure modes with scheduled chaos tests; validate recovery playbooks end-to-end.

- Design for graceful degradation: serve reduced functionality rather than complete outage when components fail.

- Automate failover and recovery steps where human error is likely under stress.

- Keep runbooks concise and version-controlled alongside infrastructure as code.
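The error budget mentioned in the first tip can be computed from request counts. This is a minimal sketch assuming a request-based SLO, where the budget is the number of failed requests the SLO permits in the measurement window; the function name and sample numbers are illustrative.

```python
def error_budget_remaining(slo: float,
                           total_requests: int,
                           failed_requests: int) -> float:
    """Fraction of the error budget still unspent; negative when overspent.

    slo is a fraction such as 0.999. The budget is the share of requests
    that the SLO allows to fail within the measurement window.
    """
    allowed_failures = (1.0 - slo) * total_requests
    if allowed_failures <= 0:
        raise ValueError("an SLO of 100% (or zero traffic) leaves no error budget")
    return 1.0 - failed_requests / allowed_failures


if __name__ == "__main__":
    remaining = error_budget_remaining(slo=0.999,
                                       total_requests=1_000_000,
                                       failed_requests=400)
    print(f"error budget remaining: {remaining:.0%}")
```

A team might slow down risky releases once the remaining budget drops below an agreed threshold, and resume normal pace when the window resets.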

Trade-offs and common mistakes

Common mistakes

- Relying on cloud provider availability guarantees without architecting for provider-level failures.

- Over-relying on a single fault domain (one AZ, one VPC) for critical components.

- Not testing backup restores or failover procedures; an untested DR plan cannot be trusted to meet its RTO or RPO.

- Chasing “five 9s” without considering cost and diminishing returns.

Key trade-offs

Higher availability typically increases cost and complexity. Active-active designs lower RTO but require more sophisticated state management and load balancing. Cross-region redundancy improves disaster resilience but raises latency and data transfer costs. Align architecture decisions with business value and error budgets.

What to monitor

Track uptime, latency percentiles, MTTR, database replication lag, error rates, and infrastructure health metrics. Correlate alerts with runbooks and incident management tools to shorten resolution time.
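Latency percentiles are worth computing explicitly because averages hide tail behavior. The sketch below uses the nearest-rank method, which is one of several common definitions; monitoring systems may interpolate differently, so the choice of method here is an assumption.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical request latencies in milliseconds, with one slow outlier.
    latencies_ms = [12.0, 15.0, 11.0, 14.0, 13.0, 16.0, 12.0, 18.0, 13.0, 950.0]
    mean = sum(latencies_ms) / len(latencies_ms)
    print(f"mean={mean:.1f} ms, p50={percentile(latencies_ms, 50)} ms, "
          f"p99={percentile(latencies_ms, 99)} ms")
```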

Next steps and governance

Set measurable SLOs, implement the 3-2-1 Redundancy Checklist, and schedule regular DR drills. Maintain clear ownership for critical systems and tie availability objectives to release and change management processes to avoid regressions.

What is cloud availability and reliability?

Availability is the measurable percentage of time a system is operational; reliability describes the probability of continuous operation over time. Both are achieved through redundancy, monitoring, and tested recovery procedures that meet defined RTO and RPO targets.

How are uptime and SLA penalties calculated?

Uptime is typically calculated as (Total time - Downtime) / Total time, expressed as a percentage over a measurement window such as a calendar month. SLAs often define measurement windows and exclusions; read the SLA fine print to understand uptime calculations and penalty caps.
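As a worked example, the sketch below applies the uptime formula to a 30-day month and looks up a service credit from a tier table. The tiers and credit percentages are hypothetical and do not reflect any particular provider's SLA; actual terms, exclusions, and caps vary.

```python
def uptime_percent(total_minutes: float, downtime_minutes: float) -> float:
    """(Total time - Downtime) / Total time, as a percentage."""
    return (total_minutes - downtime_minutes) / total_minutes * 100


# Hypothetical tiers: (uptime threshold %, credit % if uptime falls below it).
HYPOTHETICAL_CREDIT_TIERS = [(99.9, 10.0), (99.0, 25.0)]


def service_credit_percent(uptime_pct: float,
                           tiers: list[tuple[float, float]]) -> float:
    """Return the credit for the lowest threshold that the uptime falls below."""
    for threshold, credit in sorted(tiers):
        if uptime_pct < threshold:
            return credit
    return 0.0


if __name__ == "__main__":
    month_minutes = 30 * 24 * 60  # 43,200 minutes in a 30-day month
    uptime = uptime_percent(month_minutes, downtime_minutes=50)
    credit = service_credit_percent(uptime, HYPOTHETICAL_CREDIT_TIERS)
    print(f"uptime: {uptime:.3f}%, service credit: {credit}%")
```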

How many availability zones or regions are needed?

The number depends on business risk tolerance: two AZs may cover most hardware and rack failures; multi-region strategies are required for full geographic disaster resilience. Use the 3-2-1 checklist to guide decisions.

What's the difference between redundancy and disaster recovery?

Redundancy keeps services running despite component failure (short-term resilience). Disaster recovery focuses on restoring service after a catastrophic event, often involving cross-region failover and data restores.

Can availability be improved without doubling costs?

Yes. Prioritize high-value components for active-active deployment, apply targeted redundancy where it matters, reduce the blast radius of failures, and automate recovery to cut the cost of human error.