How Distributed Systems and AI Will Shape the Future of Cloud Computing

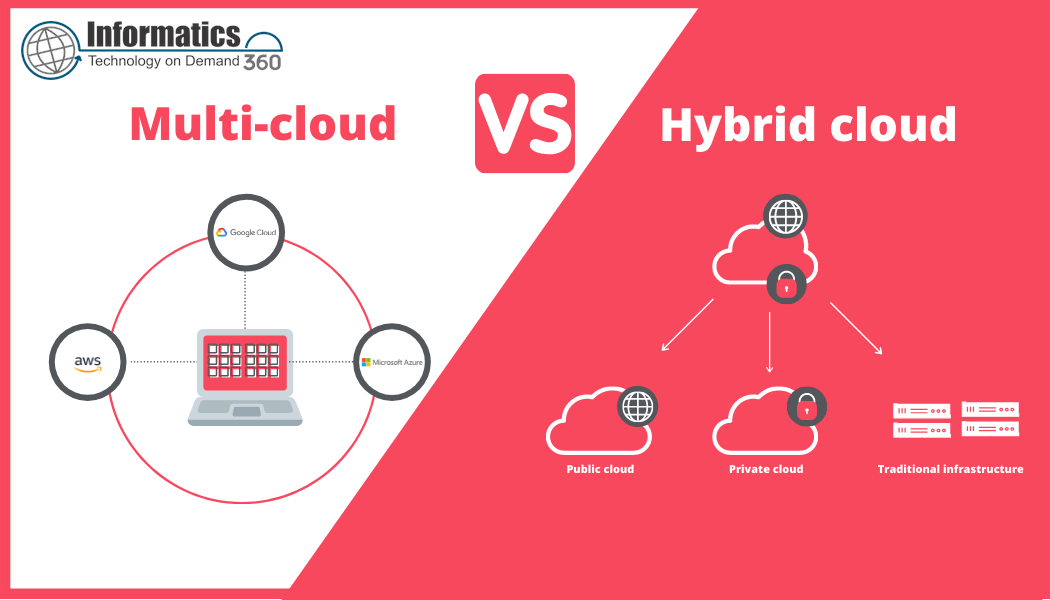

The future of cloud computing is shifting from centralized resource pools toward hybrid, distributed systems that natively integrate AI workloads. This transition changes how applications are designed, where models are trained and served, and which operational disciplines are required to keep systems resilient, performant, and cost-effective.

The future of cloud computing: core trends and definitions

Expect tighter coupling between distributed systems and AI. Distributed microservices, containers, and orchestration platforms will host model training and inference side-by-side with traditional services. Terms to know: model serving, federated learning, data locality, model parallelism, parameter servers, MLOps, and serverless inference.

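Standards and definitions from organizations such as NIST provide a baseline for classification and risk assessment. NIST's cloud computing publications, including SP 800-145 (The NIST Definition of Cloud Computing), offer foundational guidance on cloud service and deployment models, and its broader catalog covers security controls.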

Key architecture patterns where distributed systems and AI meet

Hybrid training and federated learning

Training can be distributed across cloud clusters and edge devices to meet privacy or latency needs. Federated learning keeps data on-device and aggregates model updates, reducing data movement for regulated workloads.
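
The aggregation step can be sketched as a sample-weighted average of client weight vectors, in the style of federated averaging. This is a minimal illustration, not a production protocol: the function name `federated_average`, the flat list-of-floats weight format, and the example numbers are all assumptions made for the sketch.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Average client weight vectors, weighting each by its local sample count.

    Each update is (weights, num_local_samples). Raw data never appears here;
    only the locally trained weights are shared with the aggregator.
    """
    if not updates:
        raise ValueError("need at least one client update")
    total_samples = sum(n for _, n in updates)
    if total_samples <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all clients must report the same number of weights")
        share = n / total_samples  # clients with more data get more influence
        for i, w in enumerate(weights):
            averaged[i] += w * share
    return averaged


# Hypothetical round with two clients holding 100 and 300 samples.
client_updates = [([1.0, 2.0], 100), ([3.0, 4.0], 300)]
global_weights = federated_average(client_updates)
```

Weighting by sample count is one common choice; a real deployment would also need to handle stragglers, dropped clients, and update validation.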

Cloud-native AI infrastructure and model serving

Cloud-native AI infrastructure brings together orchestration (Kubernetes), GPU/accelerator scheduling, model registries, and feature stores. This pattern emphasizes reproducible pipelines and automated rollouts for models in production.
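
To make the registry idea concrete, here is a small in-memory sketch in which each model version is pinned to a content hash, so a published version cannot be silently overwritten. The `ModelRegistry` class and its methods are hypothetical and do not correspond to any particular registry product.

```python
import hashlib
from typing import Dict, Tuple


class ModelRegistry:
    """Toy in-memory registry: (name, version) maps to an immutable artifact."""

    def __init__(self) -> None:
        self._digests: Dict[Tuple[str, str], str] = {}
        self._blobs: Dict[str, bytes] = {}

    def register(self, name: str, version: str, payload: bytes) -> str:
        """Store an artifact and return its SHA-256 digest."""
        digest = hashlib.sha256(payload).hexdigest()
        existing = self._digests.get((name, version))
        if existing is not None and existing != digest:
            # Re-publishing different bytes under the same version breaks
            # reproducibility, so the registry refuses it.
            raise ValueError(f"{name}:{version} already registered with different content")
        self._digests[(name, version)] = digest
        self._blobs[digest] = payload
        return digest

    def fetch(self, name: str, version: str) -> bytes:
        """Return the artifact bytes after re-checking their digest."""
        digest = self._digests[(name, version)]
        payload = self._blobs[digest]
        if hashlib.sha256(payload).hexdigest() != digest:
            raise RuntimeError("stored artifact failed its integrity check")
        return payload


registry = ModelRegistry()
digest = registry.register("shelf-detector", "1.0.0", b"serialized-model-bytes")
```

Registering the same bytes twice is treated as a no-op, which keeps automated pipelines idempotent when a job is retried.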

Edge inference and data locality

Edge computing for AI reduces inference latency and bandwidth usage by running lightweight models near users or sensors. The trade-offs include limited compute, model compression needs, and more complex deployment tooling.
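
One common form of model compression is quantizing weights to 8-bit integers. The sketch below shows symmetric per-tensor int8 quantization on a plain list of floats; the function names are illustrative, and real toolchains apply this per layer or per channel with calibration data.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.27]
q_weights, q_scale = quantize_int8(weights)
restored = dequantize_int8(q_weights, q_scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now needs one byte instead of four (for float32), at the cost of a bounded rounding error that should be checked against accuracy on representative inputs.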

SCALE framework: a practical checklist for AI + distributed cloud design

Use the SCALE framework to evaluate and design systems:

- Scalability: Can training and inference scale horizontally across nodes and regions?

- Compliance: Are data residency, encryption, and audit requirements satisfied?

- Availability: How will model serving remain resilient under node failures?

- Latency: Where must inference run to meet SLOs: cloud, edge, or hybrid?

- Efficiency: Are cost and energy trade-offs optimized through batching, quantization, or serverless bursts?
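
The Latency and Compliance questions can be turned into a simple placement check. The sketch below is one possible rule under stated assumptions: all field names are hypothetical, latency is modeled as round-trip time plus inference time, and the cloud is preferred whenever it qualifies because it is simpler to manage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    slo_ms: float            # end-to-end latency target
    cloud_rtt_ms: float      # network round trip to the cloud region
    cloud_infer_ms: float    # inference time on cloud hardware
    edge_infer_ms: float     # inference time on the edge device
    data_must_stay_local: bool = False  # residency or privacy constraint


def choose_placement(w: Workload) -> str:
    """Return "cloud", "edge", or "unmet" for a single inference workload."""
    cloud_total = w.cloud_rtt_ms + w.cloud_infer_ms
    cloud_ok = cloud_total <= w.slo_ms and not w.data_must_stay_local
    edge_ok = w.edge_infer_ms <= w.slo_ms
    if cloud_ok:
        return "cloud"  # assumed preference: central management is simpler
    if edge_ok:
        return "edge"
    return "unmet"      # neither option meets the SLO; the model or SLO must change


placement = choose_placement(
    Workload(slo_ms=100, cloud_rtt_ms=80, cloud_infer_ms=40, edge_infer_ms=60)
)
```

A fuller version would also weigh the Scalability, Availability, and Efficiency items, for example by adding cost per request or failover capacity to the decision.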

Real-world example: distributed ML across cloud and edge

A retail chain runs store-level sensors that detect shelf stock. Training occurs in the central cloud using aggregated, anonymized data, while per-store fine-tuning and inference run on on-site devices to meet a sub-second SLA for restocking alerts. Model updates are validated in a staging namespace, then pushed via a CI/CD pipeline to edge orchestrators, which apply a rollback-safe strategy.
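
A rollback-safe push to store devices might look like the following sketch: deploy to a small canary group first, check health, then continue, restoring the previous versions if any check fails. The function, the `healthy` callback, and the store names are hypothetical; the source does not describe the chain's actual tooling.

```python
from typing import Callable, Dict, List


def rollout(
    stores: List[str],
    deployed: Dict[str, str],
    new_version: str,
    healthy: Callable[[str, str], bool],
    canary_count: int = 1,
) -> bool:
    """Deploy new_version to canaries, then the rest; roll back on failure.

    `deployed` maps store id to model version and is updated in place.
    Returns True if every store ends up healthy on new_version.
    """
    previous = dict(deployed)  # snapshot used for rollback
    canaries, rest = stores[:canary_count], stores[canary_count:]
    for batch in (canaries, rest):
        for store in batch:
            deployed[store] = new_version
        if not all(healthy(store, new_version) for store in batch):
            # Restore every store, including ones that passed earlier checks.
            deployed.clear()
            deployed.update(previous)
            return False
    return True


stores = ["store-a", "store-b", "store-c"]
fleet = {s: "v1" for s in stores}
ok = rollout(stores, fleet, "v2", healthy=lambda store, version: True)
```

In practice the health check would compare alert latency and prediction quality against the staging baseline rather than return a constant.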

Operational checklist and practical tips

Practical tips for implementing distributed systems and AI integration:

- Design pipelines for reproducibility: use versioned data, deterministic preprocessing, and immutable model artifacts in a registry.

- Automate deployment with blue/green or canary releases for models; include automated performance regression tests in CI.

- Use workload-aware orchestration: schedule GPU/TPU jobs and use autoscaling rules that consider model warm-up cost.

- Apply model compression and quantization for edge inference to reduce latency and energy use.

- Monitor model drift and data drift separately from system health; capture input distributions and prediction histograms.
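
For the drift-monitoring tip, one way to compare a captured input distribution against a training-time baseline is the Population Stability Index (PSI). The sketch below assumes fixed bin edges chosen at training time; the helper names and sample values are illustrative, and alert thresholds need tuning per feature.

```python
import math
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values per bin; out-of-range values fall into the end bins."""
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    return [c / len(values) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions."""
    score = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        score += (a - e) * math.log(a / e)
    return score


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = histogram([0.1, 0.2, 0.3, 0.4], edges)   # training-time inputs
live = histogram([0.6, 0.7, 0.8, 0.9], edges)       # production inputs
drift_score = psi(baseline, live)
```

A score of zero means the binned distributions match; larger values indicate a bigger shift. The same function can be applied to prediction histograms to track model drift separately from data drift.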

Common mistakes and trade-offs to evaluate

Watch for these pitfalls when weighing design options:

- Over-centralizing inference: central clouds simplify management but increase latency and bandwidth costs for real-time use cases.

- Neglecting observability: models without input/output telemetry cause slow detection of accuracy degradation.

- Underestimating data governance: distributing data across regions increases compliance complexity and audit surface.

- Premature optimization: aggressively compressing models can harm accuracy, so validate on representative workloads.

Tooling and integration roadmap

Start with a minimal, repeatable pipeline: containerized training jobs, a model registry, and automated testing. Expand to multi-cluster orchestration for geo-distributed workloads. Add hardware-aware schedulers, feature stores, and federated learning libraries as needed. Choose open standards where possible to avoid vendor lock-in and to improve portability.

Conclusion: balancing innovation and operational rigor

The future of cloud computing will be defined by hybrid, distributed architectures that make AI a first-class workload. Success depends on designing for locality, observability, and reproducibility while making informed trade-offs between latency, cost, and governance.

FAQ

What is the future of cloud computing with respect to AI and distributed systems?

Expect hybrid models combining centralized training and distributed inference, standardized model lifecycle management, and stronger emphasis on data locality, observability, and compliance. Architectures will balance edge and cloud resources depending on latency and privacy needs.

How do distributed systems and AI affect operational costs and complexity?

Costs can rise due to specialized hardware (GPUs/TPUs), increased networking, and more complex deployment pipelines. Complexity grows with multi-region orchestration and edge fleets; automation and clear SLAs mitigate operational burdens.

When should an organization use edge computing for AI instead of a central cloud?

Use edge computing when strict latency requirements, bandwidth limits, or data locality/privacy rules make centralized inference impractical. Examples include industrial control, on-device personalization, and privacy-sensitive healthcare scenarios.

What security and compliance changes are needed for distributed AI deployments?

Implement end-to-end encryption, role-based access, audit trails for model updates, and data residency controls. Federated approaches help reduce data transfer, but require secure aggregation and robust authentication across nodes.
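
The idea behind secure aggregation can be illustrated with pairwise masks that cancel when updates are summed, so the aggregator learns the total but not any single contribution. This is a toy sketch only: it uses Python's non-cryptographic `random` module, generates all masks in one place, and ignores client dropouts, all of which a real protocol must address. Every name here is hypothetical.

```python
import random
from typing import Dict, List

MODULUS = 2**32  # arithmetic is done on fixed-point integers modulo this value


def pairwise_masks(client_ids: List[str], dim: int, seed: int) -> Dict[str, List[int]]:
    """Build per-client masks whose element-wise sum is zero modulo MODULUS."""
    rng = random.Random(seed)  # stand-in for pairwise key agreement between clients
    masks = {cid: [0] * dim for cid in client_ids}
    for i, a in enumerate(client_ids):
        for b in client_ids[i + 1:]:
            shared = [rng.randrange(MODULUS) for _ in range(dim)]
            for k in range(dim):
                masks[a][k] = (masks[a][k] + shared[k]) % MODULUS  # a adds the mask
                masks[b][k] = (masks[b][k] - shared[k]) % MODULUS  # b subtracts it
    return masks


def mask_update(update: List[int], mask: List[int]) -> List[int]:
    """Hide a client's integer update behind its mask before upload."""
    return [(u + m) % MODULUS for u, m in zip(update, mask)]


def aggregate(masked_updates: List[List[int]]) -> List[int]:
    """Sum masked updates; the pairwise masks cancel in the total."""
    return [sum(column) % MODULUS for column in zip(*masked_updates)]


updates = {"a": [1, 2], "b": [10, 20], "c": [100, 200]}
masks = pairwise_masks(list(updates), dim=2, seed=7)
uploaded = [mask_update(updates[cid], masks[cid]) for cid in updates]
total = aggregate(uploaded)
```

The aggregate equals the plain sum of the three updates, while each uploaded vector on its own looks like random integers.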

How can teams measure success when integrating AI into distributed cloud systems?

Track both system-level KPIs (latency, availability, cost per inference) and model-level KPIs (accuracy, drift, calibration). Use dashboards that correlate input distribution changes with performance regressions and set automated alerts for threshold breaches.
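
A minimal sketch of the system-level side: compute a latency percentile and cost per inference, then flag any metric that crosses its threshold. The metric names, thresholds, and sample numbers are made up for illustration.

```python
import math
from typing import Dict, List, Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("need at least one value")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def cost_per_inference(total_cost: float, request_count: int) -> float:
    """Average spend per served request over a billing window."""
    if request_count <= 0:
        raise ValueError("request_count must be positive")
    return total_cost / request_count


def breached(metrics: Dict[str, float], max_allowed: Dict[str, float]) -> List[str]:
    """Return the names of metrics that exceed their configured ceiling."""
    return sorted(name for name, limit in max_allowed.items()
                  if metrics.get(name, 0.0) > limit)


latencies_ms = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]
metrics = {
    "p95_latency_ms": percentile(latencies_ms, 95),
    "cost_per_inference": cost_per_inference(12.0, 4000),
}
alerts = breached(metrics, {"p95_latency_ms": 90.0, "cost_per_inference": 0.01})
```

Model-level KPIs such as accuracy or calibration can be fed through the same threshold check once they are computed from labeled samples.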