Build an AI Chatbot: Step-by-Step Guide to Design, Train, and Deploy


Building a useful, maintainable system requires planning. This guide explains how to build AI chatbot systems that are practical for production: from chatbot architecture design and training to deployment and monitoring. The primary focus is to help technical product teams and developers convert user needs into a working conversational service.

Build AI chatbot systems by defining scope and intents, choosing an architecture (rule-based, retrieval, or LLM), preparing data and models, deploying with observability, and iterating on feedback. Use the BUILD checklist for predictable progress and watch for common mistakes like skipping intent design or ignoring safety controls.

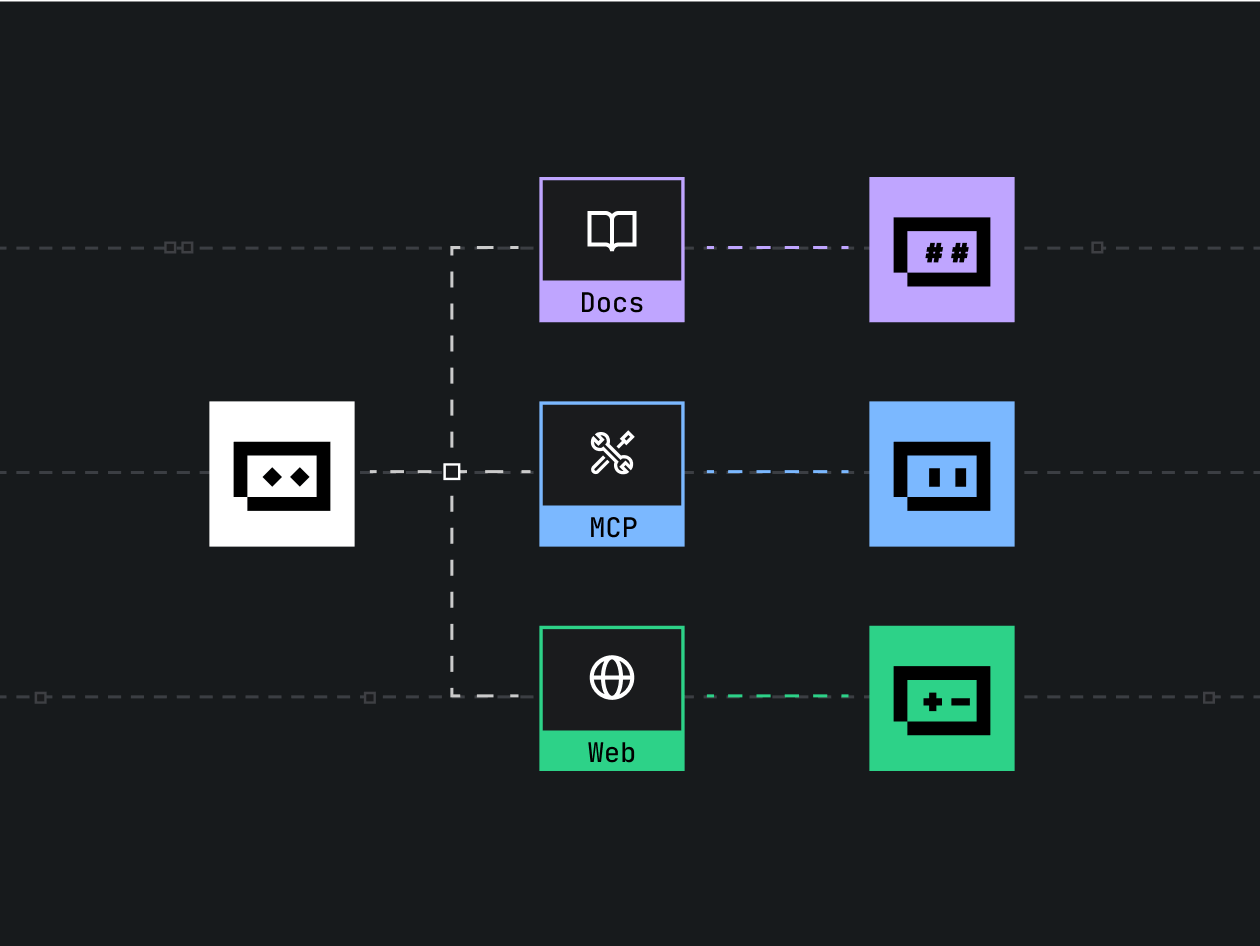

How to build an AI chatbot: core components and choices

A working chatbot needs components that map directly to user value: intent detection, entity extraction, dialogue management, response generation, and integrations (APIs, databases, authentication). This section covers the common patterns used in chatbot architecture design and the decision factors for each element.

Chatbot architecture design: patterns and trade-offs

Common architectures include:

- Rule-based: predictable, low data requirement, good for limited flows but hard to scale.

- Retrieval-based: returns the best canned response from a knowledge base using semantic search (embeddings).

- Generative LLM-based: flexible free-text responses, supports open-ended dialogue but needs guardrails against hallucination.

- Hybrid (RAG): combine retrieval with LLM generation to ground responses in documents and reduce incorrect outputs.

Key trade-offs: control vs flexibility, latency vs accuracy, and cost vs coverage. Use retrieval or hybrid approaches when accuracy and provenance matter; prefer rule-based for transactional flows with strict compliance requirements.
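
To make the retrieval-based pattern concrete, here is a minimal sketch in Python. It assumes a tiny in-memory knowledge base and uses word counts with cosine similarity as a stand-in for real embeddings; the `KNOWLEDGE_BASE` entries, the `retrieve` function, and the `min_score` threshold are all illustrative, not part of any particular library.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base of canned answers (illustrative only).
KNOWLEDGE_BASE = {
    "Where is my order?": "You can track your order from the Orders page.",
    "How do I reset my password?": "Use the 'Forgot password' link on the sign-in page.",
    "What is your return policy?": "Returns are handled from the Returns page.",
}


def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, min_score: float = 0.3):
    """Return (answer, score) for the closest stored question.

    The answer is None when the best score is below min_score, which is
    the point where a real system would fall back or escalate.
    """
    q = vectorize(query)
    best = max(KNOWLEDGE_BASE, key=lambda k: cosine(q, vectorize(k)))
    score = cosine(q, vectorize(best))
    if score < min_score:
        return None, score
    return KNOWLEDGE_BASE[best], score
```

In a production system the `vectorize` step would typically be replaced by an embedding model and a vector index; the thresholded lookup stays the same in shape.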

Step-by-step process to build an AI chatbot

Follow these steps as a repeatable workflow to build AI chatbot features that meet requirements and can be maintained.

1. Define scope, users, and success metrics

Specify supported intents, expected user journeys, and objective KPIs (resolution rate, average turns, user satisfaction). Map out must-have flows first (e.g., order status, password reset).

2. Design intents and dialogue flows

Document intent taxonomy (primary, fallback) and slot/entity requirements. Create example utterances for each intent to use as training and testing data.
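
One possible way to keep the intent taxonomy reviewable is to store it as data and validate it automatically. The sketch below is only an illustration: the `Intent` dataclass, the sample intents, and the `validate` rules (one fallback, a minimum number of utterances) are assumptions chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Intent:
    name: str
    required_slots: list = field(default_factory=list)
    utterances: list = field(default_factory=list)
    is_fallback: bool = False


# Hypothetical taxonomy for illustration.
INTENTS = [
    Intent("check_order", ["order_id"], ["where is my order", "track order 1234"]),
    Intent("password_reset", ["email"], ["i forgot my password", "reset my password"]),
    Intent("fallback", is_fallback=True),
]


def validate(intents, min_utterances=2):
    """Return a list of human-readable problems found in the taxonomy."""
    problems = []
    names = [i.name for i in intents]
    if len(names) != len(set(names)):
        problems.append("duplicate intent names")
    if sum(i.is_fallback for i in intents) != 1:
        problems.append("exactly one fallback intent is required")
    for i in intents:
        if not i.is_fallback and len(i.utterances) < min_utterances:
            problems.append(
                f"{i.name}: needs at least {min_utterances} example utterances"
            )
    return problems
```

Running `validate(INTENTS)` in a pre-merge check is one way to catch an intent that was added without example utterances.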

3. Choose models and data strategy

Decide whether to fine-tune a model, use instruction prompts, or apply RAG. For many use cases, combining semantic search over documents with an LLM generator balances accuracy and flexibility. For compliance-sensitive tasks, prefer controlled templates or supervised models.
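
If RAG is the chosen strategy, the prompt has to carry the retrieved passages to the model. The following sketch shows one way to assemble such a prompt; the `build_grounded_prompt` function, the passage dictionary keys (`source`, `text`), and the instruction wording are assumptions for illustration, and the call to an actual LLM is deliberately left out.

```python
def build_grounded_prompt(question: str, passages: list, max_passages: int = 3) -> str:
    """Assemble a prompt that asks the model to answer only from numbered sources.

    Each passage is assumed to be a dict with 'source' and 'text' keys.
    """
    selected = passages[:max_passages]
    sources = "\n".join(
        f"[{n}] ({p['source']}) {p['text']}"
        for n, p in enumerate(selected, start=1)
    )
    return (
        "Answer the question using only the sources below. "
        "Cite sources by number. If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

Numbering the sources makes it possible to ask the model for citations, which supports the provenance requirement discussed in this guide.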

4. Build and train

Prepare labeled data for intent classification and entity extraction; use augmentation when data is limited. Train models and validate using holdout sets and real-user simulations.
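
The holdout validation step can be sketched with the standard library alone. The keyword-count "model" below is a deliberately simple placeholder for a real intent classifier; `split_holdout`, `train_keyword_model`, `predict`, and `accuracy` are hypothetical helper names used only in this example.

```python
import random


def split_holdout(examples, holdout_fraction=0.2, seed=0):
    """Shuffle deterministically and split into (train, holdout)."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    cut = max(1, int(len(shuffled) * holdout_fraction))
    return shuffled[cut:], shuffled[:cut]


def train_keyword_model(train):
    """Toy model: count how often each word appears with each intent."""
    vocab = {}
    for text, intent in train:
        for word in text.lower().split():
            counts = vocab.setdefault(word, {})
            counts[intent] = counts.get(intent, 0) + 1
    return vocab


def predict(vocab, text, fallback="fallback"):
    """Pick the intent with the highest summed word count, else the fallback."""
    scores = {}
    for word in text.lower().split():
        for intent, count in vocab.get(word, {}).items():
            scores[intent] = scores.get(intent, 0) + count
    return max(scores, key=scores.get) if scores else fallback


def accuracy(vocab, examples):
    """Fraction of (text, intent) pairs that the model labels correctly."""
    if not examples:
        return 0.0
    correct = sum(predict(vocab, text) == intent for text, intent in examples)
    return correct / len(examples)
```

The important part is the workflow rather than the model: train on one slice, report accuracy on the untouched holdout slice, and keep the seed fixed so results are comparable between runs.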

5. Deploy, monitor, and iterate

Deploy with logging, latency tracking, and quality evaluation (user ratings, NPS). Use automated tests and shadow modes before full traffic rollout.
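
As an illustration of request logging and latency tracking, the wrapper below records one structured log line per request. It is a sketch under assumptions: `handler` stands for whatever function produces the bot reply, and the record fields are examples rather than a required schema. It logs the message length instead of the message text to avoid writing user content to logs by default.

```python
import json
import logging
import time
import uuid

logger = logging.getLogger("chatbot")


def handle_with_telemetry(handler, user_message: str) -> dict:
    """Call handler(user_message) and log one structured record per request."""
    request_id = str(uuid.uuid4())
    start = time.perf_counter()
    status, reply = "ok", None
    try:
        reply = handler(user_message)
    except Exception:
        # Keep serving: record the failure and return an empty reply.
        status = "error"
        logger.exception("handler failed request_id=%s", request_id)
    latency_ms = (time.perf_counter() - start) * 1000
    record = {
        "request_id": request_id,
        "status": status,
        "latency_ms": round(latency_ms, 2),
        "message_chars": len(user_message),
    }
    logger.info(json.dumps(record))
    return {"reply": reply, **record}
```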

BUILD checklist: a named framework for delivery

Use the BUILD checklist to keep development predictable:

- Boundaries: define scope, data, and compliance limits.

- Users: create personas and sample conversations.

- Integration: plan APIs, auth, and backend calls.

- Logging: enable request/response logging, telemetry, and error tracking.

- Deploy: stage rollout, monitor performance, and establish rollback criteria.

Real-world example: e-commerce order-status chatbot

Scenario: An online retailer needs a chatbot to answer shipping status, process returns, and escalate payment issues. Start by defining intents (check_order, initiate_return, payment_help), gather historical chat logs for training, and set SLAs for response latency. Use a hybrid architecture: retrieve the latest shipment details via API and generate user-friendly messages with an LLM. Add a fallback flow that hands off to human agents with context when confidence is low.
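
The low-confidence fallback in this scenario can be sketched as a small routing function. The `Prediction` type, the `route` function, the 0.6 threshold, and the five-message transcript window are assumptions for illustration; a real threshold should be tuned against labeled conversations.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    intent: str
    confidence: float


def route(prediction: Prediction, history: list, threshold: float = 0.6) -> dict:
    """Answer automatically, or hand off to a human with recent context."""
    if prediction.confidence < threshold:
        return {
            "action": "handoff",
            "reason": "low_confidence",
            "suspected_intent": prediction.intent,
            # Pass the most recent turns so the agent does not start cold.
            "transcript": history[-5:],
        }
    return {"action": "answer", "intent": prediction.intent}
```

For example, `route(Prediction("payment_help", 0.41), history)` would return a handoff action that carries the suspected intent and the last few messages.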

Practical tips for implementation

- Label a diverse set of example utterances early; this improves intent detection and reduces bias.

- Use embeddings for semantic search when answers must reference documents or product data.

- Start with a small, high-value scope and expand after measuring key metrics (throughput, resolution rate).

- Include automated tests that simulate common user paths and edge cases before each release.

- Implement rate limits, input sanitization, and monitoring to control cost and prevent abuse.
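
The rate-limit and input-sanitization tip can be illustrated with a token bucket and a small cleaning function. This is a minimal single-process sketch; the `TokenBucket` class, its parameters, and the `sanitize` rules are assumptions for the example, and a multi-instance deployment would need shared state.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so the limiter can be tested
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def sanitize(text: str, max_chars: int = 2000) -> str:
    """Drop non-printable control characters, trim, and cap the length."""
    cleaned = "".join(ch for ch in text if ch.isprintable() or ch in "\n\t")
    return cleaned.strip()[:max_chars]
```

Keeping one bucket per user or API key is a common arrangement; capping input length also bounds the cost of each model call.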

Common mistakes and trade-offs

Common mistakes

- Skipping intent design: leads to poor classification and frequent fallback responses.

- Ignoring provenance: not providing source citations causes trust issues for factual queries.

- Not monitoring quality: live drift and degraded performance go unnoticed without telemetry.

- Over-reliance on fine-tuning for tasks better solved by RAG or rule engines.

Trade-offs to consider

Latency vs. accuracy: more retrieval and grounding steps can increase response time. Cost vs. coverage: larger models are more capable but more expensive to run at scale. Control vs. flexibility: templated responses are safe and auditable but less natural.

Safety, governance, and standards

Apply risk assessment and data governance practices. For structured guidance on managing AI risk, consult the NIST AI Risk Management Framework (AI RMF).

Evaluation and metrics

Measure both technical and user-facing metrics: intent accuracy, entity F1, response latency, resolution rate, escalation rate, and user satisfaction. Run A/B tests when changing response generation or dialogue strategy.
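
Two of these metrics are simple enough to sketch directly. The example assumes entities are compared as exact `(type, value)` pairs and that each session record has boolean `resolved` and `escalated` fields; both are illustrative conventions, not a standard format.

```python
def entity_f1(predicted: set, gold: set) -> float:
    """F1 over exact-match entities, each given as a (type, value) pair."""
    true_positives = len(predicted & gold)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(gold)
    return 2 * precision * recall / (precision + recall)


def session_rates(sessions: list) -> dict:
    """Resolution and escalation rates over a list of session records."""
    total = len(sessions)
    if total == 0:
        return {"resolution_rate": 0.0, "escalation_rate": 0.0}
    resolved = sum(1 for s in sessions if s["resolved"])
    escalated = sum(1 for s in sessions if s["escalated"])
    return {
        "resolution_rate": resolved / total,
        "escalation_rate": escalated / total,
    }
```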

Deployment and operations

Follow chatbot deployment best practices: deploy in stages (canary, blue/green), instrument for observability, and provision autoscaling for inference. Ensure backup strategies and privacy controls for stored conversation data.
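
A canary rollout needs a stable way to decide which users see the new version. One common approach, sketched here under the assumption that a string user ID is available, is to hash the ID into a percentage bucket; `assign_variant` and the variant names are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, canary_percent: int) -> str:
    """Deterministically map a user to 'canary' or 'stable'.

    The same user always lands in the same bucket, so raising
    canary_percent only adds users to the canary group.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "canary" if bucket < canary_percent else "stable"
```

Starting with a small `canary_percent` and increasing it while watching error and escalation rates gives a natural rollback point if quality drops.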

Practical maintenance routine

- Weekly review of low-confidence or fallback conversations.

- Monthly retraining or prompt refresh with new labeled examples.

- Quarterly audit for compliance and data retention policies.

Next steps and iteration

After launch, prioritize high-impact improvements: expand intent coverage, improve entity extraction for business-critical slots, and refine grounding sources to reduce hallucinations. Keep users in the loop with feedback prompts after resolved sessions.

FAQ: common questions

How do you build an AI chatbot that handles sensitive customer data?

Limit data retention, mask or tokenize PII before storage, apply role-based access controls, and enforce encryption in transit and at rest. Use privacy-preserving designs like on-device or isolated inference where required.
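
Masking before storage can be sketched with regular expressions. The two patterns below (email addresses and 13 to 16 digit card-like numbers) are simplified assumptions for illustration and will not catch every form of PII; a production system would usually pair rules like these with a dedicated detection service.

```python
import re

# Simplified patterns for illustration; real PII detection needs more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def mask_pii(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = CARD.sub("[CARD]", text)
    return text
```

Applying `mask_pii` at the point where transcripts are written to storage keeps raw values out of logs and training data while leaving short numbers such as order IDs intact.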

What costs are involved in training conversational AI?

Costs include annotation, compute for training or inference, hosting, and data storage. Hybrid approaches reduce cost by using smaller models for classification and retrieval, and using LLMs only for generation where needed.

How do you decide whether to fine-tune or use prompt engineering?

Fine-tuning is appropriate when consistent, domain-specific behavior is needed and labeled data is available. Prompt engineering or RAG is efficient for rapidly changing knowledge or when provenance is required without large-scale retraining.

How do you build an AI chatbot that scales to high traffic?

Design for horizontal scaling of inference endpoints, use batching where possible, apply caching for repeated queries, and set up autoscaling and circuit breakers to protect downstream services.
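
The circuit-breaker idea can be sketched in a few lines. This is a simplified single-process version under stated assumptions: `CircuitBreaker`, its thresholds, and the `fallback` value are illustrative, and the wrapped `func` stands for any downstream call such as an order-status API.

```python
import time


class CircuitBreaker:
    """Stop calling a failing dependency for a cool-down period."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock  # injectable so the breaker can be tested
        self.failures = 0
        self.opened_at = None

    def call(self, func, *args, fallback=None):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback  # open: skip the downstream call entirely
            self.opened_at = None  # cool-down over: allow one trial call
        try:
            result = func(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback
        self.failures = 0
        return result
```

While the breaker is open, the bot can return a fallback message immediately instead of queuing requests against a service that is already struggling.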

How do you build an AI chatbot with measurable success metrics?

Define KPIs before development (resolution rate, average handle time, NPS) and instrument the system to capture those metrics. Run controlled experiments to measure impact on support load and customer satisfaction.