Resilient Cloud Recovery: How to Build Automated Disaster Recovery Capabilities

Designing and operating reliable systems requires a clear disaster recovery plan that uses cloud automation to reduce downtime, protect data, and support business continuity. This article explains the core objectives, architectural approaches, and operational practices for building resilient systems whose automated cloud processes meet recovery time and recovery point objectives.

- Define RTO and RPO, and map them to infrastructure and application priorities.

- Use infrastructure as code, orchestration, and automated failover to speed recovery.

- Combine backups, replication, and multi-region deployment for layered resilience.

- Establish testing, monitoring, and governance aligned with recognized guidance such as NIST SP 800-34.

Why automate disaster recovery?

Automation reduces manual steps and human error during crisis response, accelerates recovery, and enables repeatable testing. Typical goals include meeting business continuity requirements, limiting financial and reputational impact, and ensuring regulatory compliance. Automation complements traditional backup and replication by orchestrating failover, reconfiguration, and validation tasks across compute, storage, networking, and application layers.

Principles of disaster recovery with cloud automation

Adopting cloud automation for disaster recovery is most effective when guided by a few core principles:

Define objectives and scope

Establish recovery time objectives (RTO) and recovery point objectives (RPO) for each application or service. Classify workloads by criticality so automated processes target the most important systems first.
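
As a minimal sketch of how such a classification might be recorded, the snippet below stores an RTO and RPO per workload and sorts the inventory so the most critical systems are recovered first. The `Workload` class, the tier scale (1 is most critical), and the workload names and targets are hypothetical examples, not values from any particular platform.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class Workload:
    name: str
    tier: int        # 1 = most critical (hypothetical scale)
    rto: timedelta   # maximum tolerable downtime
    rpo: timedelta   # maximum tolerable window of data loss


def recovery_order(workloads):
    """Return workloads most critical first; ties go to the tighter RTO."""
    return sorted(workloads, key=lambda w: (w.tier, w.rto))


# Illustrative inventory; names and targets are assumptions.
inventory = [
    Workload("reporting", tier=3, rto=timedelta(hours=24), rpo=timedelta(hours=12)),
    Workload("payments-api", tier=1, rto=timedelta(minutes=15), rpo=timedelta(minutes=1)),
    Workload("customer-portal", tier=2, rto=timedelta(hours=1), rpo=timedelta(minutes=15)),
]

ordered_names = [w.name for w in recovery_order(inventory)]
print(ordered_names)
```

Keeping the targets next to each workload in one structure lets later automation (ordering, reporting, test selection) read from a single source.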

Automate infrastructure and configuration

Represent infrastructure as code (IaC) and store configurations in version control. Automate provisioning, configuration, and policy enforcement so environments can be recreated reliably in a secondary zone or region.
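
The idea can be illustrated without any specific IaC tool. In the sketch below, an environment is described as plain data, and the recovery environment is derived from the same definition rather than maintained by hand. `EnvironmentSpec`, `recovery_spec`, the field names, and the region labels are all hypothetical; a real setup would express this in whichever IaC tool the team uses.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class EnvironmentSpec:
    name: str
    region: str
    instance_count: int
    instance_size: str
    db_engine_version: str


def recovery_spec(primary, recovery_region, scale=1.0):
    """Derive a recovery environment from the primary definition.

    Everything except name, region, and instance count is copied unchanged,
    so the recovery side cannot silently diverge from production.
    """
    count = max(1, round(primary.instance_count * scale))
    return replace(
        primary,
        name=f"{primary.name}-dr",
        region=recovery_region,
        instance_count=count,
    )


# Hypothetical production definition, as it might be stored in version control.
production = EnvironmentSpec(
    name="shop",
    region="region-a",
    instance_count=6,
    instance_size="large",
    db_engine_version="15.4",
)

# A scaled-down copy in a second region.
standby = recovery_spec(production, "region-b", scale=0.5)
print(standby)
```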

Orchestrate recovery workflows

Use orchestration to sequence recovery steps such as DNS updates, database failover, cache warming, and health checks. Automated runbooks reduce decision overhead during an incident.
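
A simple way to picture an automated runbook is an ordered list of named steps that stops at the first failure. The sketch below assumes each step is a function returning `True` on success; the step functions are hypothetical placeholders, and the ordering shown is one plausible choice rather than a prescribed sequence.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], bool]]


def run_runbook(steps: List[Step]) -> Tuple[bool, List[str]]:
    """Run steps in order and stop at the first failure.

    Returns (succeeded, log), where log has one line per attempted step.
    """
    log: List[str] = []
    for name, action in steps:
        try:
            ok = action()
        except Exception as exc:  # any exception counts as a failed step
            log.append(f"{name}: error ({exc})")
            return False, log
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            return False, log
    return True, log


# Hypothetical placeholder actions; real ones would call provider or tool APIs.
def promote_replica_database() -> bool:
    return True


def update_dns_to_recovery_site() -> bool:
    return True


def warm_caches() -> bool:
    return True


def run_health_checks() -> bool:
    return True


RUNBOOK: List[Step] = [
    ("database failover", promote_replica_database),
    ("DNS update", update_dns_to_recovery_site),
    ("cache warming", warm_caches),
    ("health checks", run_health_checks),
]

succeeded, runbook_log = run_runbook(RUNBOOK)
print(succeeded, runbook_log)
```

Stopping at the first failed step keeps later steps from running against a half-recovered system, and the log gives responders a clear point at which to take over.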

Design for layered resilience

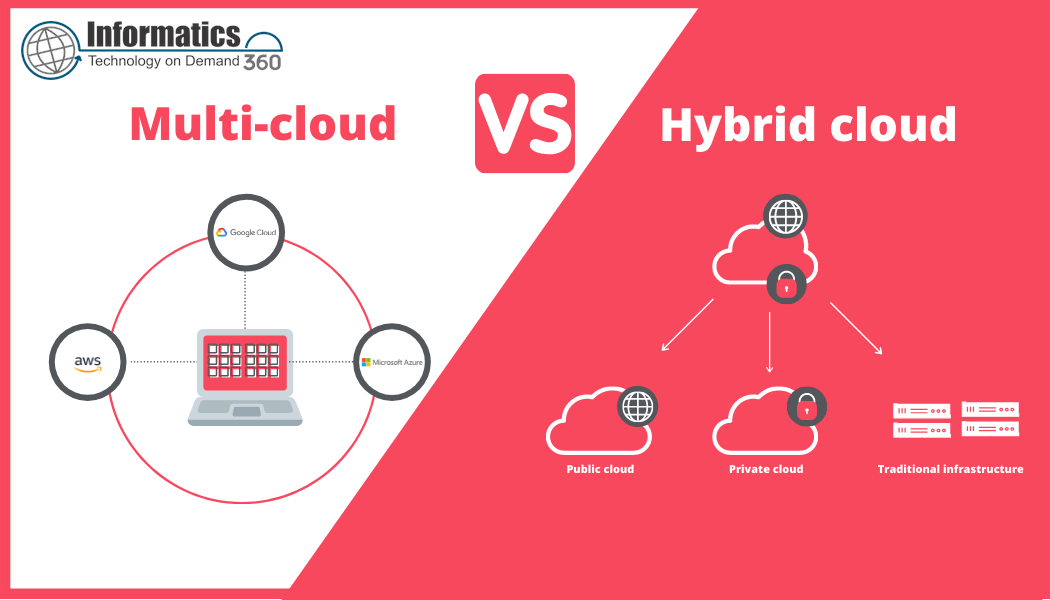

Combine frequent backups, continuous replication, and active-active or active-passive architectures. Layering provides options that balance cost against recovery speed and data loss tolerance.

Architectural approaches and patterns

Cold standby

Resources are provisioned only after a failover decision. This approach lowers cost but increases recovery time. Automation accelerates provisioning and data restore steps.

Warm standby

Maintain a scaled-down replica environment that can be scaled up automatically on demand. Automation handles scaling, synchronization, and traffic routing.

Hot standby / active-active

Active replicas run concurrently across zones or regions with near real-time replication. Failover orchestration is minimal and focuses on traffic routing and keeping state consistent.
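
The trade-off among the three patterns can be made explicit as a rule that maps a workload's RTO to a candidate pattern. The thresholds below are illustrative assumptions only; each organization would set its own based on cost and measured recovery times.

```python
from datetime import timedelta

# Illustrative thresholds (assumptions, not recommendations).
HOT_MAX_RTO = timedelta(minutes=5)
WARM_MAX_RTO = timedelta(hours=4)


def suggest_pattern(rto: timedelta) -> str:
    """Suggest a standby pattern from the RTO alone.

    Tighter RTOs push toward always-running replicas; looser RTOs
    allow cheaper patterns that provision resources on demand.
    """
    if rto <= HOT_MAX_RTO:
        return "hot standby / active-active"
    if rto <= WARM_MAX_RTO:
        return "warm standby"
    return "cold standby"


for rto in (timedelta(minutes=2), timedelta(hours=1), timedelta(hours=24)):
    print(rto, "->", suggest_pattern(rto))
```

A rule like this is only a starting point: RPO, data volume, and budget also influence the final choice.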

Operational practices for reliable automated recovery

Testing and exercises

Regular, automated testing validates recovery procedures and uncovers gaps. Tests should include full failover drills, partial component failures, and recovery validation tests. Maintain test logs and track metrics such as mean time to recover in test runs.

Monitoring and verification

Automated checks should verify data integrity, service health, and performance after recovery. Integrate these checks into incident workflows so remediation steps can be triggered automatically if validation fails.
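
One possible shape for such a check is sketched below: restored data is compared against checksums recorded before the incident, and a set of health probes is run. The function names, object keys, and probes are hypothetical; the only library used is Python's standard `hashlib`.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def validate_recovery(expected_checksums, restored_objects, health_checks):
    """Return a list of failure descriptions; an empty list means all checks passed.

    expected_checksums: {object key: SHA-256 hex digest recorded before the incident}
    restored_objects:   {object key: restored bytes}
    health_checks:      {check name: zero-argument callable returning bool}
    """
    failures = []
    for key, expected in expected_checksums.items():
        data = restored_objects.get(key)
        if data is None:
            failures.append(f"missing object: {key}")
        elif sha256_of(data) != expected:
            failures.append(f"checksum mismatch: {key}")
    for name, check in health_checks.items():
        if not check():
            failures.append(f"health check failed: {name}")
    return failures


# Illustrative data; keys and probes are assumptions.
expected = {"orders.db": sha256_of(b"order records")}
restored = {"orders.db": b"order records"}
checks = {"api responds": lambda: True}

failures = validate_recovery(expected, restored, checks)
print("validation passed" if not failures else failures)
```

Returning the list of failures, rather than a single pass/fail flag, gives an incident workflow enough detail to decide which remediation step to trigger.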

Change control and configuration drift

Integrate disaster recovery automation into the change management lifecycle. Use drift detection and policy enforcement to ensure the recovery environment reflects production configurations.
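
Drift detection can be as simple as comparing two configuration snapshots key by key. The sketch below assumes configurations are available as flat dictionaries and that some keys (such as instance count in a scaled-down standby) are expected to differ; the keys and values are hypothetical.

```python
MISSING = "<missing>"


def detect_drift(production, recovery, ignore=()):
    """Return {key: (production value, recovery value)} for every differing key.

    Keys present on only one side are reported with the MISSING marker.
    Keys listed in `ignore` are expected to differ and are skipped.
    """
    drift = {}
    for key in sorted(set(production) | set(recovery)):
        if key in ignore:
            continue
        prod_value = production.get(key, MISSING)
        rec_value = recovery.get(key, MISSING)
        if prod_value != rec_value:
            drift[key] = (prod_value, rec_value)
    return drift


# Illustrative snapshots; keys and values are assumptions.
production_config = {
    "db_engine_version": "15.4",
    "instance_size": "large",
    "instance_count": 6,
    "tls_min_version": "1.2",
}
recovery_config = {
    "db_engine_version": "15.2",
    "instance_size": "large",
    "instance_count": 2,
}

drift = detect_drift(production_config, recovery_config, ignore={"instance_count"})
print(drift)
```

Running a comparison like this on every production change, and failing the change pipeline when drift appears, keeps the recovery environment from falling behind unnoticed.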

Governance, compliance, and guidance

Formalize roles, escalation paths, and documentation for recovery operations. Align policies with industry standards and government guidance. For example, NIST SP 800-34 Rev. 1, the Contingency Planning Guide for Federal Information Systems, provides a structure for developing and testing recovery plans, along with recommended practices and planning templates.

Common pitfalls and how automation mitigates them

Overreliance on manual runbooks

Manual steps slow recovery and introduce errors. Automating repeatable tasks and validating runbooks with tests reduces this risk.

Insufficient testing

Procedures that are tested only occasionally, or not at all, often fail under pressure. Continuous automated testing keeps recovery procedures reliable.

Overlooked interdependencies

Failure to map dependencies between services can cause partial recoveries to fail. Automated dependency discovery and orchestration ensure ordering and prerequisites are handled correctly.
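
Once dependencies are mapped, a topological sort yields an order in which every service is recovered only after the services it depends on. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later); the service names and the dependency map are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Each service maps to the set of services it depends on (illustrative).
DEPENDS_ON = {
    "database": set(),
    "cache": {"database"},
    "api": {"database", "cache"},
    "web-frontend": {"api"},
}


def recovery_sequence(depends_on):
    """Return services in an order where dependencies always come first."""
    try:
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as exc:
        # args[1] holds the list of nodes that form the cycle.
        raise ValueError(f"circular dependency: {exc.args[1]}") from exc


sequence = recovery_sequence(DEPENDS_ON)
print(sequence)
```

Rejecting circular dependencies up front is deliberate: a cycle means no valid recovery order exists, which is better discovered during planning than during an incident.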

Measuring success

Key indicators include time to detect an incident, time to failover, post-recovery validation success rate, and alignment with RTO/RPO targets. Track test results, incident timelines, and improvement actions in a continuous improvement loop.
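
These indicators are straightforward to compute from recorded test runs or incidents. The sketch below assumes each run records the observed recovery time and data loss alongside the workload's targets; the `RecoveryRun` structure and the sample figures are hypothetical.

```python
from dataclasses import dataclass
from datetime import timedelta
from statistics import mean


@dataclass(frozen=True)
class RecoveryRun:
    workload: str
    recovery_time: timedelta  # observed time to restore service
    data_loss: timedelta      # observed window of lost data
    rto: timedelta
    rpo: timedelta
    validated: bool           # post-recovery validation passed


def summarize(runs):
    """Compute mean time to recover, share of runs meeting RTO/RPO,
    and post-recovery validation pass rate."""
    if not runs:
        raise ValueError("no runs to summarize")
    total = len(runs)
    met = sum(1 for r in runs if r.recovery_time <= r.rto and r.data_loss <= r.rpo)
    passed = sum(1 for r in runs if r.validated)
    return {
        "mttr_minutes": mean(r.recovery_time.total_seconds() for r in runs) / 60,
        "pct_meeting_targets": 100 * met / total,
        "validation_pass_rate": 100 * passed / total,
    }


m = timedelta(minutes=1)
# Illustrative records; all figures are made up for the example.
runs = [
    RecoveryRun("payments-api", 10 * m, 0.5 * m, rto=15 * m, rpo=1 * m, validated=True),
    RecoveryRun("payments-api", 20 * m, 0.5 * m, rto=15 * m, rpo=1 * m, validated=True),
    RecoveryRun("customer-portal", 30 * m, 5 * m, rto=20 * m, rpo=15 * m, validated=True),
    RecoveryRun("reporting", 20 * m, 5 * m, rto=60 * m, rpo=15 * m, validated=False),
]

summary = summarize(runs)
print(summary)
```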

Getting started checklist

- Inventory applications and dependencies; set RTO/RPO per workload.

- Implement infrastructure as code and store artifacts in version control.

- Automate backups, replication, and deployment pipelines for recovery environments.

- Create orchestrated recovery runbooks and automate validation checks.

- Schedule regular automated tests and incorporate findings into operations.

Frequently asked questions

What is disaster recovery with cloud automation?

Disaster recovery with cloud automation is the use of automated provisioning, orchestration, and validation processes in cloud environments to restore services and data after an outage or incident. It focuses on reducing recovery time and human error while meeting RTO and RPO targets.

How often should disaster recovery tests be run?

Testing frequency depends on risk tolerance and regulatory requirements. Many organizations run quarterly or semi-annual full drills, with more frequent automated component tests such as daily backup verification and weekly failover rehearsals for critical systems.

Can automation fully replace human decision-making in recovery?

Automation can handle repeatable, deterministic tasks and provide rapid recovery pathways, but human oversight remains important for complex decisions, coordination, and communication with stakeholders. Define clear escalation policies and human-in-the-loop checkpoints where needed.

What metrics indicate a successful recovery program?

Useful metrics include test pass rate, mean time to recover (MTTR) in tests and incidents, percentage of systems meeting RTO/RPO, and time to validate post-recovery integrity. Trend these metrics to measure improvement.

How does infrastructure as code support recovery efforts?

Infrastructure as code captures environments as versioned artifacts that can be recreated reliably. IaC reduces provisioning time, ensures configuration consistency across regions, and enables automated deployments during recovery.

How should an organization start implementing automated disaster recovery?

Begin by classifying workloads, defining RTO/RPO, and implementing basic automation for backups and provisioning. Introduce orchestration for end-to-end recovery workflows and schedule regular automated tests. Integrate findings into continuous improvement and governance processes.

Where can organizations find formal guidance on contingency planning?

Organizations can consult recognized standards and guidance such as those from NIST and ISO for contingency planning and business continuity frameworks; these resources provide structured approaches to planning, testing, and documentation.