AI Infrastructure Essentials: Compute, GPUs, and Cloud Systems Explained


AI infrastructure determines how quickly models train, how reliably they serve predictions, and how much projects cost. This guide explains compute choices, GPU options, and cloud systems so technical and non-technical stakeholders can make practical decisions. The primary goal is to make trade-offs explicit and actionable for teams building or buying infrastructure.

- AI infrastructure covers compute (CPUs, GPUs, TPUs), storage, networking, and orchestration.

- Key trade-offs are cost vs. latency, single-node GPU power vs. multi-node networking, and cloud elasticity vs. on-premise control.

- Use the DEPLOY checklist and the SCALE framework to evaluate needs; follow the practical tips to avoid common mistakes.

What is AI infrastructure and what components matter?

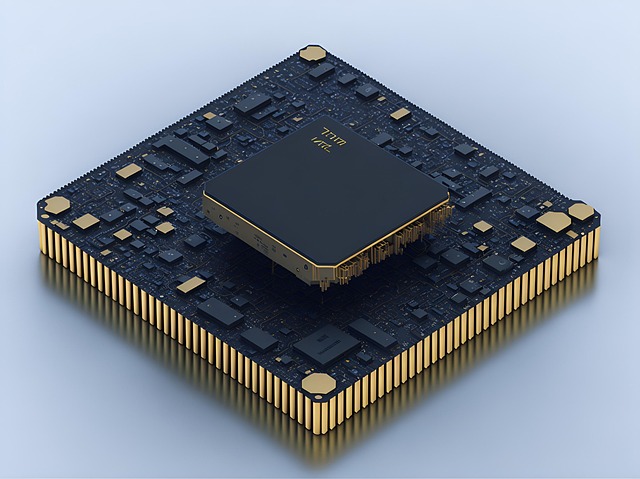

The term AI infrastructure refers to the combined hardware and platform services used to train, validate, and deploy machine learning models. Core components include compute (CPU and accelerator selection), GPUs and their memory and interconnects, storage (object and block), networking (latency and bandwidth), and orchestration tools like Kubernetes. Decisions at each layer affect performance, cost, and developer velocity.

AI infrastructure tiers: from laptop to hyperscale

Local and development tier

Local workstations and single-GPU servers are ideal for prototyping. They minimize iteration latency and often use consumer or small datacenter GPUs for experiments.

Scale-out training tier

Distributed training uses multi-GPU, multi-node clusters with high-bandwidth interconnects (NVLink within a node, InfiniBand between nodes) and GPUs with high-bandwidth memory (HBM). Frameworks rely on collective communication libraries (NCCL, MPI) and checkpoint-friendly storage.

Inference and edge tier

Inference can be served on CPUs, GPUs, or specialized accelerators, depending on latency and throughput needs. Edge deployments often favor model quantization and smaller accelerators to reduce cost and power consumption.
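As a rough illustration of how latency and throughput needs translate into hardware, the sketch below sizes an inference fleet with Little's law (requests in flight = arrival rate × latency). The function name, the 70% target utilization, and the example numbers are assumptions for illustration, not figures from this guide.

```python
import math


def replicas_needed(qps: float, latency_s: float,
                    concurrency_per_replica: int,
                    target_utilization: float = 0.7) -> int:
    """Estimate how many serving replicas a workload needs.

    Little's law: average requests in flight = arrival rate * latency.
    target_utilization leaves headroom for bursts (assumed default: 70%).
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if concurrency_per_replica < 1:
        raise ValueError("concurrency_per_replica must be >= 1")
    in_flight = qps * latency_s
    usable_slots = concurrency_per_replica * target_utilization
    return max(1, math.ceil(in_flight / usable_slots))


if __name__ == "__main__":
    # Hypothetical service: 200 requests/s, 50 ms per request,
    # each replica handles 4 requests concurrently.
    print(replicas_needed(qps=200, latency_s=0.05, concurrency_per_replica=4))
```

Running the same estimate with measured latency for a CPU replica and for a GPU replica gives a first-order comparison of fleet sizes before any load test.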

Compute, GPUs, and networking: what to choose

Picking the right compute mix starts with workload characterization: training large transformers needs ample GPU memory and fast interconnects, while high-concurrency inference may be better served by many inexpensive CPUs or smaller GPUs. GPU details matter: VRAM size, memory bandwidth (for example, from HBM), tensor cores, and interconnect (PCIe, NVLink) all influence achievable batch sizes and throughput.
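To make the link between model size and VRAM concrete, here is a minimal sketch of a memory estimate. It assumes mixed-precision training with an Adam-style optimizer at roughly 16 bytes per parameter (half-precision weights and gradients plus full-precision master weights and two optimizer moments), a commonly cited rule of thumb. The 25% activation overhead is a placeholder assumption; real activation memory depends heavily on batch size and sequence length and should be measured.

```python
def training_memory_gb(num_params: float,
                       bytes_per_param: float = 16.0,
                       activation_overhead: float = 0.25) -> float:
    """Rough training memory in GB (10^9 bytes).

    bytes_per_param: assumed 16 for mixed precision with Adam.
    activation_overhead: assumed extra fraction for activations and buffers.
    """
    state_bytes = num_params * bytes_per_param
    return state_bytes * (1.0 + activation_overhead) / 1e9


def fits_on_gpu(num_params: float, vram_gb: float, **kwargs) -> bool:
    """True if the rough estimate fits in a single GPU's memory."""
    return training_memory_gb(num_params, **kwargs) <= vram_gb


if __name__ == "__main__":
    for params in (1e9, 2e9, 7e9):
        need = training_memory_gb(params)
        print(f"{params / 1e9:.0f}B params: ~{need:.0f} GB, "
              f"fits in 80 GB: {fits_on_gpu(params, 80)}")
```

When the estimate exceeds a single device, the model has to be sharded across GPUs, and the interconnect becomes part of the sizing question.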

Common accelerator options

- GPUs (NVIDIA, AMD): general-purpose, strong ecosystem for deep learning tools.

- TPUs (Google): high efficiency for some TensorFlow and JAX workloads.

- Inference accelerators (Edge TPUs, ASICs): optimized for low-power, low-latency models.

Networking and storage

Large-model training is often network-bound; choose a low-latency, high-bandwidth fabric and keep storage colocated with compute. Object storage is common for datasets and checkpoints; high-performance block storage or local NVMe is often needed for read-heavy training loops.
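One way to check whether a training job is likely to be network-bound is to estimate gradient synchronization time. The sketch below uses the standard cost model for a ring all-reduce, in which each of N workers sends and receives 2(N-1) chunks of size payload/N. The bandwidth and payload figures are hypothetical, and real collectives (for example in NCCL) may use other algorithms and overlap communication with compute.

```python
def ring_allreduce_seconds(payload_bytes: float, num_workers: int,
                           bandwidth_bytes_per_s: float,
                           latency_s: float = 0.0) -> float:
    """Idealized ring all-reduce time.

    2 * (N - 1) steps, each moving payload / N bytes per worker,
    plus an optional fixed per-step latency.
    """
    if num_workers < 2:
        return 0.0  # nothing to synchronize with a single worker
    steps = 2 * (num_workers - 1)
    chunk_bytes = payload_bytes / num_workers
    return steps * (chunk_bytes / bandwidth_bytes_per_s + latency_s)


if __name__ == "__main__":
    # Hypothetical: 2B parameters with 2-byte gradients, 8 workers,
    # 25 GB/s of usable bandwidth per link (about 200 Gb/s).
    grad_bytes = 2e9 * 2
    t = ring_allreduce_seconds(grad_bytes, 8, 25e9)
    print(f"Estimated all-reduce time per step: {t:.2f} s")
```

If this estimate is comparable to or larger than the measured compute time per step, a faster fabric or less frequent synchronization will likely matter more than a faster GPU.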

The SCALE framework: a decision checklist

Use the SCALE framework to evaluate infrastructure needs:

- S — Scale: expected dataset size, model parameters, and concurrency.

- C — Compute: GPU type, CPU cores, and memory per node.

- A — Accelerator interconnect: NVLink, PCIe, InfiniBand.

- L — Latency & locality: on-prem vs cloud region choices, edge needs.

- E — Economics: cost of instances, storage, egress, and operational overhead.

Checklist: DEPLOY

- D — Define workload: training size, inference QPS, latency SLOs.

- E — Estimate throughput: FLOPS, memory footprint, effective batch size.

- P — Pick platform: cloud instances, bare-metal, or hybrid.

- L — Load-test: simulate production traffic and training runs at scale.

- O — Observe: set up metrics, profiling, and cost reporting.

- Y — Yield plan: autoscaling, spot/interruptible usage, and fallbacks.
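For the "Estimate throughput" step, a back-of-the-envelope calculation can turn model and dataset size into GPU-hours. The sketch below assumes the common rule of thumb that training a dense transformer costs about 6 FLOPs per parameter per token; the peak throughput, utilization, and token count are hypothetical values, and a pilot run should replace them as soon as one is available.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate total training FLOPs for a dense transformer (6 * N * D)."""
    return 6.0 * num_params * num_tokens


def gpu_hours(total_flops: float, peak_flops_per_s: float,
              utilization: float) -> float:
    """GPU-hours needed at a given fraction of peak throughput."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    sustained = peak_flops_per_s * utilization
    return total_flops / sustained / 3600.0


if __name__ == "__main__":
    # Hypothetical: 2B parameters, 40B training tokens,
    # an accelerator with 300 TFLOP/s peak running at 40% utilization.
    flops = training_flops(2e9, 40e9)
    hours = gpu_hours(flops, peak_flops_per_s=300e12, utilization=0.4)
    print(f"Total FLOPs: {flops:.2e}, GPU-hours: {hours:,.0f}")
```

Dividing the GPU-hours by the number of GPUs gives an idealized wall-clock time; communication overhead in multi-node runs will push the real figure higher.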

Real-world example

Scenario: training a 2-billion-parameter transformer on cloud GPUs. Applying the SCALE framework points to GPUs with 40–80 GB of VRAM, low-latency inter-node networking for model parallelism, and high-throughput object storage. In this scenario, cost modeling shows that covering baseline needs with reserved instances and running burst training on spot instances reduces AI training infrastructure costs by 20–40%, while writing checkpoints in parallel on a regular schedule shortens restart time after a spot preemption.
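The reserved-plus-spot trade-off in this scenario can be modeled in a few lines. The sketch below is illustrative only: the prices, discounts, and the 10% rework overhead from preemptions are hypothetical inputs, not quotes from any provider, and the function names are made up for this example.

```python
def blended_cost(gpu_hours: float, on_demand_price: float,
                 reserved_fraction: float, reserved_discount: float,
                 spot_discount: float, spot_overhead: float = 0.0) -> float:
    """Cost of splitting work between reserved and spot capacity.

    spot_overhead: extra fraction of spot GPU-hours lost to preemption
    and restarts (work redone since the last checkpoint).
    """
    reserved_hours = gpu_hours * reserved_fraction
    spot_hours = gpu_hours * (1.0 - reserved_fraction) * (1.0 + spot_overhead)
    reserved_cost = reserved_hours * on_demand_price * (1.0 - reserved_discount)
    spot_cost = spot_hours * on_demand_price * (1.0 - spot_discount)
    return reserved_cost + spot_cost


def savings_fraction(gpu_hours: float, on_demand_price: float,
                     **mix) -> float:
    """Savings relative to running everything on demand."""
    baseline = gpu_hours * on_demand_price
    return 1.0 - blended_cost(gpu_hours, on_demand_price, **mix) / baseline


if __name__ == "__main__":
    # Hypothetical inputs: 1,000 GPU-hours at $3.00 on demand, half reserved
    # at a 20% discount, half on spot at a 50% discount with 10% rework.
    s = savings_fraction(1000, 3.0, reserved_fraction=0.5,
                         reserved_discount=0.2, spot_discount=0.5,
                         spot_overhead=0.1)
    print(f"Estimated savings vs. on demand: {s:.1%}")
```

Raising `spot_overhead` shows how quickly infrequent checkpoints erode the spot discount, which is why checkpoint cadence belongs in the cost model.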

Practical tips

- Profile early: measure memory and compute utilization on a realistic batch to avoid downstream surprises.

- Start with managed services for orchestration, then move stable, high-utilization workloads to bare metal once usage patterns are clear.

- Use mixed-precision training and model-parallelism libraries to reduce memory use and speed up training without changing the model architecture.

- Reserve capacity or negotiate committed use discounts when long-term predictable workloads exist.

- Monitor cost at the job level — track GPU-hours, egress, and storage I/O as separate metrics.

Common mistakes and trade-offs

Common mistakes include underestimating network requirements for multi-node training, over-provisioning expensive GPUs for low-utilization inference, and ignoring egress/storage costs when moving large datasets. Trade-offs are inevitable: choosing the fastest GPUs increases hourly cost but reduces time-to-train; selecting cloud elasticity increases flexibility but can raise long-term expenses compared with optimized on-prem hardware for steady workloads.

Standards, benchmarks, and resources

Benchmarking with community standards helps compare options objectively. MLPerf provides widely used benchmarks for both training and inference that reveal real-world differences between accelerators and systems. For details and the latest suites, see the MLPerf benchmarks site.

How to get started

Begin with the DEPLOY checklist, run a small-scale test that mimics production load, and iterate using the SCALE framework. Capture performance and cost metrics, and adjust compute types and orchestration based on observed bottlenecks. For edge projects, prioritize model compression and specialized inference hardware.

FAQ: What is AI infrastructure?

AI infrastructure is the combined set of hardware, networking, storage, and platform services used to build, train, and serve machine learning models. It spans local workstations to cloud clusters and affects performance, cost, and developer productivity.

FAQ: How do GPUs affect AI training performance?

GPUs provide high parallelism and specialized tensor units; performance depends on VRAM, memory bandwidth, and interconnects. GPUs with more memory enable larger batches or bigger model shards, while fast interconnects reduce synchronization overhead in distributed training.

FAQ: How do you estimate AI training infrastructure costs?

Estimate cost by multiplying expected GPU-hours by the price per GPU-hour, then adding storage, egress, and operational overhead. Use small pilot runs to measure actual GPU-hours per epoch, and scale that figure up to model full training runs. Consider reserved or committed-use options to lower unit costs.
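The pilot-run approach can be sketched as follows. The `PilotRun` fields, the overhead fraction, and all dollar amounts are hypothetical; the linear scaling from pilot to full run is itself an assumption that should be rechecked at larger scale.

```python
from dataclasses import dataclass


@dataclass
class PilotRun:
    gpu_count: int           # GPUs used in the pilot
    wall_hours: float        # wall-clock duration of the pilot
    epochs_completed: float  # may be fractional


def gpu_hours_per_epoch(pilot: PilotRun) -> float:
    """Measured GPU-hours consumed per epoch in the pilot."""
    return pilot.gpu_count * pilot.wall_hours / pilot.epochs_completed


def estimate_training_cost(pilot: PilotRun, total_epochs: float,
                           price_per_gpu_hour: float,
                           storage_cost: float = 0.0,
                           egress_cost: float = 0.0,
                           overhead_fraction: float = 0.0) -> dict:
    """Scale the pilot linearly and add storage, egress, and overhead."""
    compute = gpu_hours_per_epoch(pilot) * total_epochs * price_per_gpu_hour
    subtotal = compute + storage_cost + egress_cost
    overhead = subtotal * overhead_fraction
    return {"compute": compute, "storage": storage_cost,
            "egress": egress_cost, "overhead": overhead,
            "total": subtotal + overhead}


if __name__ == "__main__":
    # Hypothetical pilot: 8 GPUs for 30 minutes covered a quarter of an epoch.
    pilot = PilotRun(gpu_count=8, wall_hours=0.5, epochs_completed=0.25)
    estimate = estimate_training_cost(pilot, total_epochs=10,
                                      price_per_gpu_hour=2.5,
                                      storage_cost=50, egress_cost=20,
                                      overhead_fraction=0.1)
    for item, dollars in estimate.items():
        print(f"{item:>8}: ${dollars:,.2f}")
```

Keeping compute, storage, and egress as separate line items makes it easier to see which one dominates as the dataset or the number of epochs grows.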

FAQ: When should teams use cloud vs. on-premises AI infrastructure?

Choose cloud for flexibility, quick scaling, and managed orchestration; choose on-premises when sustained high utilization, data governance, or network locality justify upfront capital and operational investments.

FAQ: What are the key AI infrastructure tips for edge AI deployment?

For edge deployments, prioritize model optimization (quantization, pruning), select low-power accelerators, and design for intermittent connectivity. Minimize on-device storage and plan for secure model updates.
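To show what quantization does to a model's weights, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is a teaching example under simple assumptions (one scale per tensor, round-to-nearest); production toolchains typically add calibration, per-channel scales, and hardware-specific formats.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using a single scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.0, 1.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print(f"scale: {scale:.4f}, worst-case error: {worst:.6f}")
```

Each weight then needs one byte instead of four (relative to 32-bit floats), at the cost of a rounding error of at most half the scale per weight.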