Choosing the Right Technical Hiring Assessment Tool: A Practical Framework and Checklist


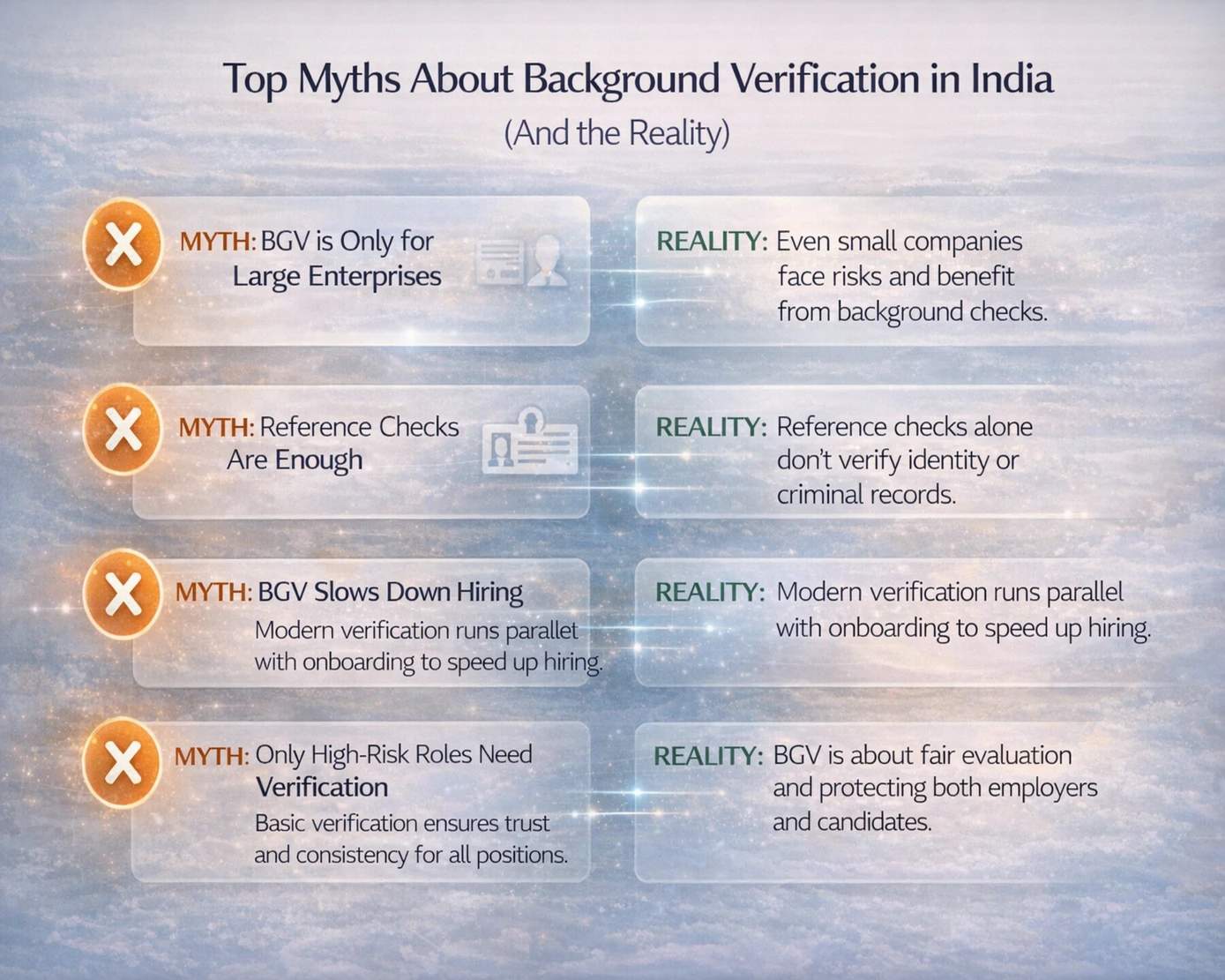

Selecting a technical hiring assessment tool requires balancing accuracy, candidate experience, and operational cost. The right tool measures job-relevant skills, reduces bias, and integrates with hiring workflows without adding unnecessary friction for candidates.

- Define the skills to measure (coding, system design, debugging, domain knowledge).

- Use the HIRE assessment framework for consistent test design and scoring.

- Balance automated grading with human review to improve validity.

- Watch for common mistakes: vague tasks, over-reliance on one format, and ignoring fairness.

What a technical hiring assessment tool should do

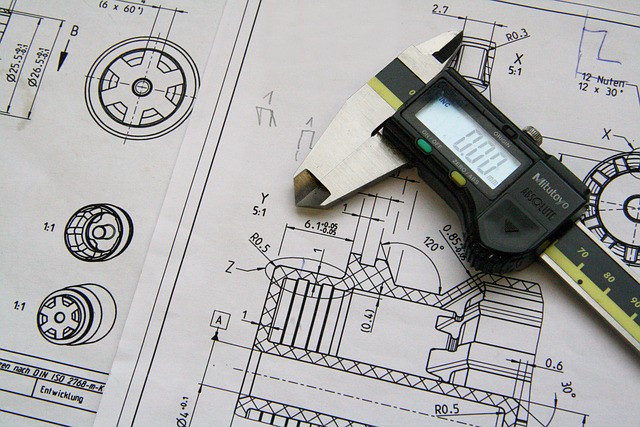

A technical hiring assessment tool must map tests to job tasks, provide objective scoring, and support multiple formats: timed code challenges, take-home projects, pair programming sessions, and multiple-choice knowledge checks. Look for features that enable reproducible scoring (auto-grading plus rubric-based manual review), integration with applicant tracking systems (ATS), and analytics that show item-level difficulty and candidate performance distributions. Terms to watch: validity (does the test measure the intended skill?), reliability (are results consistent?), and fairness (is the test non-discriminatory?).
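As a rough illustration of what item-level analytics can look like, the sketch below computes item difficulty (the share of candidates who got each item right) and a summary of total scores. The pilot data, the function names, and the 0.1/0.9 flagging thresholds are assumptions made for this example, not features of any particular vendor.

```python
from statistics import mean, pstdev

# Hypothetical pilot data: one row per candidate, one 0/1 entry per test item.
responses = [
    [1, 1, 0, 1],
    [1, 0, 0, 1],
    [1, 1, 1, 1],
    [0, 0, 0, 1],
    [1, 1, 0, 1],
]


def item_difficulty(rows):
    """Return the proportion of candidates who answered each item correctly."""
    n_items = len(rows[0])
    return [mean(row[i] for row in rows) for i in range(n_items)]


def score_summary(rows):
    """Summarize the distribution of total scores across candidates."""
    totals = [sum(row) for row in rows]
    return {
        "mean": mean(totals),
        "stdev": pstdev(totals),
        "min": min(totals),
        "max": max(totals),
    }


difficulty = item_difficulty(responses)
summary = score_summary(responses)

# Assumed thresholds: items almost everyone passes or fails tell you little.
flagged = [i for i, p in enumerate(difficulty) if p < 0.1 or p > 0.9]
print(difficulty, summary, flagged)
```

An item that every candidate passes (or fails) does not separate strong from weak candidates, so flagged items are candidates for revision rather than automatic removal.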

Technical hiring assessment tool: evaluation checklist and framework

Use a named framework to standardize evaluation and vendor comparison. The HIRE assessment framework below is designed for repeatable, defensible hiring assessments.

HIRE assessment framework (Checklist)

- H - Hold-to-job mapping: Create a task matrix linking each test to real job activities (e.g., bug-fixing, API design, performance tuning).

- I - Instrumentation: Ensure scoring methods (auto-grader, rubric, peer review) and analytics (item stats, pass rates) are available.

- R - Reliability & validity checks: Pilot questions, measure inter-rater reliability for manual scoring, and validate against on-the-job performance where possible.

- E - Experience & fairness: Evaluate candidate UX, time burden, accommodations, and bias mitigation policies (language, cultural assumptions).
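The inter-rater reliability check in step R can be sketched with Cohen's kappa, a common agreement statistic for two reviewers. The article does not prescribe a specific statistic, so the choice of kappa, the function name, and the sample scores are assumptions for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Agreement between two raters, corrected for chance agreement."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of submissions")
    n = len(rater_a)
    # Share of submissions where both raters gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if each rater assigned scores independently.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical score throughout
    return (observed - expected) / (1 - expected)


# Hypothetical 0-3 rubric scores from two reviewers on eight submissions.
reviewer_1 = [3, 2, 2, 1, 0, 3, 2, 1]
reviewer_2 = [3, 2, 1, 1, 0, 3, 2, 2]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 3))
```

Values near 1 indicate strong agreement and values near 0 indicate agreement no better than chance. A low value usually points to an ambiguous rubric or a need for reviewer calibration.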

Scoring rubric template

Design a simple numeric rubric for each task: 0 = Incorrect, 1 = Partial, 2 = Correct but inefficient, 3 = Correct and optimal. Use auto-grader output for objective correctness, and add rubric scores for qualities an auto-grader cannot judge, such as architecture or code readability.
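One possible way to combine the two signals is a weighted average of the auto-grader pass rate and the normalized rubric score. The equal weighting, the function name, and the dimension names below are assumptions for this sketch; tune them to the role.

```python
from statistics import mean

RUBRIC_LABELS = {
    0: "Incorrect",
    1: "Partial",
    2: "Correct but inefficient",
    3: "Correct and optimal",
}
MAX_RUBRIC = max(RUBRIC_LABELS)


def combined_score(tests_passed, tests_total, rubric_scores, auto_weight=0.5):
    """Blend the auto-grader pass rate with the mean rubric score on a 0-1 scale."""
    if tests_total <= 0 or not 0 <= tests_passed <= tests_total:
        raise ValueError("invalid test counts")
    if not rubric_scores or any(s not in RUBRIC_LABELS for s in rubric_scores.values()):
        raise ValueError("rubric scores must be integers from 0 to 3")
    auto = tests_passed / tests_total
    manual = mean(rubric_scores.values()) / MAX_RUBRIC
    return auto_weight * auto + (1 - auto_weight) * manual


# Hypothetical submission: 8 of 10 test cases pass, two manual dimensions scored.
score = combined_score(8, 10, {"architecture": 2, "readability": 3})
print(round(score, 3))
```

Keeping the rubric scores per dimension, rather than only the blended number, makes it easier to explain a decision to a candidate or hiring manager later.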

Comparing formats and trade-offs

Different test formats serve different goals. Understanding trade-offs will guide the choice of a tool.

Trade-offs and common mistakes

- Timed code challenges (fast, scalable) vs. take-home projects (higher validity, lower scalability). Over-reliance on short timed tests can miss collaboration and design skills.

- Automated grading (consistent, cheap) vs. human review (nuanced, costlier). Relying solely on auto-grading misses code quality and architecture decisions.

- Complex real-world tasks increase validity but raise evaluation effort and risk of plagiarism. Not providing clear requirements in take-home assignments causes inconsistent grading.

- Ignoring test fairness or accessibility can expose the organization to legal risk and reduce diversity in candidate pools; consult guidelines from relevant authorities to design non-discriminatory assessments.

Integration and operational considerations

Evaluate whether the tool supports single sign-on, ATS integration, candidate reminders, and data exports for analytics. Check for audit logs and data retention policies to meet compliance. Also validate whether the vendor provides API access if custom workflows or dashboards are needed.

Real-world example

A mid-sized SaaS company needed to screen backend engineers. The hiring team used a blended workflow: a short automated coding assessment for basic data structures and algorithms, followed by a 48-hour take-home micro-project focused on API design, and a final 60-minute live session for system design and debugging. The process followed the HIRE framework: each stage was mapped to job tasks and scored with standardized rubrics. The three-stage approach reduced false negatives while keeping time-to-hire reasonable.

Practical tips for immediate implementation

- Start small: Pilot assessments with a representative sample of current employees to validate that test scores correlate with job performance.

- Mix formats: Use a short coding assessment to screen broadly and reserve take-home or live sessions for later rounds.

- Standardize rubrics: Create clear, numeric rubrics and train reviewers to increase inter-rater reliability.

- Track metrics: Monitor completion rates, average scores by role, and time-to-hire to spot bottlenecks and bias.
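The metric tracking in the last tip can be sketched as completion and pass rates per candidate group, plus the ratio between the lowest and highest pass rate. The records, group labels, and the 0.8 threshold are assumptions for this example; the threshold follows the four-fifths rule of thumb from the U.S. Uniform Guidelines on Employee Selection Procedures and is a screening signal, not a legal determination.

```python
from collections import defaultdict

# Hypothetical records: (group label, completed the assessment, passed it).
records = [
    ("A", True, True), ("A", True, False), ("A", True, True), ("A", False, False),
    ("B", True, True), ("B", True, False), ("B", True, False), ("B", True, False),
]


def rates_by_group(rows):
    """Completion and pass rates per group, relative to candidates invited."""
    stats = defaultdict(lambda: {"invited": 0, "completed": 0, "passed": 0})
    for group, completed, passed in rows:
        s = stats[group]
        s["invited"] += 1
        s["completed"] += int(completed)
        s["passed"] += int(completed and passed)
    return {
        g: {
            "completion_rate": s["completed"] / s["invited"],
            "pass_rate": s["passed"] / s["invited"],
        }
        for g, s in stats.items()
    }


def impact_ratio(rates):
    """Lowest group pass rate divided by the highest; None if nobody passed."""
    pass_rates = [r["pass_rate"] for r in rates.values()]
    highest = max(pass_rates)
    return min(pass_rates) / highest if highest > 0 else None


rates = rates_by_group(records)
ratio = impact_ratio(rates)
needs_review = ratio is not None and ratio < 0.8  # assumed threshold
print(rates, ratio, needs_review)
```

A low completion rate for one group can point to a candidate-experience or accessibility problem, while a low impact ratio suggests the test content itself deserves a closer look.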

Legal and fairness checklist

Consult guidance on employment testing and fairness. Tests should be job-related and validated. Keep documentation on how each assessment maps to job tasks, and keep records of candidate accommodations. For legal best practices, refer to the U.S. Equal Employment Opportunity Commission (EEOC) guidance on employment testing.

Vendor selection and cost considerations

Price models vary: per-assessment, per-seat, or enterprise subscription. Choose based on hiring volume. Consider hidden costs such as reviewer time for manual grading, integration engineering, and custom test creation. Ask vendors for sample item-level analytics and references from companies in similar hiring contexts.
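A quick way to compare pricing models is to estimate annual cost at the expected hiring volume, including reviewer time as a hidden cost. All prices, volumes, and rates below are made-up placeholders for illustration; substitute real vendor quotes.

```python
def annual_costs(assessments, seats, prices, review_hours_each, reviewer_hourly_cost):
    """Estimate yearly cost under each pricing model, including manual review time."""
    # Reviewer time is paid regardless of which pricing model the vendor uses.
    review_cost = assessments * review_hours_each * reviewer_hourly_cost
    return {
        "per_assessment": assessments * prices["per_assessment"] + review_cost,
        "per_seat": seats * prices["per_seat_year"] + review_cost,
        "subscription": prices["subscription_year"] + review_cost,
    }


# Hypothetical vendor quotes and hiring volume.
prices = {"per_assessment": 20.0, "per_seat_year": 1500.0, "subscription_year": 12000.0}
costs = annual_costs(
    assessments=400,
    seats=5,
    prices=prices,
    review_hours_each=0.5,
    reviewer_hourly_cost=60.0,
)
cheapest = min(costs, key=costs.get)
print(costs, cheapest)
```

With these placeholder numbers, reviewer time is as large as or larger than the license fee in every model, which is why manual grading effort belongs in the comparison.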

FAQ

What is a technical hiring assessment tool and how should it be used?

A technical hiring assessment tool is software that delivers and scores tests to evaluate candidate technical abilities. Use it to screen for core competencies, validate skills claimed on resumes, and structure follow-up interviews with targeted questions based on assessment results.

How reliable are coding assessment platforms for predicting job performance?

Predictive value depends on test design and validation. Short, well-targeted tasks can predict specific skills (e.g., algorithmic problem solving), while predicting broader job performance requires multi-format assessments and validation against on-the-job outcomes.

What are common mistakes when building pre-employment technical screening tests?

Common mistakes include using overly generic questions, lacking clear rubrics, relying only on timed puzzles, and ignoring accessibility and fairness. These errors reduce validity and introduce bias.

How can automated grading and manual review be combined effectively?

Use automated grading for objective items (test cases, performance), and reserve manual review for architecture, design, and code readability using standardized rubrics. A hybrid approach balances scalability and nuance.

How should a pilot be run to validate a technical hiring assessment tool?

Run the tool on a sample of current employees and recent hires, compare scores to performance reviews, measure inter-rater reliability for manual items, and iterate on tasks that show weak correlation to on-the-job performance.
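The score-to-performance comparison in a pilot can be sketched with a Pearson correlation. The scores and review ratings below are invented sample data, and Pearson is one reasonable choice rather than a required method; small pilot samples give noisy estimates.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least two values")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical pilot: assessment scores and performance review ratings
# for the same six current employees.
assessment_scores = [55, 62, 70, 78, 85, 91]
review_ratings = [2.5, 3.0, 3.0, 3.5, 4.0, 4.5]

r = pearson(assessment_scores, review_ratings)
print(round(r, 2))
```

Running the same calculation per task, rather than only on the total score, helps identify which tasks show weak correlation and should be revised or dropped.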