Why Your Agile Sprint is Lagging: The 2026 Shift to Autonomous QA

In 2026, the competitive advantage in software development comes down to one thing: how fast you can ship quality code. Thanks to AI-assisted development tools, engineering teams are writing, reviewing, and merging code at unprecedented velocity. Features that once took weeks are now delivered in days.

But somewhere between the last commit and the production deployment, something quietly eats your timeline alive.

It is your QA process.

Not because your QA engineers are slow or unskilled. But because the core workflow — reading a requirement, interpreting acceptance criteria, designing test cases, writing automation scripts — was never designed for the speed that 2026 demands. It was built for a world where software moved slowly. That world no longer exists.

The Invisible Bottleneck: Manual Translation

Every time a Jira ticket lands in your sprint, a QA engineer sits down and begins a translation exercise. They read the user story. They interpret the acceptance criteria. They make judgment calls about what "done" really means. Then they manually write test steps, one by one, hoping their interpretation matches what the product team actually intended.

This manual translation from requirement to test case is where most sprints quietly bleed momentum — and most teams never even measure it.

The consequences are predictable and expensive.

Inconsistent Coverage. Two QA engineers reading the same user story will produce different test cases. Neither is wrong, but neither is complete. When acceptance criteria are vague — and they almost always are — human interpretation fills the gap differently every time. Critical edge cases get missed. Duplicate effort gets created. Coverage becomes a guess, not a guarantee.

Maintenance Debt. Requirements change constantly in agile environments. A story gets updated in Jira. A new acceptance criterion is added mid-sprint. The corresponding test cases? They may or may not get updated, depending on who notices, who has time, and whether the traceability chain was maintained manually. It usually was not. Over time, your test suite becomes a historical artifact of how requirements used to look — not how they look today.
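The traceability gap is easier to see with a concrete mechanism. The Python sketch below is not how TestMax or any particular tool works. It assumes each test records the ID of the requirement it covers (the `SHOP-101` keys are hypothetical) plus a SHA-256 fingerprint of that requirement's text at the time the test was written. Comparing fingerprints against the current text flags stale tests, and requirements with no linked test show up as coverage gaps.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(text: str) -> str:
    """Hash a requirement's text so later edits can be detected."""
    return hashlib.sha256(text.strip().encode("utf-8")).hexdigest()


@dataclass
class LinkedTest:
    name: str
    requirement_id: str    # hypothetical ticket key, e.g. "SHOP-101"
    requirement_hash: str  # fingerprint of the requirement text when the test was written


def stale_tests(requirements: dict[str, str], tests: list[LinkedTest]) -> list[str]:
    """Names of tests whose linked requirement was edited or deleted."""
    stale = []
    for t in tests:
        current = requirements.get(t.requirement_id)
        if current is None or fingerprint(current) != t.requirement_hash:
            stale.append(t.name)
    return stale


def uncovered_requirements(requirements: dict[str, str], tests: list[LinkedTest]) -> list[str]:
    """Requirement IDs that no test links to, i.e. coverage gaps."""
    linked = {t.requirement_id for t in tests}
    return sorted(rid for rid in requirements if rid not in linked)


if __name__ == "__main__":
    original = "Users can reset their password via email."
    reqs = {"SHOP-101": original, "SHOP-102": "Cart total updates when quantity changes."}
    tests = [LinkedTest("test_password_reset_email", "SHOP-101", fingerprint(original))]
    reqs["SHOP-101"] = "Users can reset their password via email or SMS."  # mid-sprint edit
    print(stale_tests(reqs, tests))             # ['test_password_reset_email']
    print(uncovered_requirements(reqs, tests))  # ['SHOP-102']
```

When a story is edited mid-sprint, the affected test is surfaced for review instead of silently drifting out of date.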

Script Flakiness. Manually written automation scripts rely on selectors, element IDs, and UI structures that change with every release cycle. A front-end refactor or a minor UI update breaks dozens of tests at once. Your automation engineers spend more time fixing broken scripts than writing new ones. The maintenance burden compounds with every sprint.

These are not niche problems. They are the standard operating reality for the majority of QA teams in 2026. And they share a single root cause: the requirement and the test exist in completely separate worlds, connected only by a human manually bridging the gap.

The Rise of Autonomous QA: Requirement-Driven Testing

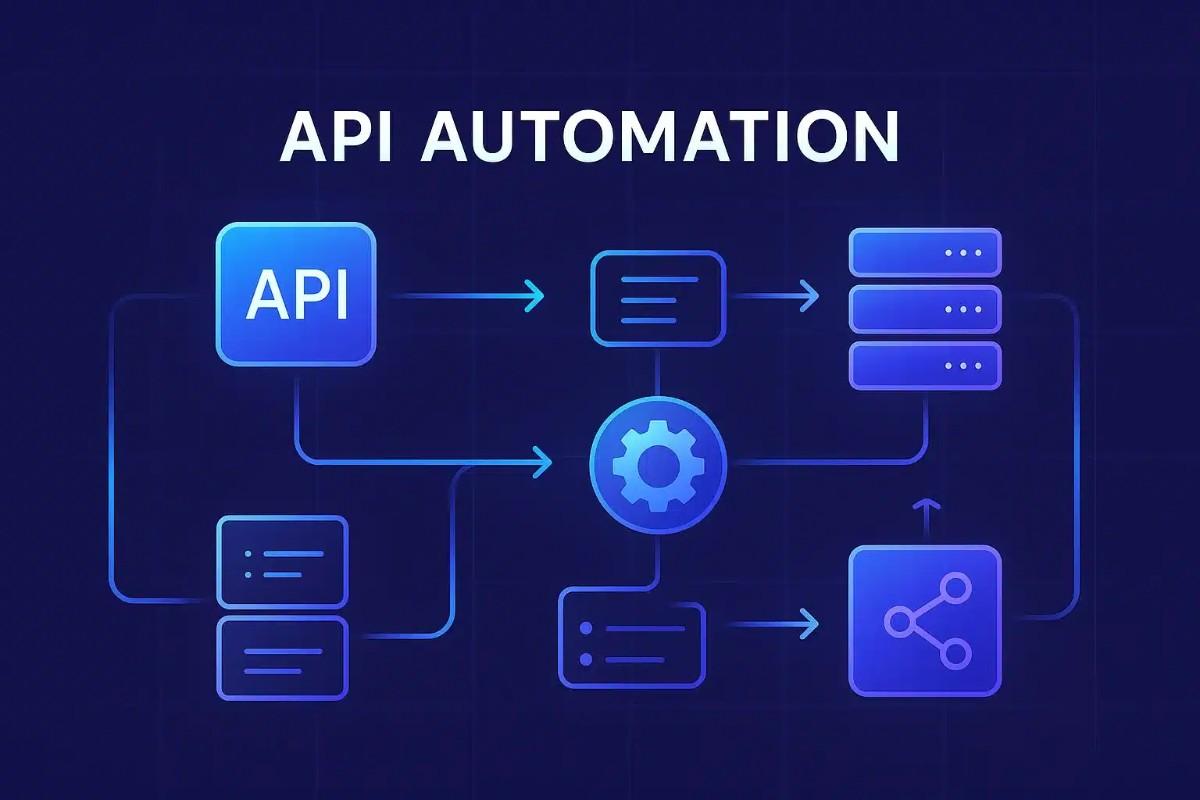

The industry's response to this bottleneck is a new category of tooling: AI-driven software testing platforms that eliminate the manual translation layer entirely.

The leader in this space is TestMax, an AI test automation platform built around a fundamentally different philosophy. Instead of asking your QA team to start from a blank script, TestMax starts from the business requirement itself.

This approach is called requirement-driven autonomous testing, and it changes everything about how quality is delivered.

Here is how the TestMax workflow operates in practice:

Read and Evaluate. TestMax ingests requirements directly from Jira or Azure DevOps. It does not just import the text; it evaluates each requirement for testability, flagging ambiguity and incomplete acceptance criteria before a single test is created. Problems are caught at the source, not discovered three sprints later.
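A testability check of this kind can be approximated with simple rules. The sketch below is a hypothetical illustration, not TestMax's evaluation logic. It assumes acceptance criteria arrive as plain strings, flags words from a hand-picked `VAGUE_TERMS` list, expects Given/When/Then phrasing, and warns when a criterion mentions a limit without a number. A production evaluator would likely use far richer language analysis, but the inputs and outputs look similar.

```python
import re

# Hypothetical, hand-picked words that usually make a criterion hard to test.
VAGUE_TERMS = ["fast", "quickly", "user-friendly", "intuitive", "easy",
               "appropriate", "properly", "as needed", "etc"]

# "Given ... when ... then", in that order, anywhere in the criterion.
GWT = re.compile(r"\bgiven\b.*\bwhen\b.*\bthen\b", re.IGNORECASE | re.DOTALL)
LIMIT_WORDS = re.compile(r"\b(limit|maximum|minimum|at least|at most)\b", re.IGNORECASE)


def review_criterion(criterion: str) -> list[str]:
    """Return findings for one acceptance criterion; an empty list means no issues found."""
    findings = []
    for term in VAGUE_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", criterion, re.IGNORECASE):
            findings.append(f"vague term: '{term}'")
    if not GWT.search(criterion):
        findings.append("not in Given/When/Then form")
    if LIMIT_WORDS.search(criterion) and not re.search(r"\d", criterion):
        findings.append("mentions a limit without a concrete value")
    return findings


def review_story(criteria: list[str]) -> dict[str, list[str]]:
    """Review every criterion in a story; a story with no criteria is flagged as a whole."""
    if not criteria:
        return {"<story>": ["no acceptance criteria"]}
    return {c: review_criterion(c) for c in criteria}


if __name__ == "__main__":
    story = [
        "The login page should load fast.",
        "Given a registered user, when they enter a wrong password 3 times, "
        "then the account is locked for 15 minutes.",
    ]
    for criterion, issues in review_story(story).items():
        print(issues or "ok", "<-", criterion)
```

The first criterion is flagged twice (a vague term and no Given/When/Then structure); the second passes because it names a trigger, an outcome, and concrete numbers.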

Generate Test Scenarios. From each validated requirement, TestMax's AI automatically generates a comprehensive set of test scenarios: functional tests, negative tests, boundary conditions, and edge cases. Coverage decisions are made by the AI based on the requirement itself, not by a QA engineer's subjective interpretation on a given afternoon.
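Boundary conditions in particular follow a well-known technique, boundary value analysis. The sketch below assumes a criterion of the hypothetical form "<field> must be between <min> and <max>" and derives one functional case, the valid edges, and the two out-of-range negative cases from it. It illustrates the idea only; it is not TestMax's generation algorithm.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    category: str      # "functional", "boundary", or "negative"
    field: str
    value: int
    expect_valid: bool


def scenarios_for_range(field: str, minimum: int, maximum: int) -> list[Scenario]:
    """Boundary value analysis for an inclusive integer range [minimum, maximum]."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    # One representative "happy path" value from the middle of the range.
    out = [Scenario("functional", field, (minimum + maximum) // 2, True)]
    # Valid edges: the bounds and their inner neighbors (deduplicated for tiny ranges).
    for v in sorted({minimum, minimum + 1, maximum - 1, maximum}):
        if minimum <= v <= maximum:
            out.append(Scenario("boundary", field, v, True))
    # Invalid edges: just outside each bound.
    out.append(Scenario("negative", field, minimum - 1, False))
    out.append(Scenario("negative", field, maximum + 1, False))
    return out


def scenarios_from_criterion(text: str) -> list[Scenario]:
    """Parse the hypothetical pattern '<field> must be between <min> and <max>'."""
    m = re.search(r"(\w+) must be between (-?\d+) and (-?\d+)", text, re.IGNORECASE)
    if m is None:
        return []
    return scenarios_for_range(m.group(1).lower(), int(m.group(2)), int(m.group(3)))


if __name__ == "__main__":
    for s in scenarios_from_criterion("Quantity must be between 1 and 10 per order."):
        print(s.category, s.value, "valid" if s.expect_valid else "rejected")
```

For "Quantity must be between 1 and 10", this yields 5 as the functional case, 1, 2, 9, and 10 as boundary cases, and 0 and 11 as negative cases.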

Deploy Playwright Scripts. This is where TestMax separates itself from every generic AI test case generator on the market. It does not stop at test scenarios: it automatically converts them into production-grade Playwright scripts that are complete, executable, and ready to run without a human writing a single line of code.

For teams that have been searching for the best AI tool for Playwright automation, TestMax is the direct answer. It is not a code assistant that helps you write Playwright faster. It is a platform that writes Playwright for you, derived directly from your requirements.

No-Code AI Test Automation: Scaling Quality Without Scaling Headcount

The deeper strategic value of a no-code AI test automation platform is what it does to your team's capacity model.

Traditional automation scales with people. You want more test coverage? You hire more automation engineers. You want faster execution? You add more infrastructure. The human bottleneck is always the ceiling.

With TestMax, automation scales with requirements. Every requirement that enters the system produces test cases and executable scripts automatically. Your coverage grows as your product grows, not as your QA headcount grows. A team of five can now maintain the test coverage that previously required fifteen, because the manual translation layer no longer exists.

This is not an incremental improvement to your QA process. It is a structural change to what QA costs and what it can deliver.

Shift Left or Fall Behind

The principle of shift-left testing has been discussed for years. But in practice, most teams still treat QA as a phase that begins after development ends. Requirements go to dev. Dev ships code. QA tests it. Bugs come back. The cycle repeats.

TestMax makes shift-left testing operationally real. When your requirements flow directly into test generation, automatically and at the moment they are written, QA is no longer downstream of development. It runs in parallel. Coverage is established before the first line of application code is written.

If your team is still operating in the cycle of manual test design, you are not just slower than your competitors — you are spending engineering budget on a problem that has already been solved.

The pipeline exists. Requirements flow directly into execution. The only question is whether your team is using it.

See how TestMax works — start your free trial at testmax.ai