Batch Image Generation with AI: Practical Step-by-Step Workflow


Batch generate images with AI by turning a clear, repeatable prompt and pipeline into automated jobs that produce hundreds or thousands of assets. This guide explains the practical steps, a named checklist for reliable results, integration points with APIs and asset pipelines, and a short e-commerce example to show the technique in a real scenario.

How to batch generate images with AI: step-by-step workflow

Batch generation requires a workflow that bundles prompt design, parameter variation, parallel execution, and post-processing. The core steps are: define targets and constraints, create templated prompts (for controlled variation), schedule API calls or local inference, validate outputs, and store images with metadata for reuse. This approach supports an automated image generation workflow for catalogs, marketing, or creative testing.

Step 1 — Define scope and constraints

Decide target dimensions, file format (PNG, JPG, WebP), visual style, and rejection rules (nudity, text artifacts). Document tolerances for quality and consistency; these feed into prompt templates and validation rules used downstream.
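One way to keep these decisions in a single place is a small, validated configuration object. The sketch below is only an illustration: the `BatchSpec` name, its fields, and the 10% rejection tolerance are assumptions, not part of any particular tool.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BatchSpec:
    """Scope and constraints for one batch (all field names are illustrative)."""
    width: int = 1024
    height: int = 1024
    file_format: str = "PNG"            # one of PNG, JPG, WebP
    style: str = "studio product photo"
    rejection_rules: tuple = ("nudity", "text_artifacts")
    max_reject_rate: float = 0.10       # assumed tolerance for failed outputs

    def __post_init__(self):
        # Fail fast on values that would break validation downstream.
        if self.file_format not in {"PNG", "JPG", "WebP"}:
            raise ValueError(f"unsupported format: {self.file_format}")
        if self.width <= 0 or self.height <= 0:
            raise ValueError("dimensions must be positive")
        if not 0.0 <= self.max_reject_rate <= 1.0:
            raise ValueError("max_reject_rate must be between 0 and 1")

spec = BatchSpec(width=800, height=800, file_format="WebP")
print(spec)
```

Prompt templates and validation rules can then read from `spec` instead of hard-coding sizes and formats in several places.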

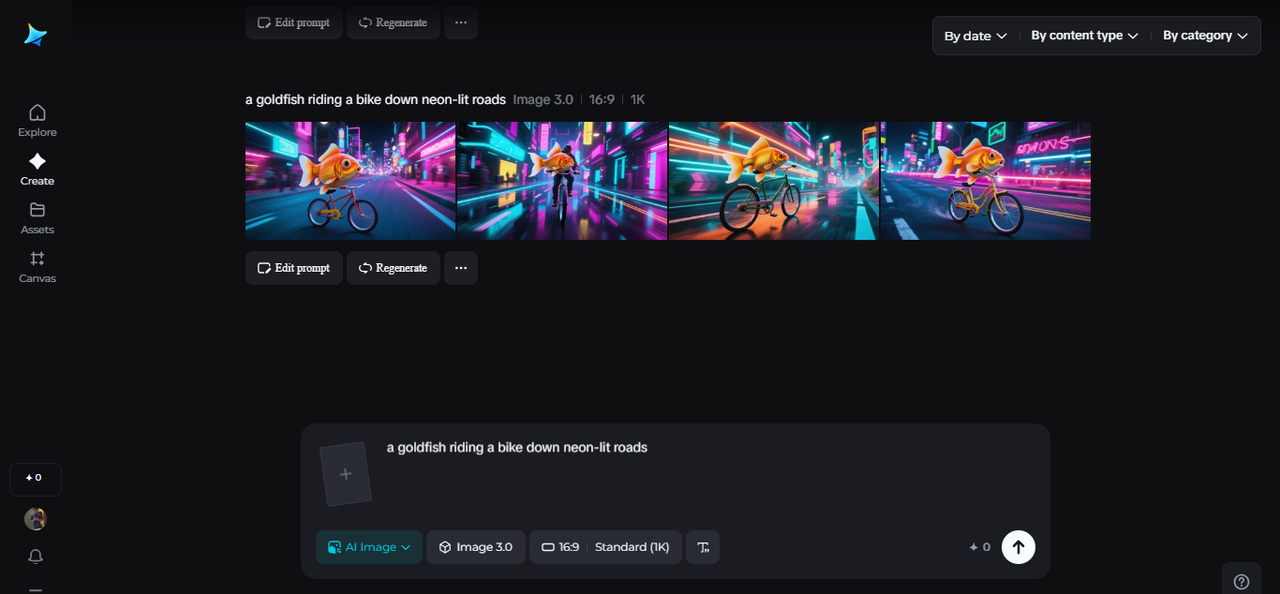

Step 2 — Create templated prompts and variations

Make a base prompt and generate controlled variations using variables (color, background, angle). Create a prompt file or CSV with one row per desired output, so the automation can iterate over the rows deterministically or with seeded randomness for variety.
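As a minimal sketch of this step, the following expands CSV rows into prompts with Python's standard `csv` module and `str.format`. The column names, the base prompt wording, and the seed scheme (a base seed plus the row index) are assumptions chosen for illustration.

```python
import csv
import io

BASE_PROMPT = "Product photo of a {product}, {color}, {background} background, {angle} view"

# Inline CSV stands in for a prompt file; one row per desired output.
ROWS_CSV = """product,color,background,angle
ceramic mug,red,white,front
ceramic mug,blue,white,side
"""

def build_prompts(csv_text: str, template: str, base_seed: int = 1000) -> list[dict]:
    """Expand each CSV row into a prompt plus a deterministic seed."""
    jobs = []
    for index, row in enumerate(csv.DictReader(io.StringIO(csv_text))):
        jobs.append({
            "prompt": template.format(**row),
            "seed": base_seed + index,   # reproducible, but different per row
            "variables": row,
        })
    return jobs

jobs = build_prompts(ROWS_CSV, BASE_PROMPT)
for job in jobs:
    print(job["seed"], job["prompt"])
```

Because the seed is derived from the row position, rerunning the same file yields the same prompt and seed pairs; whether a given model honors a seed parameter depends on the provider.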

Step 3 — Parallelize around rate limits

Use worker queues or serverless functions to submit requests while respecting API rate limits and concurrency. Track request IDs, retry transient failures, and log quota usage. For self-hosted models, monitor GPU utilization and memory to avoid OOM errors.
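The sketch below shows one possible shape for this step using a bounded thread pool from the standard library. `fake_generate` is a hypothetical stand-in for a real API or local inference call, and the concurrency and retry limits are assumed values that should come from your provider's documented quota.

```python
import uuid
from concurrent.futures import ThreadPoolExecutor, as_completed

MAX_CONCURRENCY = 4   # assumed limit; set from the provider's documented quota
MAX_ATTEMPTS = 3

class TransientError(Exception):
    """Stand-in for a retryable failure such as a timeout or HTTP 429."""

_calls = {}

def fake_generate(prompt: str) -> bytes:
    """Hypothetical generation call; fails once per prompt to exercise retries."""
    _calls[prompt] = _calls.get(prompt, 0) + 1
    if _calls[prompt] == 1:
        raise TransientError("simulated transient failure")
    return f"image-bytes-for:{prompt}".encode()

def run_job(prompt: str) -> dict:
    """Run one job, retrying transient failures and recording a request ID."""
    request_id = str(uuid.uuid4())
    for attempt in range(1, MAX_ATTEMPTS + 1):
        try:
            image = fake_generate(prompt)
            return {"request_id": request_id, "prompt": prompt,
                    "status": "ok", "attempts": attempt, "bytes": len(image)}
        except TransientError:
            continue
    return {"request_id": request_id, "prompt": prompt,
            "status": "failed", "attempts": MAX_ATTEMPTS, "bytes": 0}

def run_batch(prompts: list[str]) -> list[dict]:
    """Submit all prompts, but never run more than MAX_CONCURRENCY at once."""
    with ThreadPoolExecutor(max_workers=MAX_CONCURRENCY) as pool:
        futures = [pool.submit(run_job, p) for p in prompts]
        return [f.result() for f in as_completed(futures)]

results = run_batch([f"prompt {i}" for i in range(10)])
print(sum(r["status"] == "ok" for r in results), "of", len(results), "succeeded")
```

Results arrive in completion order, so each record carries its own prompt and request ID rather than relying on list position. A production version would also wait between retries instead of retrying immediately.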

Step 4 — Validate outputs automatically

Run automated checks: image dimensions, format, perceptual hash for duplicates, NSFW filter, and basic aesthetic heuristics (contrast, bounding-box presence). Flag failures for re-generation with adjusted prompts or human review.
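Two of these checks can be sketched with the standard library alone. The example reads PNG dimensions from the file header and computes a simple average hash for near-duplicate detection. It assumes the image has already been decoded and downscaled to a small grayscale grid by an imaging library of your choice; the `validate` helper and the distance threshold of 5 bits are illustrative assumptions.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

def png_dimensions(data: bytes) -> tuple[int, int]:
    """Read width and height from the IHDR chunk at the start of a PNG."""
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    width, height = struct.unpack(">II", data[16:24])
    return width, height

def average_hash(gray: list[list[int]]) -> int:
    """One bit per pixel of a small grayscale grid: 1 if brighter than the mean."""
    pixels = [p for row in gray for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")

def validate(data, gray, expected_size, seen_hashes, max_distance=5):
    """Return a list of failure reasons; an empty list means the image passed."""
    failures = []
    try:
        if png_dimensions(data) != expected_size:
            failures.append("wrong_dimensions")
    except ValueError:
        failures.append("wrong_format")
    h = average_hash(gray)
    if any(hamming(h, s) <= max_distance for s in seen_hashes):
        failures.append("near_duplicate")
    seen_hashes.append(h)
    return failures

# Synthetic inputs: a minimal PNG header for an 800x800 image and two grids.
header = PNG_SIGNATURE + struct.pack(">I", 13) + b"IHDR" + struct.pack(">II", 800, 800)
gradient = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
inverted = [[252 - v for v in row] for row in gradient]

seen = []
print(validate(header, gradient, (800, 800), seen))
print(validate(header, gradient, (800, 800), seen))
print(validate(b"junk", inverted, (800, 800), seen))
```

NSFW filtering and aesthetic heuristics usually need a trained classifier or an imaging library, so they are left out of this sketch.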

Step 5 — Post-process and store with metadata

Apply upscaling, background removal, or color correction as needed. Store files in object storage and attach metadata: prompt text, model and version, seeds, generation timestamp, validation status, and any review notes. This supports reproducibility and auditing.
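A metadata record of this kind might look like the following sketch, which builds a JSON document that could be stored next to each image in object storage. The field names, the `example-model` identifier, and the added content hash are assumptions for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_metadata(image_bytes: bytes, prompt: str, model: str, model_version: str,
                   seed: int, validation_status: str, review_notes: str = "") -> dict:
    """Collect the fields needed to reproduce and audit one generated image."""
    return {
        "sha256": hashlib.sha256(image_bytes).hexdigest(),  # ties the record to the file
        "prompt": prompt,
        "model": model,
        "model_version": model_version,
        "seed": seed,
        "generated_at": datetime.now(timezone.utc).isoformat(),
        "validation_status": validation_status,
        "review_notes": review_notes,
    }

record = build_metadata(
    image_bytes=b"fake-image-bytes",      # placeholder for real image content
    prompt="Product photo of a red mug",
    model="example-model",                # hypothetical model name
    model_version="v1",
    seed=1000,
    validation_status="passed",
)
sidecar_json = json.dumps(record, indent=2, sort_keys=True)
print(sidecar_json)
```

Writing the record as a sidecar file (for example `image.png` plus `image.json`) keeps it portable across storage backends; object tags or a database table are alternatives.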

BATCH checklist (named framework) for reliable bulk generation

- Base prompt: create a single clear baseline prompt.

- Augment: define variables and controlled permutations.

- Test: run small pilot batches and record failures.

- Control: set validation rules and rate-limit handling.

- Handover: store images with metadata and versioning.

Short real-world example: 250 product thumbnails for an e-commerce catalog

Create a CSV with SKU, color, and angle columns. Use a templated prompt that fills in all three: "Product photo of {SKU} on a white background, {angle} angle, {color}, high detail." Run a 50-item pilot to tune the prompt for consistent lighting and background. After validating the pilot, use worker processes to submit the remaining jobs in parallel batches of 10 to respect API rate limits. Post-process by cropping and naming files SKU_variant.jpg, then attach metadata (prompt, seed, model) to each image.
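The planning side of this example can be sketched as follows. The rows are synthetic, the template fills the angle from the CSV column, and the `variant` part of the file name is assumed to be color plus angle; all of these are illustrative choices rather than a fixed convention.

```python
from itertools import islice

TEMPLATE = "Product photo of {sku} on a white background, {angle} angle, {color}, high detail."

def chunked(items, size):
    """Yield successive lists of at most `size` items."""
    it = iter(items)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch

def output_name(row: dict) -> str:
    """SKU_variant.jpg, where the variant is assumed to be color plus angle."""
    return f"{row['sku']}_{row['color']}-{row['angle']}.jpg"

# Synthetic catalog of 250 rows standing in for the real CSV.
colors = ["red", "blue", "black"]
angles = ["front", "45-degree"]
rows = [
    {"sku": f"SKU{i:04d}", "color": colors[i % 3], "angle": angles[i % 2]}
    for i in range(250)
]

pilot = rows[:50]                          # tune prompt and validation here first
batches = list(chunked(rows[50:], 10))     # remaining items in batches of 10

print(len(pilot), "pilot items,", len(batches), "batches of up to 10")
print(TEMPLATE.format(**rows[0]))
print(output_name(rows[0]))
```

Each batch can then be handed to whatever worker pool or queue submits the actual generation requests.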

Model-specific limits and usage rules affect prompt and format choices, so follow your provider's official API documentation, for example the OpenAI image API guide.

Practical tips for automation and quality

- Use deterministic seeds or controlled randomness to reduce drift between runs.

- Store prompts and seeds in a single source of truth so batches can be reproduced.

- Implement a staged rollout: pilot → small batch → full batch to surface issues early.

- Cache results for repeated tasks and avoid regenerating identical prompts.

- Log metrics: success rate, validation failures, average generation time, and cost per image.
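The caching tip above can be sketched with a key derived from everything that determines the output. This is a minimal in-memory illustration: the `GenerationCache` class and the `fake_generate` function are hypothetical, and a real pipeline would more likely back the cache with object storage or a database.

```python
import hashlib
import json

def cache_key(prompt: str, seed: int, model: str) -> str:
    """Stable key built from the inputs that determine the generated image."""
    payload = json.dumps({"prompt": prompt, "seed": seed, "model": model}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

class GenerationCache:
    """In-memory cache that also counts hits and misses for metrics."""

    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get_or_generate(self, prompt, seed, model, generate):
        key = cache_key(prompt, seed, model)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        image = generate(prompt, seed)
        self._store[key] = image
        return image

def fake_generate(prompt: str, seed: int) -> bytes:
    """Hypothetical stand-in for a real generation call."""
    return f"{prompt}|{seed}".encode()

cache = GenerationCache()
cache.get_or_generate("red mug", 1, "example-model", fake_generate)
cache.get_or_generate("red mug", 1, "example-model", fake_generate)  # served from cache
cache.get_or_generate("red mug", 2, "example-model", fake_generate)  # new seed, new call
print("hits:", cache.hits, "misses:", cache.misses)
```

Including the model name in the key avoids serving stale images after a model upgrade; adding the model version would tighten this further.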

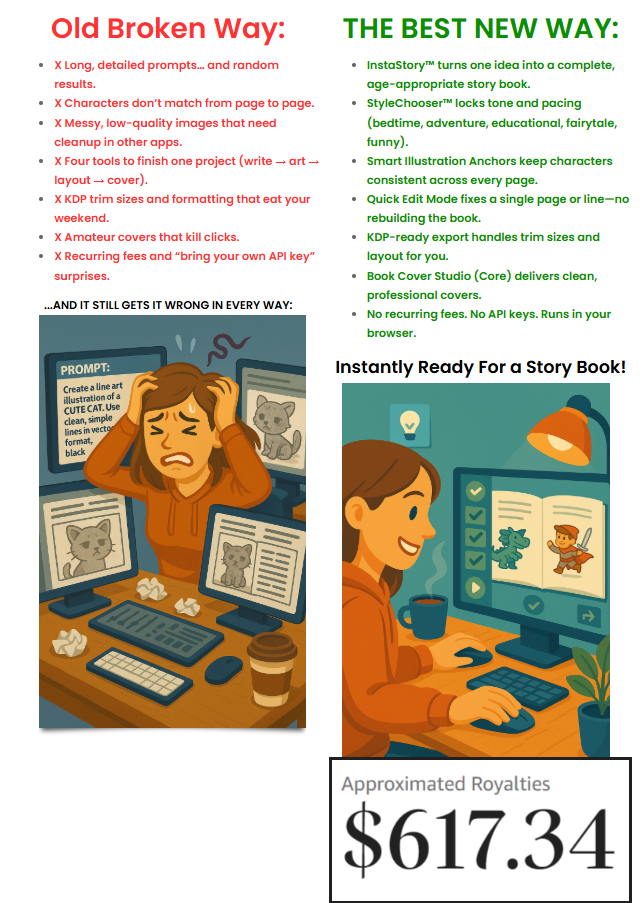

Common mistakes and trade-offs

Trying to generate everything in one uncontrolled burst risks hitting rate limits, producing inconsistent images, and wasting budget. Excessive prompt variation without governance reduces brand consistency. Overly strict validation can reject usable outputs and create rework. Balance cost, speed, and quality: higher consistency typically requires more prompt engineering and post-processing, while lower cost may accept greater variability. Typical mistakes include not versioning prompts, skipping metadata, and failing to pilot variations.

Integration points and tooling

Key integration components: prompt templating (CSV, JSON), job scheduler (queue, cron, serverless), concurrency manager (respect API rate limits), validation service (image checks), and asset storage (S3-compatible buckets). Include monitoring and alerting for failures and quota exhaustion. Consider automated upscaling or background processing pipelines to avoid manual intervention.

Security, compliance, and governance

Store only required data with generated assets, and track provenance. Apply content filters to avoid producing disallowed content. Consult provider policies and data protection standards when storing or sharing assets. For enterprise projects, maintain an audit trail linking prompts, model versions, and approvals.

FAQ

How can I batch generate images with AI for product catalogs?

Prepare a prompt template and structured input (CSV or JSON) listing variables per SKU. Run a pilot batch to tune the prompt and validation rules. Use parallel workers respecting API limits, validate images automatically, then store validated images with metadata for tracking and reproduction.

How to handle API rate limits when generating many images?

Use a queue system that throttles concurrency, apply exponential backoff with jitter when retrying errors, and adjust batch sizes adaptively. Monitor quota usage in real time. For high-volume needs, negotiate higher limits with the provider or use self-hosted models if feasible.
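A minimal sketch of exponential backoff with jitter is shown below. The `RateLimited` exception and `flaky` function are hypothetical stand-ins, and the base delay and cap are assumed values; the `sleep` and `rng` parameters exist only so the behavior can be demonstrated without real waiting.

```python
import random
import time

class RateLimited(Exception):
    """Stand-in for a provider's rate-limit error (for example, HTTP 429)."""

def call_with_backoff(fn, max_retries=5, base=0.5, cap=30.0,
                      sleep=time.sleep, rng=random.random):
    """Call fn, retrying on RateLimited with capped, jittered exponential delays."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimited:
            if attempt == max_retries:
                raise
            # Jitter: a random fraction of the capped exponential delay.
            sleep(rng() * min(cap, base * 2 ** attempt))

# Demonstration with a function that is rate limited twice, then succeeds.
attempts = {"n": 0}

def flaky():
    attempts["n"] += 1
    if attempts["n"] <= 2:
        raise RateLimited("simulated 429")
    return "ok"

delays = []
result = call_with_backoff(flaky, sleep=delays.append, rng=lambda: 1.0)
print(result, delays)
```

With the default `rng`, each delay is a random fraction of the exponential ceiling, which spreads retries from many workers so they do not all hit the API at the same moment.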

What checks should be included in automated validation?

Include dimension and format checks, perceptual duplicate detection, NSFW filtering, prompt-to-image matching heuristics, and basic visual heuristics (contrast, face detection if relevant). Flag borderline results for human review and log failure reasons for prompt refinement.

Which trade-offs matter most between speed, cost, and consistency?

Speed favors larger parallelism and looser validation, increasing cost and variability. Consistency requires tighter controls, more prompt engineering, and often manual review, which increases cost and slows throughput. Choose the balance that matches business requirements: fast prototypes vs. production-grade assets.

Can existing images be used to improve batch results (fine-tuning or reference)?

Using reference images or fine-tuning (when supported) improves consistency and brand alignment but may require more setup, data curation, and governance. Consider image-to-image workflows or embedding references into prompts as a lower-effort alternative.