How the Data Cloud Is Transforming Data Management and Analytics

The data cloud is an evolving architecture and service model that unifies the storage, processing, governance, and analytics of data across distributed environments. Organizations adopting the data cloud aim to make data more discoverable, interoperable, and usable for analytics, machine learning, and operational reporting while reducing duplication and friction.

- Definition: A unified layer for managing data at scale across clouds, on-premises, and edge environments.

- Core components: centralized metadata, data catalog, unified storage, compute decoupling, and governance controls.

- Benefits: improved analytics, faster access to reliable data, simplified governance, and better collaboration.

- Challenges: security, cost management, regulatory compliance, and technical integration.

What is the data cloud?

At its core, the data cloud is a design paradigm and set of services that replace fragmented data silos with a cohesive environment for data ingestion, cataloging, storage, processing, and consumption. It blends concepts from cloud computing, data warehousing, data lakes, and data fabric to enable large-scale analytics, real-time processing, and machine learning workflows while prioritizing governance and discoverability.

Core components and architecture

Unified storage and compute decoupling

Modern implementations separate compute from storage so analytics engines can scale independently from persistent data. This allows multiple compute engines—batch, streaming, and interactive—to operate on the same underlying datasets without creating copies.
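
As a rough illustration of this separation, the sketch below keeps a dataset in one place and lets two independent "engines" read it. The `SharedStorage` class and both engine functions are hypothetical stand-ins, not any vendor's API; a real deployment would use an object store and separate compute clusters.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterator, List

Row = Dict[str, float]


@dataclass
class SharedStorage:
    """Hypothetical stand-in for an object store that holds each dataset once."""
    datasets: Dict[str, List[Row]] = field(default_factory=dict)

    def write(self, name: str, rows: List[Row]) -> None:
        self.datasets[name] = list(rows)

    def scan(self, name: str) -> Iterator[Row]:
        # Rows are yielded straight from the stored dataset; engines get no private copy.
        yield from self.datasets[name]


def batch_total(storage: SharedStorage, name: str, column: str) -> float:
    """Batch-style engine: aggregate one column over the whole dataset."""
    return sum(row[column] for row in storage.scan(name))


def interactive_filter(
    storage: SharedStorage, name: str, predicate: Callable[[Row], bool]
) -> List[Row]:
    """Interactive-style engine: return only the rows matching a predicate."""
    return [row for row in storage.scan(name) if predicate(row)]
```

Both functions read the same stored rows, so adding a third engine would not require another copy of the data.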

Metadata, cataloging, and data lineage

Comprehensive metadata management and a searchable data catalog are essential. Lineage tracking shows how data moves and changes across systems, supporting reproducibility, auditing, and trust in analytical results.
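
One way to picture lineage is as a graph in which each dataset points to the datasets it was derived from. The sketch below walks that graph to list everything upstream of a report. The dataset names and the `LINEAGE` mapping are invented for illustration; real catalogs record lineage in their own formats.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical lineage edges: each dataset maps to the datasets it was derived from.
LINEAGE: Dict[str, List[str]] = {
    "revenue_report": ["orders_clean", "fx_rates"],
    "orders_clean": ["orders_raw"],
    "orders_raw": [],
    "fx_rates": [],
}


def upstream(dataset: str, lineage: Dict[str, List[str]]) -> Set[str]:
    """Return every dataset that feeds into `dataset`, directly or indirectly."""
    seen: Set[str] = set()
    queue = deque(lineage.get(dataset, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # already visited; also guards against cycles
        seen.add(current)
        queue.extend(lineage.get(current, []))
    return seen
```

An auditor asking "what fed this report?" would call `upstream("revenue_report", LINEAGE)` and get the three source datasets back.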

Governance, access control, and policy enforcement

Governance frameworks in a data cloud include role-based access control, attribute-based policies, encryption controls, and automated policy enforcement. These controls align with regulatory obligations and organizational risk frameworks.
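
The difference between role-based and attribute-based checks can be sketched in a few lines. Everything here is an assumption made for illustration: the `User` and `Dataset` fields, the `pii_reader` role name, and the rule that every check must pass.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class User:
    name: str
    roles: FrozenSet[str]
    region: str


@dataclass(frozen=True)
class Dataset:
    name: str
    required_role: str
    allowed_regions: FrozenSet[str]
    contains_pii: bool


def can_read(user: User, dataset: Dataset) -> bool:
    """Allow a read only if the role check and both attribute checks pass."""
    # Role-based check: the user must hold the role the dataset requires.
    if dataset.required_role not in user.roles:
        return False
    # Attribute-based check: the user's region must be allowed for this dataset.
    if user.region not in dataset.allowed_regions:
        return False
    # Attribute-based check: PII datasets need an extra (hypothetical) role.
    if dataset.contains_pii and "pii_reader" not in user.roles:
        return False
    return True
```

Because the function denies on the first failed check, a missing role or attribute always blocks access rather than silently granting it.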

Benefits for analytics and operations

Improved data accessibility and collaboration

By consolidating metadata and providing common APIs and query layers, the data cloud reduces the time needed for analysts and data scientists to find and prepare datasets. Shared catalogs and standardized schemas improve collaboration across teams.

Scalability and performance

Decoupled compute and elastic provisioning allow workloads to scale for large analytics jobs or high-concurrency query traffic while controlling cost through on-demand resources.

Challenges, compliance, and governance considerations

Security and privacy

Implementing strong encryption in transit and at rest, fine-grained access controls, and continuous monitoring is essential. Aligning controls with regulations such as the European Union's General Data Protection Regulation (GDPR), and with guidance from national data protection authorities, helps manage legal risk.

Data residency and regulatory constraints

Data sovereignty requirements can affect where data is stored and processed. Architectures must support hybrid and multi-region deployments to meet jurisdictional rules while maintaining a unified catalog and governance model.
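
A residency rule can be expressed as a lookup from jurisdiction to permitted regions, checked before any data is placed or processed. The sketch below is illustrative only: the jurisdiction codes, region names, and `RESIDENCY_RULES` table are hypothetical and do not reflect any actual legal requirement.

```python
from typing import Dict, Iterable, Set

# Hypothetical mapping from jurisdiction to the regions where its data may live.
RESIDENCY_RULES: Dict[str, Set[str]] = {
    "EU": {"eu-west", "eu-central"},
    "US": {"us-east", "us-west"},
}


def choose_region(jurisdiction: str, available_regions: Iterable[str]) -> str:
    """Pick a permitted region for the jurisdiction, or fail loudly if none exists."""
    allowed = RESIDENCY_RULES.get(jurisdiction)
    if allowed is None:
        raise ValueError(f"no residency rule defined for {jurisdiction!r}")
    candidates = sorted(allowed & set(available_regions))
    if not candidates:
        raise LookupError(f"no available region satisfies {jurisdiction!r} residency")
    # Alphabetical order keeps the choice deterministic; a real system might rank by cost or latency.
    return candidates[0]
```

Raising an error when no compliant region is available is a deliberate choice here: it is safer for a pipeline to stop than to fall back to a region outside the permitted set.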

Operational complexity and cost control

Migrating to a data cloud often requires re-architecting pipelines and establishing monitoring and cost governance practices to avoid runaway expenses from cloud compute and egress fees.
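
A basic cost guardrail adds up compute and egress spend and raises an alert before the budget is exhausted. The rates, the 80% alert threshold, and the function names below are assumptions for illustration; actual pricing varies by provider and contract.

```python
def estimate_cost(
    compute_hours: float,
    egress_gb: float,
    compute_rate: float,
    egress_rate: float,
) -> float:
    """Estimated spend = compute hours x hourly rate + egress GB x per-GB rate."""
    return compute_hours * compute_rate + egress_gb * egress_rate


def over_alert_threshold(cost: float, budget: float, threshold: float = 0.8) -> bool:
    """True once spend reaches the given fraction of the budget (hypothetical 80% default)."""
    return cost >= budget * threshold


# Example with made-up rates: 10 compute hours at 2.0 per hour, 80 GB egress at 0.125 per GB.
example_cost = estimate_cost(10, 80, compute_rate=2.0, egress_rate=0.125)
```

With these made-up inputs the estimate is 30.0 (20.0 for compute plus 10.0 for egress), which shows how egress alone can account for a third of the bill.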

Adoption patterns and best practices

Start with use cases and data domains

Prioritizing high-value use cases—such as customer analytics, supply chain visibility, or fraud detection—helps demonstrate value quickly. Organizing by domain and creating federated governance teams supports scale.

Automate cataloging and lineage

Automated ingestion of metadata and lineage reduces manual effort and improves the reliability of the catalog. Integrating shared metadata tooling and standards (for example, schema registries and OpenMetadata) supports interoperability.
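
To make automated cataloging concrete, the sketch below infers column types from sample records and builds a minimal catalog entry. The entry layout and function names are hypothetical; they are not the OpenMetadata schema or any schema registry's API.

```python
from typing import Any, Dict, List


def infer_schema(records: List[Dict[str, Any]]) -> Dict[str, List[str]]:
    """Map each column name to the sorted list of Python type names seen in the sample."""
    seen: Dict[str, set] = {}
    for record in records:
        for column, value in record.items():
            seen.setdefault(column, set()).add(type(value).__name__)
    return {column: sorted(types) for column, types in seen.items()}


def catalog_entry(name: str, records: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Build a minimal, hypothetical catalog entry from sampled records."""
    return {
        "name": name,
        "sampled_rows": len(records),
        "columns": infer_schema(records),
    }
```

A column that reports more than one type (for example, both `float` and `NoneType`) is a useful signal that the field is nullable or inconsistently populated.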

Monitor compliance and measure outcomes

Regular audits, automated policy checks, and outcome-oriented metrics (time-to-insight, query latency, cost per analytic job) guide continuous improvement.
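
Two of these metrics can be computed directly from job records. The sketch below assumes each job is logged with a latency and a cost; the field names `latency_s` and `cost` are hypothetical.

```python
from statistics import median
from typing import Dict, List


def summarize_jobs(jobs: List[Dict[str, float]]) -> Dict[str, float]:
    """Return median query latency and average cost per job for a batch of job records."""
    if not jobs:
        raise ValueError("at least one job record is required")
    total_cost = sum(job["cost"] for job in jobs)
    return {
        "median_latency_s": median(job["latency_s"] for job in jobs),
        "cost_per_job": total_cost / len(jobs),
    }
```

The median is used rather than the mean so that a single slow query does not dominate the latency figure.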

Future trends

Expect stronger integration of real-time streaming, edge analytics, and model governance for deployed machine learning. Interoperability standards and increased regulatory scrutiny will influence data residency, consent management, and traceability requirements. Research from academic and standards organizations continues to refine best practices for trust, transparency, and efficiency.

For authoritative definitions and foundational concepts related to cloud computing and service models, refer to the National Institute of Standards and Technology (NIST) Special Publication on cloud computing.

Frequently asked questions

What is the data cloud and how does it differ from traditional cloud storage?

The data cloud emphasizes a unified ecosystem for cataloging, governance, and analytics across diverse storage and compute environments, while traditional cloud storage focuses primarily on persistent object or block storage without integrated metadata, lineage, or analytics layers.

How does governance work in a data cloud?

Governance combines policies, automated enforcement, role and attribute-based access controls, encryption, and monitoring. It often includes a centralized policy engine and federated controls that allow domains to enforce local rules within a global framework.
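
The layering of global and domain rules might look like the sketch below, in which a request must satisfy every global rule plus any rules its domain adds. The rule contents, the request fields, and the `finance` domain are all invented for illustration.

```python
from typing import Callable, Dict, List

Request = Dict[str, object]
Rule = Callable[[Request], bool]

# Hypothetical global rule applied to every request: data must be encrypted.
GLOBAL_RULES: List[Rule] = [
    lambda request: request.get("encrypted") is True,
]

# Hypothetical domain rules layered on top of the global ones.
DOMAIN_RULES: Dict[str, List[Rule]] = {
    "finance": [lambda request: request.get("purpose") == "audit"],
}


def is_allowed(request: Request) -> bool:
    """A request passes only if all global rules and all rules of its domain pass."""
    domain_rules = DOMAIN_RULES.get(str(request.get("domain")), [])
    return all(rule(request) for rule in GLOBAL_RULES + domain_rules)
```

In this arrangement a domain can tighten access but cannot loosen it, because the global rules are always evaluated.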

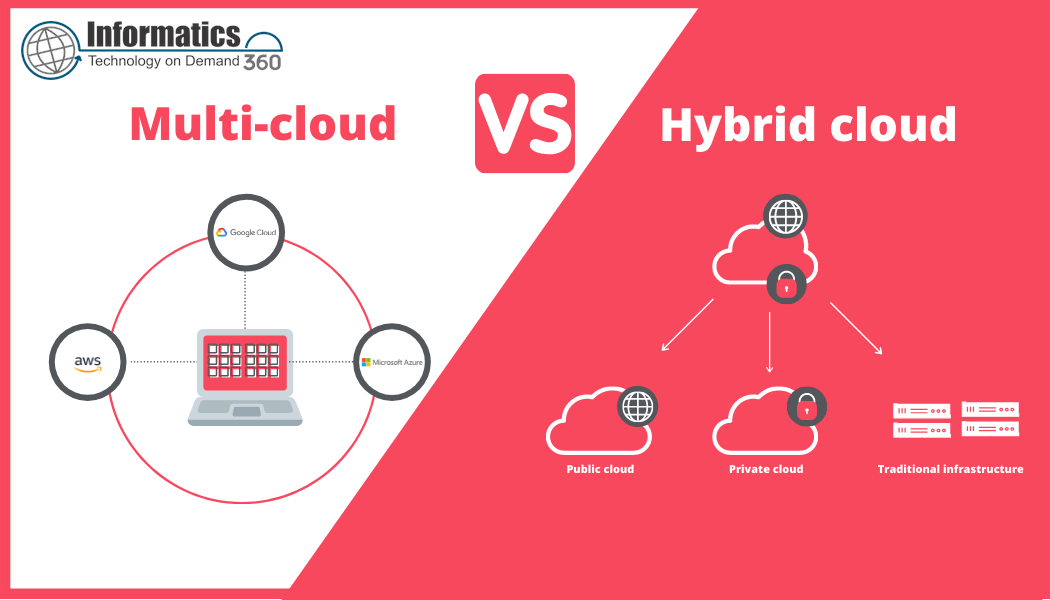

Can a data cloud support multi-cloud and hybrid deployments?

Yes. Architectures often use virtualization, replication, and federated metadata layers so data and queries can span on-premises systems and multiple cloud providers while preserving governance and lineage.
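
A federated metadata layer can be thought of as a lookup that resolves one logical dataset name to its physical locations in each environment. In the sketch below the environment names, URIs, and `CATALOGS` structure are hypothetical placeholders.

```python
from typing import Dict, List, Tuple

# Hypothetical per-environment catalogs mapping logical names to physical URIs.
CATALOGS: Dict[str, Dict[str, str]] = {
    "on_prem": {"sales.orders": "hdfs://example-cluster/sales/orders"},
    "cloud_a": {
        "sales.orders": "s3://example-bucket/sales/orders",
        "web.clicks": "s3://example-bucket/web/clicks",
    },
}


def resolve(logical_name: str, catalogs: Dict[str, Dict[str, str]]) -> List[Tuple[str, str]]:
    """Return (environment, uri) pairs for every environment that holds the dataset."""
    return [
        (environment, entries[logical_name])
        for environment, entries in sorted(catalogs.items())
        if logical_name in entries
    ]
```

A query planner could use the returned list to decide where to run a query, while governance and lineage stay attached to the single logical name.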

What are common risks when moving to a data cloud?

Common risks include data sprawl, cost overruns, insufficient access controls, and lack of interoperability between legacy systems. Addressing these risks requires phased migration, strong metadata practices, and ongoing cost monitoring.