Implementing AI for Customer Support: Strategy, Framework, and Best Practices


This guide explains how to deploy AI for customer support in a way that reduces repetitive work, preserves customer experience, and keeps compliance obligations and escalation paths clear. Implementation starts with defining use cases, mapping data flows, and setting success metrics, so that automation improves response time and resolution rates without increasing risk.

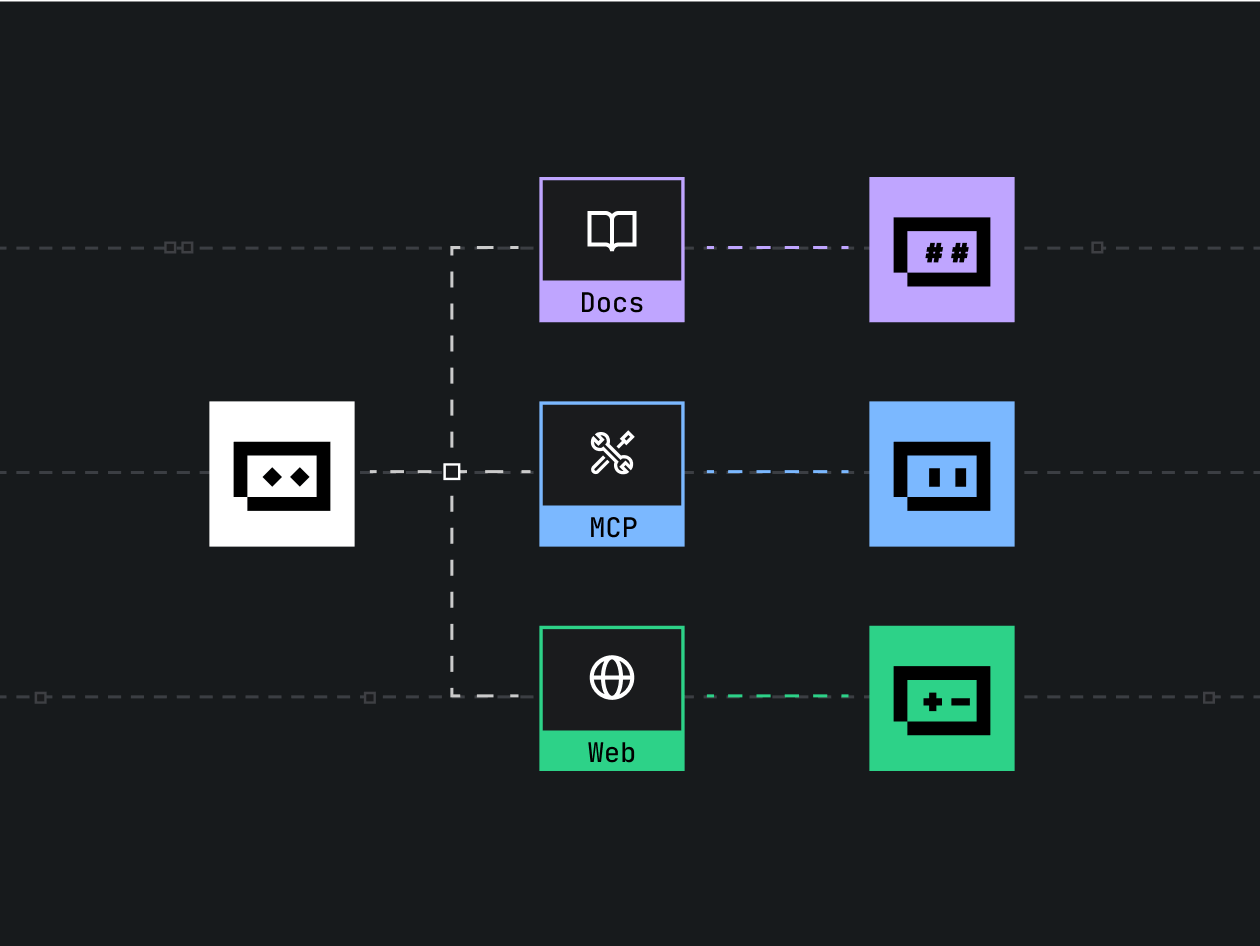

AI for customer support: an implementation roadmap

Start with a roadmap that addresses business goals, compliance, integration, and operational changes. Use cases range from conversational chatbots and automated responses to routing, summarization, and ticket triage. Key related terms include chatbot, virtual agent, natural language processing (NLP), intent recognition, entity extraction, knowledge base, CRM, ticketing system, human-in-the-loop, escalation policy, SLAs, deflection rate, and first contact resolution (FCR).

Step 1 — Align goals and use cases

Identify the highest-impact use cases: FAQ automation, pre-triage for human agents, status updates, and agent assist (response suggestions and summarization). Map each use case to success metrics such as reduced handle time, higher CSAT, fewer escalations, or lower cost per contact.

Step 2 — Data and privacy review

Inventory the customer data held in CRM and ticketing systems. Apply data minimization and retention policies, and redact sensitive fields before using data to train models. For guidance on risk management, refer to NIST resources such as the AI Risk Management Framework (AI RMF).
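As a rough illustration of the redaction step, the sketch below drops sensitive fields from a ticket record and scrubs free text before it reaches a training set. The field names in `SENSITIVE_FIELDS` and the two regular expressions are assumptions made for this example; real ticket schemas and PII patterns need broader, tested coverage.

```python
import re

# Illustrative patterns only; they will miss many real-world PII formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators

# Hypothetical names of ticket fields that should never reach a training set.
SENSITIVE_FIELDS = {"customer_name", "email", "phone", "billing_address"}


def redact_text(text: str) -> str:
    """Replace email addresses and card-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = CARD_RE.sub("[CARD]", text)
    return text


def redact_ticket(ticket: dict) -> dict:
    """Drop sensitive fields and scrub the string fields of a ticket record."""
    cleaned = {}
    for key, value in ticket.items():
        if key in SENSITIVE_FIELDS:
            continue  # data minimization: omit the field entirely
        cleaned[key] = redact_text(value) if isinstance(value, str) else value
    return cleaned
```

Dropping whole fields and scrubbing free text are complementary: the first handles structured data, the second catches details customers type into the message body.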

Step 3 — Build and integrate

Integrate the selected model with the knowledge base, CRM, and ticketing platform. Ensure intent and entity outputs map to business workflows and that escalation paths to human agents are enforced. Implement monitoring for latency, error rates, and drift.
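One way to make the escalation path enforceable is to route every prediction through a single function that falls back to a human queue. The sketch below assumes hypothetical intent names, workflow identifiers, and a confidence threshold of 0.80; none of these come from a specific product, and the threshold should be tuned against real traffic.

```python
from dataclasses import dataclass

# Hypothetical intent names and workflow identifiers, for illustration only.
INTENT_WORKFLOWS = {
    "password_reset": "self_service_reset",
    "order_status": "status_lookup",
    "billing_question": "billing_queue",
}

HUMAN_QUEUE = "human_agent"
CONFIDENCE_THRESHOLD = 0.80  # assumed value; tune against real traffic


@dataclass
class Prediction:
    intent: str
    confidence: float


def route(prediction: Prediction) -> str:
    """Return the workflow for a prediction, escalating when unsure."""
    workflow = INTENT_WORKFLOWS.get(prediction.intent)
    if workflow is None or prediction.confidence < CONFIDENCE_THRESHOLD:
        return HUMAN_QUEUE  # unknown intent or low confidence goes to an agent
    return workflow
```

Because unknown intents and low-confidence predictions share the same fallback, adding a new intent to the model cannot bypass escalation by accident.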

Step 4 — Operate and measure

Track quantitative KPIs (deflection rate, FCR, CSAT, SLA compliance, average handle time) and qualitative metrics (customer sentiment, agent feedback). Schedule periodic model retraining and governance reviews.
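The quantitative KPIs can be computed from per-contact records. The sketch below is a minimal example under assumed definitions: deflection rate as the share of contacts closed without a human agent, FCR as the share closed without a follow-up contact, and average handle time over agent-handled contacts only. Teams define these metrics differently, so the `Contact` fields here are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Contact:
    resolved_by_bot: bool             # closed without reaching a human agent
    resolved_first_contact: bool      # closed without a follow-up contact
    handle_minutes: Optional[float]   # agent handling time; None if bot-only


def support_kpis(contacts: list[Contact]) -> dict[str, float]:
    """Compute deflection rate, FCR, and average handle time for a period."""
    total = len(contacts)
    if total == 0:
        raise ValueError("no contacts to measure")
    handled = [c.handle_minutes for c in contacts if c.handle_minutes is not None]
    return {
        "deflection_rate": sum(c.resolved_by_bot for c in contacts) / total,
        "fcr": sum(c.resolved_first_contact for c in contacts) / total,
        "avg_handle_minutes": sum(handled) / len(handled) if handled else 0.0,
    }
```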

The ABTM checklist

The ABTM checklist standardizes deployment steps: ALIGN, BUILD, TEST, MEASURE.

- ALIGN — Define objectives, stakeholders, compliance requirements, and target KPIs.

- BUILD — Select models, integrate with the knowledge base and CRM, and configure escalation and human-in-the-loop flows.

- TEST — Run a closed beta with sample traffic; measure accuracy, false positives, and user acceptance; validate privacy safeguards.

- MEASURE — Monitor live metrics, set retrain cadence, and establish rollback criteria.

Practical deployment example

Scenario: A mid-market SaaS company receives 1,200 support tickets a month, many of them password resets and billing questions. The team implements a rule-backed chatbot that handles password resets and a triage model that classifies billing inquiries for specialist routing. Results after three months: a 28% deflection rate for password issues, 15% faster average resolution for billing tickets thanks to better triage, and no drop in CSAT. Ambiguous billing cases stayed with human agents, which reduced misrouted tickets.

Practical tips

- Start small: automate one high-volume, low-risk flow (e.g., password resets) before expanding.

- Keep humans in the loop for exceptions: implement confidence thresholds that trigger agent takeover.

- Instrument telemetry: log intents, confidence, escalations, and customer outcomes for continuous improvement.

- Sanitize training data: remove PII, and add synthetic examples for rare cases so that under-represented intents are covered.

- Define rollback criteria: specify KPIs that require pausing or reverting a model release.
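Rollback criteria are easier to act on when they are written down as data and checked automatically. The sketch below shows one possible shape; the metric names and threshold values are hypothetical and would need to come from the team's own baselines.

```python
# Hypothetical thresholds; the right values depend on the team's baselines.
ROLLBACK_CRITERIA = {
    "csat": ("min", 4.0),               # pause if CSAT falls below this
    "escalation_rate": ("max", 0.35),   # pause if escalations exceed this
    "p95_latency_ms": ("max", 2000),    # pause if responses get this slow
}


def breached_criteria(metrics: dict) -> list[str]:
    """Return the names of metrics that violate their rollback threshold."""
    breached = []
    for name, (kind, limit) in ROLLBACK_CRITERIA.items():
        value = metrics.get(name)
        if value is None:
            breached.append(name)  # a missing metric is treated as a breach
        elif kind == "min" and value < limit:
            breached.append(name)
        elif kind == "max" and value > limit:
            breached.append(name)
    return breached
```

A non-empty result is the signal to pause or revert the release. Treating a missing metric as a breach is a deliberately cautious choice: broken telemetry should not look like a healthy model.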

Trade-offs and common mistakes

Trade-offs

- Speed vs. accuracy: higher automation often reduces human oversight and may lower accuracy for complex intents.

- Privacy vs. personalization: richer personalization requires more data, increasing compliance burden.

- Cost vs. coverage: comprehensive automation requires investment in data preparation and integration.

Common mistakes to avoid

- Deploying models without clear escalation rules — leads to poor customer experience when the bot handles complex cases badly.

- Training on unclean or biased ticket data — causes systematic errors and poor intent recognition.

- Ignoring metrics and drift — models degrade over time without retraining and monitoring.

- Not involving frontline agents early — misses critical edge cases and reduces adoption.

Governance, security, and standards

Ensure alignment with internal privacy policies and external regulations (e.g., GDPR where applicable). Use role-based access control for model training data, and maintain an audit trail for automated decisions. Reference standards and guidance from recognized bodies such as NIST for risk management and secure deployment patterns.

FAQ

What is the best first use of AI for customer support?

High-volume, low-risk tasks such as password resets, order status checks, and basic billing questions are ideal starting points. They provide measurable impact with limited risk and allow the team to refine intent models and escalation flows before handling complex cases.

How do you measure the ROI of AI customer service automation?

Measure reduced handle time, deflection rate, lower cost per contact, agent productivity gains, and CSAT changes. Include implementation and ongoing maintenance costs when calculating payback period.
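The payback calculation can be sketched as a pair of small functions. This is a simplified model that counts only savings from deflected contacts and subtracts ongoing maintenance; agent productivity gains and CSAT effects would need their own estimates. All parameter names are illustrative.

```python
def monthly_net_savings(deflected_contacts: int,
                        cost_per_contact: float,
                        monthly_maintenance: float) -> float:
    """Savings from contacts the automation handled, minus running costs."""
    return deflected_contacts * cost_per_contact - monthly_maintenance


def payback_months(implementation_cost: float, net_savings: float) -> float:
    """Months until cumulative net savings cover the implementation cost."""
    if net_savings <= 0:
        return float("inf")  # the project never pays back at this rate
    return implementation_cost / net_savings
```

With made-up inputs of 300 deflected contacts a month, a cost of 6.00 per contact, and 600 in monthly maintenance, net savings are 1,200 a month, so a 12,000 implementation cost pays back in 10 months. These figures are for illustration only and are not taken from the scenario above.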

When should human-in-the-loop be mandatory?

Require human-in-the-loop for low-confidence predictions, regulatory cases, refunds, legal requests, or any area where incorrect answers cause financial or reputational harm.

How can AI integrate with existing ticketing systems and CRMs?

Use APIs to push classification labels, summary text, and suggested responses into ticket fields; ensure synchronization with ticket state and implement idempotent operations to avoid duplicates.
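The idempotency requirement can be illustrated without any particular vendor API. In the sketch below, `TicketStore` is an in-memory stand-in for a ticketing system, not a real client, and the key is derived from the ticket ID and the update contents so that a retried delivery is recognized and skipped. Whether a given platform accepts a client-supplied idempotency key is something to confirm in its documentation.

```python
import hashlib
import json


class TicketStore:
    """In-memory stand-in for a ticketing API; not a real client."""

    def __init__(self) -> None:
        self.fields: dict[str, dict] = {}
        self.applied_keys: set[str] = set()

    def apply_update(self, ticket_id: str, update: dict, key: str) -> bool:
        """Apply an update once per idempotency key; return True if applied."""
        if key in self.applied_keys:
            return False  # duplicate delivery: skip without side effects
        self.fields.setdefault(ticket_id, {}).update(update)
        self.applied_keys.add(key)
        return True


def idempotency_key(ticket_id: str, update: dict) -> str:
    """Derive a stable key from the ticket ID and the update contents."""
    payload = json.dumps({"ticket": ticket_id, "update": update}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

Sorting the JSON keys keeps the hash stable regardless of the order in which the fields were assembled, so the same logical update always maps to the same key.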

What monitoring is essential after launch?

Track intent accuracy, confidence distributions, false positive rates, escalation rates, CSAT trends, latency, and model drift. Schedule periodic reviews to update training data and business rules.
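One simple way to watch confidence distributions for drift is the population stability index (PSI), which compares the binned distribution of current confidence scores against a baseline sample. The sketch below is a minimal standard-library version and assumes scores lie in [0, 1]; it is one possible drift signal, not a complete monitoring setup.

```python
import math


def bin_shares(scores: list[float], bins: int = 10) -> list[float]:
    """Share of scores in each equal-width bin over [0, 1], floored at eps."""
    if not scores:
        raise ValueError("scores must not be empty")
    counts = [0] * bins
    for s in scores:
        index = min(int(s * bins), bins - 1)  # a score of 1.0 goes in the last bin
        counts[index] += 1
    eps = 1e-6  # avoids log(0) for empty bins
    return [max(c / len(scores), eps) for c in counts]


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population stability index between two confidence-score samples."""
    expected = bin_shares(baseline, bins)
    actual = bin_shares(current, bins)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

A PSI of zero means the two binned distributions match; larger values mean a bigger shift. A commonly cited rule of thumb treats values above roughly 0.2 as worth investigating, but that cutoff is a convention and should be checked against the team's own history.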