The Silicon Hegemony: Why Nvidia AI Hardware is Paving the Way for a $1 Trillion Revenue Milestone b

Introduction: The Dawn of the Accelerated Computing Era

The global landscape of technology is currently undergoing a seismic shift, the likes of which have not been seen since the dawn of the internet. We are moving away from general-purpose computing, dominated for decades by the Central Processing Unit (CPU), toward a new paradigm: accelerated computing. At the absolute epicenter of this revolution is Nvidia. What started as a company focused on enhancing the visual experience of video games has now become the fundamental architect of the artificial intelligence age.

The sheer velocity of this transition is reflected in the massive demand for Nvidia AI hardware. From Silicon Valley startups to sovereign nations in the Middle East, the race to acquire Nvidia's H100, H200, and the newer Blackwell GPUs has become a modern-day gold rush. However, this isn't just about selling chips; it’s about building the infrastructure of the future.

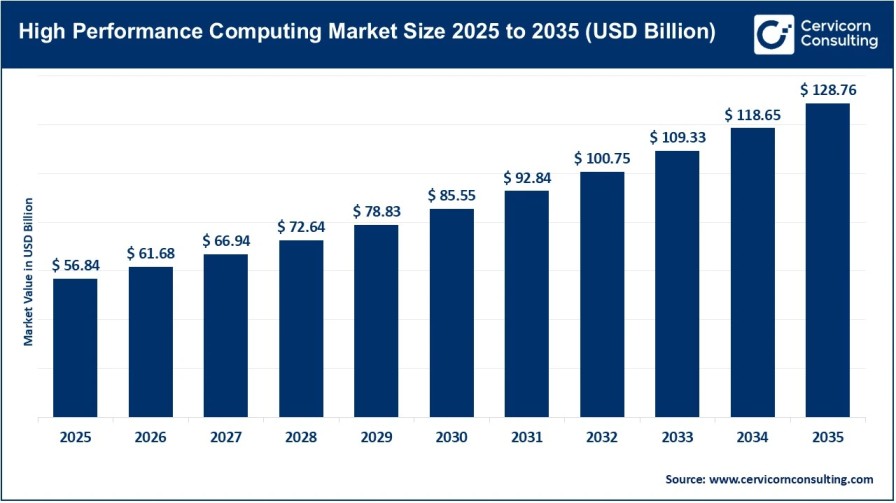

The Phenomenal Growth Trajectory

When we discuss the financial future of the semiconductor industry, the numbers often seem astronomical. Yet, the data suggests that Nvidia is on a path that few companies in history have ever trodden. Market analysts and tech economists are now pointing toward a specific, monumental target. The strategic deployment and constant innovation of Nvidia's specialized chips are projected to catapult the company toward a staggering $1 trillion in annual revenue by the year 2027.

To put this in perspective, reaching such a milestone would require a consistent compound annual growth rate that defies traditional market logic. But AI is not a traditional market. It is an exponential one. As reported by Compute Report, the convergence of generative AI, robotics, and industrial automation is creating a "perfect storm" of demand that Nvidia is uniquely positioned to fulfill.
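The growth-rate requirement can be made concrete with the standard compound annual growth rate formula, CAGR = (target / start)^(1 / years) - 1. The sketch below is illustrative only: the starting revenue of $130 billion and the time horizons are assumptions chosen for the example, not figures taken from this article.

```python
def required_cagr(start_revenue: float, target_revenue: float, years: float) -> float:
    """Return the compound annual growth rate needed to grow from start to target."""
    if start_revenue <= 0 or target_revenue <= 0 or years <= 0:
        raise ValueError("revenues and years must be positive")
    return (target_revenue / start_revenue) ** (1.0 / years) - 1.0


# Hypothetical inputs (assumptions for illustration, in billions of dollars).
start_b = 130.0    # assumed current annual revenue
target_b = 1000.0  # the $1 trillion milestone discussed above

for years in (2, 3, 4):
    rate = required_cagr(start_b, target_b, years)
    print(f"{years} years: {rate:.0%} growth required per year")
```

Under these assumed inputs, the two-year case calls for growth well above 100% per year, which is why the target looks so far outside normal market behavior.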

Part 1: Why Nvidia AI Hardware is Unrivalled

To understand why the world is betting so heavily on Nvidia, we must look under the hood. It isn't just about raw speed; it's about a sophisticated integration of hardware, software, and networking.

1. The Architecture of Power

Nvidia’s GPUs (Graphics Processing Units) are fundamentally different from CPUs. While a CPU is designed to handle complex serial tasks one after another, a GPU is built for massive parallel processing. An AI model, which consists of billions or even trillions of parameters, requires these parameters to be processed simultaneously. Nvidia AI hardware is specifically engineered for this task, offering mathematical throughput that makes training Large Language Models (LLMs) feasible in weeks rather than decades.
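The difference between serial and parallel work can be sketched with a small matrix-vector product, the basic operation inside a neural network layer. Each output value depends on only one row of weights, so the rows can be handed to separate workers. This is a conceptual sketch in plain Python, not GPU code: the function names are hypothetical, and Python threads will not deliver a real speedup here. The point is only that the work splits into independent pieces, which is the property a GPU exploits across thousands of cores.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, vector):
    """Multiply one row of weights by the input vector and sum the result."""
    return sum(w * x for w, x in zip(row, vector))


def matvec_serial(matrix, vector):
    """CPU-style: compute each output value one after another."""
    return [dot(row, vector) for row in matrix]


def matvec_parallel(matrix, vector, workers=4):
    """GPU-style idea: every row is independent, so rows are spread across workers."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, vector), matrix))


# Tiny illustrative "layer": 3 outputs, 3 inputs.
weights = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
inputs = [1.0, 0.5, -1.0]

# Both approaches give the same answer; only the scheduling differs.
assert matvec_serial(weights, inputs) == matvec_parallel(weights, inputs)
print(matvec_parallel(weights, inputs))
```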

2. The CUDA Moat

One of Nvidia's greatest strengths isn't actually physical: it's the software. CUDA (Compute Unified Device Architecture) is a parallel computing platform and programming model that Nvidia introduced in 2006. For nearly two decades, developers have built most major AI libraries and frameworks on CUDA first. This has created a "moat" that competitors like AMD or Intel find very hard to cross. When a company buys Nvidia products, it is buying into a mature, well-tested ecosystem.

Part 2: Industry-Specific Impact and Growth Drivers

1. Healthcare and Drug Discovery

The pharmaceutical industry is perhaps the most significant beneficiary of high-end compute power. Traditionally, bringing a new drug to market takes 10 years or more and billions of dollars. With Nvidia AI hardware, researchers can now simulate how proteins fold and screen billions of candidate molecules in virtual space. This "Digital Biology" promises to cut the discovery phase from years to months, creating multi-billion-dollar demand for Nvidia-powered data centers in the medical sector.

2. The Financial Sector and High-Frequency Trading

In finance, milliseconds equal millions. Banks and hedge funds are deploying AI clusters to predict market shifts, detect fraud in real time, and automate complex trading strategies. The reliability and low latency of Nvidia’s networking gear (like InfiniBand) make it the default choice for Wall Street’s digital transformation.

3. Sovereign AI: The New National Security

We are seeing a new trend where nations view AI compute power as a matter of national security, similar to oil or food reserves. Countries like Saudi Arabia, the UAE, and France are investing billions to build domestic AI clouds. They do not want to rely on foreign providers for their critical data processing. This "Sovereign AI" movement represents a massive, recurring revenue stream for Nvidia's high-end hardware.

Part 3: Technical Roadmap - From Blackwell to Vera Rubin

As we track the progress on Compute Report, it’s clear that Nvidia’s "one-year release cadence" is its most aggressive strategy yet.

- The Blackwell Era (2024-2025): The introduction of the B100 and B200 GPUs has redefined the performance ceiling, offering up to 20 petaflops of low-precision (FP4) AI compute. These chips are optimized for Large Language Model inference, sharply cutting AI response times for end-users.

- The Vera Rubin Era (2026-2027): This next-generation architecture (Rubin) will focus on ultra-high-bandwidth memory (HBM4) and liquid cooling as standard. It is designed specifically for "Agentic AI"—systems that can reason and take actions autonomously without human intervention.

Part 4: The Role of Next-Gen Memory (HBM4)

A GPU is only as fast as the data it can access. This brings us to the importance of High Bandwidth Memory. The next leap in performance will be driven by HBM4 technology. Memory makers like Micron are ramping up production of these memory modules so that the Vera Rubin chips aren't throttled by slow data transfer. This synergy between memory and logic is what will sustain Nvidia's dominance through 2027.
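The idea that a GPU is only as fast as the data it can access can be sketched with the widely used roofline model: attainable throughput is the smaller of the chip's peak compute and its memory bandwidth multiplied by the workload's arithmetic intensity (operations performed per byte moved). All numbers below are hypothetical round figures chosen for illustration, not specifications of any Nvidia or Micron product.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float, flops_per_byte: float) -> float:
    """Roofline estimate: throughput is capped by compute or by memory, whichever is lower.

    bandwidth (TB/s) * intensity (FLOP/byte) gives the memory-bound limit in TFLOP/s.
    """
    memory_bound_limit = bandwidth_tb_s * flops_per_byte
    return min(peak_tflops, memory_bound_limit)


# Hypothetical chip: 1000 TFLOP/s of peak compute (assumed value).
peak = 1000.0

# Two assumed memory configurations and two assumed workloads.
for bandwidth in (4.0, 8.0):           # TB/s
    for intensity in (50.0, 500.0):    # FLOP per byte moved
        result = attainable_tflops(peak, bandwidth, intensity)
        print(f"bandwidth={bandwidth} TB/s, intensity={intensity}: {result} TFLOP/s")
```

In this sketch, doubling the bandwidth doubles throughput for the low-intensity workload, while the high-intensity workload is already limited by compute. That is the sense in which faster memory keeps a GPU from being throttled.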

Part 5: Challenges and Market Risks

While the path to $1 trillion looks clear, it is not without obstacles:

- Supply Chain Constraints: Relying on a single manufacturer (TSMC) for chips built on the most advanced process nodes, such as 3nm and 2nm, is a risk.

- Geopolitical Tensions: Export controls on high-end AI chips to certain regions could impact revenue.

- The Energy Wall: Data centers are consuming massive amounts of electricity. Nvidia's focus on liquid cooling and energy efficiency is a direct response to this challenge.

Conclusion: A New Era of Global Intelligence

The story of Nvidia is no longer just about a company; it is the story of human progress in the 21st century. By providing the "shovels" for the AI "gold rush," Nvidia has made itself indispensable. The journey toward the $1 trillion revenue mark is a testament to the fact that computing power has become one of the most valuable commodities in the world.

For anyone looking to stay ahead of the curve, monitoring the updates on Compute Report is essential. As Nvidia AI hardware continues to evolve, it will unlock capabilities we can currently only imagine—from curing complex diseases to solving global energy challenges.

Frequently Asked Questions (FAQs)

Why is Nvidia AI hardware the standard for the industry?

It combines superior parallel processing with the CUDA software ecosystem, which has been the industry standard for nearly 20 years.

How does Nvidia plan to reach $1 trillion in revenue?

Through a combination of annual hardware releases (Blackwell, Rubin), the rise of Sovereign AI, and expansion into robotics and automotive sectors.

What is the "Vera Rubin" architecture?

It is Nvidia’s upcoming architecture designed for the 2026-2027 cycle, focusing on HBM4 memory and high-efficiency inference.

Is there a competitor to Nvidia?

While AMD and Intel are creating powerful chips, Nvidia’s software moat (CUDA) and networking technology keep it significantly ahead in market share.

Where can I find more technical details on these trends?

You can visit Compute Report for deep-dive technical and financial analysis.