Practical Guide to Web Testing Automation for Reliable Delivery


Web testing automation is a set of practices and tools that run repeatable checks against web applications to find defects faster and improve release confidence. Automation helps teams validate functionality, performance, accessibility, and security across browsers and environments, freeing manual effort for exploratory testing and design review.

- Define test goals and choose the right mix of unit, integration, and end-to-end tests

- Use CI/CD pipelines to run automated suites on each change

- Apply strategies to reduce flakiness and maintenance cost

- Measure coverage, pass rates, and test execution time to guide investment

Web Testing Automation: core concepts and test types

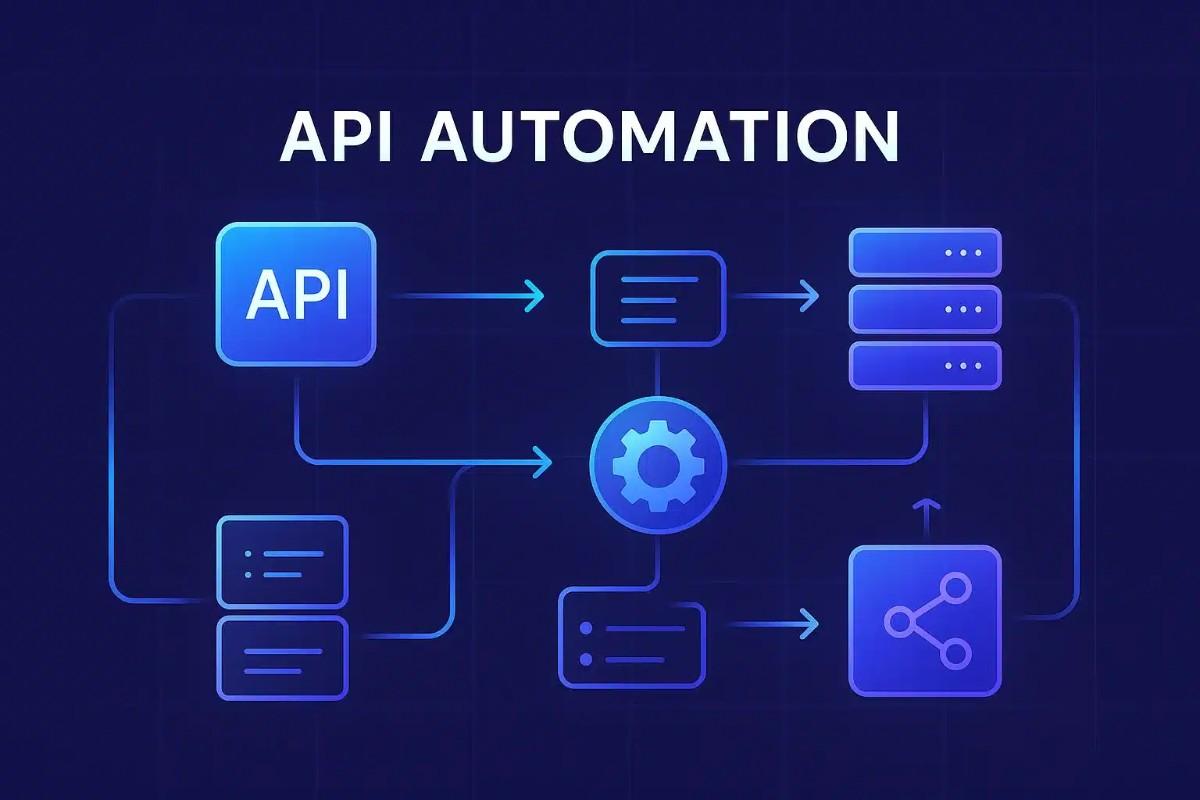

Effective web testing automation groups checks by scope and purpose. Unit tests validate small pieces of code quickly; integration tests check interactions between modules; end-to-end (E2E) tests exercise the full user flow, often through a browser. Other automated checks include API testing, performance testing, and accessibility audits. Choosing the right balance follows the testing pyramid: many fast unit tests, fewer integration tests, and a small number of stable E2E tests.

Planning and strategy

Define clear objectives

Start by specifying what failure modes are most costly: regressions in core workflows, cross-browser issues, security vulnerabilities, or performance regressions. Prioritise automating checks that provide high confidence and fast feedback to developers.

Select test cases to automate

Automate deterministic, repeatable scenarios such as form validation, login flows, API contracts, and critical user journeys. Avoid automating checks against UI elements that change frequently unless the tests use robust selectors or visual diff strategies.
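As an illustration of a deterministic, repeatable check, the sketch below tests form validation as a plain function. The `validate_signup` function, its field names, and its rules are hypothetical and stand in for whatever validation logic the application actually has.

```python
import re

# Hypothetical validation rules for a sign-up form; the field names and
# limits are assumptions made for this example.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_signup(form: dict) -> dict:
    """Return a mapping of field name to error message (empty if valid)."""
    errors = {}
    if not EMAIL_PATTERN.match(form.get("email", "")):
        errors["email"] = "Enter a valid email address."
    if len(form.get("password", "")) < 8:
        errors["password"] = "Password must be at least 8 characters."
    return errors


def test_valid_form_has_no_errors():
    form = {"email": "user@example.com", "password": "s3cretpass"}
    assert validate_signup(form) == {}


def test_invalid_form_reports_each_field():
    errors = validate_signup({"email": "not-an-email", "password": "short"})
    assert set(errors) == {"email", "password"}
```

The same inputs always produce the same result, with no browser, network, or clock involved, which is what makes a check like this a good early candidate for automation.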

Tools, protocols, and standards

Automation frameworks and browser control

Frameworks and drivers that control browsers are central to E2E testing. The W3C WebDriver specification defines a protocol for remote control of browsers and is implemented by many automation tools. Refer to the specification for details of the standard interface between clients and browsers.
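To make the protocol concrete, the sketch below builds (but does not send) three WebDriver HTTP requests: create a session, navigate to a URL, and delete the session. The server address `http://localhost:4444` and the helper names are assumptions for this example; in practice a client library normally handles these calls.

```python
import json
import urllib.request

# Assumption: a WebDriver-compatible server listens at this address.
DRIVER_URL = "http://localhost:4444"


def webdriver_request(method: str, path: str, body=None) -> urllib.request.Request:
    """Build an HTTP request with an optional JSON body for the driver."""
    data = None if body is None else json.dumps(body).encode("utf-8")
    return urllib.request.Request(
        DRIVER_URL + path,
        data=data,
        method=method,
        headers={"Content-Type": "application/json; charset=utf-8"},
    )


def new_session_request(browser_name: str) -> urllib.request.Request:
    # New Session: POST /session with the requested capabilities.
    capabilities = {"capabilities": {"alwaysMatch": {"browserName": browser_name}}}
    return webdriver_request("POST", "/session", capabilities)


def navigate_request(session_id: str, url: str) -> urllib.request.Request:
    # Navigate To: POST /session/{session id}/url
    return webdriver_request("POST", f"/session/{session_id}/url", {"url": url})


def delete_session_request(session_id: str) -> urllib.request.Request:
    # Delete Session: DELETE /session/{session id}
    return webdriver_request("DELETE", f"/session/{session_id}")
```

Passing one of these objects to `urllib.request.urlopen` would send it to a running driver. The session ID used by the later calls comes from the `value.sessionId` field of the New Session response.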

Test runners and assertion libraries

Choose a test runner that supports parallel execution and integrates with the development environment. Compatible assertion libraries and mocking or stubbing utilities help isolate units and simulate external services without relying on live endpoints.
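A minimal sketch of stubbing an external service with Python's standard `unittest.mock` follows. The `order_total` function and the `get_rate` method on the rates client are hypothetical names chosen for illustration.

```python
from unittest.mock import Mock


def order_total(cart: list, rates_client) -> float:
    """Convert the cart total from USD to EUR using an injected rates client."""
    rate = rates_client.get_rate("USD", "EUR")
    return round(sum(item["price_usd"] for item in cart) * rate, 2)


def test_order_total_uses_stubbed_rate():
    # The Mock replaces a client that would otherwise call a live endpoint.
    rates_client = Mock()
    rates_client.get_rate.return_value = 0.5

    cart = [{"price_usd": 10.0}, {"price_usd": 30.0}]

    assert order_total(cart, rates_client) == 20.0
    rates_client.get_rate.assert_called_once_with("USD", "EUR")
```

Injecting the client as a parameter is one design choice that keeps the stub simple. Patching a module-level dependency is an alternative when the code under test cannot be changed.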

Integration with CI/CD and environments

Pipeline design

Run fast unit and linting checks on pull requests, and gate merges with integration or targeted E2E tests for critical paths. Schedule longer-running test suites, performance benchmarks, or cross-browser matrices on nightly runs to catch regressions that are expensive to run on every commit.
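The staging described above can be sketched as a simple mapping from pipeline trigger to test suites. The trigger and suite names here are hypothetical. A real pipeline would express this in the CI system's own configuration format.

```python
# Hypothetical mapping from pipeline trigger to the suites it should run.
SUITES_BY_TRIGGER = {
    "pull_request": ["lint", "unit"],
    "merge_gate": ["lint", "unit", "integration", "e2e_critical"],
    "nightly": ["e2e_full", "performance", "cross_browser"],
}


def select_suites(trigger: str) -> list:
    """Return the suites to run for a trigger, failing loudly on unknown ones."""
    if trigger not in SUITES_BY_TRIGGER:
        raise ValueError(f"Unknown pipeline trigger: {trigger!r}")
    # Return a copy so callers cannot mutate the shared configuration.
    return list(SUITES_BY_TRIGGER[trigger])
```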

Environment management

Use containerised or ephemeral test environments to ensure consistency between local and CI runs. Service virtualization or dedicated test doubles reduce dependence on third-party services and make tests repeatable.

Reducing flakiness and maintenance overhead

Design resilient selectors and waits

Prefer stable attributes for UI selectors, avoid brittle CSS paths, and use explicit waits for elements or network conditions rather than fixed timers. This reduces intermittent failures caused by timing issues.
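The difference between an explicit wait and a fixed timer can be shown with a small polling helper. This is a generic sketch and the `wait_until` name is an assumption. Browser automation libraries typically ship their own equivalent, which should be preferred where available.

```python
import time


def wait_until(condition, timeout: float = 5.0, interval: float = 0.1):
    """Poll `condition` until it returns a truthy value or `timeout` seconds pass.

    Returns the truthy value, or raises TimeoutError if the deadline passes.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Condition not met within {timeout} seconds")
        time.sleep(interval)
```

A test would call something like `wait_until(lambda: page_has_element("[data-testid='submit']"))`, where `page_has_element` is a hypothetical lookup function. The test continues as soon as the element appears instead of always sleeping for the worst-case duration, and it fails with a clear error when the element never shows up.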

Test data and isolation

Manage test data so each test can run independently: reset state between tests, use unique identifiers, or seed databases. Isolated tests can run in any order or in parallel, and are easier to reason about.
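One way to sketch these ideas is with an in-memory SQLite database created fresh for each test and unique identifiers for each record. The schema and helper names are assumptions for the example.

```python
import sqlite3
import uuid


def fresh_database() -> sqlite3.Connection:
    """Create a new in-memory database so no state leaks between tests."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id TEXT PRIMARY KEY, email TEXT UNIQUE)")
    return conn


def create_user(conn: sqlite3.Connection, email_prefix: str = "user"):
    """Insert a user with a unique ID and email, and return both."""
    user_id = uuid.uuid4().hex
    email = f"{email_prefix}+{user_id}@example.test"
    conn.execute("INSERT INTO users (id, email) VALUES (?, ?)", (user_id, email))
    return user_id, email


def test_each_test_starts_with_its_own_data():
    conn = fresh_database()
    create_user(conn)
    count = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    assert count == 1  # only the row this test created
    conn.close()
```

Because each test builds its own database and never reuses an email address, tests do not collide even when they run in parallel or in a different order.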

Review and refactor tests

Treat automated tests as code: apply code review, refactor duplicate logic into helpers, and keep suites small and focused. Regular audits help retire low-value tests and reduce execution time.

Measuring effectiveness

Key metrics

Track pass/fail rates, time to detect and fix a broken test, test execution time, and test coverage across the unit and integration layers. Combine these metrics with deployment frequency and mean time to recovery (MTTR) to assess overall impact.
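As a rough sketch, pass rate and execution time can be derived from raw test results as follows. The `CaseResult` record and its fields are assumptions. Real test runners usually export this data in a report format such as JUnit XML.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    name: str
    passed: bool
    duration_s: float


def summarize(results: list) -> dict:
    """Compute pass rate, total execution time, and the slowest test."""
    if not results:
        return {"pass_rate": 0.0, "total_duration_s": 0.0, "slowest": None}
    passed = sum(1 for r in results if r.passed)
    slowest = max(results, key=lambda r: r.duration_s)
    return {
        "pass_rate": passed / len(results),
        "total_duration_s": sum(r.duration_s for r in results),
        "slowest": slowest.name,
    }
```

Tracking the slowest tests alongside the pass rate gives a starting point for deciding where optimisation or retirement of tests would save the most time.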

Observability and reporting

Provide clear failure logs, screenshots, and recordings of browser sessions for failed E2E tests. Integrate test results with issue trackers and dashboards so failures can trigger triage and fixes quickly.

Security, accessibility, and performance testing

Security testing

Incorporate automated security scans for common web vulnerabilities and include tests for authentication and authorization paths. Follow guidance from organisations such as OWASP for common web security risks.

Accessibility and performance

Automate accessibility checks to detect common issues (missing ARIA attributes, contrast problems) and enforce performance budgets to detect regressions in page load or API response times. These checks can be included in CI or scheduled runs depending on cost.
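To show what a contrast check involves, the sketch below computes the contrast ratio between two colours using the relative luminance formula from WCAG 2.x and compares it with the 4.5:1 threshold for normal-size text at level AA. The function names are assumptions. Dedicated accessibility tools apply this to the colours actually rendered in the page, which this sketch does not attempt.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: tuple, background: tuple) -> float:
    """Return the contrast ratio, from 1 (identical) up to about 21."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_normal_text(foreground: tuple, background: tuple) -> bool:
    # WCAG level AA requires at least 4.5:1 for normal-size text.
    return contrast_ratio(foreground, background) >= 4.5
```

For example, `meets_aa_normal_text((0, 0, 0), (255, 255, 255))` checks black text on a white background, the highest-contrast pairing.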

Governance and standards

Adopt testing standards and role-based responsibilities. Industry bodies such as ISTQB provide terminology and testing principles useful for governance. Maintain a test strategy document that outlines scope, acceptance criteria, and owner responsibilities for automated suites.

Costs and ROI

Automation requires upfront investment in tooling, authoring tests, and CI resources. Expected returns include faster releases, fewer production defects, and lower manual testing effort. Use pilot projects to estimate maintenance costs and demonstrate value before expanding coverage.

Frequently asked questions

What is web testing automation and why is it important?

Web testing automation is the practice of running scripted checks against a web application to verify behavior, performance, accessibility, and security. It is important because it speeds up feedback, reduces regression risk, and enables more frequent, confident releases.

Which types of tests should be automated first?

Automate fast, deterministic checks with high return on investment: unit tests for business logic, API contract tests, and critical user flows at the integration or E2E level. Prioritise tests that prevent costly production defects.

How can flaky tests be reduced in automated suites?

Reduce flakiness by using robust selectors, explicit waits, isolated test data, stable test environments, and avoiding reliance on external services. Regular test refactoring and monitoring unstable tests help keep suites reliable.
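One simple way to monitor unstable tests is to flag any test that has both passed and failed on the same commit, since the code did not change between those runs. The sketch below assumes run history is available as `(test_name, commit, passed)` tuples, which is a hypothetical format.

```python
from collections import defaultdict


def find_flaky_tests(runs) -> list:
    """Return names of tests that both passed and failed on the same commit."""
    outcomes = defaultdict(set)
    for name, commit, passed in runs:
        outcomes[(name, commit)].add(bool(passed))
    flaky = {name for (name, _commit), seen in outcomes.items() if len(seen) == 2}
    return sorted(flaky)
```

A test that fails consistently on a commit is a genuine failure and is not reported here. Only mixed outcomes on identical code count as flaky.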

How does web testing automation fit into CI/CD pipelines?

Automated tests are typically integrated into CI/CD pipelines to validate each change. Quick unit and lint checks run on every commit; integration and targeted E2E tests gate merges; longer suites run on scheduled pipelines or pre-release jobs to ensure broader confidence.

What metrics indicate successful automation?

Useful metrics include test pass rate, execution time, coverage by test type, time to detect and fix failures, and downstream deployment metrics such as reduced incidents or faster release cycles.