How Data Science Is Reshaping Industries: Practical Use Cases, Roadmap, and Risks


Data, analytics, and machine learning are driving a measurable shift across the modern economy. Organizations that convert data into reliable decisions reduce costs, discover new products and services, and respond faster to market changes. This guide explains the main pathways of impact, practical steps for implementation, and common mistakes to avoid.

- Data science drives value through automation, personalization, and operational efficiency.

- CRISP-DM is a practical framework for projects; a short checklist can prevent common failures.

- Start with high-impact use cases, build reliable data foundations, and measure outcomes.


How data science is transforming industries: key pathways

Data science changes how companies make decisions in four repeatable ways: predictive insights (forecasting demand, failures, churn), automation (processes and decisions moved from humans to algorithms), personalization (tailored experiences or products), and discovery (new product signals or research findings). These pathways apply across manufacturing, healthcare, finance, retail, logistics, energy, and public sector operations.

Common industry use cases and a real-world example

Financial services

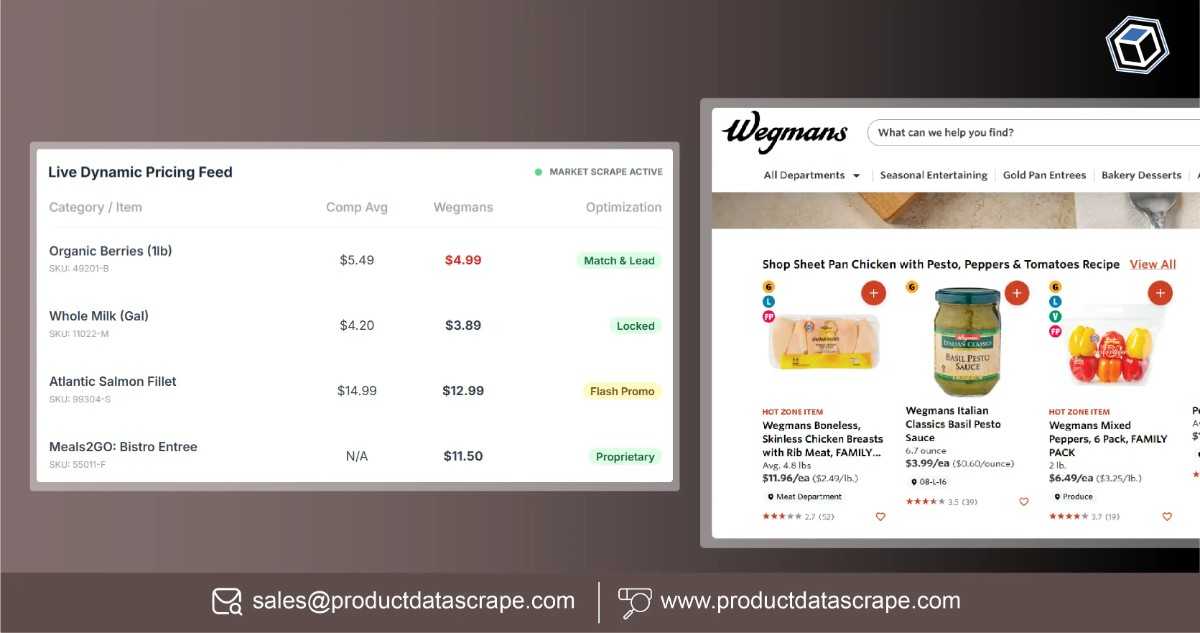

Fraud detection models and risk scoring reduce losses and speed approvals. Systems connected to streaming transaction data flag anomalies in real time.

Healthcare

Predictive models prioritize patient risk and optimize staff allocation; image analytics speed diagnosis and triage.

Manufacturing and supply chains

Predictive maintenance extends equipment life and reduces downtime; demand forecasting improves inventory turns.

Short real-world scenario

A regional logistics operator deployed a pilot predictive-maintenance model on 100 delivery vehicles. By combining telemetry, service records, and environmental data, the model predicted brake-related failures two weeks earlier than routine inspections, reducing roadside breakdowns by 30% and fleet downtime by 18% in six months.

Data science use cases by industry: prioritizing projects

Prioritize projects that are (1) measurable, (2) data-ready, and (3) aligned with strategic value. Use a simple scoring matrix: Expected value x Feasibility (data + skills) x Time to impact, with time to impact scored so that a faster payoff earns a higher score. Pilot low-risk, high-feedback projects to build internal confidence and reusable assets.
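
The scoring matrix can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the 1 to 5 scale, the `UseCase` class, and the example candidates are all assumptions, and time to impact is expressed as `speed_to_impact` so that a faster payoff raises the score.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    """One candidate project, scored on assumed 1 (low) to 5 (high) scales."""
    name: str
    expected_value: int   # business value if the project succeeds
    feasibility: int      # data readiness plus available skills
    speed_to_impact: int  # 5 = weeks, 1 = years (hypothetical scale)

    @property
    def score(self) -> int:
        # Multiplying means a very low rating on any axis sinks the project.
        return self.expected_value * self.feasibility * self.speed_to_impact


def rank(use_cases):
    """Return use cases ordered from highest to lowest score."""
    return sorted(use_cases, key=lambda u: u.score, reverse=True)


# Hypothetical candidates for illustration only.
candidates = [
    UseCase("churn prediction", 4, 3, 4),
    UseCase("predictive maintenance", 5, 2, 2),
    UseCase("invoice automation", 3, 5, 5),
]

for uc in rank(candidates):
    print(f"{uc.name}: {uc.score}")
```

A multiplicative score is a deliberate choice here: an additive score would let a high expected value mask a project that has no usable data.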

CRISP-DM checklist: a named framework for practical projects

CRISP-DM (Cross-Industry Standard Process for Data Mining) remains a practical framework for structuring projects. Use this quick checklist based on CRISP-DM:

- Business Understanding: Define objective, metric, and success thresholds.

- Data Understanding: Inventory data sources, quality, and gaps.

- Data Preparation: Cleaning, feature engineering, and pipeline design.

- Modeling: Baselines, experiments, and cross-validation strategy.

- Evaluation: Compare models against business metrics and safety checks.

- Deployment: Monitoring, rollback plan, and documentation.
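
The Modeling step's "baselines" and "cross-validation strategy" can be made concrete with a small sketch. The code below assumes a binary churn label and uses contiguous, unshuffled folds; both are illustrative choices, and the function names are hypothetical. It computes the cross-validated accuracy of always predicting the most common label, which is the number any real model should beat.

```python
from collections import Counter


def k_fold_indices(n, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds over range(n)."""
    if not 1 < k <= n:
        raise ValueError("k must satisfy 1 < k <= n")
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size


def majority_baseline_accuracy(labels, k=5):
    """Mean accuracy of predicting each training fold's most common label."""
    scores = []
    for train, test in k_fold_indices(len(labels), k):
        majority = Counter(labels[i] for i in train).most_common(1)[0][0]
        correct = sum(1 for i in test if labels[i] == majority)
        scores.append(correct / len(test))
    return sum(scores) / len(scores)


# Hypothetical labels: 1 = customer churned, 0 = customer stayed.
labels = [0, 0, 1, 0, 0, 0, 1, 0, 0, 1]
baseline = majority_baseline_accuracy(labels, k=5)
print(f"baseline accuracy: {baseline:.2f}")
```

For time-ordered data, folds that respect chronology are usually a better fit than the contiguous split shown here; for unordered data, shuffling before splitting is common.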

Data science implementation roadmap

Successful implementation moves through four stages: strategy, pilot, scale, and operations. A pragmatic roadmap:

- Set measurable goals and required KPIs.

- Run a 6–12 week pilot using existing data to demonstrate value.

- Architect production pipelines (data ingestion, feature store, model serving).

- Implement monitoring and model governance (performance, drift, fairness).

- Integrate models into business processes and measure ROI continuously.
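
As one way to approach the drift monitoring mentioned in the roadmap, the sketch below computes a population stability index (PSI) between a training-time feature sample and a recent production sample. PSI is a technique chosen here for illustration and is not named in this guide; the function name, the equal-width binning, and the thresholds in the comment are assumptions.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index between two numeric samples.

    Bins are equal-width over the range of `expected`; values of `actual`
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps the logarithm defined when a bin is empty
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values at training time and in production.
train = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

print(psi(train, train))    # identical samples give no drift signal
print(psi(train, shifted))  # large value: the distribution has moved
# A common rule of thumb (an assumption, not from this guide):
# below 0.1 stable, 0.1 to 0.25 worth watching, above 0.25 investigate.
```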

Data science implementation roadmap vs. quick wins

Balance quick wins (immediate cost savings or process improvements) with longer-term platform investments (data warehouses, feature stores, MLOps). Quick wins validate funding; platform investments reduce long-term operational costs.

Practical tips for building productive data science teams

- Hire cross-functional teams that include domain experts, data engineers, and applied analysts to reduce handoff delays.

- Use reproducible pipelines and version control for data and models to enable safe iteration.

- Measure business outcomes, not just model metrics; connect models to the revenue, cost, or risk levers.

Trade-offs and common mistakes

Common mistakes and trade-offs to consider:

- Overfitting to historical data: Models that look great in development can fail in production if data distribution shifts.

- Chasing complexity: Simple models with clear monitoring are often more robust and maintainable than complex, opaque solutions.

- Ignoring data quality: Poor input data multiplies downstream issues—invest early in data engineering.

- Governance gaps: Deploying models without monitoring, versioning, or rollback increases operational risk.

Core cluster questions

- What are high-impact data science use cases for small and mid-size companies?

- How to measure ROI from data science projects?

- What infrastructure is needed to scale machine learning to production?

- How to build a cross-functional data team that delivers results?

- What monitoring practices detect model drift and data quality issues?

Standards, regulation, and workforce trends

Data initiatives should align with privacy laws and industry standards. Regulatory environments (such as GDPR for data protection) require clear data governance and consent management. For workforce context, authoritative labor statistics show ongoing demand for analytics and data roles; a practical reference on employment trends is the U.S. Bureau of Labor Statistics occupational profile for data scientists.

Practical tips

- Start with a hypothesis and a small, measurable pilot: define success metrics before modeling begins.

- Automate data validation and model performance checks to detect issues early.

- Document assumptions, edge cases, and expected data inputs for every production model.

- Use feature stores and pipelines to reuse work and reduce technical debt.
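
Automated data validation, as suggested in the tips above, can start very small. The sketch below checks each incoming row against a schema of expected types and ranges. The field names borrow from the fleet-telemetry scenario but are hypothetical, as are the range limits and the `validate_rows` helper.

```python
def validate_rows(rows, schema):
    """Return a list of readable problems; an empty list means the batch passed.

    `schema` maps field name -> (allowed types, minimum, maximum).
    """
    problems = []
    for i, row in enumerate(rows):
        for field, (types, lo, hi) in schema.items():
            value = row.get(field)
            if value is None:
                problems.append(f"row {i}: {field} is missing")
            elif not isinstance(value, types):
                problems.append(f"row {i}: {field} has type {type(value).__name__}")
            elif not lo <= value <= hi:
                problems.append(f"row {i}: {field}={value} outside [{lo}, {hi}]")
    return problems


# Hypothetical schema and telemetry batch for illustration.
schema = {
    "odometer_km": ((int, float), 0, 1_000_000),
    "brake_temp_c": ((int, float), -40, 900),
}
batch = [
    {"odometer_km": 120000, "brake_temp_c": 85.5},
    {"odometer_km": -5, "brake_temp_c": 90},
    {"odometer_km": 50000},
]

problems = validate_rows(batch, schema)
for p in problems:
    print(p)
```

Running a check like this before scoring lets a pipeline reject or quarantine a bad batch instead of silently producing predictions from it.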

Measuring success and governance

Track leading indicators (data freshness, pipeline failures) and lagging indicators (revenue uplift, cost reduction). Implement governance that ties models to owners, lifecycle stages, and rollback procedures.
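
One lightweight way to tie models to owners, lifecycle stages, and rollback procedures is a registry record per model plus a check that flags gaps. The sketch below is an assumption about how such a record might look; the `Stage` values, field names, and example models are hypothetical and not drawn from any specific tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    PILOT = "pilot"
    PRODUCTION = "production"
    RETIRED = "retired"


@dataclass(frozen=True)
class ModelRecord:
    name: str
    version: str
    owner: str                              # accountable person or team
    stage: Stage
    rollback_version: Optional[str] = None  # version to restore on failure


def governance_gaps(records):
    """List production models that lack an owner or a rollback target."""
    gaps = []
    for r in records:
        if r.stage is not Stage.PRODUCTION:
            continue
        if not r.owner:
            gaps.append(f"{r.name} {r.version}: no owner")
        if r.rollback_version is None:
            gaps.append(f"{r.name} {r.version}: no rollback version")
    return gaps


# Hypothetical registry entries for illustration.
registry = [
    ModelRecord("brake-failure", "v2", "fleet-analytics", Stage.PRODUCTION, "v1"),
    ModelRecord("eta-model", "v3", "routing-team", Stage.PRODUCTION),
    ModelRecord("churn-pilot", "v1", "", Stage.PILOT),
]

for gap in governance_gaps(registry):
    print(gap)
```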

How is data science transforming industries?

Answer: By converting raw data into automated decisions, targeted experiences, and predictive signals that change operational behavior and product strategy. The transformation is practical and measurable when projects focus on clear business outcomes and maintain data quality and governance.

What is a practical framework for starting a data science project?

Answer: CRISP-DM is a practical starting framework: define business goals, understand and prepare data, build models, evaluate against business metrics, and deploy with monitoring.

How should organizations prioritize data science use cases?

Answer: Prioritize by expected business value, feasibility given existing data and skills, and time to impact. Use small pilots to validate assumptions before scaling.

What are common mistakes in deploying models to production?

Answer: Common mistakes include skipping data validation, lacking monitoring, failing to version models and datasets, and ignoring shifts in data distribution that degrade performance.

How can companies measure ROI from data science projects?

Answer: Tie model outputs to business KPIs (e.g., reduced churn percentage, cost savings from automation, increased revenue from personalization) and measure before/after performance with proper A/B testing or controlled experiments.
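
For the A/B testing step, a two-proportion z-test is one simple way to check whether a change in a rate-based KPI, such as churn, is larger than chance would explain. The sketch below uses only the standard library; the function name and the customer counts are hypothetical, and the test assumes independent samples that are large enough for the normal approximation.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Compare two rates; return (difference b - a, z statistic, two-sided p-value)."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value


# Hypothetical experiment: churned customers out of 1,000 per group.
# Group A is the control; group B received model-driven retention offers.
diff, z, p_value = two_proportion_z_test(120, 1000, 90, 1000)
print(f"churn change: {diff:+.1%}, z = {z:.2f}, p = {p_value:.3f}")
```

A statistically detectable difference still has to be converted into money: multiply the retained customers by their expected value and subtract the cost of the intervention to estimate ROI.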